2022 could go down in history as the year AI art went mainstream.

An explosion of quality tools from multiple sources, built on different AI models, is making AI art accessible to anyone with a smartphone and an Internet connection. The tools use an AI model to convert text input, known as a prompt, into an image.

The prompt is key: Adding or removing a single word can lead to remarkably different results. “‘Prompt engineering’ is quickly becoming a valuable skill, and models that are trained on the same data and with the right prompt should produce the same results,” says Pranav Vaidhyanathan, chief technology officer of the AI-powered social media marketplace GenerAI. There’s even a growing market for prompts that create specific results.

Here are five tools to help you get started. To compare them, I gave them all the same prompt: “A person and a robot standing beside a large oak tree on a hill with clouds in the sky.”
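To get a feel for how much a single word matters, one option is to generate variants of the test prompt with one word removed and paste each into the tool of your choice. The sketch below is only an illustration: the function name `drop_one_word` is hypothetical, and it calls no image-generation service.

```python
# Test prompt quoted from the article.
PROMPT = (
    "A person and a robot standing beside a large oak tree "
    "on a hill with clouds in the sky."
)


def drop_one_word(prompt: str) -> list[str]:
    """Return every variant of the prompt with exactly one word removed."""
    words = prompt.split()
    return [" ".join(words[:i] + words[i + 1:]) for i in range(len(words))]


variants = drop_one_word(PROMPT)
for variant in variants:
    print(variant)
```

Each printed line is a candidate prompt to try by hand; comparing the resulting images side by side shows which words the model leans on most.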

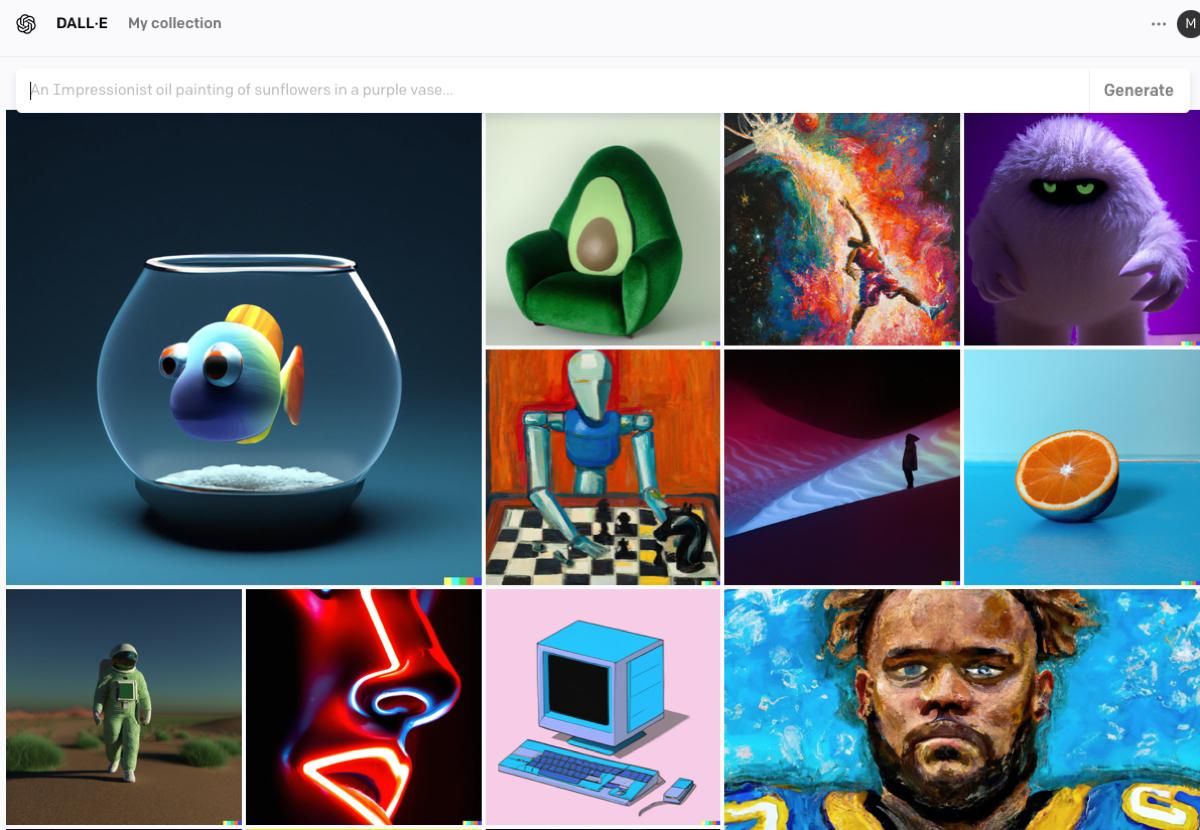

DALL-E 2

OpenAI, founded in 2015, made headlines with the release of GPT-3, a natural-language model, in 2020. The DALL-E image model followed in January 2021 and has since evolved into DALL-E 2. OpenAI’s model offers excellent images across a wide variety of styles. Specific prompts can lead to specific results, or you can offer a vague prompt and enjoy several radically different results.

DALL-E 2, now open to everyone through OpenAI’s website, is the best tool for those curious about the hype. It’s quick, beating the others I’ve tried by a noticeable margin, and the website is easy to navigate. It provides four results at once, typically in very different styles, which reduces how often you need to rerun a prompt. DALL-E 2’s results are good, too. It’s the only tool I tested that depicted both the person and the robot.

This is a commercial tool. Signing up provides you with 50 free credits, with an additional 15 free credits offered monthly. Additional credits can be purchased at a rate of 115 credits for US $15.
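For a rough sense of what paid use costs, the arithmetic can be sketched in a few lines. The prices are the ones quoted above; the function names are hypothetical, and the assumption that credits are sold only in whole packs of 115 is mine, not OpenAI’s stated policy.

```python
import math

# Figures quoted in the article.
PACK_CREDITS = 115
PACK_PRICE_USD = 15.00


def cost_per_credit() -> float:
    """Price of a single paid credit in US dollars."""
    return PACK_PRICE_USD / PACK_CREDITS


def packs_needed(credits: int) -> int:
    """Whole packs required to cover the requested credits (assumed pack-only sales)."""
    return math.ceil(credits / PACK_CREDITS)


def purchase_cost(credits: int) -> float:
    """Total spend in US dollars to cover the requested number of paid credits."""
    return packs_needed(credits) * PACK_PRICE_USD


print(f"Cost per paid credit: ${cost_per_credit():.2f}")
print(f"Cost to cover 300 credits: ${purchase_cost(300):.2f}")
```

At the quoted rate, a paid credit works out to roughly 13 cents.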

Stable Diffusion / Dream Studio

Stable Diffusion, from Stability AI, is popular for the same reasons as DALL-E 2: it’s quick, effective, and can produce usable images from a wide variety of prompts.

Anyone can use Stable Diffusion free of charge through Stable Diffusion’s demo page. It’s not as quick as DALL-E 2 but usually delivers results in 30 seconds or less. It also provides four variations at once, just like DALL-E 2.

Stable Diffusion’s model is open source, so serious users can thoroughly tweak how it works. This has supercharged its popularity as enthusiasts flock toward the model. “We are definitely seeing a trend of artists and others being attracted to open-source models such as Stable Diffusion over closed-source and controlled models such as OpenAI’s DALL-E 2,” says Vaidhyanathan.

Stability AI has a commercial tool, Dream Studio, built on Stable Diffusion. It provides a trial, after which it sells credits to generate new images. In exchange, users can access sliders to tweak the model’s results.

Midjourney

Midjourney earned a reputation for quality, and stirred controversy, after a contestant used it to win a digital art prize at the Colorado State Fair—without disclosing the image’s method of creation. The tool is great at vivid, ethereal, surreal images, and the user base has embraced its style.

The tool is accessible only through Discord, a popular instant-messaging platform. Prompts are entered directly into chat. Chat is public, so everyone in a channel can view the prompt you’ve entered and the results. It’s sure to confuse users unfamiliar with how Discord works, which is likely considered a feature, not a bug.

Midjourney is a commercial product and monetized like other commercial AI art-generation tools. Everyone starts with about 25 credits but must pay a monthly membership for more. Payment is handled through a web app that can also be used to view the images generated in response to your prompts.

Craiyon

Although it was originally called DALL-E Mini, Craiyon has no direct link to OpenAI’s model, and its creators offer the tool free of charge. Results can take up to 2 minutes to generate and are low in resolution, but nine results appear at once.

Craiyon differs from the other tools in its use of unfiltered data, and its creators make no specific effort to refine, train, or correct the results. Results are usually lackluster compared with those of other tools, and it has trouble dealing with fine details. Human faces, for example, look downright disturbing.

There is a novelty to the tool. Serving results raw exposes the general strengths and weaknesses of AI image generation and the difficulty of creating usable results. It also highlights ethical issues, as Craiyon doesn’t filter prompts. Entering an offensive prompt demonstrates how disturbing AI image generation can be if used with malicious intent.

VQGAN+CLIP

AI image generators’ recent popularity has inspired hundreds of tools that pair advanced AI models with a bare-bones interface. VQGAN+CLIP, which runs entirely in a Google Colaboratory notebook, is one such tool.

It earns a mention because it’s (somewhat) easy to use yet offers a peek under the hood. You’ll get to watch the tool iterate on new variations in real time. The notebook runs on Google’s hosted hardware by default, though Colaboratory can also connect to a runtime on your local machine. Each prompt begins as a blob but slowly morphs into a usable image.

Well, sometimes, at least. The tool’s results often aren’t great. It’s slow, delivers only one variation at a time, and consumes significant video memory. On the plus side, however, it’s entirely free and contains no ads, so it’s a fine choice if you have some time on your hands.

Matthew S. Smith is a freelance consumer-tech journalist. An avid gamer, he is a former staff editor at Digital Trends and is particularly fond of wearables, e-bikes, all things smartphone, and CES, which he has attended every year since 2009.