Why Mobile Voice Quality Still Stinks—and How to Fix It

Technologies such as VoLTE and HD Voice could improve sound quality, but cellular carriers aren’t deploying them fast enough

After several rings, John Beerends picks up my call on his cellphone. Beerends, a senior researcher at the Netherlands Organization for Applied Scientific Research, in Delft, is one of the world’s top experts on sound perception, and I’ve called from Boston to ask his opinion on the quality of audio on mobile phones. But the connection keeps cutting out, and what I can hear is almost unintelligible. I must sound just as bad, because he asks me to dial him back on his landline. This time, his voice is much clearer. And he immediately confirms what now seems glaringly obvious: Despite their ubiquity and decades-long existence, cellphones still make for pretty poor phones.

How can that be? After all, today’s smartphones are incredible feats of engineering. Packing the processing power of a mid-1980s supercomputer into a sleek, pocket-size slab, they can take photographs, play music and videos, and stream tens of megabits of data to the palm of your hand every second. But try calling your boss in rush-hour traffic to say you’re running late, and there’s a good chance your message won’t get through. “Mobile companies have rather lost the focus on a smartphone also being a telephone,” says Jeremy Green, now a tech-industry analyst at Machina Research, in Reading, England—on a cell connection that keeps dropping words.

Laboratory tests by Broadcom confirm that it’s not just my aging ears: Even in the best conditions, including a quiet environment and a strong wireless signal, users consistently rate voice quality lower on a cellphone than on a landline. Weaken the cellular link or add background noise, such as from wind or street traffic, and callers’ opinions of the experience drop dramatically.

For example, engineers at Nokia found that when they compressed voice data to 5.15 kilobits per second, which cellphones do automatically when a tower connection is weak, user ratings fell from “good” to “fair.” When the engineers decoded and then recompressed the data, which happens when a call travels through the backbone network to another cellphone, the ratings dipped lower still.

So why do we mobile subscribers—all 4.5 billion of us—put up with such crummy voice service? In the early days of cellphones, their fickleness wasn’t such a big deal. Back then, mobility was a luxury, a handy supplement to a dependable wired line. But now, more and more people are cutting the cord—or never installing one. Today in the United States, for instance, 40 percent of homes rely exclusively on mobile phones for making and receiving calls. In Africa, cellular subscriptions outnumber landlines 52 to 1, according to the International Telecommunication Union.

At the same time, U.S. wire-line carriers, which by law must provide service to everyone at reasonable prices, are pushing the government to let them phase out the aging public switched telephone network. Rather than replace decaying or damaged copper circuits, they plan to deliver Internet-based service, known as Voice over IP (VoIP), using cable or fiber lines. In remote areas where wired broadband is too costly, however, customers could be forced to rely on wireless links. On Fire Island, in New York, for example, Verizon refused to rebuild the copper infrastructure destroyed by Hurricane Sandy in 2012, offering a fixed wireless service called Voice Link in its stead. Although Verizon eventually promised to install a fiber network on the island after customers protested, other communities may not be so lucky in the future.

Upgrading cellular networks to provide high-quality voice service is now more important than ever. So what’s the holdup?

One explanation is that there’s no silver-bullet fix. Most cellular voice traffic today passes through a patchwork of diverse systems, each exchange point an opportunity for degradation and delays. But lest you despair, here’s some good news: Solutions to many of these impediments are in the works or already available, including a standard known as HD voice or wideband audio, and an add-on to today’s 4G LTE systems called Voice over LTE. Although many operators, particularly in the United States, have been slow to roll them out, these technologies could very well prove to be game changers.

The first obstacle to a good-quality voice connection on today’s mobile phones is their design. Handsets have evolved considerably since Motorola debuted the original “brick” phones, made famous by Michael Douglas’s suave character Gordon Gekko in the 1987 movie Wall Street. With its ear-size speaker and microphone pointed directly at his mouth, the monstrosity Gekko used was clearly constructed with voice calls in mind. Modern phone makers have taken a new tack. The smartphone’s form “is driven by industrial design and not voice quality,” says Chris Kyriakakis, founder and chief technology officer of Audyssey, a Los Angeles–based acoustic design company.

For example, to create an elegant, palmable chassis for watching videos and thumbing through music playlists, smartphone designers shrink and flatten speakers and sometimes even cover them in plastic, Kyriakakis explains. By themselves, small, compressed speakers damp down low frequencies, causing Darth Vader to sound like Tiny Tim. So smartphones use software to lessen such distortions, making voices sound more realistic.

Your smartphone’s puny microphone is similarly problematic. And the farther it is from your mouth, the more unwanted noise it picks up. Many high-end smartphones address this problem by using multiple microphones, typically three. With one microphone situated as close as possible to the user’s lips and the additional ones set farther away—at the opposite end, for example—a smartphone can compare the different incoming signals to better filter out background sounds.

But noise-cancellation algorithms aren’t a sure-fire fix, because they can take a few seconds to recognize a noise. So while they’re quite good at removing consistent sounds such as the thrum of a leaf blower or the hiss inside a passenger jet, they do a poor job of eliminating sudden or irregular disruptions, like a baby crying. Voice echoes are especially difficult to weed out because the algorithms must also preserve speech, says Jari Sjoberg, an audio expert at Microsoft and Nokia. Too much noise suppression removes much of the natural acoustic variation in human speech, making it sound robotic.
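The lag described above can be illustrated with a toy model. This is a minimal sketch, not how any particular handset works: it assumes the suppressor keeps an exponentially smoothed estimate of the background level and subtracts it from each incoming frame. The 20-millisecond frame length, the smoothing factor `alpha`, and the function name are all hypothetical choices made for illustration.

```python
FRAME_MS = 20  # assumed frame length; real devices may differ


def residual_after_suppression(frame_levels, alpha=0.05):
    """Return what is left of each frame after subtracting the noise floor.

    The floor is an exponentially smoothed average of the levels seen so far,
    so it adapts slowly: steady noise is learned, sudden noise is not.
    """
    floor = 0.0
    residuals = []
    for level in frame_levels:
        # Subtract the floor learned from earlier frames only.
        residuals.append(max(level - floor, 0.0))
        # Nudge the estimate a small step toward the current level.
        floor += alpha * (level - floor)
    return residuals


# Three seconds of steady hum (150 frames), then one loud frame, then hum again.
hum_with_cry = [1.0] * 150 + [10.0] + [1.0] * 10
residuals = residual_after_suppression(hum_with_cry)

print(f"first hum frame:       {residuals[0]:.3f}")
print(f"hum after {150 * FRAME_MS / 1000:.0f} seconds:   {residuals[149]:.3f}")
print(f"sudden loud frame:     {residuals[150]:.3f}")
```

In this toy model the hum is almost entirely removed once the estimate has settled, while the sudden loud frame passes through nearly untouched because the slow estimate has not had time to catch up.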

And you can pretty much forget about getting a clear voice signal through a Bluetooth ear clip or the speakerphone in your car. These setups put the microphone closer to the speaker than to your mouth, causing callers’ voices or reflections within the car to echo back at them.

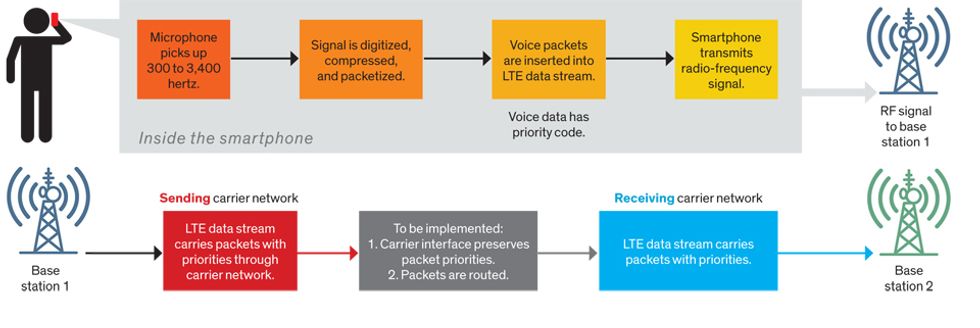

Yet even if your cellphone distills crisp, noise-free speech, there’s no guarantee it will arrive at the listener intact. The next threat comes when the phone transmits the call to a base station. Modeled after standard wire-line phones, most mobile phones today digitize audio frequencies from 300 to 3,400 hertz. But unlike landlines, which provide each caller with a dedicated, full-capacity channel, cellphones must share a limited amount of wireless spectrum. So they compress the voice data to let more users connect.

Standard compression rates vary from 12.2 kb/s to 4.75 kb/s, depending on the volume of voice traffic and the strength of the wireless signal. Calls compressed to rates as low as 7.95 kb/s can still sound almost as good as a landline connection. But below that, “you start to hear compression artifacts,” including missing syllables and distortions such as ringing or warbling, says Jerry Gibson, a wireless-engineering expert at the University of California, Santa Barbara.

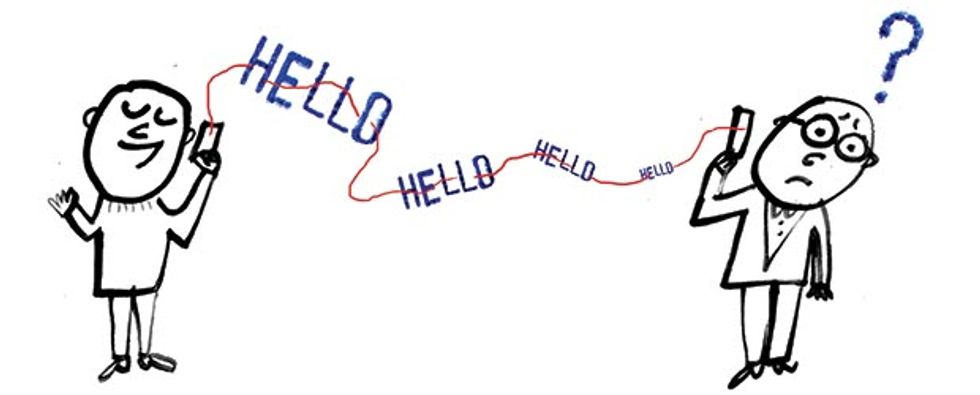

If you’re making a local call to a mobile user on your own carrier network, count yourself lucky. The compressed data will likely travel to the receiving cellphone without further manipulation, and so voice quality may not be half bad. But say you’re talking to someone across the country or on a different carrier. In those cases, your local network will typically direct the call into the backbone telephone network, which was designed to carry landline traffic at 64 kb/s. So transcoding equipment at the exchange point must convert the mobile voice data to the higher wire-line rate.
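To put the bit rates mentioned so far side by side, here is a small arithmetic sketch. The four mobile rates are the ones cited in this article; the 20-millisecond frame length is an assumption (it is the frame size commonly used by mobile speech codecs), and the function names are made up for illustration.

```python
# Mobile voice rates cited in the article, in kilobits per second.
MOBILE_RATES_KBPS = [12.2, 7.95, 5.15, 4.75]
BACKBONE_KBPS = 64.0  # landline backbone rate cited in the article
FRAME_MS = 20         # assumed speech-frame duration


def bits_per_frame(rate_kbps, frame_ms=FRAME_MS):
    """Bits available to describe one frame of speech (kb/s x ms = bits)."""
    return round(rate_kbps * frame_ms)


def ratio_vs_backbone(rate_kbps):
    """How many times smaller the mobile stream is than the backbone stream."""
    return BACKBONE_KBPS / rate_kbps


print(f"backbone: {bits_per_frame(BACKBONE_KBPS)} bits per frame")
for rate in MOBILE_RATES_KBPS:
    print(f"{rate:>5} kb/s: {bits_per_frame(rate):>3} bits per frame, "
          f"{ratio_vs_backbone(rate):.1f}x smaller than the backbone rate")
```

Under these assumptions, the weakest mobile rate leaves fewer than 100 bits to describe each 20 milliseconds of speech, versus 1,280 bits on the backbone, which gives a sense of how much information the compressor has to discard.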

A standard landline phone can decode that signal without losing more information. But if your call is sent to another cellphone, voice quality will take another nosedive when the base station serving the phone recompresses the data to fit into a cramped wireless channel.

Other parts of the telephone network may require additional conversions, which can further degrade quality. For instance, international carriers sometimes compress voice data to stuff more calls through subsea cables rather than pay for additional capacity. The extra compression cycles “can explain the very poor international voice call quality that we sometimes experience,” says Jan Derksen, head of technical marketing at Ericsson, in Stockholm.

A couple of technologies already exist that can circumvent these choke points—or at least lessen the damage. Many new smartphones have one or both built in. But for you to use these enhancements to their full potential, carriers will have to make major network upgrades, which will take time and money.

One solution is HD voice. This transmission standard more than doubles the range of audio frequencies that represent speech, letting phone systems collect and relay signals from 50 to 7,000 Hz. At their healthiest, normal human ears can perceive frequencies as low as 20 Hz and as high as 20,000 Hz. But early telephone networks had limited bandwidth, and engineers decided that frequencies between 300 and 3,400 Hz would be adequate for conveying intelligible speech.
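The “more than doubles” claim is easy to check with the numbers above. The sketch below also computes the minimum sampling rate each band would need under the Nyquist criterion (twice the highest frequency), which is textbook signal processing rather than something stated in the article. The names are illustrative only.

```python
# Frequency ranges quoted in the article, in hertz.
NARROWBAND_HZ = (300, 3400)
WIDEBAND_HZ = (50, 7000)


def bandwidth_hz(band):
    """Width of the band: highest frequency minus lowest."""
    low, high = band
    return high - low


def nyquist_rate_hz(band):
    """Minimum sampling rate needed to capture the band's top frequency."""
    return 2 * band[1]


ratio = bandwidth_hz(WIDEBAND_HZ) / bandwidth_hz(NARROWBAND_HZ)

print(f"narrowband: {bandwidth_hz(NARROWBAND_HZ)} Hz wide, "
      f"needs at least {nyquist_rate_hz(NARROWBAND_HZ)} samples/s")
print(f"wideband:   {bandwidth_hz(WIDEBAND_HZ)} Hz wide, "
      f"needs at least {nyquist_rate_hz(WIDEBAND_HZ)} samples/s")
print(f"wideband covers {ratio:.2f}x the narrowband range")
```

The minimum rates sit just under the 8,000 and 16,000 samples per second that narrowband and wideband telephony conventionally use.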

By the 1980s, however, acoustic researchers had demonstrated that people need to hear a wider range of frequencies to fully understand speech. Frequencies above 3,400 Hz, for example, help listeners distinguish between some consonants. “If I said ‘fox’ and ‘socks’ in isolation, you couldn’t tell them apart over standard telephone bandwidth,” says Mark Clements, a signal-processing expert at Georgia Tech. Likewise, names like Jeff and Jess sound the same on the phone. Believe me, I speak from experience.

After the International Telecommunication Union standardized HD voice more than a quarter century ago, radio broadcasters were among the first adopters. Today, they still use the technology to transmit remote interviews from a sports stadium or another studio over high-speed digital telephone lines. In my experience, the improvement in sound quality is striking. And others seem to agree. In laboratory tests at Nokia, for instance, users rated HD voice calls nearly a full point higher than standard voice calls on a five-point scale. “It really is better,” says Machina Research’s Green.

In September 2009, in an unlikely test ground sandwiched between Romania and Ukraine—the country of Moldova—the wireless carrier Orange became the first company to launch HD voice on a cellular network. The technology has since spread around the world. According to the latest count, 329 smartphone models support the standard, and 109 mobile operators offer service in 73 countries. “The widest deployments are in Asia and Europe, where it’s really taken off,” says Alan Hadden, president of the Global mobile Suppliers Association (GSA), which tracks these numbers.

If you’re wondering why you haven’t noticed these changes, that’s because HD voice equipment still typically defaults to standard “narrowband” service. Even if your phone is HD compatible, for instance, you won’t hear an improvement unless the person you’re talking with is also on an HD phone and all of the networking equipment in between supports the technology. But that situation almost never happens because the circuit-switched backbone still uses standard voice technology. Until it’s upgraded, you won’t be able to roam among different HD networks or place an HD call to someone on a different carrier.

A promising fix for this problem is the second technology capable of boosting cellular voice quality: Voice over LTE (VoLTE). Today, the majority of mobile calls, including HD traffic, are carried on a 2G or 3G network despite the widespread deployment of LTE technology. This newest cellular standard is the first generation to ferry data via Internet-style packet switching.

VoLTE lets mobile carriers deliver voice traffic just like regular data—a characteristic it shares with other VoIP services, such as Microsoft’s Skype and Verizon’s FiOS. By compressing a voice call into a series of standardized packets that can travel between carriers and across national borders over an IP backbone, VoLTE eliminates the need to convert the data into different formats for different parts of the system. So no information is lost. “The same bits that leave one phone will enter the other phone without any changes,” Ericsson’s Derksen explains. And because LTE is designed to deliver any data packet regardless of its content, VoLTE networks can support HD voice right out of the box.

But most LTE carriers don’t yet offer VoLTE. “LTE was originally designed without a native voice service,” says Peter Carson, senior director of technical marketing at Qualcomm. That’s because traditional packet switching doesn’t ensure good voice quality. By treating all packets on a first-come, first-served basis, LTE carriers can’t guarantee that voice packets will arrive at their destination in a timely manner. Packets can be lost or delayed, for example, when a network is busy, creating unintentional silences that can garble speech or cause callers to talk over one another. This unreliability helps explain why VoIP calls can sometimes sound great one minute and poor the next.
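Why late packets turn into silences can be sketched in a few lines. This is a simplified model, not a description of any real VoIP client: it assumes the receiver plays each speech frame a fixed time after it was sent, so any frame that is lost or arrives after that deadline leaves a gap. The delay values and the function name are hypothetical.

```python
def late_or_lost_frames(delays_ms, playout_delay_ms):
    """Return the indices of frames that are missing when their turn comes.

    delays_ms[i] is the network delay of frame i in milliseconds,
    or None if the packet never arrived.
    """
    gaps = []
    for index, delay in enumerate(delays_ms):
        if delay is None or delay > playout_delay_ms:
            gaps.append(index)
    return gaps


# Hypothetical delays for six consecutive frames on a busy network.
delays = [30, 35, 120, None, 40, 95]

print(late_or_lost_frames(delays, playout_delay_ms=100))  # patient receiver
print(late_or_lost_frames(delays, playout_delay_ms=60))   # impatient receiver
```

The design tension is visible even in this toy: waiting longer before playback rescues more late frames, but every extra millisecond of waiting adds conversational lag, which is what makes callers talk over one another.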

In general, the quality of VoIP services has gotten better in recent years as broadband speeds have increased. And some users have configured their private home or business networks to prioritize voice traffic over other data. But when VoIP packets enter the Internet or a cellular network, they’re handled as “best-effort traffic” along with other data, Carson says. So VoIP providers can’t promise that sound quality will always be adequate.

Mobile operators want to control quality so that their customers will keep paying premium prices for cellular voice service. VoLTE lets LTE carriers manage voice traffic using a software platform called the IP Multimedia Subsystem, or IMS. This control layer essentially acts as a traffic cop, opening fast lanes for voice data and other time-sensitive streams, such as video calls and online gaming.

The IMS prioritizes some types of traffic over others by assigning each data connection a single-digit code, called the QoS (quality-of-service) class identifier, or QCI. This number, which is stored in a routing table, describes the transmission requirements for the link, including the maximum packet latency, acceptable number of losses, and whether the network will guarantee a given bit rate. Voice calls, for example, get a QCI of 1, which ensures that 99 percent of packets will arrive at their destination within 100 milliseconds, even during peak use times. By comparison, typical Internet traffic, such as e-mail and browsing data, receives the lowest-priority QCIs: 8 and 9. Each router along the way can now usher packets into different transmission queues depending on their QCIs, preventing VoLTE packets, for example, from getting stuck in a Netflix traffic jam.
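The queueing idea can be sketched with a priority queue. This is a toy model under loud assumptions: it serves packets strictly in order of QCI number, lowest first, whereas real networks map each QCI to a separate priority level and use more elaborate schedulers. The class name, method names, and packet labels are hypothetical; only the QCI values 1, 8, and 9 come from the article.

```python
import heapq
from itertools import count


class QciScheduler:
    """Toy router queue: lower QCI number is sent first (a simplification)."""

    def __init__(self):
        self._heap = []
        self._arrival = count()  # tie-breaker keeps same-QCI packets in order

    def enqueue(self, qci, payload):
        heapq.heappush(self._heap, (qci, next(self._arrival), payload))

    def dequeue(self):
        qci, _, payload = heapq.heappop(self._heap)
        return qci, payload

    def __len__(self):
        return len(self._heap)


def drain(scheduler):
    """Empty the queue and return packets in the order they would be sent."""
    sent = []
    while len(scheduler):
        sent.append(scheduler.dequeue())
    return sent


scheduler = QciScheduler()
scheduler.enqueue(9, "video-chunk-1")   # streaming data arrives first
scheduler.enqueue(9, "video-chunk-2")
scheduler.enqueue(1, "voice-frame-1")   # voice arrives behind it
scheduler.enqueue(8, "email")
scheduler.enqueue(1, "voice-frame-2")

order = drain(scheduler)
for qci, payload in order:
    print(qci, payload)
```

Even though the voice frames arrive behind the video chunks, they leave the queue first, which is the “fast lane” behavior the paragraph above describes.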

For years, VoLTE deployments lagged far behind HD voice over 2G and 3G networks. But now, carriers are finally committing to the upgrade, says GSA’s Hadden. More than 100 smartphone models include the technology, and although the service is currently available from only 10 carriers, in Hong Kong, Japan, Singapore, South Korea, and the United States, others are quickly getting on board. As of July, 56 more operators had announced plans to test or commercialize VoLTE in 35 countries around the globe, including Algeria, Germany, Kazakhstan, and Russia.

Meanwhile, carriers are expanding their IP infrastructures, including backbone networks and local broadband links, which will let VoLTE packets flow seamlessly between mobile handsets and other IP phones, including computers and landlines. Voice quality should continue to improve as more networks support priority protocols and callers move onto the same packet-based system.

Eventually, if the carriers get their way, the old circuit-switched networks will go dark. But that transition will take time as companies invest in new equipment and regulators work to ensure that some customers aren’t left with shoddy voice service—or none at all.

There was a time when telephone operators took pride in their voice networks. When Sprint built the first nationwide fiber footprint in the late 1980s, for example, it ran commercials boasting that the system was “so incredibly quiet that you could actually hear a pin drop.”

But since the arrival of the smartphone era, carriers have been strangely—and frustratingly—mum about sound quality. Could the tide be turning at last? Even the second-, third-, and fourth-largest U.S. carriers—AT&T, Sprint, and T-Mobile—which long shied away from discussing voice quality with the public, announced plans for VoLTE rollouts this year. Verizon, the top U.S. carrier, which plans to start deploying the technology before year’s end, calls it “the next evolution in wireless calling.”

If that’s really true, it’s reason for me and other voice customers to be optimistic. But I’ve experienced too many lousy connections to take these promises at face value: I’ll believe it when I hear it.

This article originally appeared in print as “All Smart, No Phone.”

About the Author

An IEEE life member, Jeff Hecht writes for Laser Focus World and New Scientist and is the author of 11 books. As a journalist, he’s become painfully aware of the deterioration in telephone voice quality, as interviewees increasingly take his calls on cellphones. “If everyone’s on a cellphone, how are we going to understand each other?” he wonders.