Household Radar Can See Through Walls and Knows How You’re Feeling

Modern wireless tech isn’t just for communications. It can also sense a person’s breathing and heart rate, even gauge emotions

When I was a boy, I secretly hoped I would discover latent within me some amazing superpower—say, X-ray vision or the ability to read people’s minds. Lots of kids have such dreams. But even my fertile young brain couldn’t imagine that one day I would help transform such superpowers into reality. Nor could I conceive of the possibility that I would demonstrate these abilities to the president of the United States. And yet two decades later, that’s exactly what happened.

There was, of course, no magic or science fiction involved, just new algorithms and clever engineering, using wireless technology that senses the reflections of radio waves emanating from a nearby transmitter. The approach my MIT colleagues and I are pursuing relies on inexpensive equipment that is easy to install—no more difficult than setting up a Wi-Fi router.

These results are now being applied in the real world, helping medical researchers and clinicians to better gauge the progression of diseases that affect gait and mobility. And devices based on this technology will soon become commercially available. In the future, they could be used for monitoring elderly people at home, sending an alert if someone has fallen. Unlike users of today’s medical alert systems, the people being monitored won’t have to wear a radio-equipped bracelet or pendant. The technology could also be used to monitor the breathing and heartbeat of newborns, without having to put sensors in contact with the infants’ fragile skin.

You’re probably wondering how this radio-based sensing technology works. If so, read on, and I will explain by telling the story of how we managed to push our system to increasing levels of sensitivity and sophistication.

It all started in 2012, shortly after I became a graduate student at MIT. My faculty advisor, Dina Katabi, and I were working on a way to allow Wi-Fi networks to carry data faster. We mounted a Wi-Fi device on a mobile robot and let the robot navigate itself to the spot in the room where data throughput was highest. Every once in a while, our throughput numbers would mysteriously plummet. Eventually we realized that when someone was walking in the adjacent hallway, the person’s presence disrupted the wireless signals in our room.

We should have seen this coming. Wireless communication systems are notoriously vulnerable to electromagnetic noise and interference, which engineers work hard to combat. But seeing these effects firsthand got us thinking along completely different lines about our research. We wondered whether this “noise,” caused by passersby, could serve as a new source of information about the nearby environment. Could we take a Wi-Fi device, point it at a wall, and see on a computer screen how someone behind the wall was moving?

That should be possible, we figured. After all, walls don’t block wireless signals. You can get a Wi-Fi connection even when the router is in another room. And if there’s a person on the other side of a wall, the wireless signal you send out on this side will reflect off his or her body. Naturally, after the signal traverses the wall, gets reflected back, and crosses through the wall again, it will be very attenuated. But if we could somehow register these minute reflections, we would, in some sense, be able to see through the wall.

Using radio waves to detect what’s on the other side of a wall has been done before, but with sophisticated radar equipment and expensive antenna arrays. We wanted to use equipment not much different from the kind you’d use to create a Wi-Fi local area network in your home.

Elliptical Reasoning About Location

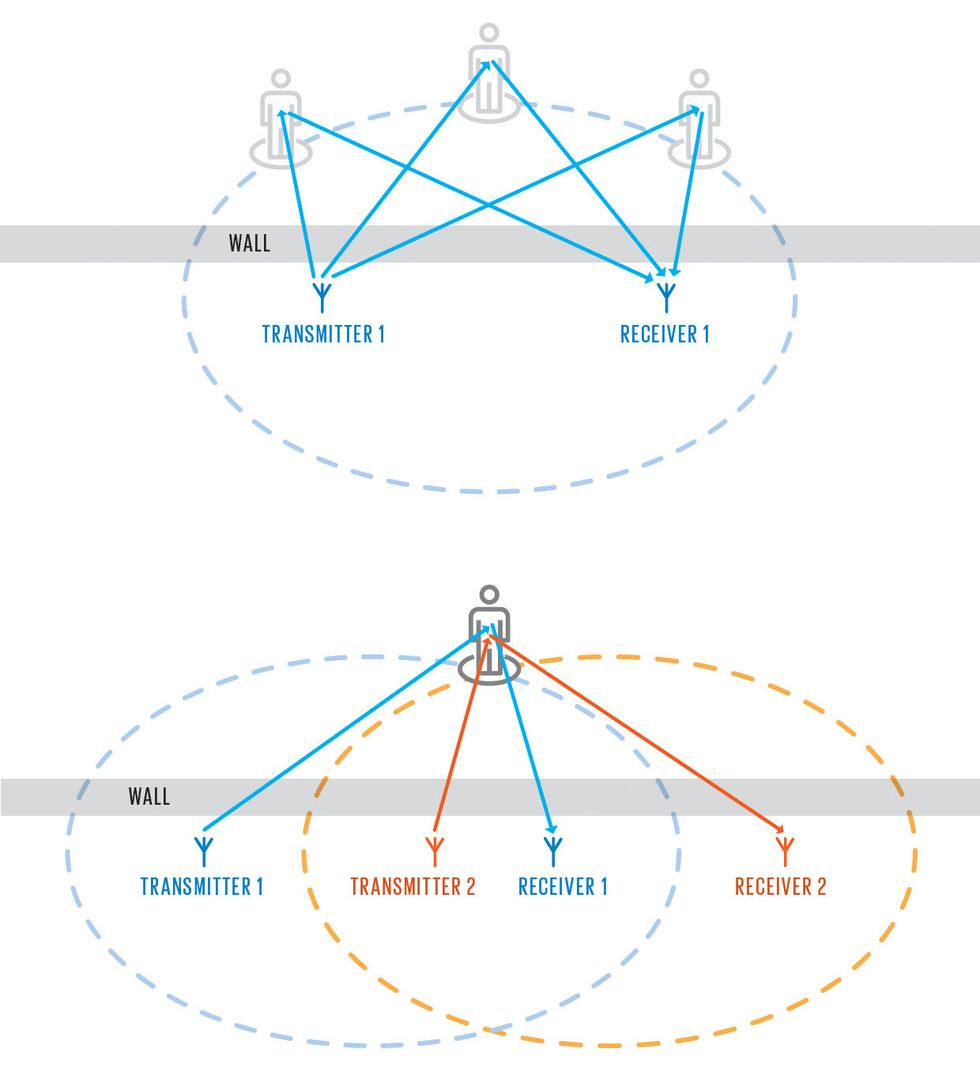

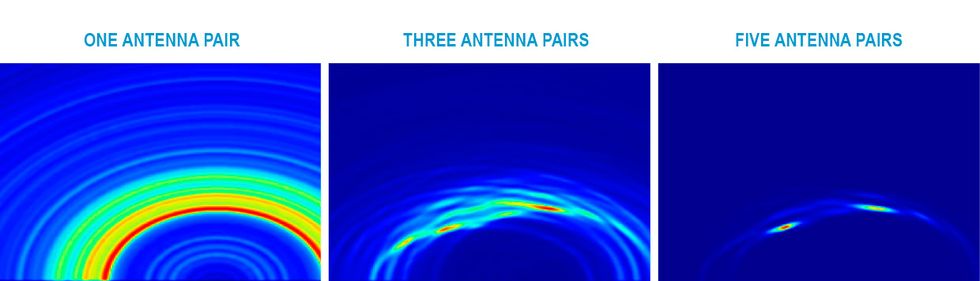

The time needed for a radio signal to travel from a transmitting antenna to a reflective object (here, a person) and back to a receiving antenna can be used to constrain the position of that object. Knowing the travel time between one such pair of antennas, you can determine that the reflector is located somewhere on an ellipse that has the two antennas at its foci (an ellipse being the set of points for which the distances to the two foci sum to a constant value) [top]. With two such pairs of antennas, you can better pin down the location of the reflector, which must lie at the intersection of two ellipses [middle]. With even more antenna pairs, it’s possible to work out where two or more reflecting objects are located. The hot colors in the lower diagrams [bottom] show the location of two people in a room, as seen from above.

As we started experimenting with this idea, we discovered a host of practical complications. The first came from the wall itself, whose reflections were 10,000 to 100,000 times as strong as any reflection coming from beyond it. Another challenge was that wireless signals bounce off not only the wall and the human body but also other objects, including tables, chairs, ceilings, and floors. So we had to come up with a way to cancel out all these other reflections and keep only those from someone behind the wall.

To do this, we initially used two transmitters and one receiver. First, we sent off a signal from one transmitter and measured the reflections that came back to our receiver. The received signal was dominated by a large reflection coming off the wall.

We did the same with the second transmitter. The signal received from it was also dominated by the strong reflection from the wall, but the magnitude of the reflection and the delay between transmitted and reflected signals were slightly different.

Then we adjusted the signal given off by the first transmitter so that its reflections would cancel the reflections created by the second transmitter. Once we did that, the receiver didn’t register the giant reflection from the wall. Only the reflections that didn’t get canceled, such as those from someone moving behind the wall, would register. We could then boost the signal sent by both transmitters without overloading the receiver with the wall’s reflections. Indeed, we now had a system that canceled reflections from all stationary objects.
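
The cancellation step above can be illustrated with a few lines of Python. This is only a minimal sketch, not the system's actual signal processing: it assumes each transmitter-to-receiver path can be summarized by a single complex number (a hypothetical flat channel), and every numeric value and function name below is invented for illustration.

```python
import cmath

def nulling_weight(h1: complex, h2: complex) -> complex:
    """Scale factor for transmitter 1 so that its static reflection
    arrives equal in size and opposite in sign to transmitter 2's."""
    return -h2 / h1

def received(h1: complex, h2: complex, w: complex, x: complex = 1 + 0j) -> complex:
    """Signal at the receiver when TX1 sends w*x and TX2 sends x."""
    return h1 * w * x + h2 * x

# Hypothetical static channels (wall, furniture), measured one transmitter at a time.
h1_static = cmath.rect(0.80, 0.30)
h2_static = cmath.rect(0.75, 0.55)
w = nulling_weight(h1_static, h2_static)

# With nothing moving, the two contributions cancel at the receiver.
residual_static = abs(received(h1_static, h2_static, w))

# A person moving behind the wall adds a tiny extra reflection to each channel
# (assumed values). These additions are not covered by the weight, so they survive.
dh1 = cmath.rect(1e-4, 1.0)
dh2 = cmath.rect(1e-4, 2.0)
residual_moving = abs(received(h1_static + dh1, h2_static + dh2, w))

print(f"static residual: {residual_static:.2e}")
print(f"moving residual: {residual_moving:.2e}")
```

Because the weight is computed from the static scene alone, anything that changes afterward shows up as a nonzero residual, which is why the transmit power can then be raised without swamping the receiver.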

Next, we concentrated on detecting a person as he or she moved around the adjacent room. For that, we used a technique called inverse synthetic aperture radar, which is sometimes used for maritime surveillance and radar astronomy. With our simple equipment, that strategy was reasonably good at detecting whether there was a person moving behind a wall and even gauging the direction in which the person was moving. But it didn’t show the person’s location.

As the research evolved, and our team grew to include fellow graduate student Zachary Kabelac and Professor Robert Miller, we modified our system so that it included a larger number of antennas and operated less like a Wi-Fi router and more like conventional radar.

People often think that radar equipment emits a brief radio pulse and then measures the delay in the reflections that come back. It can, but that’s technically hard to do. We used an easier method, called frequency-modulated continuous-wave radar, which measures distance by comparing the frequency of the transmitted and reflected waves. Our system operated between about 5 and 7 gigahertz, transmitted signals that were just 0.1 percent the strength of Wi-Fi, and could determine the distance to an object to within a few centimeters.
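
To make the frequency comparison concrete, here is a hedged Python sketch of the standard textbook relation for a linear frequency sweep. The sweep duration and the example beat frequency are assumptions chosen for illustration; the article gives only the approximate 5-to-7-gigahertz band.

```python
C = 299_792_458.0  # speed of light in m/s

def sweep_slope(bandwidth_hz: float, sweep_time_s: float) -> float:
    """Rate at which the transmitted frequency rises, in Hz per second."""
    return bandwidth_hz / sweep_time_s

def round_trip_distance(beat_hz: float, slope_hz_per_s: float) -> float:
    """Path length transmitter -> reflector -> receiver, in meters.

    While the transmitter sweeps upward, a reflection that arrives
    tau seconds late lags the transmitted frequency by slope * tau.
    Measuring that frequency gap (the beat) therefore yields tau.
    """
    tau = beat_hz / slope_hz_per_s
    return C * tau

BANDWIDTH_HZ = 2.0e9    # roughly the 5-to-7 GHz span mentioned in the text
SWEEP_TIME_S = 2.5e-3   # hypothetical sweep duration
slope = sweep_slope(BANDWIDTH_HZ, SWEEP_TIME_S)

beat_hz = 24_000.0      # hypothetical measured frequency difference
delay_ns = beat_hz / slope * 1e9
distance_m = round_trip_distance(beat_hz, slope)
print(f"delay {delay_ns:.1f} ns, round-trip path {distance_m:.2f} m")
```

With these assumed numbers, a frequency gap of 24 kilohertz corresponds to a delay of 30 nanoseconds, so a quantity that is awkward to time directly becomes an audio-range frequency that is easy to measure.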

Using one transmitting antenna and multiple receiving antennas mounted at different positions allowed us to measure radio reflections for each transmit-receive antenna pair. Those measurements showed the amount of time it took for a radio signal to leave the transmitter, reach the person in the next room, and reflect back to the receiver, typically several tens of nanoseconds. Multiplying that very short time interval by the speed of light gives the distance the signal traveled from the transmitter to the person and back to the receiver. As you might recall from middle-school geometry class, that distance defines an ellipse, with the two antennas located at the ellipse’s two foci. The person generating the reflection in the next room must be located somewhere on that ellipse.
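
The geometry in the preceding paragraph can be sketched in Python as follows. The antenna coordinates and the 30-nanosecond delay are hypothetical, and the function name is invented for this example.

```python
import math

C = 299_792_458.0  # speed of light in m/s

def ellipse_from_delay(tx, rx, delay_s):
    """Return (center, semi_major, semi_minor) of the ellipse on which
    the reflector must lie, given the measured travel time."""
    path = C * delay_s             # transmitter -> person -> receiver
    focal = math.dist(tx, rx)      # distance between the two foci
    if path <= focal:
        raise ValueError("delay is too short to be a reflection")
    a = path / 2                   # the focal distances sum to 2a
    b = math.sqrt(a * a - (focal / 2) ** 2)
    center = ((tx[0] + rx[0]) / 2, (tx[1] + rx[1]) / 2)
    return center, a, b

tx = (0.0, 0.0)                    # hypothetical antenna positions, in meters
rx = (1.0, 0.0)
center, a, b = ellipse_from_delay(tx, rx, 30e-9)

# Sanity check: the top of the ellipse is one possible reflector position,
# and its distances to the two antennas sum to the measured path length.
top = (center[0], center[1] + b)
path_check = math.dist(top, tx) + math.dist(top, rx)
print(f"semi-axes: {a:.3f} m and {b:.3f} m, path {path_check:.3f} m")
```

A single antenna pair narrows the position down to a curve, not a point, which is why more receivers are needed.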

With two receiving antennas, we could map out two such ellipses, which intersected at the person’s location. With more than two receiving antennas, it was possible to locate the person in 3D—you could tell whether a person was standing up or lying on the floor, for example. Things get trickier if you want to locate multiple people this way, but as we later showed, with enough antennas, it’s doable.
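
As an illustration of intersecting two ellipses, the sketch below recovers a 2D position from two round-trip path lengths using a generic Newton iteration. This is a stand-in chosen for brevity, not necessarily the method the system uses. The antenna layout, the starting guess, and the test position are all assumptions.

```python
import math

def path_length(p, tx, rx):
    """Distance transmitter -> point p -> receiver."""
    return math.dist(p, tx) + math.dist(p, rx)

def unit(p, q):
    """Unit vector pointing from q toward p."""
    d = math.dist(p, q)
    return ((p[0] - q[0]) / d, (p[1] - q[1]) / d)

def locate(tx, rx1, rx2, d1, d2, guess=(0.0, 3.0), iters=20):
    """Find the point whose path lengths to both antenna pairs match d1 and d2.

    Starting with y > 0 selects the intersection in front of the antennas;
    the mirror-image solution behind them is ignored.
    """
    x, y = guess
    for _ in range(iters):
        p = (x, y)
        f1 = path_length(p, tx, rx1) - d1
        f2 = path_length(p, tx, rx2) - d2
        u0, u1, u2 = unit(p, tx), unit(p, rx1), unit(p, rx2)
        # Jacobian rows are the gradients of the two path lengths.
        a, b = u0[0] + u1[0], u0[1] + u1[1]
        c, d = u0[0] + u2[0], u0[1] + u2[1]
        det = a * d - b * c
        x -= (d * f1 - b * f2) / det
        y -= (a * f2 - c * f1) / det
    return x, y

# Hypothetical layout: one transmitter flanked by two receivers along a wall.
tx, rx1, rx2 = (0.0, 0.0), (-1.0, 0.0), (1.0, 0.0)
person = (1.5, 4.0)
d1 = path_length(person, tx, rx1)   # stands in for a measured distance
d2 = path_length(person, tx, rx2)

x_est, y_est = locate(tx, rx1, rx2, d1, d2)
print(f"estimated position: ({x_est:.3f}, {y_est:.3f})")
```

With antennas along a single line, the two ellipses also cross at a mirror-image point on the other side of that line, so the starting guess encodes the assumption that the person is in the room being observed.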

It’s easy to think of applications for such a system. Smart homes could track the location of their occupants and adjust the heating and cooling of different rooms. You could monitor elderly people, to be sure they hadn’t fallen or otherwise become immobilized, without requiring these seniors to wear a radio transmitter. We even developed a way for our system to track someone’s arm gestures, enabling the user to control lights or appliances by pointing at them.

The natural next step for our research team—which by this time also included graduate students Chen-Yu Hsu and Hongzi Mao, and Professor Frédo Durand—was to capture a human silhouette through the wall.

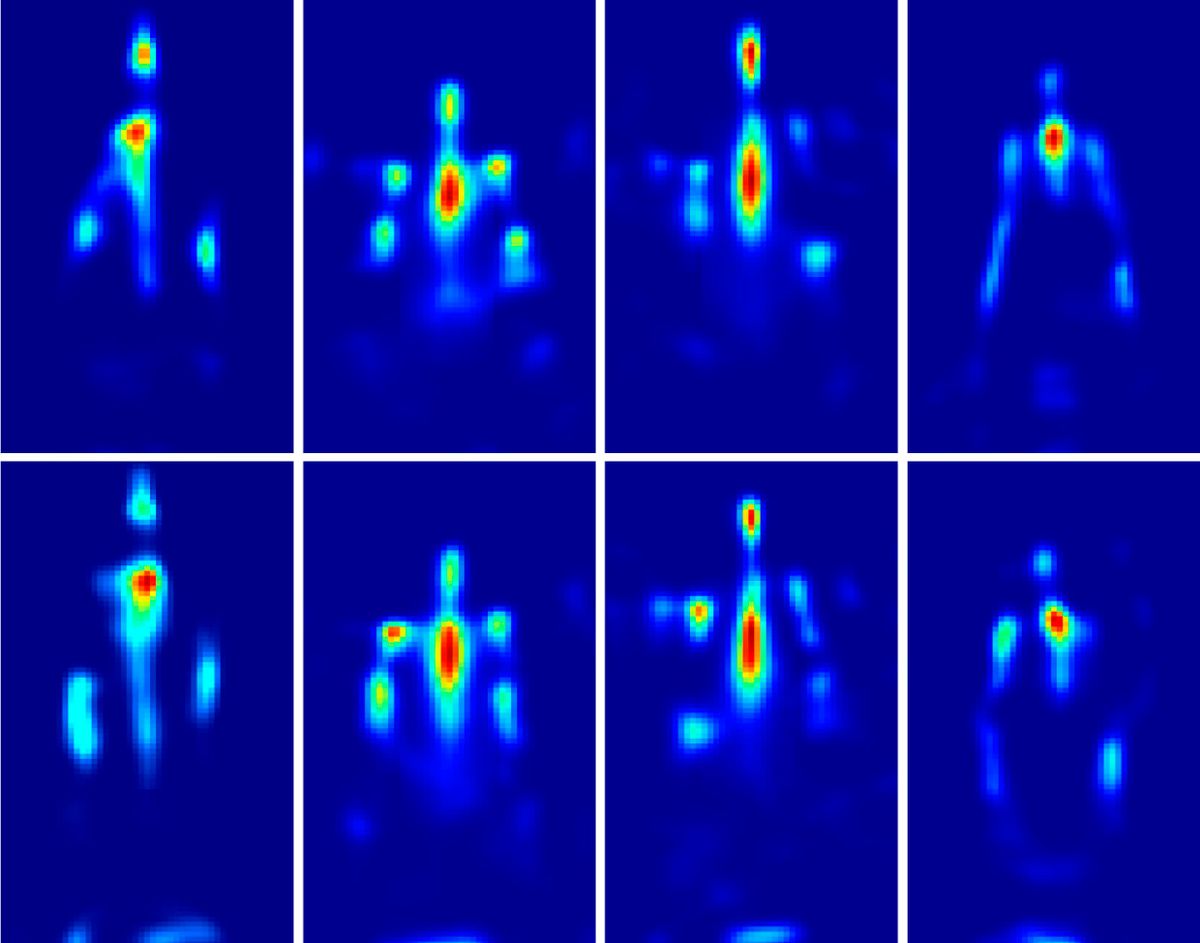

The fundamental challenge here was that at Wi-Fi frequencies, the reflections from some body parts would bounce back toward the receiving antenna, while other reflections would go off in other directions. So our wireless imaging device would capture some body parts but not others, and we didn’t know which body parts they were.

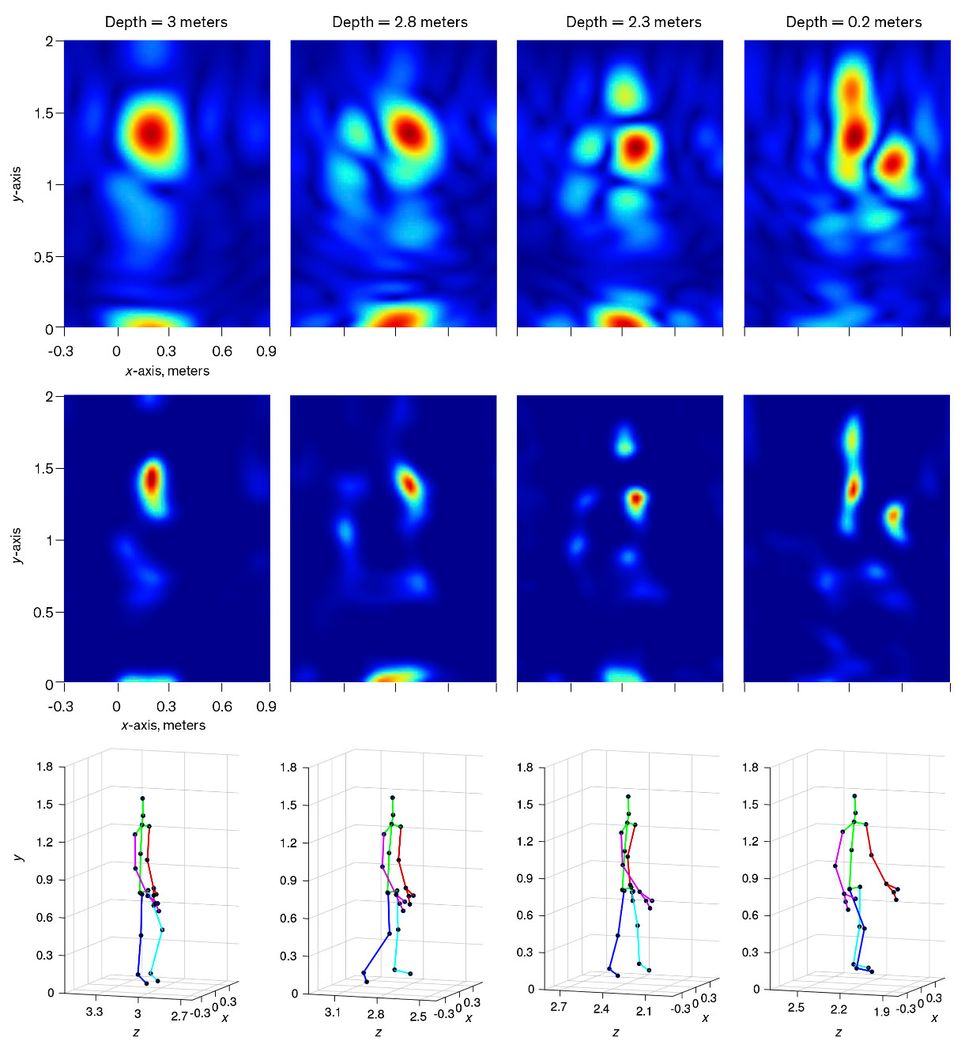

Our solution was quite simple: We aggregated the measurements over time. That works because as a person moves, different body parts as well as different perspectives of the same body part get exposed to the radio waves. We designed an algorithm that uses a model of the human body to stitch together a series of reflection snapshots. Our device was then able to reconstruct a coarse human silhouette, showing the location of a person’s head, chest, arms, and feet.

While this isn’t the X-ray vision of Superman fame, the low resolution might be considered a feature rather than a bug, given the concerns people have about privacy. And we later showed that the ghostly images were of sufficient resolution to identify different people with the help of a machine-learning classifier. We also showed that the system could be used to track the palm of a user to within a couple of centimeters, which means we might someday be able to detect hand gestures.

Initially, we assumed that our system could track only a person who was moving. Then one day, I asked the test subject to remain still during the initialization stage of our device. Our system accurately registered his location despite the fact that we had designed it to ignore static objects. We were surprised.

Ghost in the Machine

It’s possible to form a ghostly silhouette of a person reflecting radio waves [see topmost set of images]. The first step is simply to form heat maps that show where radio energy is being reflected from as the subject moves [top row]. Because these images are obtained when the person is at different distances from the antennas, an adjustment has to be made to account for the geometrical spreading of the radio waves [middle row]. The adjusted images are then combined with a model of the human head, torso, and limbs to form a final image. For comparison, a Kinect was used to measure the person’s movements [bottom row].

Looking more closely at the output of the device, we realized that a crude radio image of our subject was appearing and disappearing—with a periodicity that matched his breathing. We had not realized that our system could capture human breathing in a typical indoor setting using such low-power wireless signals. The reason this works, of course, is that the slight movements associated with chest expansion and contraction affect the wireless signals. Realizing this, we refined our system and algorithms to accurately monitor breathing.
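
One simple way to turn such a periodic signal into a breathing rate is to look for the strongest frequency within a plausible range. The Python sketch below does this on synthetic data. It is an illustrative assumption about how such an estimate could be made, not a description of the refined algorithms mentioned above, and the sampling rate, amplitudes, and search band are all invented.

```python
import cmath
import math
import random

def dominant_rate_bpm(samples, fs_hz, lo_bpm=6.0, hi_bpm=40.0, step_bpm=0.5):
    """Scan candidate rates and return the one carrying the most signal power."""
    mean = sum(samples) / len(samples)
    centered = [s - mean for s in samples]
    best_bpm, best_power = lo_bpm, -1.0
    bpm = lo_bpm
    while bpm <= hi_bpm:
        f = bpm / 60.0  # cycles per second
        acc = sum(v * cmath.exp(-2j * math.pi * f * n / fs_hz)
                  for n, v in enumerate(centered))
        power = abs(acc) ** 2
        if power > best_power:
            best_bpm, best_power = bpm, power
        bpm += step_bpm
    return best_bpm

# Synthetic one-minute recording: 15 breaths per minute, a much weaker
# 72-beat-per-minute component, and measurement noise (all values assumed).
FS_HZ = 10.0
rng = random.Random(0)
signal = [
    math.sin(2 * math.pi * (15 / 60) * n / FS_HZ)
    + 0.1 * math.sin(2 * math.pi * (72 / 60) * n / FS_HZ)
    + rng.gauss(0.0, 0.2)
    for n in range(600)
]

breathing_bpm = dominant_rate_bpm(signal, FS_HZ)
print(f"estimated breathing rate: {breathing_bpm:.1f} breaths per minute")
```

Restricting the search to 6 to 40 cycles per minute keeps the faster heartbeat component from being mistaken for breathing.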

Earlier research in the literature had shown the possibility of using radar to detect breathing and heartbeats, and after scrutinizing the received signals more closely, we discovered that we could also measure someone’s pulse. That’s because, as the heart pumps blood, the resulting forces cause different body parts to oscillate in subtle ways. Although the motions are tiny, our algorithms could zoom in on them and track them with high accuracy, even in environments with lots of radio noise and with multiple subjects moving around, something earlier researchers hadn’t achieved.

By this point it was clear that there were important real-world applications, so we were excited to spin off a company, which we called Emerald, to commercialize our research. Wearing our Emerald hats, we participated in the MIT $100K Entrepreneurship Competition and made it to the finals. While we didn’t win, we did get invited to showcase our device in August 2015 at the White House’s “Demo Day,” an event that President Barack Obama organized to show off innovations from all over the United States.

It was thrilling to demonstrate our work to the president, who watched as our system detected my falling down and monitored my breathing and heartbeat. He noted that the system would make for a good baby monitor. Indeed, one of the most interesting tests we had done was with a sleeping baby.

Video on a typical baby monitor doesn’t show much. But outfitted with our device, such a monitor could measure the baby’s breathing and heart rate without difficulty. This approach could also be used in hospitals to monitor the vital signs of neonatal and premature babies. These infants have very sensitive skin, making it problematic to attach traditional sensors to them.

About three years ago, we decided to try sensing human emotions with wireless signals. And why not? When a person is excited, his or her heart rate increases; when blissful, the heart rate declines. But we quickly realized that breathing and heart rate alone would not be sufficient. After all, our heart rates are also high when we’re angry and low when we’re sad.

Looking at past research in affective computing—the field of study that tries to recognize human emotions from such things as video feeds, images, voice, electroencephalography (EEG), and electrocardiography (ECG)—we learned that the most important vital sign for recognizing human emotions is the millisecond variation in the intervals between heartbeats. That’s a lot harder to measure than average heart rate. And in contrast to ECG signals, which have very sharp peaks, the shape of a heartbeat signal on our wireless device isn’t known ahead of time, and the signal is quite noisy. To overcome these challenges, we designed a system that learns the shape of the heartbeat signal from the pattern of wireless reflections and then uses that shape to recover the length of each individual beat.
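
To show what "variation in the intervals between heartbeats" means numerically, here is a small Python sketch that starts from beat timestamps and computes two summary statistics widely used in heart-rate-variability work, SDNN and RMSSD. These are assumed here purely for illustration and are not necessarily the features the system relies on. The timestamps are invented, and recovering them from noisy wireless reflections is the hard part that this sketch does not attempt.

```python
import math

def inter_beat_intervals_ms(beat_times_s):
    """Gaps between consecutive heartbeats, in milliseconds."""
    return [(b - a) * 1000.0 for a, b in zip(beat_times_s, beat_times_s[1:])]

def sdnn_ms(ibis):
    """Standard deviation of the inter-beat intervals."""
    mean = sum(ibis) / len(ibis)
    return math.sqrt(sum((v - mean) ** 2 for v in ibis) / (len(ibis) - 1))

def rmssd_ms(ibis):
    """Root mean square of successive differences between intervals."""
    diffs = [b - a for a, b in zip(ibis, ibis[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))

# Hypothetical beat times in seconds.
beat_times = [0.000, 0.812, 1.640, 2.455, 3.290, 4.101]

ibis = inter_beat_intervals_ms(beat_times)
mean_bpm = 60_000.0 / (sum(ibis) / len(ibis))
print("intervals (ms):", [round(v) for v in ibis])
print(f"mean rate {mean_bpm:.1f} bpm, "
      f"SDNN {sdnn_ms(ibis):.1f} ms, RMSSD {rmssd_ms(ibis):.1f} ms")
```

In this made-up example the average rate is about 73 beats per minute, yet the individual intervals differ by 10 to 25 milliseconds from one beat to the next. Those small differences are exactly what an average heart rate hides.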

Using features from these heartbeat signals as well as from the person’s breathing patterns, we trained a machine-learning system to classify them into one of four fundamental emotional states: sadness, anger, pleasure, and joy. Sadness and anger are both negative emotions, but sadness is a calm emotion, whereas anger is associated with excitement. Pleasure and joy, both positive emotions, are similarly associated with calm and excited states.

Testing our system on different people, we demonstrated that it could accurately sense emotions 87 percent of the time when the testing and training were done on the same subject. When it hadn’t been trained on the subject’s data, it still recognized the person’s emotions with more than 73 percent accuracy.

In October 2016, fellow graduate student Mingmin Zhao, Katabi, and I published a scholarly article about these results, which got picked up in the popular press. A few months later, our research inspired an episode of the U.S. sitcom “The Big Bang Theory.” In the episode, the characters supposedly borrow the device we’d developed to try to improve Sheldon’s emotional intelligence.

While it’s unlikely that a wireless device could ever help somebody in this way, using wireless signals to recognize basic human mental states could have other practical applications. For example, it might help a virtual assistant like Amazon’s Alexa recognize a user’s emotional state.

There are many other possible applications that we’ve only just started to explore. Today, Emerald’s prototypes are in more than 200 homes, where they are monitoring test subjects’ sleep and gait. Doctors at Boston Medical Center, Brigham and Women’s Hospital, Massachusetts General Hospital, and elsewhere will use the data to study disease progression in patients with Alzheimer’s, Parkinson’s, and multiple sclerosis. We hope that in the not-too-distant future, anyone will be able to purchase an Emerald device.

When someone asks me what’s next for wireless sensing, I like to answer by asking them what their favorite superpower is. Very possibly, that’s where this technology is going.

This article appears in the June 2019 print issue as “Seeing With Radio.”

About the Author

Fadel Adib is an assistant professor at MIT and founding director of the Signal Kinetics research group at the MIT Media Lab.