The history of AI is often told as the story of machines getting smarter over time. What’s lost in that narrative is the human element: how intelligent machines are designed, trained, and powered by human minds and bodies.

In this six-part series, we explore that human history of AI—how innovators, thinkers, workers, and sometimes hucksters have created algorithms that can replicate human thought and behavior (or at least appear to). While it can be exciting to be swept up by the idea of super-intelligent computers that have no need for human input, the true history of smart machines shows that our AI is only as good as we are.

Part 3: Turing’s Error

In 1950, at the dawn of the digital age, Alan Turing published what was to become his best-known article, “Computing Machinery and Intelligence,” in which he posed the question, “Can machines think?”

Instead of trying to define the terms “machine” and “think,” Turing outlines a different method for answering this question derived from a Victorian parlor amusement called the imitation game. The rules of the game stipulated that a man and a woman, in different rooms, would communicate with a judge via handwritten notes. The judge had to guess who was who, but their task was complicated by the fact that the man was trying to imitate a woman.

Inspired by this game, Turing devised a thought experiment in which one contestant was replaced by a computer. If this computer could be programmed to play the imitation game so well that the judge couldn’t tell if he was talking to a machine or a human, then it would be reasonable to conclude, Turing argued, that the machine was intelligent.

This thought experiment became known as the Turing test, and to this day it remains one of the best-known and most contentious ideas in AI. The enduring appeal of the test is that it promises an unambiguous answer to the philosophically fraught question: “Can machines think?” If the computer passes Turing’s test, then the answer is yes. As philosopher Daniel Dennett has written, Turing’s test was supposed to be a philosophical conversation stopper. “Instead of arguing interminably about the ultimate nature and essence of thinking,” Dennett writes, “why don’t we all agree that whatever that nature is, anything that could pass this test would surely have it.”

But a closer reading of Turing’s paper reveals a small detail that introduces ambiguity into the test, suggesting that perhaps Turing meant it more as a philosophical provocation about machine intelligence than as a practical test.

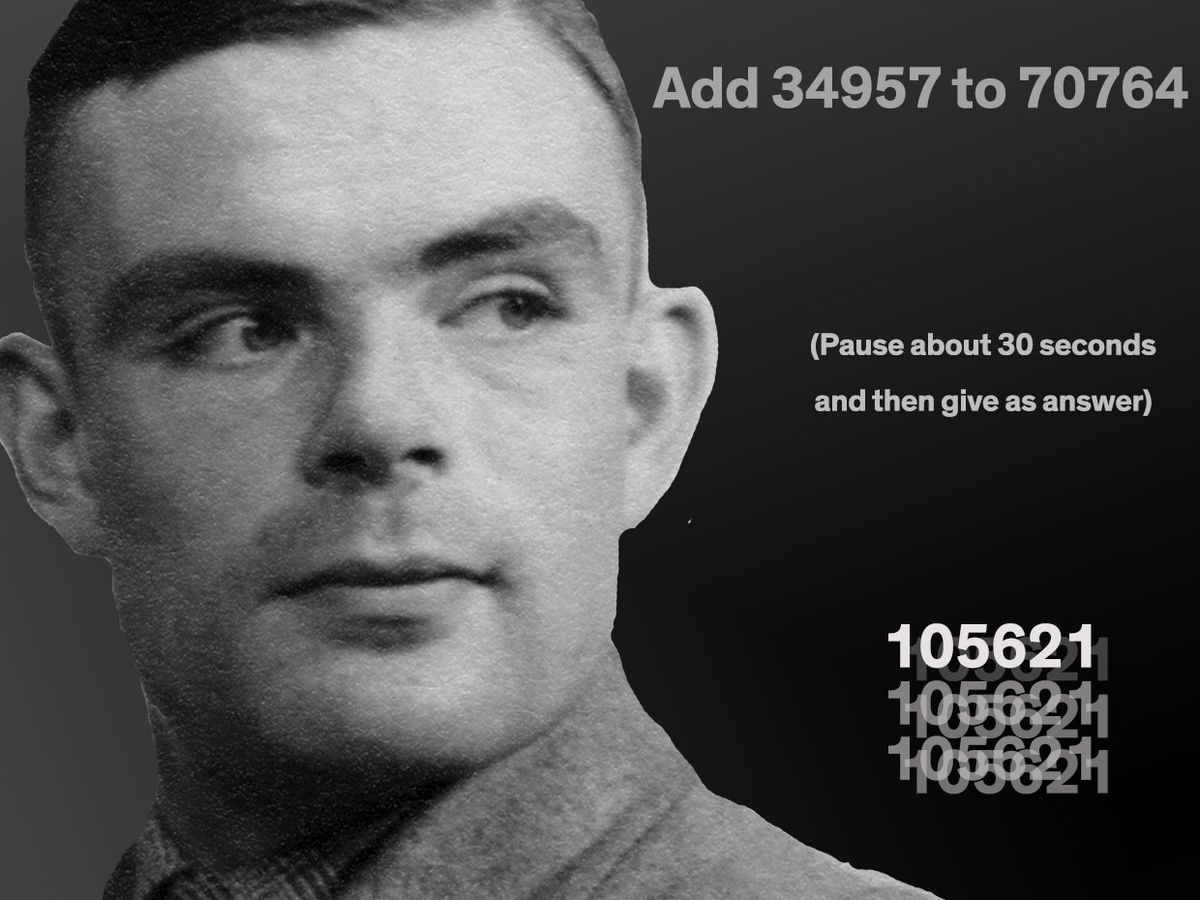

In one section of “Computing Machinery and Intelligence,” Turing simulated what the test might look like with an imagined intelligent computer of the future. (The human is asking questions, the computer responding.)

Q: Please write me a sonnet on the subject of the Forth Bridge.

A: Count me out on this one. I never could write poetry.

Q: Add 34957 to 70764.

A: (Pause about 30 seconds and then give as answer) 105621.

Q: Do you play chess?

A: Yes.

Q: I have K at my K1, and no other pieces. You have only K at K6 and R at R1. It is your move. What do you play?

A: (After a pause of 15 seconds) R-R8 mate.

In this exchange, the computer has actually made a mistake with the arithmetic. The real sum of the numbers is 105721, not 105621. It’s unlikely that Turing, a brilliant mathematician, left this mistake there by accident. More likely, it’s a type of Easter egg for the alert reader.

Turing seemed to suggest elsewhere in his article that the miscalculation was a programming trick, a sleight of hand to fool a judge. Turing understood that if careful readers of the computer’s response picked up the mistake, they would believe that they were corresponding with a human, assuming that a machine would not make such a basic arithmetic error. A machine could be programmed to “deliberately introduce mistakes in a manner calculated to confuse the interrogator,” Turing wrote.
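The arithmetic is easy to check, and the trick Turing describes is easy to sketch. The Python below is a hypothetical illustration, not anything taken from Turing’s paper: the function name `sum_with_slip`, the `error_rate` parameter, and the choice to model a mistake as one unit subtracted at a random place value are all assumptions made for the example.

```python
import random

# The sum from Turing's imagined dialogue, and the answer his machine gave.
CORRECT = 34957 + 70764   # 105721
TURING_ANSWER = 105621    # short by exactly 100


def sum_with_slip(a, b, rng, error_rate=0.3):
    """Add two non-negative integers, occasionally returning a wrong answer.

    Hypothetical sketch: with probability `error_rate`, subtract one unit
    at a randomly chosen place value, roughly as a person might when
    dropping a carry.
    """
    total = a + b
    if total >= 10 and rng.random() < error_rate:
        # Skip the leading digit's place so the answer still looks
        # about right at a glance.
        place = rng.randrange(len(str(total)) - 1)
        total -= 10 ** place
    return total


if __name__ == "__main__":
    rng = random.Random()
    print(CORRECT)                  # 105721
    print(CORRECT - TURING_ANSWER)  # 100
    # Usually the true sum, sometimes a near miss.
    print(sum_with_slip(34957, 70764, rng))
```

With `error_rate` set to 0, the sketch never errs. Turing’s point is that a judge might find such a machine less convincingly human, not more.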

While the idea of using mistakes as a way to imply human intelligence might have been hard to comprehend in 1950, it has become a design practice for programmers working in natural language processing. For example, in June 2014, a chatbot called Eugene Goostman was reported to have become the first computer to pass the Turing test. But critics were quick to point out that Eugene only passed the test because of a built-in cheat: Eugene simulated a 13-year-old boy with English as a second language. This meant that his errors in syntax and grammar and his incomplete knowledge were read as naivety and immaturity, rather than as shortcomings in natural language processing ability.

Similarly, after Google’s voice assistant system, Duplex, wowed crowds with its human-like ums and ahs in 2018, many pointed out that these weren’t the consequence of genuine thinking on the part of the system, but hand-coded hesitations that simulated human cognition.

Both cases are enactments of Turing’s idea that computers can be designed to make simple mistakes in order to give the impression of humanness. Like Turing, the programmers of Eugene Goostman and Duplex understood that a surface-level guise of human fallibility can be enough to fool us.

Perhaps the Turing test doesn’t assess whether a machine is intelligent, but whether we’re willing to accept it as intelligent. As Turing himself said: “The idea of intelligence is itself emotional rather than mathematical. The extent to which we regard something as behaving in an intelligent manner is determined as much by our own state of mind and training as by the properties of the object under consideration.”

And perhaps, Turing seemed to suggest, intelligence is not a substance that can be programmed into a machine but a quality constructed through social interaction.

This is the third installment of a six-part series on the untold history of AI. Part 2 told the story of the forgotten women who programmed the first electronic digital computer in the United States. Come back next Monday for Part 4, which delves into DARPA’s earliest dreams of cyborg intelligence.