So you want to build a brain-machine interface. With 100 billion neurons in the brain, you'd be right to wonder how a few measly electrodes could possibly extract enough information to learn anything interesting about what those brain cells are up to.

Most implanted brain-machine interfaces consist of a couple dozen electrodes recording the waveforms of neurons firing in one specific part of the brain. Biomedical engineers have gone so far as to collect recordings from about 100 channels, but that may well be insufficient; perhaps we need 10 000 to truly grasp why we coo over photos of chubby cats and belt out show tunes in the shower. More channels, though, bring a new kind of problem. Because it can't have any wires dangling from the scalp, an implanted system has only limited bandwidth for getting data out. An interface with 1024 electrodes, for example, might end up producing about 250 megabits per second, estimates Andrew Mason, an electrical and computer engineering professor at Michigan State University, in East Lansing. For today's implantable transmitters, that is simply too much.
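Where does a number like that come from? Here is a back-of-the-envelope sketch. It assumes each channel is sampled at 25 kHz, the per-channel rate Mason's group quotes for its spike detector, and digitized at 10 bits. The 10-bit resolution is our assumption, since the article doesn't give one.

```python
# Rough raw data rate for an implanted electrode array.
# Assumptions (not from the article): 10-bit samples, no framing overhead.

def raw_data_rate_bps(channels: int, sample_rate_hz: int, bits_per_sample: int) -> int:
    """Return the uncompressed data rate in bits per second."""
    return channels * sample_rate_hz * bits_per_sample


rate = raw_data_rate_bps(channels=1024, sample_rate_hz=25_000, bits_per_sample=10)
print(f"{rate / 1e6:.0f} Mbps")  # 256 Mbps
```

At those settings, the raw stream comes to roughly 256 megabits per second, which is in the same range as Mason's estimate. A higher-resolution converter or more channels would push the rate up proportionally.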

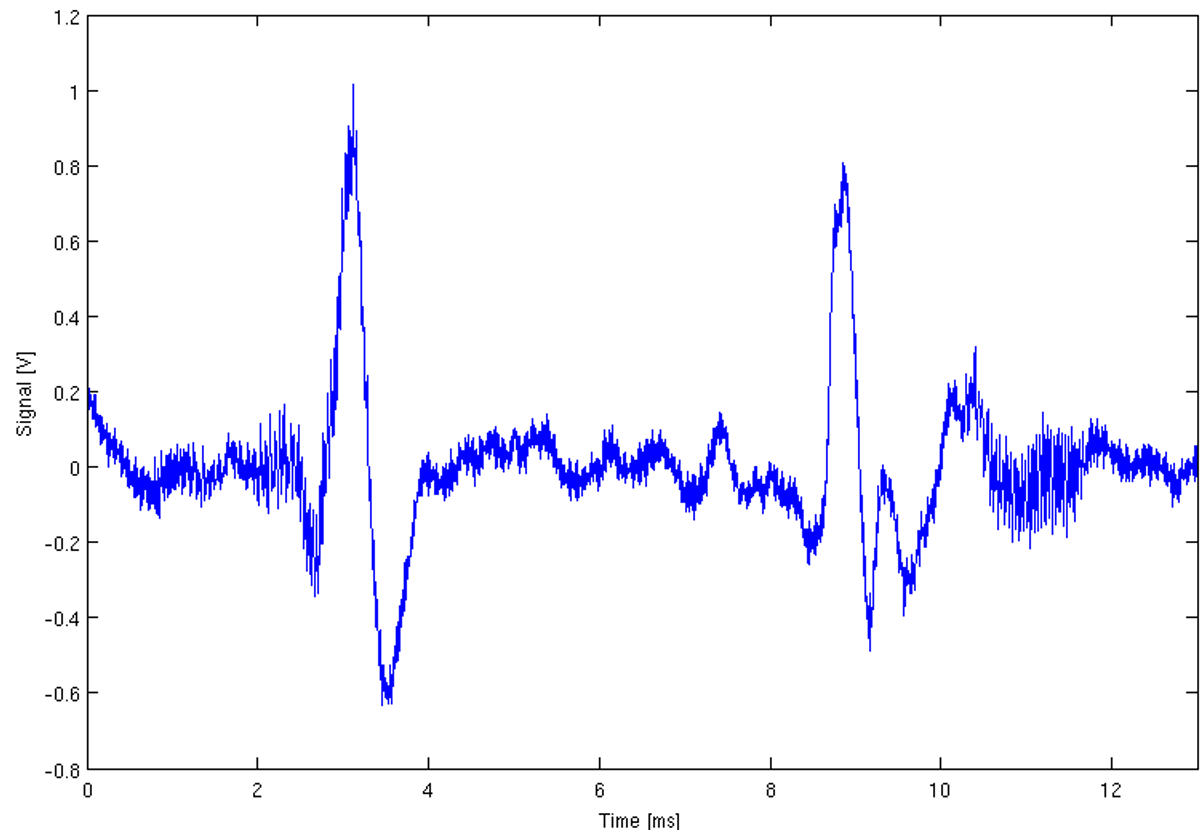

Neuroscientists already have methods for parsing brain data off-chip, namely for sifting out the distinctive up-spike, down-spike, undulating-tail pattern that is the action potential of a firing neuron. But those techniques, which might compare a neural recording against a library of existing spike templates, tend to be power-hungry and computationally demanding, and they require hardware too large to implant in the brain. So to handle the large electrode arrays of the near future, new data-reduction techniques will need to sift through the recordings on-chip and in-brain.
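To see why template matching gets expensive, consider the deliberately brute-force sketch below. It compares every stored template, sample by sample, against every window of the recording and scores each comparison by the sum of squared differences. The function names, the scoring rule, and the template sizes are illustrative assumptions. They do not describe any particular lab's software.

```python
def sum_squared_diff(window, template):
    """Sum of squared differences between two equal-length sequences."""
    return sum((w - t) ** 2 for w, t in zip(window, template))


def best_template_match(signal, templates):
    """Exhaustively compare every template with every window of the signal.

    Returns (template_index, offset, error) for the lowest-error match.
    """
    width = len(templates[0])
    best = (-1, -1, float("inf"))
    for offset in range(len(signal) - width + 1):
        window = signal[offset:offset + width]
        for k, template in enumerate(templates):
            err = sum_squared_diff(window, template)
            if err < best[2]:
                best = (k, offset, err)
    return best


def match_cost(signal_len, n_templates, width):
    """Multiply-accumulate operations for one exhaustive pass over the signal."""
    return (signal_len - width + 1) * n_templates * width


templates = [[0, 5, -3, -1, 0], [0, 2, 2, -2, 0]]   # toy spike shapes
signal = [0, 0, 0, 5, -3, -1, 0, 0]
print(best_template_match(signal, templates))        # (0, 2, 0)
print(match_cost(25_000, 3, 48))                     # 3593232
```

Take three hypothetical 48-sample templates, each about 2 ms at 25 kHz. Matching them against one second of a single channel already takes about 3.6 million multiply-accumulate operations, and that cost grows linearly with the number of channels and templates.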

The constraints are daunting: The compression algorithm needs to run in real time, it should be simple, and it must, of course, be accurate. Mason and the students in his laboratory at Michigan State University reported one possible solution at the IEEE Biomedical Circuits and Systems Conference last week. They designed an ultra-low-power circuit for detecting spikes, then came up with a method for picking out the segments of the waveforms worth sending off-brain.

The circuit first assesses the level of noise in the data and then chooses one of two ways to process it. Most of the time the data isn't overwhelmingly noisy, so a very simple technique that consumes hardly any power at all will do. If the data is noisy, though, it goes through another method, called a stationary wavelet transform (SWT). The SWT is a bunch of math requiring 16 additions and 16 multiplications; in a nutshell, that is too much for both this blogger's brain and any implanted hardware. But with some minor compromises, Mason and his students were able to squeeze a version of the SWT onto the circuit. For one channel operating at 25 kHz, the detector drew just 450 nanowatts on a 0.082-square-millimeter CMOS circuit, an acceptable size for an implant.
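The article doesn't spell out how the circuit measures noise or what the "very simple technique" is, so the sketch below fills those gaps with common textbook choices. All of them are assumptions:

* Noise is estimated with the median absolute deviation, scaled by 0.6745, a standard convention for Gaussian noise.
* The quiet-data path is a plain amplitude threshold.
* The noisy-data path thresholds one detail band of an undecimated Haar wavelet transform. This is a generic form of the SWT, not the hardware-friendly version Mason's group built.

The switch point `noise_limit` and the threshold multiplier `k` are hypothetical parameters.

```python
import math
import statistics


def mad_noise_sigma(x):
    """Robust noise estimate: median absolute deviation scaled to a Gaussian sigma."""
    med = statistics.median(x)
    return statistics.median(abs(v - med) for v in x) / 0.6745


def haar_swt_details(x, levels):
    """Undecimated (stationary) Haar wavelet transform.

    Returns one detail band per level, each the same length as x. Instead of
    downsampling, pass j (counting from 0) spaces the filter taps 2**j samples
    apart. Python's negative indexing wraps around, i.e. periodic boundaries.
    """
    approx = list(x)
    details = []
    for j in range(levels):
        step = 2 ** j
        detail = [(approx[n] - approx[n - step]) / math.sqrt(2) for n in range(len(approx))]
        approx = [(approx[n] + approx[n - step]) / math.sqrt(2) for n in range(len(approx))]
        details.append(detail)
    return details


def threshold_crossings(y, threshold):
    """Indices where |y| first rises above the threshold."""
    return [n for n in range(len(y))
            if abs(y[n]) > threshold and (n == 0 or abs(y[n - 1]) <= threshold)]


def detect_spikes(x, noise_limit, k=4.0, level=2):
    """Choose a detector from the measured noise level (hypothetical policy)."""
    sigma = mad_noise_sigma(x)
    if sigma <= noise_limit:
        # Quiet data: a plain amplitude threshold is cheap and good enough.
        return "threshold", threshold_crossings(x, k * sigma)
    # Noisy data: threshold one SWT detail band, using that band's own noise estimate.
    band = haar_swt_details(x, level)[level - 1]
    return "swt", threshold_crossings(band, k * mad_noise_sigma(band))


quiet = [0.1 if n % 2 == 0 else -0.1 for n in range(100)]   # toy background
quiet[50] = 5.0                                              # one large spike
print(detect_spikes(quiet, noise_limit=1.0))   # ('threshold', [50])
```

The design point is that the expensive path runs only when the cheap noise estimate calls for it. The SWT also skips downsampling, so every band stays as long as the input. That makes it shift-invariant: delaying the spike simply delays its coefficients.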

Once you've found a spike, another algorithm decides which data are worth keeping, as the authors reported in a separate paper. The most distinctive features of a neuron's spike are its amplitude (or energy), the relative positions of its positive and negative peaks, and its width. Taken together, those parameters can be used to derive a new feature, namely where the waveform crosses the x axis before and after a spike. That stretch is considered the data-rich part of a neural recording, so the algorithm extends its window a little beyond each zero crossing to give each spike a buffer. Using this zero-crossing method, the researchers were able to compress the data to an impressive 2 percent of its original size.
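The paper's exact procedure isn't described here, so what follows is only a minimal sketch of the zero-crossing idea under stated assumptions. It assumes the spike is biphasic and that its detected peak index is already known. The kept window runs from the zero crossing before the peak to the crossing that ends the opposite-polarity phase. It is then padded by a hypothetical `buffer` of samples on each side, and everything outside the windows is dropped.

```python
def walk_to_crossing(x, i, direction):
    """Step from index i in `direction` (+1 or -1) while the next sample has the
    same sign; return the index reached just before a sign change (or a zero)."""
    while 0 <= i + direction < len(x) and x[i] * x[i + direction] > 0:
        i += direction
    return i


def spike_window(x, peak, buffer=2):
    """Hypothetical zero-crossing window around a biphasic spike.

    start: first sample of the phase containing the peak
    end:   last sample of the opposite-polarity phase that follows it
    Both ends are then padded by `buffer` samples (clipped to the record).
    """
    start = walk_to_crossing(x, peak, -1)
    mid = walk_to_crossing(x, peak, +1)                        # end of first phase
    end = walk_to_crossing(x, min(mid + 1, len(x) - 1), +1)    # end of second phase
    return max(start - buffer, 0), min(end + buffer, len(x) - 1)


def compress(x, peaks, buffer=2):
    """Keep only the windowed samples; return (segments, fraction_kept)."""
    segments = []
    for p in peaks:
        s, e = spike_window(x, p, buffer)
        segments.append((s, x[s:e + 1]))   # store start index plus samples
    kept = sum(len(samples) for _, samples in segments)
    return segments, kept / len(x)


trace = [0, 0, 0, 0, 1, 3, 5, 2, -1, -4, -2, -1, 0, 0, 0, 0, 0, 0, 0, 0]
print(spike_window(trace, peak=6))        # (2, 13)
print(compress(trace, [6])[1])            # 0.6
```

The fraction kept is the compression figure. On this 20-sample toy trace it is large, but on a real recording spikes are sparse, and each window spans a few dozen samples out of tens of thousands per second. This sketch also double-counts overlapping windows from closely spaced spikes; a fuller version would merge them.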

If you get nothing else from this post, consider this: In contrast with much of the engineering done in a world where computing is cheap and software can get away with being woefully messy, biomedical applications are often elegant by necessity. In few other domains will you see as clear a demonstration of the value of ultra-low-power simplicity.