Bits of History

Artifacts from the first 2000 years of computing at the Computer History Museum

In some ways, collecting old computers isn’t much different from collecting anything old: You have to take care of the stuff. “Is it decaying?” asks Dag Spicer, senior curator at the Computer History Museum, in Mountain View, Calif. He describes the remains of sound-dampening foam that once hushed the whir of cooling fans in 1960s and ’70s mainframes. “It turns into a tarry mess—really gross, black sludge,” he says. That’s relatively easy to clean out, but some troubles require more technical expertise. Reading the information on a 1950s disk stack might be hard, says Spicer, a circuit designer turned historian, but harder still is making sense of it. “Do you recognize what these bits are?” he asks, explaining the need for both obsolete hardware and outdated operating systems.

Despite such challenges, Spicer has helped the Computer History Museum acquire more than 100 000 technological artifacts, building the largest collection of its kind. Of these, the museum has selected 1200 for a new exhibit called Revolution: The First 2000 Years of Computing.

The curator estimates that 3000 people come each week to see Revolution, which opened in January 2011. The stories behind the artifacts attract all those visitors, says the museum’s president, John Hollar, who particularly enjoys exhibits that highlight tales of engineering triumph. A favorite artifact is a piece of the Apollo Guidance Computer, which helped put men on the moon despite having only 36 kilobytes of memory. But Spicer admits that the computer relics draw visitors for simpler reasons too. Sans sludge, “they’re very beautiful objects,” he says.

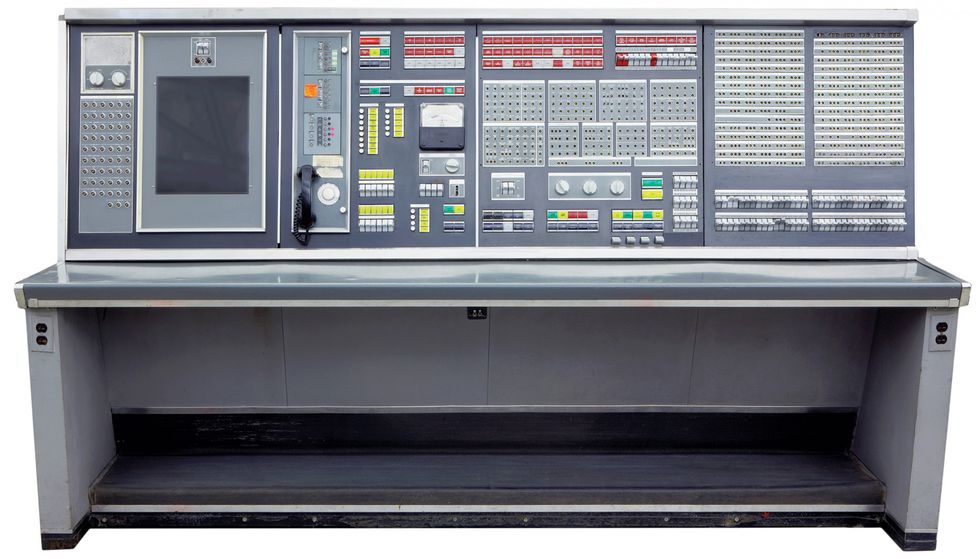

Cold War Computer: The biggest pieces in the Computer History Museum’s collection belong to the Semi-Automatic Ground Environment (SAGE) system, developed for the U.S. Air Force. SAGE, explains senior curator Dag Spicer, was really a network of 23 Costco-size warehouses located throughout the United States and Canada. The system stored flight information for all authorized commercial and military flights and flagged any “unknowns” spotted by radar, in an attempt to protect the United States from Soviet bombers. “It probably cost more than the Manhattan Project, and yet very few people know about it,” Spicer says. “At one point, something like three-quarters of all programmers in the country were working on SAGE, so it trained a whole generation.”

Arm Waving: From the Norwegian for “snake,” the Orm was an early attempt at a computer-controlled robotic arm. Created in 1969 by Stanford engineers Victor Scheinman and Larry Leifer, the arm could extend by inflating several of 28 air sacs sandwiched between seven metal disks. Despite its elegant design, accuracy was never its forte. Scheinman would go on to produce the Stanford Electric Arm, which was capable of building a Ford Model T water pump. Manufacturers also consulted him on many of the key industrial robot designs of the 1970s and ’80s, Spicer says.

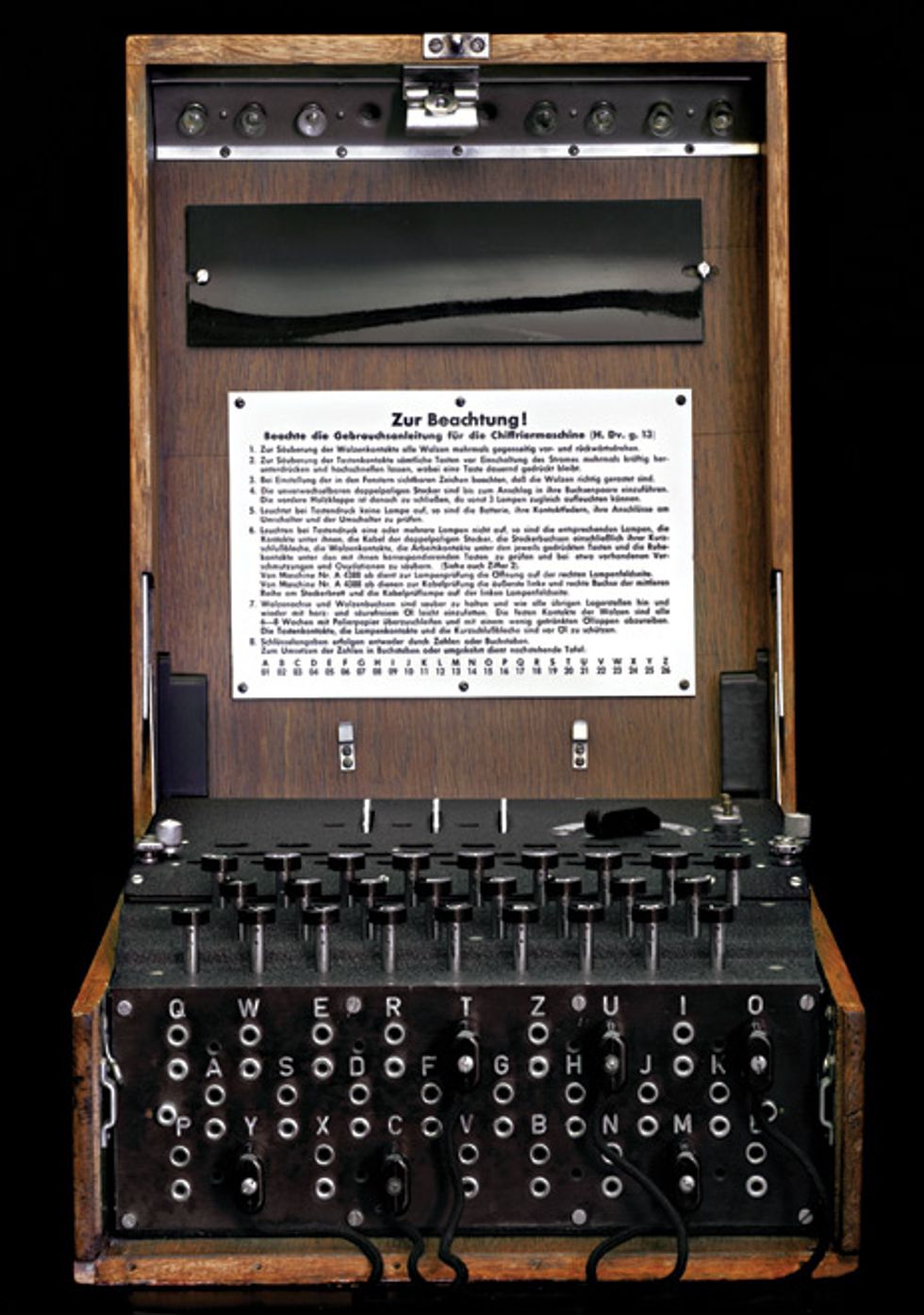

Code Maker: One of Spicer’s favorite artifacts is this World War II German enciphering machine, called the Enigma. After the operator turned a few wheels above the machine’s keyboard and arranged wires on the device’s front, he would type a letter, say a T, and a light would blink above the corresponding letter, say an E, for the encrypted message. A recipient with another Enigma could then decipher the message by using an identical arrangement of the wires on his machine. Typing a letter, such as an E, in a seemingly nonsensical coded sentence would then reveal the sender’s true intent, a T.

“It doesn’t even count as a computer—it’s basically just a flashlight circuit,” Spicer says, “but this machine had an enormous significance for humanity.” Some historians believe that by cracking Enigma’s codes, the Allies may have shortened the war by as much as two years. “Gut-wrenching decisions,” Spicer says, “came out of this very simple, tiny little box.”
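The reciprocal behavior described above, where a T becomes an E and, under identical settings, an E becomes a T again, can be illustrated with a toy model. The sketch below is not a faithful Enigma: it assumes a single scrambling wheel and a simple pairwise reflector, leaves out the front-panel wiring entirely, and uses illustrative wiring tables. The names `toy_enigma`, `ROTOR`, and `REFLECTOR` are hypothetical.

```python
import string

ALPHABET = string.ascii_uppercase

# Illustrative wiring only (an assumption, not the museum machine's setup):
# one scrambling wheel, plus a reflector that swaps letters in fixed pairs.
ROTOR = "EKMFLGDQVZNTOWYHXUSPAIBRCJ"
REFLECTOR = {a: ALPHABET[(i + 13) % 26] for i, a in enumerate(ALPHABET)}


def toy_enigma(text, start=0):
    """Encipher or decipher uppercase text with a one-wheel toy machine."""
    out = []
    pos = start
    for ch in text:
        if ch not in ALPHABET:
            out.append(ch)  # pass spaces and punctuation through unchanged
            continue
        pos = (pos + 1) % 26  # the wheel advances on every key press
        # Forward through the wheel at its current position.
        c = ROTOR[(ALPHABET.index(ch) + pos) % 26]
        # The reflector swaps the letter with its fixed partner.
        c = REFLECTOR[c]
        # Back through the wheel in the reverse direction.
        out.append(ALPHABET[(ROTOR.index(c) - pos) % 26])
    return "".join(out)


message = "ATTACK AT DAWN"
secret = toy_enigma(message, start=5)
print(secret)
print(toy_enigma(secret, start=5))  # same settings recover the message
```

Because the reflector only ever swaps pairs, running the coded text through a machine with the same starting position undoes the encipherment, and no letter is ever enciphered as itself.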

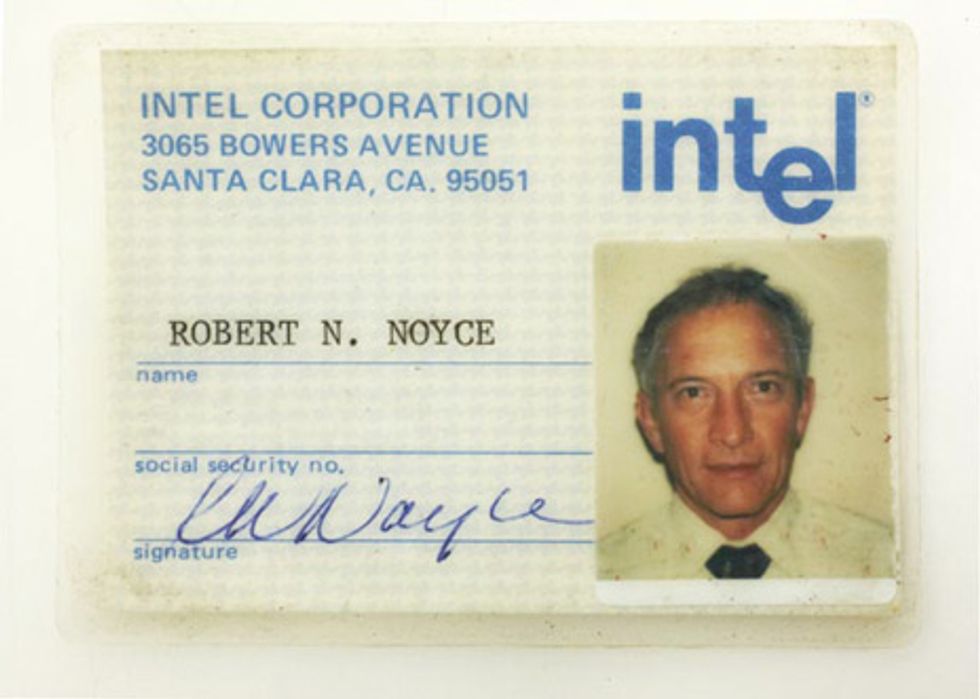

The Mayor’s ID: This 1980s Intel Corp. ID card shows the smiling mug of Robert Noyce, who cofounded the company in 1968. Known as the “Mayor of Silicon Valley,” Noyce devised one of the first integrated circuits while at Fairchild Semiconductor in 1959. Historians credit Texas Instruments engineer Jack Kilby as the microchip’s coinventor because he, too, combined discrete circuit elements into a single piece of semiconductor at about the same time.

When he wasn’t designing silicon devices or starting leading chip companies, Noyce enjoyed flying planes and singing madrigals.

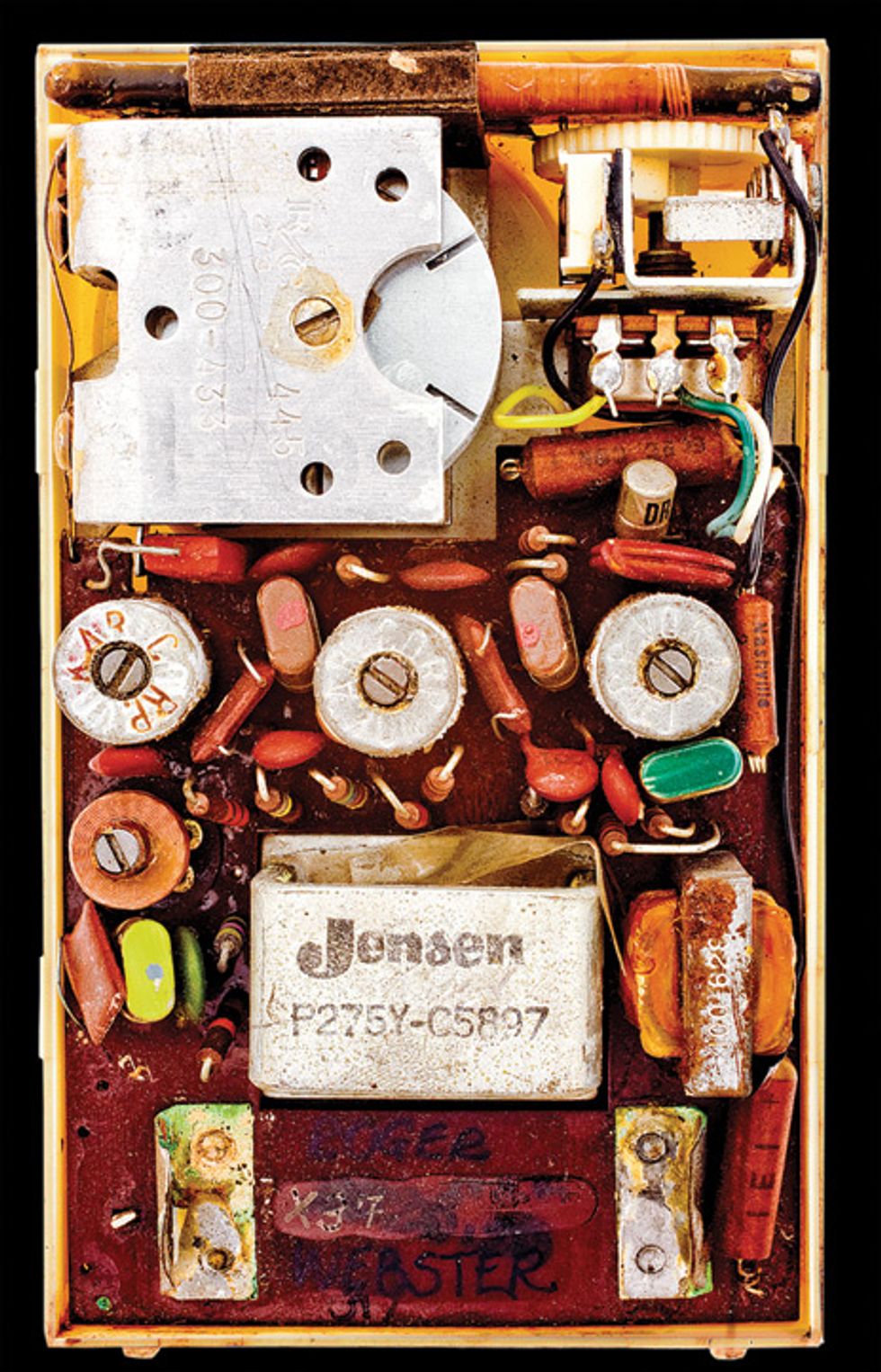

Transistor Transmissions: In October 1954, this radio helped to bring the word transistor into popular parlance. Texas Instruments’ Regency Model TR-1 radio was the first to forgo vacuum tubes, replacing them with germanium transistors.

Previously, manufacturers had made transistors by tediously assembling tiny semiconducting bars, but TI developed a method for mass-producing them in a furnace. The company sold around 100 000 of the pocket radios, but Spicer says they’re now hard to come by. “They didn’t cost very much,” he says, “and they were kind of throwaway things.” He points out the slight rusting inside the museum’s radio, noting the device’s popularity among beachgoers.

Squirrel Bot: Looking very unlike its mammalian counterpart, Squee, a 1940s robot squirrel, employed two phototube light sensors, contact switches, and three motors to find and hunt “nuts”: golf and tennis balls. Squee’s creator, computer scientist Edmund C. Berkeley, might have more accurately dubbed the device a robotic raccoon, because it was clearly nocturnal, requiring very dark rooms to hunt for its spherical quarry. Even then, after following a flashlight’s beam, it found its target only about 75 percent of the time. Spicer says that it was very early for a robot to use such a feedback-control system and that the machine certainly excited the public’s imagination, but “there’s not much to it, in terms of circuitry.”

Berkeley also designed Simon, an early personal computer, which he described in his 1949 book Giant Brains or Machines That Think, one of the first books about electronic computers for a general audience.

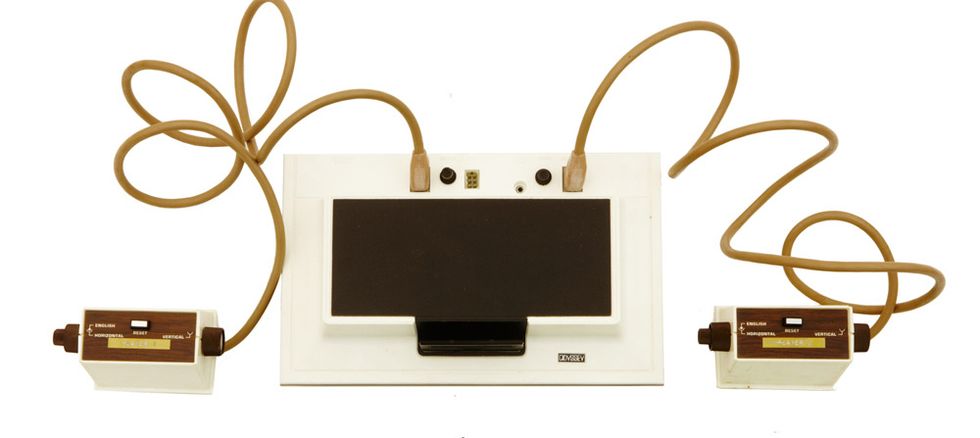

Player No. 1: Many claim that the 1972 Magnavox Odyssey was the first video-game console, but Spicer prefers to call it “pioneering.” “I don’t usually like to say that anything is the first,” he says, “because it’s usually wrong.” The Odyssey’s creator, engineer Ralph Baer, started work on a prototype called the Brown Box in 1966.

Unlike today’s gaming systems, the Odyssey had no microprocessor. All the analog components needed to display two players, a ball, and a wall were hardwired into the system. A set of 12 printed circuit boards inserted into the machine’s front could change such characteristics as the width and location of these on-screen objects. But that was only part of the video game’s graphics capabilities: Complementary color transparencies fitted on a television screen could turn an otherwise dreary black-and-white game into a colorful tennis match or haunted-house adventure. Sound, however, was limited to the players’ own exclamations.

Better Than Beer: Two weeks after designer Allan Alcorn installed the 1972 video game Pong in Andy Capp’s bar in Sunnyvale, Calif., it stopped functioning. Nothing had gone awry with the completely analog electronics inside the table-tennis game. Too many quarters in the machine’s coin acceptor were to blame. The easily fixed problem was a sign of the game’s popularity and Atari’s financial success to come.

Remembering Vacuum Tubes: Isolated gates, called eyelets, stored charge in this Selectron vacuum-tube memory, which Jan Rajchman started developing in 1946 while at RCA Laboratories. The 1953 version, shown here, held 256 bits—a far cry from the device’s original design goal of 4096 bits.

The only machine ever to use the expensive Selectron memory was the Johnniac, or John von Neumann Numerical Integrator and Automatic Computer, which the Rand Corp. built in 1953. Spicer says that the custom-made computer, which operated continuously for 13 years, only narrowly avoided the scrap heap before finding a home at the museum. “It was sitting in a parking lot…and someone who had worked on it recognized it and rescued it. It was pretty dramatic,” Spicer says. “This is an irreplaceable, priceless computer—it was like finding a Fabergé egg in your recycle bin.”

Slow and Shakey: While many previous robots required users to spell out every instruction, Shakey the robot could parse and execute high-level commands, such as “push the block off the platform.” Developed by computer scientist Charles Rosen and his team at Stanford Research Institute International, Shakey was programmed in Fortran and Lisp. In 1966, the robot’s SDS-940 computer had 64 000 24-bit words of memory. By 1969, upgrades allowed Shakey’s control computer to store 300 000 36-bit words’ worth of programming.
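To put Shakey’s memory figures in more familiar units, the word counts can be converted to bits and bytes. This is a rough sketch that takes the quoted numbers at face value (treating “64 000” and “300 000” as exact counts, which is an assumption); the helper name `memory_size` is hypothetical.

```python
def memory_size(words, bits_per_word):
    """Return (total bits, total 8-bit bytes) for a word-addressed memory."""
    bits = words * bits_per_word
    return bits, bits // 8


# Figures as quoted in the text; treated as exact counts for illustration.
bits_1966, bytes_1966 = memory_size(64_000, 24)
bits_1969, bytes_1969 = memory_size(300_000, 36)

print(f"1966: {bits_1966:,} bits, about {bytes_1966 / 1000:.0f} kilobytes")
print(f"1969: {bits_1969:,} bits, about {bytes_1969 / 1_000_000:.2f} megabytes")
print(f"Growth: roughly {bits_1969 / bits_1966:.1f} times")
```

By this estimate, the upgrades took Shakey from under 200 kilobytes to a little more than a megabyte, an increase of about seven times.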

A New Face for Education: The orange glow from these 1970s plasma displays welcomed students who used the University of Illinois’s networked teaching terminals. For more than a decade, the Programmed Logic for Automated Teaching Operations (PLATO) system provided users with basic instruction in a range of subjects—including genetics, the operation of nuclear power plants, and aircraft piloting—before microcomputers finally replaced the system. The displays’ ability to store images without external memory made them especially attractive.

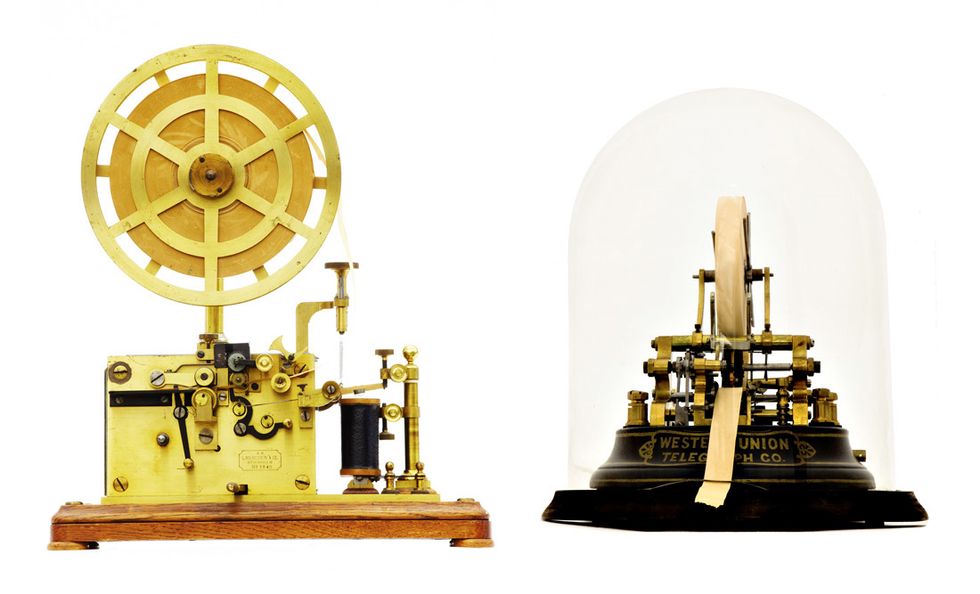

A Parade of Tickers: Many machines existed for transforming the telegraph’s dots and dashes into a readable printout. An 1890s telegraph receiver manufactured by the Swedish firm L.M. Ericsson and Co. [left] converted Morse code into indentations on paper tape as it unwound from an ornate wheel. An 1870s Western Union Telegraph Co. stock ticker [right] printed selling prices, at one character per second, live from the floor of the stock exchange. The sound that such devices produced while printing inspired a descriptive moniker for both the machines and the “ticker” tape they unfurled.

Storage Stack: Introduced in 1956, IBM’s 305 Random Access Memory Accounting System, or RAMAC, included the world’s first hard disk drive. Fifty 60-centimeter disks spun at 1200 rotations per minute and could hold 5 million characters of information—the equivalent of 62 500 punch cards. Reminiscent of a jukebox, the drive had a pneumatic “access mechanism” to select a particular disk for recording at up to 40 bits per centimeter. By 1958, IBM offered a second disk stack for additional storage in each system, complete with a second access arm.

The 305 RAMAC was one of IBM’s last vacuum-tube computers. The company produced more than 1000 of the machines.
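The RAMAC figures quoted above can be cross-checked with a little arithmetic. The sketch below uses only numbers from the text; the function name `ramac_figures` is hypothetical, and the even split of capacity across the 50 disks is an assumption made for illustration.

```python
# Figures quoted in the text.
CAPACITY_CHARS = 5_000_000
PUNCH_CARD_EQUIVALENT = 62_500
DISK_COUNT = 50
ROTATIONS_PER_MINUTE = 1200


def ramac_figures():
    """Derive per-card, per-disk, and per-rotation numbers from the text."""
    chars_per_card = CAPACITY_CHARS // PUNCH_CARD_EQUIVALENT
    # Assumes capacity is spread evenly across all disks.
    chars_per_disk = CAPACITY_CHARS // DISK_COUNT
    ms_per_rotation = 60_000 / ROTATIONS_PER_MINUTE
    return chars_per_card, chars_per_disk, ms_per_rotation


per_card, per_disk, rotation_ms = ramac_figures()
print(f"{per_card} characters per punch card")
print(f"{per_disk:,} characters per disk")
print(f"{rotation_ms:.0f} milliseconds per rotation")
```

The 80 characters per card matches the standard 80-column punch card, so the comparison in the text is consistent.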

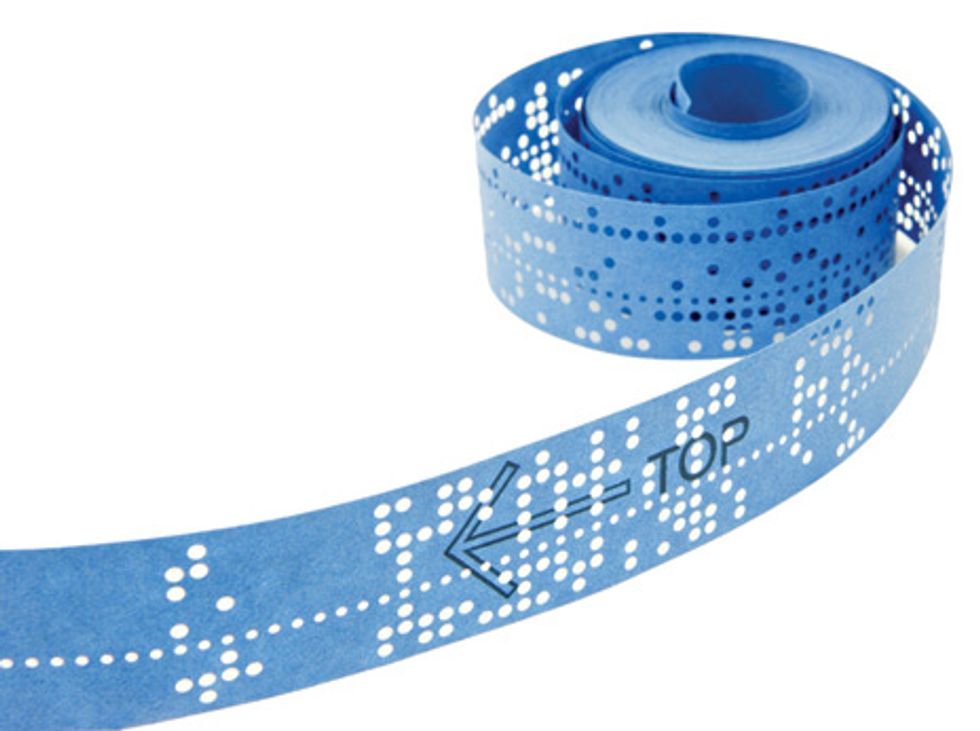

Before Windows: Inspired by a Popular Electronics cover story on the Micro Instrumentation and Telemetry Systems (MITS) Altair 8800—a do-it-yourself personal microcomputer—Honeywell programmer Paul Allen and Harvard sophomore Bill Gates set out to write software for the machine. After the pair endured several all-day programming sessions, Allen completed the BASIC software while flying to MITS headquarters in Albuquerque. They dubbed the partnership they had formed “Micro-Soft.” The 1975 Altair BASIC Interpreter, on the source tape shown above, would prove a big seller into the early 1980s and a popular piracy target of hobbyists.

Peripherals: The museum’s collection includes a wide range of pointing devices. Researchers at the Stanford Linear Accelerator Center used this 1974 prototype joystick [left] to trace particle-track images from their experiments for digitization and later analysis. The 2003 Hewlett-Packard Spaceball [right] allows users to orient objects in three dimensions by gripping and rotating the gray ball.

Proto-Roomba: With its 12-inch television for a face, more than mothers could love Hubot, the 1981 home companion robot. Weighing 40 kilograms and standing a little more than a meter tall, the robotic servant included an AM/FM stereo cassette player, a sonic obstacle-avoidance system, a 5.25-inch floppy disk drive, and an 8-bit, 4-megahertz CPU. A removable serving tray and vacuum cleaner attachment and a built-in Atari video-game system provided tools for both work and leisure. Developed by Hubotics in Carlsbad, Calif., the robot responded to commands input from a 64-key detachable keyboard and could use its voice synthesizer to talk back with a 1200-word vocabulary.

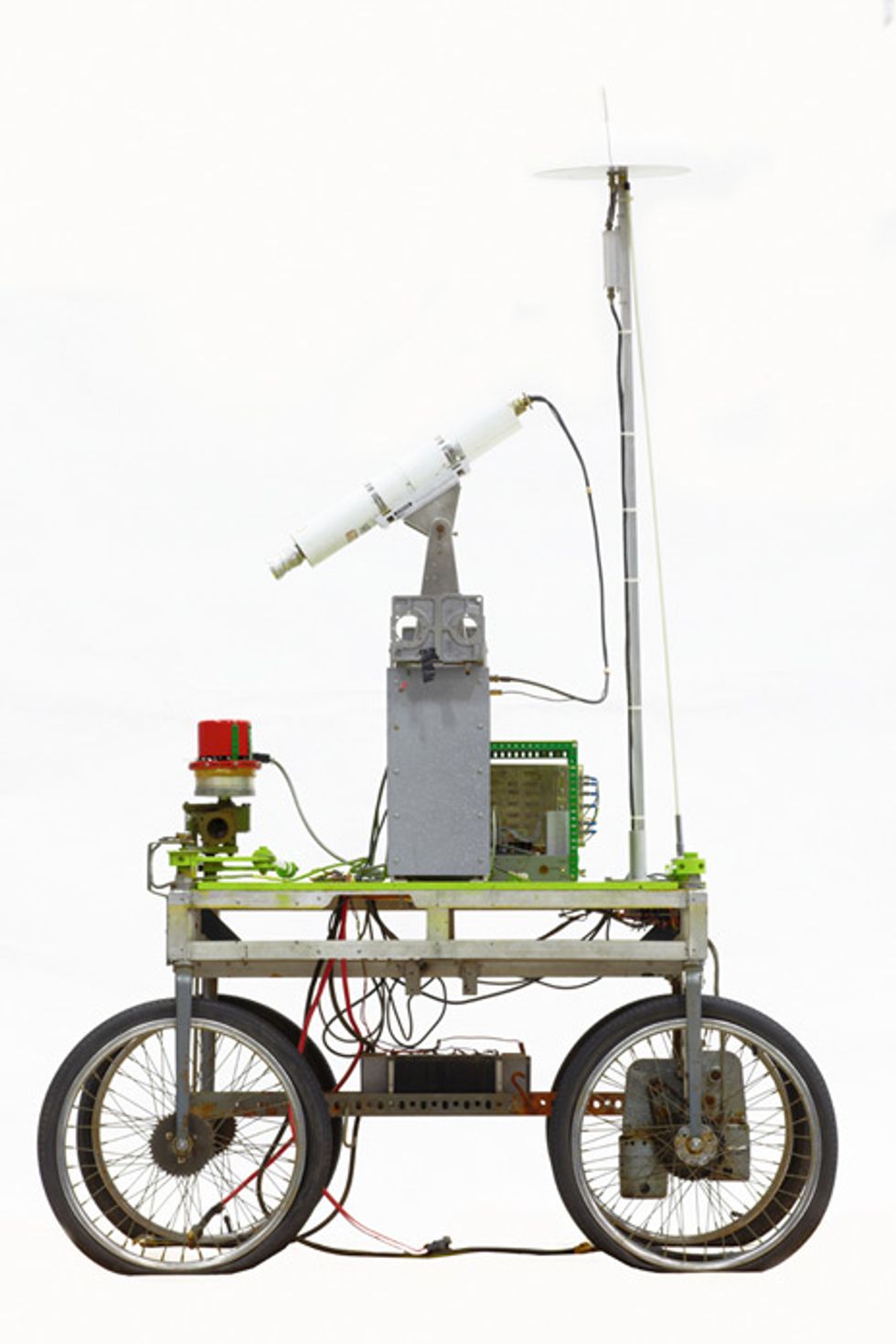

Mars Rover’s Grandmother: In 1961, Stanford mechanical engineering doctoral student James L. Adams created the Stanford Cart, a remotely controlled steerable rover with four bicycle wheels and a magnetic tape loop used to simulate radio delays. For his dissertation, Adams showed that with the communication delay that a rover might experience on the moon, a remote operator wouldn’t be able to steer a machine moving faster than 0.3 kilometer per hour. From 1964 to 1971, the cart received an overhaul at the hands of Stanford Artificial Intelligence Lab researchers, giving it the capability to automatically follow a high-contrast white line at around 1.3 km/h. Then, in the 1970s, the cart received a final makeover, gaining stereoscopic vision from a swiveling television camera for obstacle avoidance.

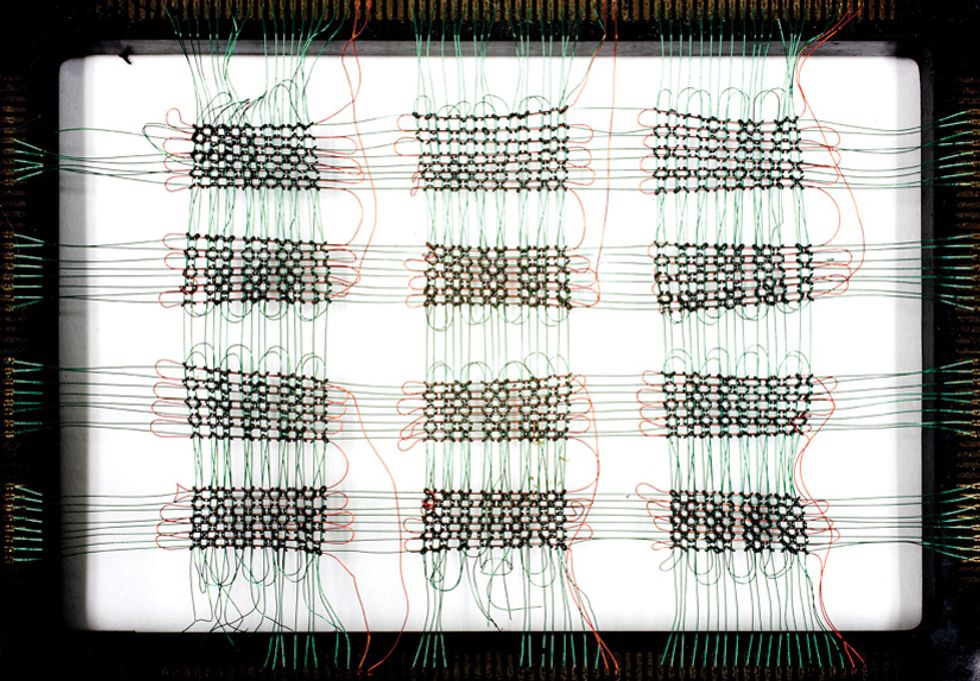

Ring Recordings: This 1965 IBM artifact is core memory, which recorded bits as the orientation of the magnetization in tiny ferromagnetic rings. The computer read and wrote bits using the wires attached to each ring in the grid. The example shown here contains 1536 magnetic memory cores. Even today, in the age of semiconductor memory, some people refer to a file that holds the working memory’s contents after a computer crash as a “core dump.”

About the Author

Joseph Calamia is a freelance writer in New York City. The chats he had with the staff of the Computer History Museum while writing “Bits of History” inspired him to dust off his own obsolete gadgets: the 1980s Apple IIGS and the original Nintendo Entertainment System. Neither worked. Calamia holds a master’s in science writing from MIT and has also written for Discover.

To Probe Further

For more about photographer Mark Richards, see the Back Story, “Admiring the Obsolete.” Richards’s previous collaboration with the Computer History Museum resulted in the 2007 book Core Memory: A Visual Survey of Vintage Computers (published by Chronicle Books, San Francisco). This slide show features nine images from the book.

Several years ago a team of retired engineers restored a vintage IBM 1401 mainframe computer for the Computer History Museum. Their efforts were captured in the November 2009 article and slide show “Rebuilding the IBM 1401.” IBM’s online tribute to its 1401 looks at the machine’s technical breakthroughs and cultural impact.

For more on history-making microchips, see IEEE Spectrum’s special report “25 Microchips That Shook the World.”