With the DRC Finals kicking off this week, competing teams have been practicing hard to get their robots ready for competition. A few weeks ago, we visited Team TROOPER at Lockheed Martin Advanced Technology Laboratories (or more accurately, a nameless and windowless building in an office park somewhere near Philadelphia) to see how they’ve been preparing for the DRC Finals, and what we came back with should give you a good sense of what to expect.

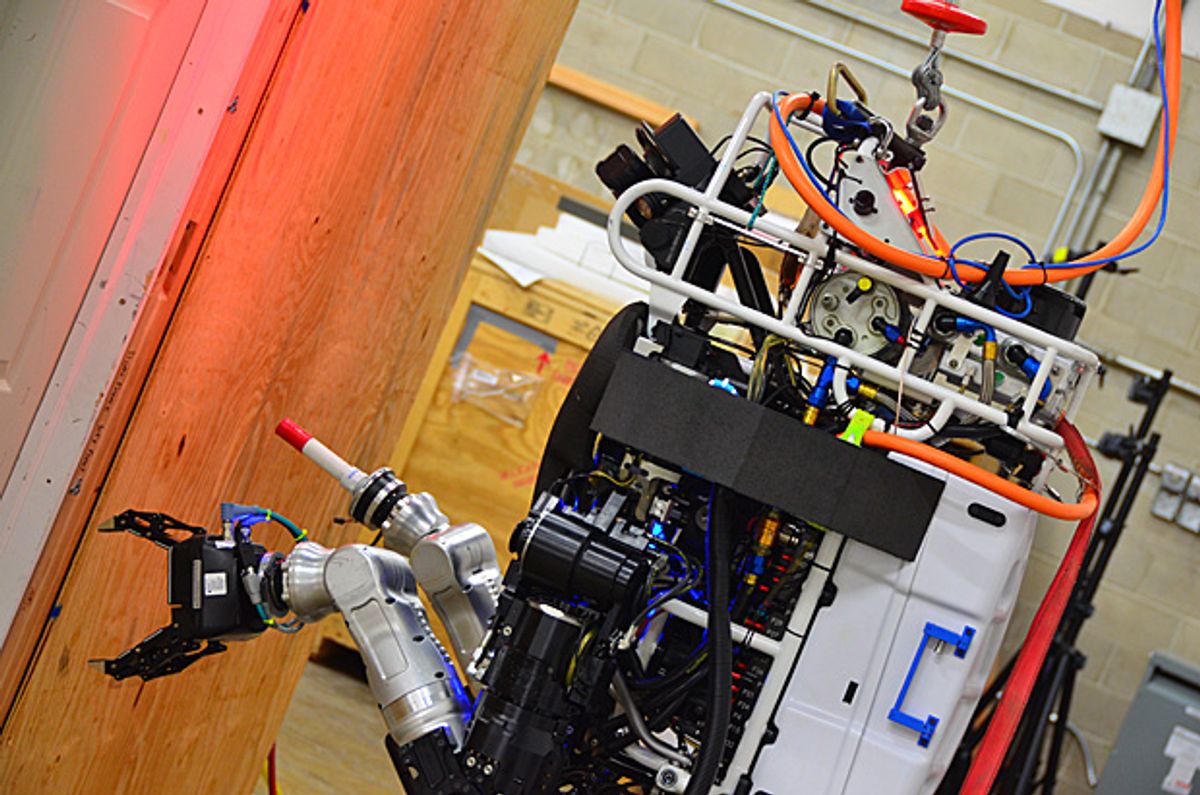

When we arrived at Lockheed Martin to do this interview, Leo (named after Leonardo da Vinci, of course) was undergoing some minor surgery. A tech from Boston Dynamics was working on an arm joint controller to try to resolve some fine manipulation problems, so even at this late stage, hardware tweaks (and repairs) are going on.

In terms of software (which is really the emphasis of the DRC Finals), one of the techniques that Lockheed has been focusing on is letting a human operator interface with and control Leo at several different levels of autonomy. There’s always some low-level autonomy running to keep the robot from falling over while it’s standing still, for example, but in addition to that, a human stays in the loop almost all the time. At a minimum, a human will be monitoring the progress of the robot as it executes autonomously generated plans, but there are lots of other options, too:

- At a high level, to get the robot to pick up (for example) a cutting tool and then turn it on, Lockheed can send one command that says “pick up the cutting tool and turn it on,” and Leo will autonomously plan and execute that task once a human has approved it.

- On a lower level, if the cutting tool is unfamiliar to Leo (if the robot didn’t have a model of it beforehand), the human can still identify the object as a tool, and once the robot knows that, it can then track the tool and autonomously make a plan to pick it up, turn it on, and use it.

- The lowest level of interaction comes in if, say, Leo needs to use a totally different kind of tool. In this case, a human can plan arm and finger trajectories manually, essentially teleoperating the robot. Doing this requires a lot of data to be streamed back and forth to the robot, which isn’t ideal, but it’s an option for unfamiliar or otherwise very complex situations.

In general, Lockheed’s goal is to use as many high-level autonomy behaviors as possible, since that’s the fastest and most efficient way to complete the DRC tasks, but if they need to, they can always take more direct control. It’s versatility like this that’s going to be very important when robots like Leo enter real disaster areas that may be full of unexpected things, or things that are particularly hard for Leo’s sensors to identify but that a human in the loop could compensate for.
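
As a rough illustration of the three levels described above, here is a minimal Python sketch of how an operator interface might fall back from high-level commands to teleoperation. This is not Lockheed's software: the names `ControlLevel` and `choose_control_level`, and the two boolean inputs, are hypothetical and exist only to make the preference order concrete.

```python
from enum import Enum


class ControlLevel(Enum):
    """Hypothetical labels for the three interaction levels in the article."""
    TASK_COMMAND = "task_command"          # one command; robot plans, human approves
    OPERATOR_LABELED = "operator_labeled"  # human identifies the object; robot plans
    TELEOPERATION = "teleoperation"        # human plans arm and finger trajectories


def choose_control_level(robot_has_model: bool,
                         operator_can_label_as_tool: bool) -> ControlLevel:
    """Pick the most autonomous level that the situation allows.

    Assumption: the decision depends only on whether the robot already has
    a model of the object and whether a human can identify it as a tool.
    """
    if robot_has_model:
        return ControlLevel.TASK_COMMAND
    if operator_can_label_as_tool:
        return ControlLevel.OPERATOR_LABELED
    # Last resort: needs the most data streamed to and from the robot.
    return ControlLevel.TELEOPERATION


if __name__ == "__main__":
    print(choose_control_level(True, True).value)
    print(choose_control_level(False, True).value)
    print(choose_control_level(False, False).value)
```

The ordering of the checks mirrors the stated goal: try the highest level of autonomy first, and drop to more direct control only when that isn't possible.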

As of a few weeks ago, Lockheed was planning to attempt the driving task, and it sounds like they’re also planning to attempt getting out of the vehicle without any human help, which (from what we’ve heard) is likely to be the most difficult task of the entire Finals competition. Leo is also better at moving over terrain than at moving debris, so they’ll be taking the terrain route during the indoor tasks. We’ll have a bunch more on the tasks on Thursday.

As Dr. Todd Danko explains in the video, falling over is still a serious concern. ATLAS is big and fragile, and most teams that we’ve talked to that are using the DARPA hardware don’t seem confident that (a) their robot will be able to survive a fall unscathed or (b) even if it does, they’ll be able to get up again autonomously. With every fall that requires human assistance knocking a minimum of 10 minutes off of the 60-minute timer (we’ll have more on that on Thursday), falling more than once per run will likely result in most teams running out of time to complete the course. And again, that’s only if their robot doesn’t smash into a thousand pieces on impact. Here’s the current strategy (assuming the robot is on a flat surface):

- Roll onto front

- Get into push-up position

- Move knees up into a sort of runner’s starting stance

- Through a dynamic motion, jerk upper body back and enable balancing controller while rising to a standing posture

We can’t wait to see that in practice, and we hope it actually works, since Lockheed says they haven’t (yet) been able to execute that on the physical robot: “There’s a lot to do, and not a lot of time left,” Dr. Danko told us. “But things are coming together.”
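
To put the fall penalty mentioned earlier into numbers, here is a small Python sketch of the time budget. It uses only the two figures given above (a 60-minute timer and a minimum of 10 minutes lost per fall that needs human assistance); the function name `max_minutes_remaining` is made up for illustration, and the result is an upper bound, since the actual penalty could be larger.

```python
RUN_MINUTES = 60                      # length of one run, per the article
MIN_PENALTY_PER_ASSISTED_FALL = 10    # minimum minutes lost per assisted fall


def max_minutes_remaining(assisted_falls: int) -> int:
    """Upper bound on the minutes left for tasks after the given number of falls."""
    if assisted_falls < 0:
        raise ValueError("assisted_falls must be non-negative")
    return max(0, RUN_MINUTES - MIN_PENALTY_PER_ASSISTED_FALL * assisted_falls)


if __name__ == "__main__":
    for falls in range(4):
        print(falls, "fall(s):", max_minutes_remaining(falls), "minutes at most")
```

Two assisted falls leave at most 40 of the 60 minutes for the tasks themselves, which is why a reliable autonomous get-up routine matters so much.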

[ Lockheed Martin ]

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.