Superconductivity’s First Century

In the 100 years since superconductivity was discovered, only one widespread application has emerged

Absolute zero, as the name suggests, is as cold as it gets.

In 1848, Lord Kelvin, the great British physicist, pegged it at –273 °C. He thought that bringing something to this temperature would freeze electrons in their tracks, making what is normally a conductor into the perfect resistor. Others believed that electrical resistance would diminish gradually as a conductor cooled, so that by the time it reached absolute zero, all vestiges of resistance would disappear. It turns out that everybody was wrong.

Heike Kamerlingh Onnes, professor of physics at Leiden University, in the Netherlands, found the answer early in 1911 by measuring the resistance of mercury that was frozen solid and chilled to within a few degrees of absolute zero. He found that the resistance declined in proportion to the temperature all the way down to 4.3 kelvins (4.3 °C above absolute zero), at which point it fell abruptly to zero. Onnes first thought he had a short circuit. It took him a while to realize that what he had was, in fact, the makings of a Nobel Prize—the discovery of superconductivity.

Since then, physicists have sought to understand the quantum-mechanical origins of superconductivity, and engineers have tried to make use of it. While scientific efforts in this area have been rewarded by no fewer than seven Nobel Prizes, all commercial applications of superconductivity have pretty much fizzled except one, which came out of the blue: magnetic resonance imaging (MRI).

Why did MRI alone pan out? Can we expect to see a second widespread application anytime soon? Without a crystal ball, it’s hard to know, of course, but reviewing the evolution of superconductivity’s first century offers some interesting clues about what we might expect for its second.

Onnes himself expected that superconductivity would be valuable because it would allow for the transmission of electrical power without a loss of energy in the wires. Those early hopes were, however, dashed by the observation that few materials became superconducting at temperatures above 4 K and that those materials stopped superconducting when more than a small current was passed through them. This is why, for the next five decades, most of the research in this field centered on finding materials that could remain superconducting while carrying appreciable amounts of current. But that was not the only requirement for practical devices. The people working on them also needed to find superconducting materials that weren’t too expensive and that could be drawn into thin, reasonably strong wires.

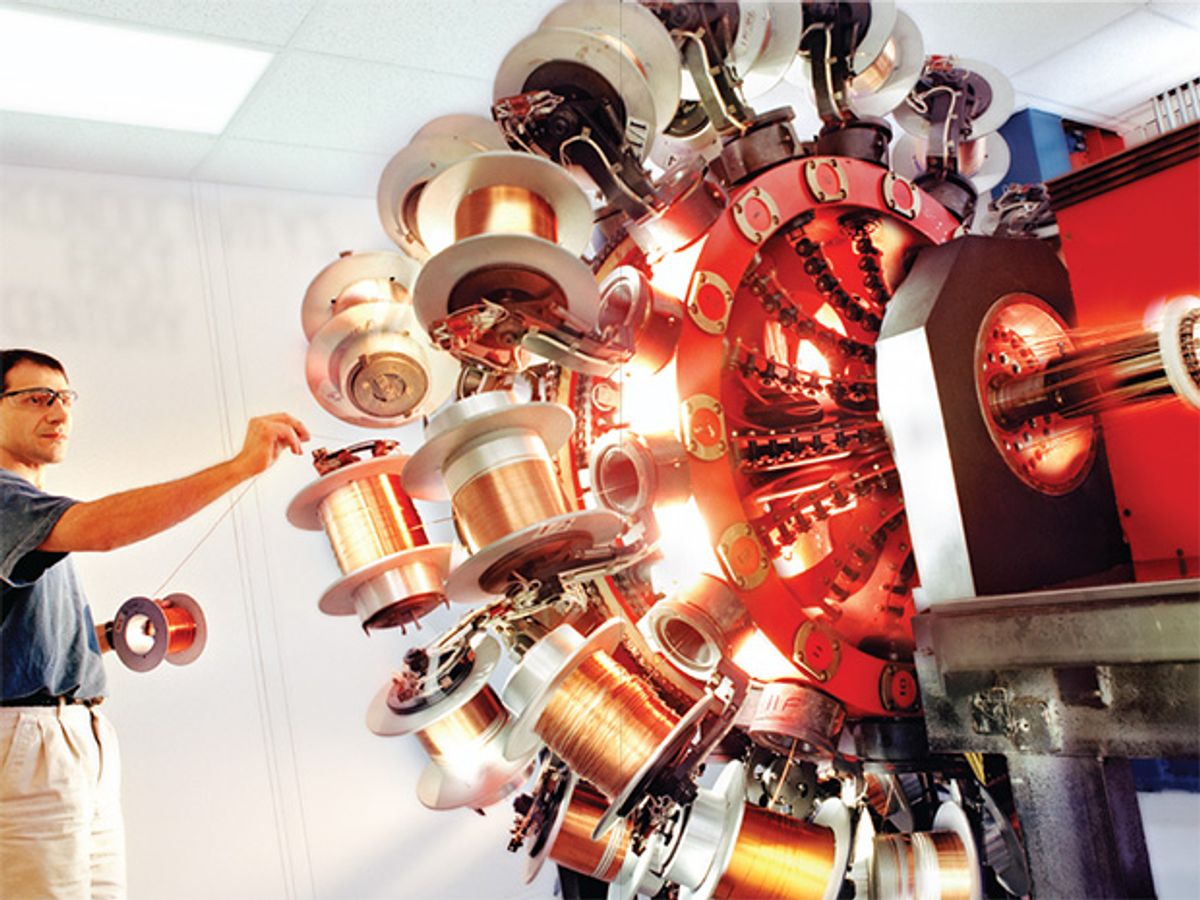

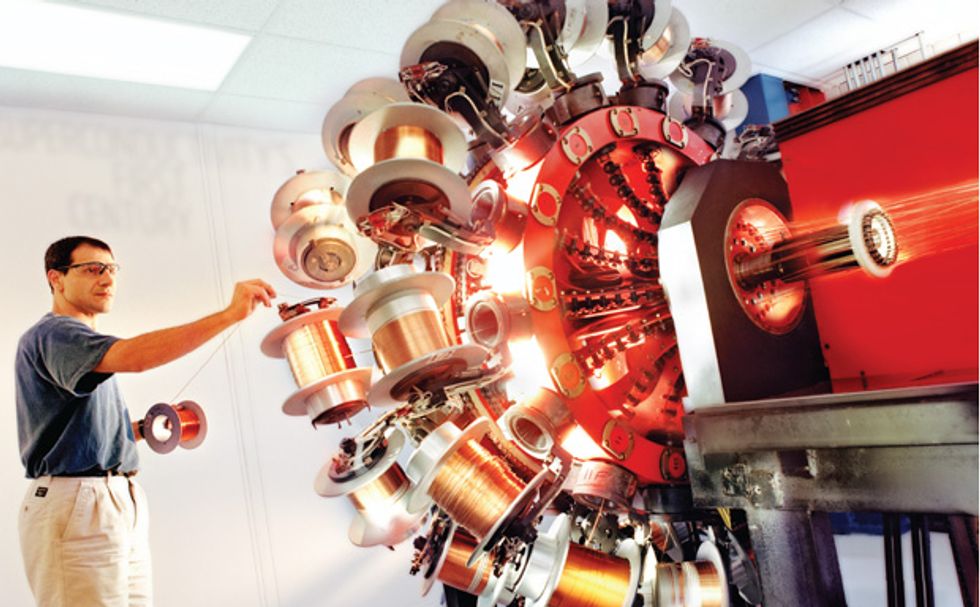

In 1962 researchers at Westinghouse Research Laboratories, in Pennsylvania, developed the first commercial superconducting wire, an alloy of niobium and titanium. Soon after, other researchers, at the Rutherford Appleton Laboratory, in the United Kingdom, improved it by adding copper cladding. At the time, the most promising application appeared to be in the giant magnets physicists use for particle accelerators, as superconducting magnets were able to offer much higher magnetic fields than ones made from ordinary copper wire.

With this and other similar applications in mind, one of us (Abetti) and his fellow scientists and engineers at the General Electric Co. location in Schenectady, N.Y., succeeded within months in building the world’s first 10-tesla magnet using superconducting wire. Although a scientific and technical triumph, that magnet was a commercial failure. Development costs ran to more than US $200 000, well above the fixed-price contract of $75 000 that Bell Telephone Laboratories had paid GE for this magnet, which was to be used for basic research in materials science.

Around this time, engineers at GE and elsewhere demonstrated some other practical applications for superconductors, such as for the windings of large generators, motors, and transformers. But superconducting versions of such industrial machinery never caught on. The problem was that the existing equipment was technologically mature, having already achieved high electrical efficiencies. Indeed, motors, generators, and transformers were practically commodities, with reliability and low cost being what customers most cared about.

That seemingly left only one niche open: superconducting cables for power transmission in areas where overhead lines could not be used—over large bodies of water and in densely populated areas, for example. While the promised gains in efficiency were attractive, the need for expensive and unreliable cooling vessels for the cables made them a dicey proposition.

GE’s management considered the market for superconductivity’s one proven product—magnets—too small and uncertain. But one of the researchers at GE wouldn’t take no for an answer. In 1971, Carl Rosner created an independent spin-off, Intermagnetics General Corp., or IGC, in Latham, N.Y., which made and sold laboratory-size magnets and received government research-and-development grants. The new company was immediately profitable.

At about this time, Martin Wood, a senior research officer at the University of Oxford’s Clarendon Laboratory, and his wife, Audrey, also decided to try to turn superconductivity into a business. In addition to design and consulting work, their newly hatched company, Oxford Instruments, developed and marketed magnets for research purposes, building the first high-field superconducting magnet outside the United States in March of 1962. By 1970, Oxford Instruments had 95 employees.

The 1970s saw the emergence of a few other start-ups that used superconductivity for building such things as sensitive magnetometers. And various research efforts were spawned to explore other applications, including superconductive magnets for storing energy and for levitating high-speed trains. But GE, Philips, Siemens, Westinghouse, and other big players still showed little interest in superconductivity, which, in the view of the managements of these companies, seemed destined to remain a sideshow. They were, however, proved very wrong toward the end of the decade, when a stunning new use for superconducting magnets appeared on the scene: MRI.

MRI was an outgrowth of an analysis technique chemists had long been using called nuclear magnetic resonance (NMR), which itself has since created a significant market for superconducting magnets. There were hints as far back as the 1950s that the NMR signals emanating from different points within a magnet could be distinguished, but it wasn’t until the late 1970s that it became apparent that medically relevant images could be made this way.

The advent of medical imagers that required a person to be immersed in an intense magnetic field brought a swift change in the business calculus at GE, where managers suddenly smelled a billion-dollar market. They knew that the technical risk involved in making the superconducting magnets required for MRI was low—after all, GE’s spin-off IGC had already fabricated comparable products. They knew also that GE could take advantage of its long-established presence in the medical-imaging market, for which it had produced X-ray machines and, more recently, computerized axial tomography scanners. Also, by this time GE had a more entrepreneurial climate, which encouraged the company’s operating units to take risks.

This really was superconductivity’s golden moment, and GE seized it. In 1984 the company rolled out its first MRI system, and by the end of the decade, GE could boast an installed base of over 1000 imagers. Although it constructed its MRI magnets in-house, GE used niobium-titanium wire manufactured by IGC.

Meanwhile, IGC learned to build MRI magnets of its own, which it sold to GE’s competitors. And with a budget of only $5 million, IGC succeeded in building MRI scanners that were functionally equivalent to those GE, Hitachi, Philips, Siemens, and Toshiba were then selling. IGC, however, lacked the marketing clout and reputation within the health-care industry to compete with these multinational giants. So it fell back on its main business, manufacturing superconducting MRI magnets, which it sold primarily to Philips.

Although there were a few attempts at the time to find other commercial uses for superconductivity—in X-ray photolithography or for separating ore minerals, for example—MRI provided the only substantial market. It was around this time, though, that yet another scientific breakthrough put superconductivity back on everyone’s radar.

In 1986, Karl Alexander Müller and Johannes Georg Bednorz, researchers at IBM Research–Zurich, concocted a barium-lanthanum-copper oxide that displayed superconductive properties at 35 K. That’s 12 or so kelvins warmer than any other superconductive material known at the time. What made this discovery even more remarkable was that the material was a ceramic, and ceramics normally don’t conduct electricity. There had been hints of superconducting ceramics before, but until this time, none of them had shown much promise.

Müller and Bednorz’s work triggered a flurry of research around the world. And within a year scientists at the University of Alabama at Huntsville and the University of Houston found a similar ceramic compound that showed superconductivity at temperatures they could attain using liquid nitrogen. Before, all superconductors had required liquid helium—an expensive, hard-to-produce substance—for cooling. Liquid nitrogen, however, can be made from air without that much effort. So the new high-temperature superconductors, in principle, threw the door wide open for all sorts of practical uses, or at least they appeared to.

The discovery of high-temperature superconductors sparked tremendous publicity, which in retrospect was plainly hype. Newsweek called it a dream come true. The cover of Time magazine showed a futuristic automobile controlled by superconducting circuits. BusinessWeek declared on its cover, “Superconductors! More important than the light bulb and the transistor.” Many sober scientists and engineers shared this enthusiasm. Among them were Yet-Ming Chiang, David A. Rudman, John B. Vander Sande, and Gregory J. Yurek, the four MIT professors who founded American Superconductor Corp. during this time of feverish excitement over the new high-temperature materials.

Despite all the hoopla, managers at Oxford Instruments, one of the few companies with any real experience using superconductivity at that point, had a dim view of the prospects for the high-temperature ceramics. For the most part, they decided to stick to their former course: working to improve the company’s low-temperature niobium-titanium wire and making incremental improvements in its MRI magnets. Oxford Instruments put only a small effort into studying the new high-temperature superconductors.

The management at IGC, which at the time included one of us (Haldar), saw more promise in the new materials and worked hard to see how they could commercialize them for such things as electrical transmission cables, industrial-scale current limiters, energy-storage coils, motors, and generators. American Superconductor, which went public in 1991, did the same.

It took more than a decade, but IGC eventually developed a high-temperature superconducting wire and, in collaboration with Waukesha Electric Systems, in Wisconsin, built a transformer with it in 1998. In 2000, IGC and Southwire, of Carrollton, Ga., demonstrated a superconducting transmission cable. Soon after, Haldar and his IGC colleagues established a subsidiary, called IGC-SuperPower, to develop and market electrical devices based on high-temperature superconductivity.

In 2001, American Superconductor tested a superconducting cable for the transmission of electrical power at one of Detroit Edison’s substations. In 2006, SuperPower connected a 30-meter superconductive power cable to the grid near Albany, N.Y. American Superconductor carried out an even more impressive demonstration of this kind in 2008, when it threw the switch for a 600-meter-long superconducting power cable used by New York’s Long Island Power Authority, part of a program funded by the U.S. Department of Energy.

While all these projects were technically successful, they were merely government-sponsored demonstrations; electric utilities are hardly clamoring for such products. The only commercial initiative now slated to use superconducting cables is the proposed Tres Amigas Superstation in New Mexico, an enterprise aimed at tying the eastern, western, and Texas power grids together in one spot. Using superconducting cables would allow the station to transfer massive amounts of power, and because these lines can be relatively short, they wouldn’t be prohibitively expensive.

But Tres Amigas is an exception. For the most part, the electric power industry has shown a stunning lack of interest in superconductors, despite their many potential benefits over conventional copper and aluminum wire: three to five times as much capacity within a given conduit size, half the power losses, and no need for toxic or flammable insulating materials. With all those advantages, you might well wonder why this technology hasn’t taken the electric power industry by storm over the past two decades.

One reason (other than cost) may stem from the changing nature of electric utilities, which in many countries have lost their former monopoly status. These companies are by and large reluctant to make substantial investments in infrastructure, especially for projects that don’t promise a quick return [see “How the Free Market Rocked the Grid,” IEEE Spectrum, December 2010]. So the last thing many of them desire is to assume the risk of adopting anything as radical as superconductive cables, generators, or transformers.

It may be that superconductivity just needs time to mature. Plenty of technologies work that way. Perhaps the next generation of wind turbines will sport superconducting generators in their nacelles, an application that American Superconductor is working toward. A better bet in our view, though, is that superconductivity will remain limited to applications like MRI, where it’s very difficult to build something any other way.

What will those applications be? A ship that cuts through the waves using superconducting magnetic propulsion instead of propellers? Unlikely: Japanese engineers built such a vessel in 1991, and it’s long since been mothballed. An antigravity device that can make living creatures float? Probably not: The 2010 Nobel laureate Andre Geim demonstrated in 1997 that this could be done, and it hasn’t been put to any real use. A magnetically levitated train that can top 580 kilometers per hour? Japanese engineers built one in 2003, yet few rail systems are giving up on wheels. A supercooled microprocessor that can run at 500 gigahertz? Perhaps: IBM and Georgia Tech captured that speed record in 2007, but it would be hard to make such a setup practical.

We certainly don’t know what’s ahead. But we suspect that the next big thing for superconductivity, whatever it is, will, like MRI, take the world by surprise.

About the Authors

Pradeep Haldar and Pier Abetti together have almost a half century of experience with superconductivity. Haldar, an IEEE Senior Member, is a professor of nanoengineering at the University at Albany, State University of New York. Abetti, an IEEE Life Fellow and professor of enterprise management at Rensselaer Polytechnic Institute, once had Haldar as a student. Only after Abetti began lecturing about the commercialization of superconductivity did he learn that Haldar had been a director of technology in that very industry. “He made me get up and talk about the whole thing,” says Haldar.