Humanoid robots can sense the world around them, move their bodies, and interact with people in ways that are similar to the ways that real people interact. But a robot’s “human-ness” is (at least for now) all just a simulation. It’s a combination of clever software and, in some cases, hardware designed to make it easy for us to fool ourselves into thinking that some glorified box of circuits is even a little bit like a person. We’re very, very good at fooling ourselves like this, to the point where it starts to get a little weird.

Researchers from Stanford University will present a paper at the Annual Conference of the International Communication Association in Fukuoka, Japan, in June, with the title of “Touching a Mechanical Body: Tactile Contact With Intimate Parts of a Human-Shaped Robot is Physiologically Arousing.” The study shows that when a NAO robot asks humans to touch its butt, we get uncomfortable. This is weird because NAO doesn’t really have a butt in the traditional sense of the word, and even if it did, it’s just a robot, and on a very basic level it doesn’t care where you touch it. So what is going on here?

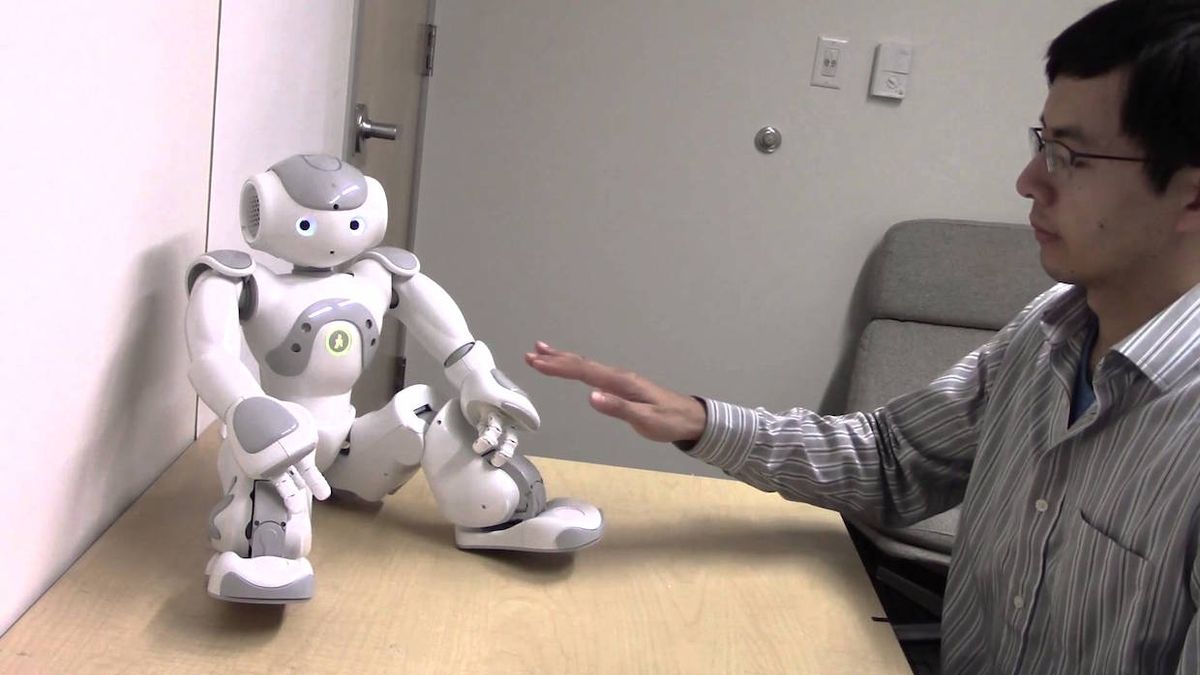

The study itself is fairly straightforward. Subjects (undergrads, of course) were put into a room alone with a NAO robot. They placed their non-dominant hand on a skin conductance sensor to measure their physiological arousal. We should clarify here that “physiological arousal” is a generalized term referring to attention, alertness, and awareness. Despite the fact that we’re talking about touching and arousal at the same time, sexual arousal is a specific subset that the study is (as far as we know) not addressing directly.

Anyway, once the subjects were all hooked up to the sensor, NAO introduced itself, and then asked the subjects to use their dominant hand (the one without the sensor on it) to either point to different parts of its body, or touch them. Each anatomical region was scored on its accessibility: how often, in general, people touch other people in those areas. For example, high accessibility areas include the hands, arms, forehead, and neck, while low accessibility areas include (unsurprisingly) inner thighs, breasts, buttocks, and (you guessed it) the genital area.

You will probably not be shocked to learn the results of this, as the researchers describe in their paper:

“Touching less accessible regions of the robot (e.g., buttocks and genitals) was more physiologically arousing than touching more accessible regions (e.g., hands and feet). No differences in physiological arousal were found when just pointing to those same anatomical regions.

Further evidence of participants’ sensitivity to touching low-accessible regions of a robot emerged in an analysis of response time, which was longer for participants who touched low accessible but not high-accessible areas.”

Okay, so people definitely get uncomfortable about touching these low-accessibility areas on a humanoid robot, and they hesitate before doing it. The researchers suggest that the cause of this may be a very primitive response to “human-ness” that overrides our brains telling us that it’s just a robot:

“Robots can elicit powerful social responses from people. These responses arise from an inherent tendency for people to treat media that are ‘close enough’ to being human like real people. These responses are not simply an act of playing along—they occur on a deeper physiological level. People are not inherently built to differentiate between technology and humans. Consequently, primitive responses in human physiology to cues like movement, language and social intent can be elicited by robots just as they would by real people.”

The question that this raises is just where, exactly, that “close enough” point is, so we asked Jamy Li, who conducted the study along with Wendy Ju and Byron Reeves, what he thought about it:

“The robot we used spoke with people, gestured and looked like a person, so it would be interesting to look at how different degrees of human likeness would affect how much people react to the robot. For a robot that appears to move of its own accord, I’d say that the robot should have a basic human-like form with a head, arms and feet; our study didn’t look at this so that is just my impression.”

It’s important to note that the study didn’t explicitly evaluate the reasons for increased physiological arousal when touching the robot, and there are all kinds of variables here that probably have some effect on how humans and robots interact. For example, the results would definitely be different with a robot of a different design, and they’d likely be different if the robot was interacting with the human in a different way. Having said that, this research does still suggest some things that robot designers could keep in mind, as Li described to us:

“Developers could make their robots more comfortable for users to interact with through touch by thinking about how robots should utilize social conventions. For example, people might feel more comfortable interacting with humanoid robots in the majority of social contexts where the touch buttons are primarily on its hands, arms and forehead as opposed to buttons that are on areas like its eyes, buttocks or throughout its body. Developers could also be aware that when those norms are violated (for example, a robot that has a humanoid form and that gives social cues like speech and gesture asks the person to touch it in an area that people don’t usually touch robots), people may feel uncomfortable about it, so it may be necessary to design around that.”

For a different perspective on this research, we spoke with Dr. Angelica Lim, a software engineer at Aldebaran Robotics (and IEEE Spectrum contributor). “I touch Pepper’s butt all the time,” Dr. Lim says, referring to the social humanoid robot built by Aldebaran and SoftBank. “It’s weird at first, but then you get used to it. That’s the mantra in general for robots. You just get used to it, because it’s not a human, it’s a robot.”

Dr. Lim also points out that context is a significant factor in the way people react to robots. “The researchers created a particular context in which the robot is touched. People grab NAO’s bum when they carry it like a baby, but when the robot is sitting up, shoulders back, staring at you, and talking, we put the robot’s IQ at a higher level, project maturity, and recreate the social constructs that we have between adult humans.”

As robots get more sophisticated and better able to mimic humans in both appearance and actions, this question of touch interaction is going to become increasingly relevant. It’ll also be important to better define the gradient towards human-ness that makes it somehow okay to touch the butt of a cellphone, but weird to touch the butt of a NAO.

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.