Over a decade ago, Stanford roboticists started experimenting with ways of using arrays of very small spines to help climbing robots grip rough surfaces. These microspine grippers have been used on all kinds of research robots since then, and recently, NASA has decided that microspines are the best way for spacecraft to grab onto asteroids.

Yesterday at the IEEE/RSJ International Conference on Intelligent Robots and Systems in South Korea, Shiquan Wang from Stanford presented a new microspine-based palm design for rock-climbing robots. These palms use microspines that can support four times the weight of previous designs, which will be enough to turn JPL’s RoboSimian DRC robot into a champion rock climber. And we’re not talking just scrambling up slopes: It’ll be able to scale vertical rock faces, and even clamber around overhangs.

Microspines work just like tiny little claws. They catch and hold onto rough surfaces, and while each spine is itty bitty and can’t hold much, if you use enough of them, you can support a lot of weight (or resist a lot of force). The previous generation of microspines, which includes the ones that NASA is using for its Asteroid Redirect Mission, is highly compliant, able to hook onto very rough surfaces by allowing each spine to find its own little spot to grab onto. The compliant design is very robust for lots of applications, but the compliant mechanism is bulky, and reduces the number of spines you can stuff into your gripper.

If your goal is to support as much weight as possible for applications like rock climbing, you want to have as many spines sticking into the surface and supporting weight as you can, and this is where Stanford’s new gripper design comes in: by removing almost all of the compliance, you can vastly increase your spine density. The overall percentage of spines that are engaged with the surface goes down, but since you have so many more spines, you still end up way, way ahead on how much load you can handle.
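To see why trading engagement for density can pay off, here is a rough back-of-the-envelope sketch in Python. The spine counts, engagement fractions, and per-spine load below are made-up placeholder values chosen only to illustrate the trade-off, not measurements from the Stanford work, and the model assumes (simplistically) that every engaged spine carries the same load.

```python
def total_capacity(num_spines, engaged_fraction, load_per_engaged_spine_n):
    """Toy model: total shear load (N) if every engaged spine carries the same load.

    All arguments are hypothetical illustration values, not data from the paper.
    """
    return num_spines * engaged_fraction * load_per_engaged_spine_n


# Hypothetical compliant gripper: fewer spines, but a larger share of them engage.
compliant_capacity = total_capacity(
    num_spines=100, engaged_fraction=0.5, load_per_engaged_spine_n=1.0
)

# Hypothetical dense gripper: far more spines, but a smaller share of them engage.
dense_capacity = total_capacity(
    num_spines=700, engaged_fraction=0.25, load_per_engaged_spine_n=1.0
)

print(f"compliant: {compliant_capacity:.0f} N, dense: {dense_capacity:.0f} N")
```

In this toy model, the dense design comes out ahead whenever its spine count grows by a larger factor than its engagement fraction shrinks.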

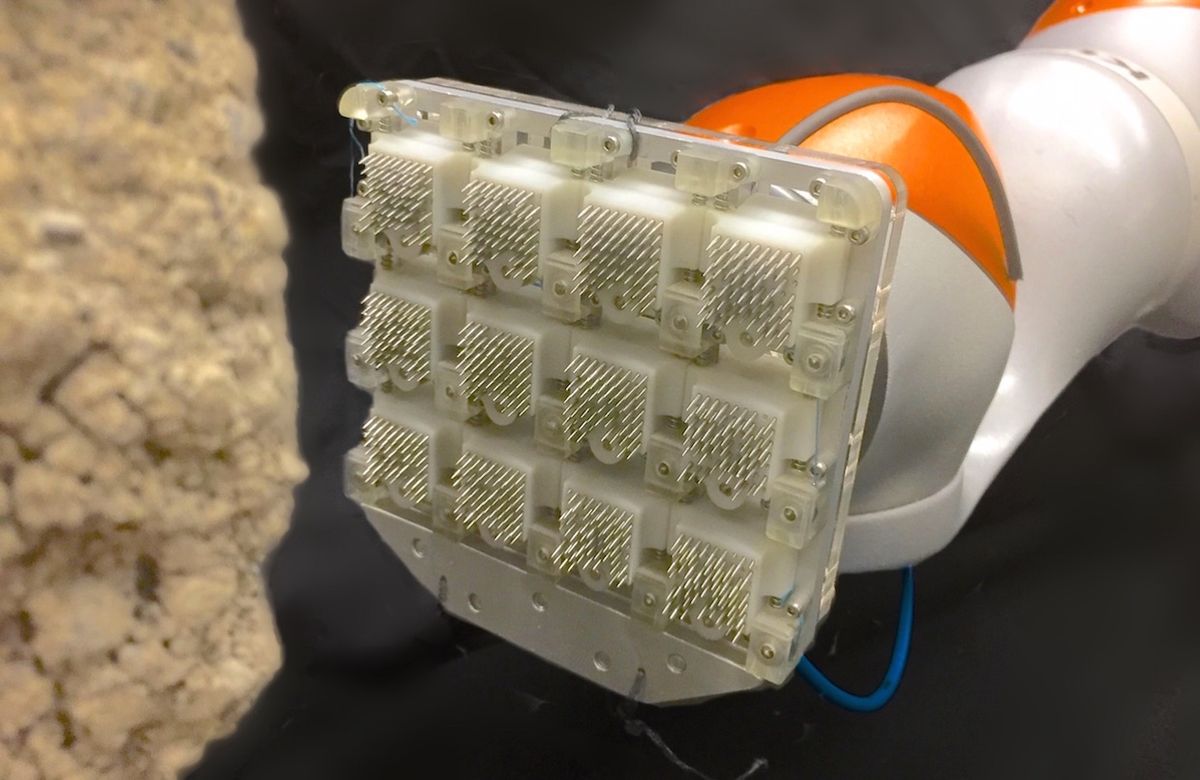

The design of these new spines is straightforward: each 15-mm steel spine sits in a 3D-printed sleeve, with a spring that pushes it down towards the surface it’s trying to grip. That single compliant axis helps the spines grip very rough surfaces, and 60 spines will fit into a single 18-mm x 18-mm “tile.” Twelve tiles together form this prototype palm, and each tile has a little bit of wiggle room to help it engage better and share the load more evenly. All of the spines are angled slightly towards the surface that the palm is gripping, meaning that they engage when a force is pulling on the palm, but lifting in the opposite direction causes the palm to easily detach.

The researchers tested the full palm on nine different surfaces and achieved up to 710 N of shear adhesion, which is over four times what previous designs managed. It doesn’t like surfaces that are super smooth or super rough, but for most rock-like surfaces (including concrete), it works great. Next, the palm design will expand to include compliant fingers and toes, each covered with microspine tiles, and they’ll see what JPL’s RoboSimian can do with them. Look for that to happen next year.
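For a sense of scale, the figures quoted above (60 spines per 18-mm tile, 12 tiles, 710 N peak shear) can be turned into a few derived numbers. The Python sketch below is just arithmetic on those figures. The per-spine value is an average over all spines, so it understates what each engaged spine actually carries, and the equivalent mass assumes standard Earth gravity.

```python
# Figures quoted in the article.
SPINES_PER_TILE = 60
TILE_SIDE_MM = 18.0
TILES_PER_PALM = 12
MAX_SHEAR_N = 710.0  # best result across the nine test surfaces

# Assumption: standard Earth gravity, to convert force into an equivalent mass.
G_M_PER_S2 = 9.81

total_spines = SPINES_PER_TILE * TILES_PER_PALM          # spines in the whole palm
tile_area_cm2 = (TILE_SIDE_MM / 10.0) ** 2               # one tile, in cm^2
spine_density_per_cm2 = SPINES_PER_TILE / tile_area_cm2  # spines per cm^2 of tile

# Average over ALL spines; only a fraction engage, so engaged spines carry more.
avg_load_per_spine_n = MAX_SHEAR_N / total_spines

# Mass whose weight on Earth equals the peak shear load.
equivalent_mass_kg = MAX_SHEAR_N / G_M_PER_S2

print(f"total spines:        {total_spines}")
print(f"spine density:       {spine_density_per_cm2:.1f} per cm^2")
print(f"avg load per spine:  {avg_load_per_spine_n:.2f} N")
print(f"equivalent mass:     {equivalent_mass_kg:.1f} kg")
```

That works out to 720 spines per palm, each averaging about 1 N at peak load, and a single palm holding roughly the weight of a 72-kg mass in shear.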

“A Palm for a Rock Climbing Robot Based on Dense Arrays of Micro-Spines,” by Shiquan Wang, Hao Jiang, and Mark R. Cutkosky from Stanford University, was presented yesterday at the 2016 IEEE/RSJ International Conference on Intelligent Robots and Systems in Daejeon, Korea.

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.