Cameras and audio equipment are getting better all the time, but mostly through brute force: more pixels, more sensors, and better post-processing. Mammalian eyes and ears beat them handily when it comes to efficiency and the ability to only focus on what’s interesting or important.

Neuromorphic engineers, who try to mimic the strengths of biological systems in manmade ones, have made big strides in recent years, especially with vision. Researchers have made machine-vision systems that only take pictures of moving objects, for example. Instead of taking many images at a steady, predetermined rate, these kinds of cameras monitor for changes in a scene and only record those. This strategy, called event-based sampling, saves a lot of energy and can also enable higher resolution.
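To make the idea concrete, here is a minimal Python sketch of event-based sampling, modeled loosely on the change-detecting pixels of silicon retinas. It assumes that each pixel compares its log brightness against the level at which it last reported, and emits an event only when the change crosses a fixed threshold. The threshold value, the function name `events_from_frames`, and the frame-by-frame loop are illustrative assumptions. Real event-camera pixels respond asynchronously rather than on a frame clock.

```python
import math
from typing import List, Sequence, Tuple

# Hypothetical contrast threshold (in log-intensity units); not from the article.
THRESHOLD = 0.15


def events_from_frames(
    frames: Sequence[Sequence[float]], threshold: float = THRESHOLD
) -> List[Tuple[int, int, int]]:
    """Turn a series of 1-D intensity readings into (time, pixel, polarity) events.

    Each pixel stores the log intensity at which it last fired. A new event is
    emitted only when the current log intensity differs from that stored level
    by at least `threshold`, so pixels viewing a static scene produce nothing.
    """
    reference = [math.log(v) for v in frames[0]]
    events: List[Tuple[int, int, int]] = []
    for t, frame in enumerate(frames[1:], start=1):
        for x, value in enumerate(frame):
            level = math.log(value)
            delta = level - reference[x]
            if abs(delta) >= threshold:
                # +1 means the pixel got brighter, -1 means it got darker.
                events.append((t, x, 1 if delta > 0 else -1))
                reference[x] = level  # re-arm the pixel at its new brightness
    return events


# A bright spot moving across an otherwise static 4-pixel scene.
frames = [
    [10.0, 10.0, 10.0, 10.0],
    [10.0, 50.0, 10.0, 10.0],
    [10.0, 10.0, 50.0, 10.0],
    [10.0, 10.0, 50.0, 10.0],
]
print(events_from_frames(frames))  # [(1, 1, 1), (2, 1, -1), (2, 2, 1)]
```

Of the twelve pixel readings after the first frame, only three produce events, all from pixels the moving spot touched. The static background generates no data at all, which is where the energy savings come from.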

One example is a silicon retina made by Tobi Delbrück of the Institute of Neuroinformatics in Zurich; it served as the eyes of a robotic soccer goalie. This design, made in 2007, has a 3-millisecond reaction time.

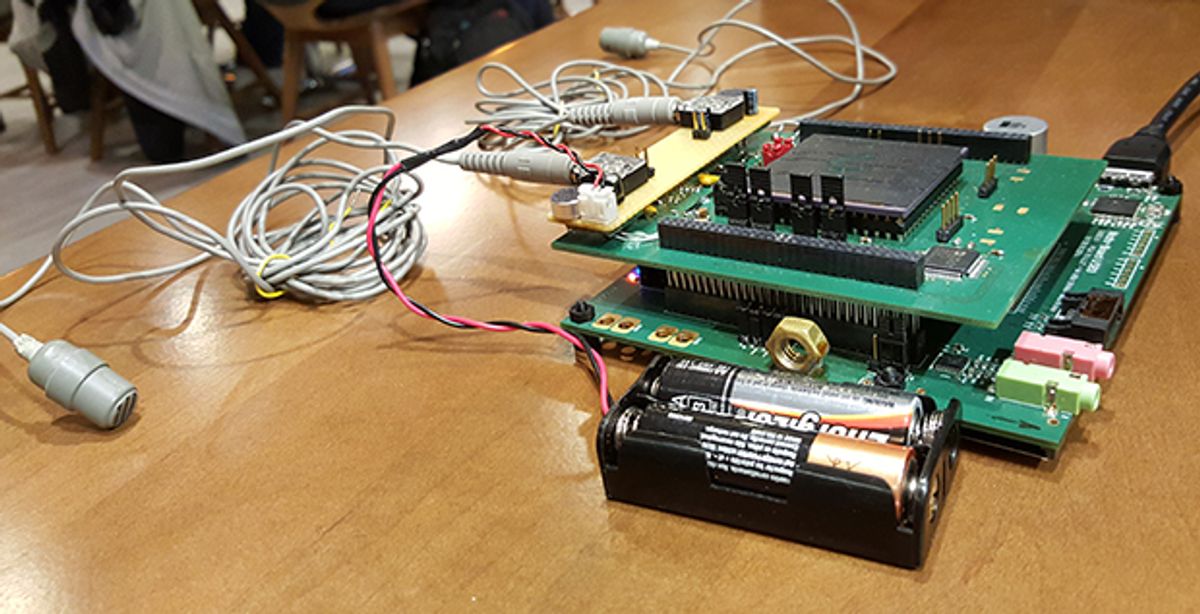

Last week, at the IEEE International Solid-State Circuits Conference in San Francisco, another group showed how this approach can also work for hearing. Shih-Chii Liu, co-leader of the Sensors Group at the Institute of Neuroinformatics, described a silicon cochlea that uses just 55 microwatts of power (three orders of magnitude less than previous versions of the system) to detect sound in a humanlike way.

The neuromorphic auditory system uses two “ears,” each of which can be moved independently of the other. The difference in the time at which a sound wave reaches each ear makes it possible to locate the sound’s origin, says Liu. Each silicon ear has 64 channels, each of which responds to a different frequency band, from low pitches to high. These channels mimic the cells in the human cochlea, which also respond to different frequencies (about a thousand in the real thing).
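The timing cue Liu describes is usually called the interaural time difference (ITD). The Python sketch below estimates it the textbook way, by cross-correlating the two ears’ signals, and then converts it to an angle with a simple far-field formula. This is a standard stand-in technique, not necessarily how the silicon cochlea computes it (the chip works with spikes rather than sampled waveforms). The 48-kilohertz sample rate, the 0.2-meter ear spacing, and the function names are assumptions for illustration.

```python
import math
from typing import Sequence

SPEED_OF_SOUND_M_S = 343.0  # speed of sound in air at roughly 20 degrees C


def estimate_itd_samples(left: Sequence[float], right: Sequence[float], max_lag: int) -> int:
    """Find the lag (in samples) at which the right signal best matches the left.

    A positive result means the right ear hears the sound later, i.e. the
    sound reached the left ear first.
    """
    n = len(left)
    best_lag, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        # Cross-correlation at this lag: sum of left[i] * right[i + lag].
        score = sum(left[i] * right[i + lag] for i in range(n) if 0 <= i + lag < len(right))
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


def azimuth_degrees(itd_seconds: float, ear_spacing_m: float) -> float:
    """Convert an interaural time difference to a source angle.

    Uses the far-field approximation ITD = d * sin(theta) / c; 0 degrees is
    straight ahead and positive angles point toward the left ear.
    """
    s = SPEED_OF_SOUND_M_S * itd_seconds / ear_spacing_m
    s = max(-1.0, min(1.0, s))  # guard against noise pushing |s| past 1
    return math.degrees(math.asin(s))


# Hypothetical setup: 48 kHz sampling, ears 0.2 m apart, and a click that
# reaches the right ear 3 samples after the left.
sample_rate = 48_000
left = [0.0] * 20
right = [0.0] * 20
left[5] = 1.0
right[8] = 1.0

lag = estimate_itd_samples(left, right, max_lag=10)
angle = azimuth_degrees(lag / sample_rate, ear_spacing_m=0.2)
print(lag, round(angle, 1))  # 3 6.2
```

A 3-sample delay at 48 kHz is 62.5 microseconds, which puts the source only about 6 degrees off center. Delays that small are why precise timing matters so much for localization.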

Liu connects the silicon cochlea to her laptop and shows what it’s recording on a graph of frequency over time. When we’re quiet, there’s no activity. When one of us speaks into the microphone, spikes appear in the 100-to-200-hertz range, while the other channels, which cover the rest of the 20-hertz-to-20-kilohertz span, stay silent.
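The article doesn’t say how the 64 channels divide up the audible range. The Python sketch below assumes they are spaced evenly on a logarithmic scale, much as the biological cochlea spreads frequencies roughly logarithmically along its length, and checks which channels a voice in the 100-to-200-hertz range would excite. The spacing rule and the function names are illustrative assumptions, not the chip’s documented design.

```python
from typing import List, Sequence


def channel_centers(n_channels: int = 64, f_low: float = 20.0, f_high: float = 20_000.0) -> List[float]:
    """Center frequencies spaced evenly on a logarithmic scale, lowest first.

    Assumption: log spacing; the real chip's channel layout is not given.
    """
    ratio = (f_high / f_low) ** (1.0 / (n_channels - 1))
    return [f_low * ratio ** k for k in range(n_channels)]


def channels_in_band(centers: Sequence[float], lo_hz: float, hi_hz: float) -> List[int]:
    """Indices of the channels whose center frequency falls within [lo_hz, hi_hz]."""
    return [k for k, f in enumerate(centers) if lo_hz <= f <= hi_hz]


centers = channel_centers()
ratio = centers[1] / centers[0]
print(f"adjacent channels differ by a factor of {ratio:.3f}")
print("channels lit by 100-200 Hz speech:", channels_in_band(centers, 100.0, 200.0))
```

Under this assumption, each channel sits about 12 percent above its neighbor, so the one-octave band from 100 to 200 hertz covers only six or seven of the 64 channels. That is consistent with the narrow stripe of activity on Liu’s display.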

Liu says her group and Delbrück’s are now working to integrate the silicon cochlea and retina. This could give a humanoid robot a lot more low-power smarts. Besides being more humanlike, multimodal sensing means machines will miss less of what’s going on. Human senses show how this works by supporting one another. When you talk to someone in a noisy restaurant, for example, you can’t always hear every word. But your brain fills in the missing auditory pieces with visual information gathered as you watch the speaker’s lips.

The researchers also want to pair these smart, low-power sensors with processors running deep-learning algorithms. This kind of artificial intelligence does a good job of recognizing what’s going on in an image; some versions can even generate a surprisingly accurate sentence describing a scene. Neural networks excel at understanding and generating speech, too. Combining neuromorphic engineering with deep learning could yield computers that mimic human sensory perception better than ever before.

Asked whether this advance might someday help people who are deaf or hard of hearing, Liu said the current design wouldn’t work for cochlear implants, so her group is not pursuing that application. She noted that although it could work in theory, it would probably require such fundamental changes in hearing-aid design that the cost of implementing them would likely outweigh the payoff.

This post was corrected on 4 April to clarify Liu’s name and affiliation.

Katherine Bourzac is a freelance journalist based in San Francisco, Calif. She writes about materials science, nanotechnology, energy, computing, and medicine—and about how all these fields overlap. Bourzac is a contributing editor at Technology Review and a contributor at Chemical & Engineering News; her work can also be found in Nature and Scientific American. She serves on the board of the Northern California chapter of the Society of Professional Journalists.