Robots that make maps tend to rely heavily on vision of one sort or another, whether it's a camera image or a sensor working beyond the visible spectrum, like a laser scanner. This is understandable: humans are adapted to use vision, so we understand it pretty well, and we can get a lot of useful information out of a visual image. Animals, on the other hand, take advantage of a much broader suite of senses, specialized for their environments. If you only come out at night, or if you live in a hole, vision is perhaps not the best solution for you. Now a robot modeled after a shrew can make maps using only tactile feedback from a prodigious set of artificial whiskers.

We met Shrewbot in January of last year; it’s an adorable robot with artificial whiskers modeled after the Etruscan pygmy shrew. Here’s the video from 2012:

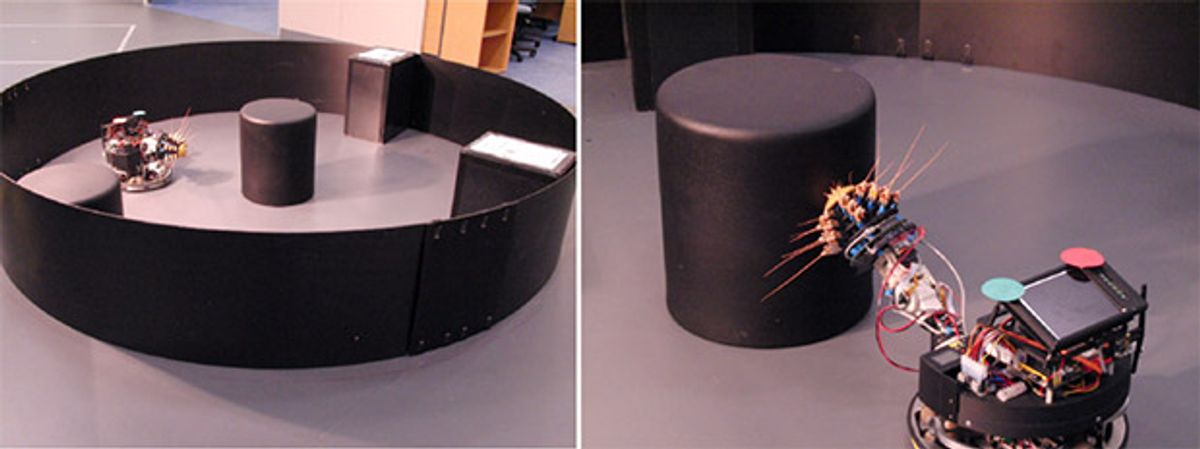

New research presented at the IEEE International Conference on Robotics and Automation (ICRA) this week has Shrewbot performing what the researchers call tSLAM, or tactile Simultaneous Localization and Mapping. The robot has an array of 18 individually actuated whiskers mounted on a three-degree-of-freedom neck, which is attached to an omnidirectional mobile platform. Using a combination of wheel odometry and whisking (yes, the sweeping behavior really is called whisking), Shrewbot gradually builds a tactile map of an area from the hundreds (or thousands) of contacts its whiskers register as they encounter walls or other obstacles.
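To make the mapping half of this concrete, here is a minimal Python sketch (not the researchers' code) of how a single whisk contact could be placed on a map. The contact point, measured in the robot's own frame, is rotated and translated by the pose that wheel odometry reports, then counted in a grid cell. The grid resolution `CELL_SIZE`, the function names, and the simple hit-count map are all hypothetical choices made for illustration.

```python
import math
from collections import defaultdict

# Hypothetical tactile-map resolution, in metres per grid cell.
CELL_SIZE = 0.05


def contact_to_world(pose, contact):
    """Transform a whisker contact from the robot frame to the world frame.

    pose:    (x, y, theta) estimated from wheel odometry, theta in radians.
    contact: (cx, cy) contact point in the robot frame, e.g. derived from
             the whisker's angle and where along it the contact occurred.
    """
    x, y, theta = pose
    cx, cy = contact
    wx = x + cx * math.cos(theta) - cy * math.sin(theta)
    wy = y + cx * math.sin(theta) + cy * math.cos(theta)
    return wx, wy


def add_contacts(grid, pose, contacts):
    """Count contacts per grid cell; cells hit more often are likelier obstacles."""
    for contact in contacts:
        wx, wy = contact_to_world(pose, contact)
        cell = (math.floor(wx / CELL_SIZE), math.floor(wy / CELL_SIZE))
        grid[cell] += 1
    return grid


# Example: robot at (1.02, 0.0) facing +y feels something 0.23 m ahead.
tactile_map = defaultdict(int)
add_contacts(tactile_map, (1.02, 0.0, math.pi / 2), [(0.23, 0.0)])
```

Because odometry drifts, contacts from the same wall gradually smear across neighbouring cells if the pose is never corrected; fixing that drift is the localization half of SLAM.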

This video shows the mapping in action; the blue line shows the actual location of the robot, while the red line shows where the robot thinks it is. Notice how the red line more closely matches the blue line as the robot makes more whisk contacts:

By the end of this process, you can see that Shrewbot has a reasonably good idea of what its environment looks like. And remember, the resulting map (and the ability to localize within it) is achieved purely through touch. Robots like Shrewbot are ideal for exploring and mapping spaces where laser, acoustic, or visual sensors don't work very well: dark spaces, spaces filled with dust or smoke, or even turbid water. Future research will investigate how well the technique works at larger scales, with an eye toward practical deployment, and perhaps add texture detection with the whiskers.
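The convergence in the mapping video, where the estimated path is pulled back toward the true one as contacts accumulate, is characteristic of probabilistic SLAM. One common way to get that effect is a particle filter, and the Python sketch below is a simplified, localization-only stand-in built on that assumption; it is not the method from the paper. Each particle is a candidate pose, odometry moves every particle with some added noise, and particles whose predicted contacts land on cells already marked in the tactile map are favoured when resampling. The map format, `CELL_SIZE`, the weighting rule, and all function names are hypothetical.

```python
import math
import random

CELL_SIZE = 0.05  # hypothetical map resolution, metres per cell


def contact_cell(pose, contact):
    """Grid cell a robot-frame contact falls in if the robot is at `pose`."""
    x, y, theta = pose
    cx, cy = contact
    wx = x + cx * math.cos(theta) - cy * math.sin(theta)
    wy = y + cx * math.sin(theta) + cy * math.cos(theta)
    return (math.floor(wx / CELL_SIZE), math.floor(wy / CELL_SIZE))


def weight(pose, contacts, tactile_map):
    """Score a candidate pose by how many contacts land on known obstacle cells."""
    hits = sum(1 for c in contacts if contact_cell(pose, c) in tactile_map)
    return hits + 0.01  # small floor keeps every particle possible


def update(particles, odom_delta, contacts, tactile_map, noise=0.01, rng=random):
    """One predict / weight / resample step of a basic particle filter."""
    dx, dy, dtheta = odom_delta  # robot-frame motion reported by wheel odometry
    predicted = []
    for x, y, theta in particles:
        # Predict: apply odometry with added noise, since odometry drifts.
        mx = dx + rng.gauss(0.0, noise)
        my = dy + rng.gauss(0.0, noise)
        nx = x + mx * math.cos(theta) - my * math.sin(theta)
        ny = y + mx * math.sin(theta) + my * math.cos(theta)
        predicted.append((nx, ny, theta + dtheta + rng.gauss(0.0, noise)))
    # Weight: poses that explain the whisk contacts score higher.
    weights = [weight(p, contacts, tactile_map) for p in predicted]
    # Resample in proportion to weight, so well-supported poses multiply.
    return rng.choices(predicted, weights=weights, k=len(predicted))


def estimate(particles):
    """Mean position plus circular-mean heading of the particle cloud."""
    n = len(particles)
    mean_x = sum(p[0] for p in particles) / n
    mean_y = sum(p[1] for p in particles) / n
    heading = math.atan2(sum(math.sin(p[2]) for p in particles),
                         sum(math.cos(p[2]) for p in particles))
    return mean_x, mean_y, heading
```

Run after every whisk, this update concentrates the particles on poses whose contacts line up with the map, which is what pulls the estimate toward the true path. A full SLAM system would also refine the map alongside the pose (for example, one map per particle); this sketch holds the map fixed for brevity.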

“Simultaneous Localization and Mapping on a Multi-Degree of Freedom Biomimetic Whiskered Robot,” by Martin J. Pearson, Charles Fox, J. Charles Sullivan, Tony J. Prescott, Tony Pipe, and Ben Mitchinson from the Bristol Robotics Laboratory and Sheffield University's Adaptive Behaviour Research Group, was presented this week at ICRA 2013 in Germany.

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.