In the 48 years since the introduction of the first microprocessor, in 1971, the number of electronic components that can be crammed onto a given area on a chip has increased seven orders of magnitude. That corresponds to a doubling about every two years [see “Moore’s Curse,” IEEE Spectrum, April 2015].
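The doubling time follows directly from those two figures. Here is a quick arithmetic check, a minimal sketch in which the function name is purely illustrative:

```python
import math

def doubling_time_years(orders_of_magnitude, span_years):
    """Years per doubling when a quantity grows by 10**orders_of_magnitude
    over span_years, assuming steady exponential growth."""
    doublings = orders_of_magnitude * math.log2(10)  # one order of magnitude is about 3.32 doublings
    return span_years / doublings

# Seven orders of magnitude in 48 years
print(round(doubling_time_years(7, 48), 2))  # about 2.06 years
```

Seven orders of magnitude amount to roughly 23 doublings, so 48 years works out to a little more than two years per doubling.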

You might think that the performance of the earlier vacuum-tube electronics could not possibly compare with that record of improvement. Not so. It’s just that the key metric of improvement is different.

The diode, the simplest vacuum tube, was invented in 1904 by John A. Fleming; three years later came Lee de Forest’s triode, and tetrodes and pentodes followed in the 1920s. These “gridded” tubes use the voltage in a grid to modulate current from an electron source. Work on magnetrons—another type of vacuum tube that generates microwaves by squeezing electrons through a magnetic field—led to the first patent in 1935 and to the first deployment in radar, in the United Kingdom in 1940. The klystron (used in radar, later in satellite communications and in high-energy physics) was patented in 1937, and gyrotrons (generating power in the millimeter wavelengths, used for heating materials and plasmas) were first introduced in the Soviet Union in the mid-1960s.

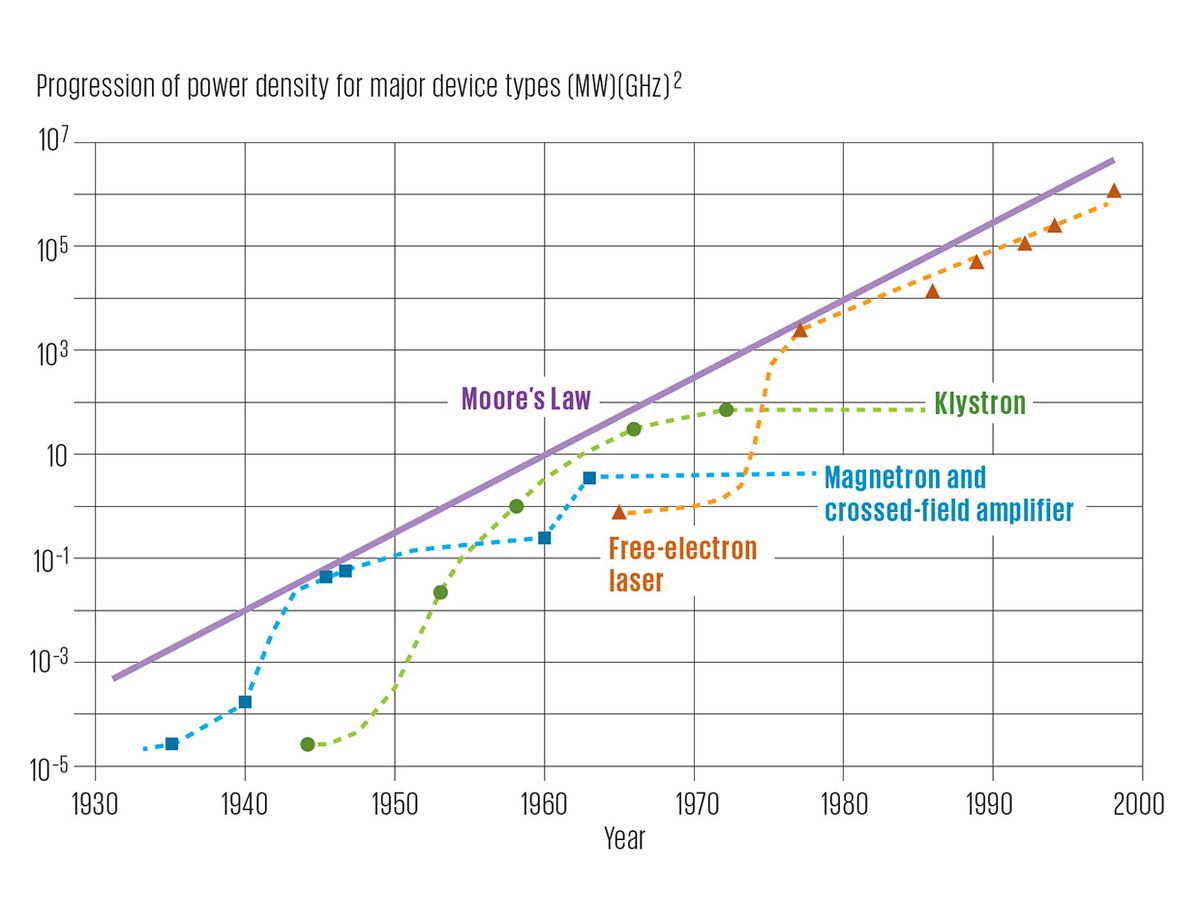

These successive generations of tubes improved by leaps and bounds in their power density. The maximum power that can be transported through a device is proportional to the cross-sectional area of the circuit, which in turn is inversely proportional to the square of the operating frequency, so the product of power and frequency squared serves as a measure of power density. In 1960 Leon Nergaard, at that time the director of RCA Microwave Research Laboratory, proposed average power density as a figure of merit for comparing the performance growth of these diverse devices. Four decades later Victor L. Granatstein, Robert K. Parker, and Carter M. Armstrong estimated the numbers as the product of megawatts and gigahertz to the second power, (MW)(GHz)², in the Proceedings of the IEEE, May 1999.

The researchers demonstrated successive waves of innovation: The power densities of the early tubes were first overtaken by the densities of magnetrons, then by those of klystrons, and finally, in the 1970s, by those of gyrotron oscillators and free-electron lasers. Each family of devices followed a logistic curve as it approached its performance limits before yielding to the next family.

Between the mid-1930s and the late 1960s, the maximum power density of gridded tubes (triode and higher) increased by four orders of magnitude. During the same time, the power density of cavity magnetrons and crossed-field amplifiers rose by five orders of magnitude; between 1944 and 1974, the maximum power density of klystrons rose by six orders of magnitude. The same improvement came for gyrotrons and free-electron lasers between the 1960s and 2000.

If you plot the entire sequence of logistic curves on a semilogarithmic graph, the envelope of the curves forms a straight line that gains nearly 1.5 orders of magnitude per decade. As soon as I came across the graph, I realized that the ascent must be very close to the growth rate dictated by Moore’s Law, and a simple calculation confirmed it. Between 1935 and 2000, the slope of the envelope indicates that the maximum power densities of vacuum electronics grew at an average annual rate of almost exactly 35 percent, virtually identical to the mean annual rate of growth for the post-1965 crowding of transistors on a chip.
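The conversion between a slope on a semilogarithmic graph and an annual percentage is easy to reproduce. The sketch below assumes steady exponential growth, and its function names are illustrative only:

```python
import math

def annual_rate_from_orders_per_decade(orders_per_decade):
    """Annual growth rate implied by a straight-line rise of
    orders_per_decade on a semilog plot."""
    return 10 ** (orders_per_decade / 10) - 1

def orders_per_decade_from_annual_rate(rate):
    """Inverse conversion: slope in orders of magnitude per decade."""
    return 10 * math.log10(1 + rate)

def annual_rate_from_doubling_time(years):
    """Annual growth rate implied by a fixed doubling time."""
    return 2 ** (1 / years) - 1

print(f"{annual_rate_from_orders_per_decade(1.5):.1%}")   # exactly 1.5 orders per decade
print(f"{orders_per_decade_from_annual_rate(0.35):.2f}")  # slope implied by 35 percent a year
print(f"{annual_rate_from_doubling_time(2):.1%}")         # doubling every two years
```

A rise of exactly 1.5 orders of magnitude per decade would work out to about 41 percent a year, the same as a strict two-year doubling, while 35 percent a year corresponds to about 1.3 orders per decade. The percentage is sensitive to the slope read off the graph, so the rounded figures agree only approximately.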

To be sure, the trend lines of vacuum tubes and of integrated circuits involve different figures of merit. But it is certainly noteworthy that the first family of electronic devices improved as fast in its domain as the second family did in its different domain. A kind of Moore’s Law was in effect in electronics long before Gordon Moore set it down, in 1965.

This article appears in the February 2019 print issue as “The Vacuum Tube’s Power Law.”