Solar cells convert light to electricity. Image sensors also convert light to electricity. If you could do both at the same time in the same chip, you'd have the makings of a self-powered camera. Engineers at the University of Michigan have recently come up with just that: an image sensor that does both things well enough to capture 15 images per second powered only by the daylight falling on it.

With such an energy-harvesting imager integrated with and powering a tiny processor and wireless transceiver, you could "put a small camera, almost invisible, anywhere," says Euisik Yoon, the professor of electrical engineering and computer science at the University of Michigan who led its development. He and his team reported their results this week in IEEE Electron Device Letters.

Earlier attempts at self-powered image sensors have mostly gone one of two ways. One is to fill some of the sensor area with photovoltaics. This straightforward approach can work, but it greatly reduces the amount of light available for producing an image. The other is to have the imager’s pixels alternate between acting as a photodetector and acting as a photovoltaic cell. This too works, but at the cost of complexity and at least half the potential images.

The solution Yoon and postdoctoral researcher Sung-Yun Park came up with has neither drawback. Noting that a number of photons zip through a pixel’s photodetector diode without causing charge to accumulate, they buried a second diode beneath the photodetector to act as a photovoltaic and scoop up those strays. “It’s not really recycling; it’s more like collecting waste,” says Yoon. “It’s almost free energy.”

Because the photovoltaic is beneath the sensor, nearly all the pixel area can go to sensing the image. And because it harvests stray photons that the image-sensing diode missed, it can generate electricity continuously, even while the pixel is capturing an image.

Though the prototype imager was built using standard CMOS process technology, its pixels require both a different structure and different electrical characteristics from those of a standard imager. Most obviously, the new pixel contains a p-n junction (essentially an extra diode) beneath the image-sensing diode. Second, typical pixels use electrons as the main charge carrier. But to get both the photovoltaic and sensing diodes working simultaneously, Yoon and his team had to build their device so that it collects positively charged holes (electronic vacancies in the silicon) instead. Holes move more slowly than electrons in silicon, but not so slowly that they interfere with image capture.

Images from the University of Michigan's self-powered sensor were captured at 7.5 frames per second (left) and 15 frames per second (right). Images: University of Michigan

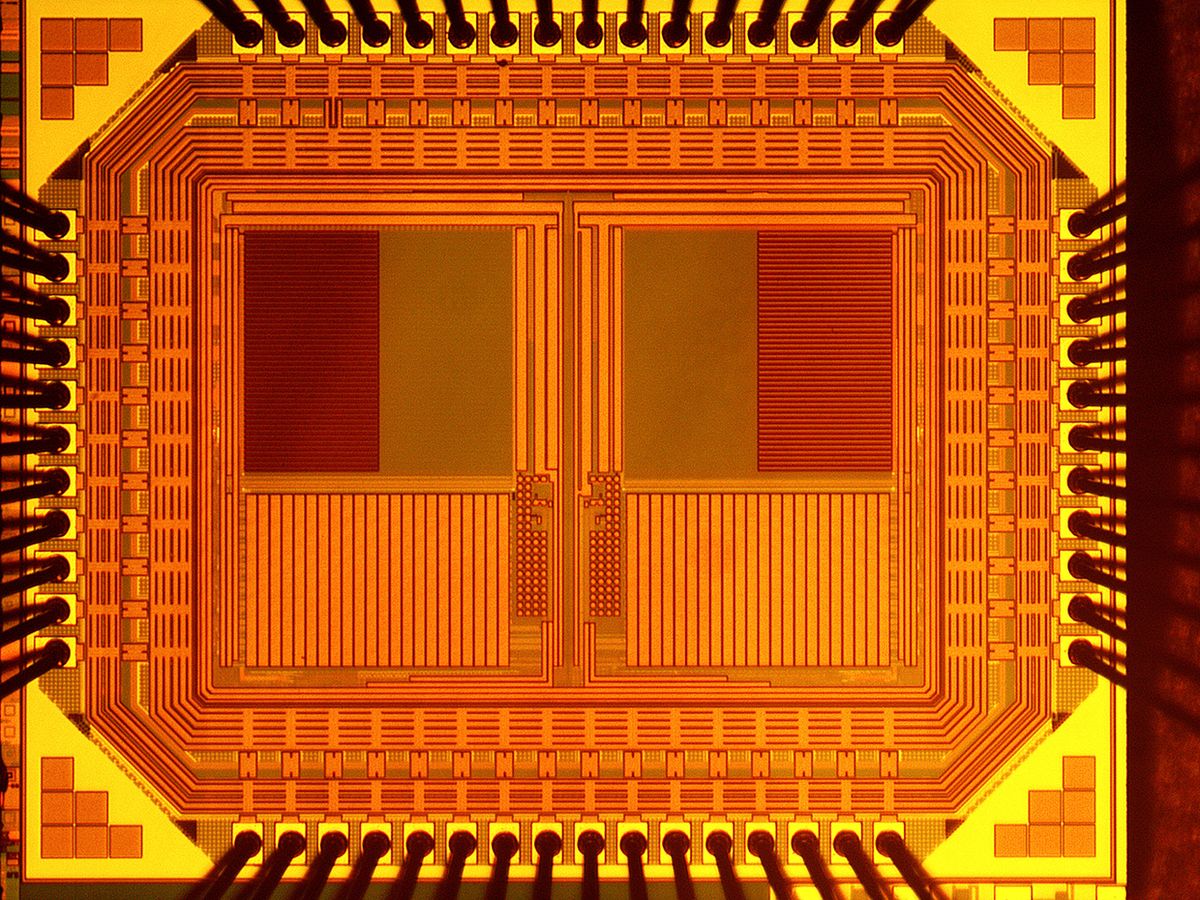

The resulting chip, with its 5-micrometer-wide pixels, achieved the highest power-harvesting density (998 picowatts per lux per square millimeter) reported for any energy-harvesting image sensor so far. On a sunny, 60,000-lux day, that's enough power for 15 frames per second. Normal daylight conditions (20,000 to 30,000 lux) reduce that to 7.5 frames per second. Thirty frames per second is considered video rate, but that's not always necessary.
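As a rough check on these figures, the harvesting density can be multiplied out per square millimeter of sensor (the chip's total area isn't given here, so the sketch stays per mm²). It assumes, purely for illustration, that harvested power scales linearly with illuminance and that each frame costs a fixed amount of energy; neither assumption is the team's actual power model, and the function names are hypothetical.

```python
# Illustrative arithmetic only. The 998 pW/(lux*mm^2) density and the lux
# levels come from the article. Linear scaling with illuminance and a fixed
# energy cost per frame are simplifying assumptions for this sketch.

HARVEST_DENSITY_W_PER_LUX_MM2 = 998e-12  # 998 picowatts per lux per mm^2


def harvested_power_uw_per_mm2(lux: float) -> float:
    """Harvested power in microwatts per mm^2, assuming linear scaling with lux."""
    return HARVEST_DENSITY_W_PER_LUX_MM2 * lux * 1e6


def scaled_frame_rate(lux: float, ref_lux: float = 60_000, ref_fps: float = 15.0) -> float:
    """Hypothetical frame rate if every frame costs the same energy.

    Scales the reported 15 fps at 60,000 lux by the ratio of harvested power.
    """
    return ref_fps * harvested_power_uw_per_mm2(lux) / harvested_power_uw_per_mm2(ref_lux)


for lux in (60_000, 30_000):
    print(f"{lux:>6} lux: {harvested_power_uw_per_mm2(lux):6.2f} uW/mm^2, "
          f"~{scaled_frame_rate(lux):4.1f} fps")
```

Under these assumptions, 60,000 lux yields about 60 microwatts per square millimeter, and halving the light to 30,000 lux halves both the harvested power and the frame rate, which matches the drop from 15 to 7.5 frames per second.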

Concerned only with getting a proof-of-concept chip working, "we didn't optimize the power consumption of the sensor itself," says Park. So there is clearly room to raise the frame rate, or to lower the light level required toward what is typical indoors. Yoon and Park have plenty of experience at that, having developed many ultralow-power technologies for image sensors, such as circuits that automatically adapt the frame rate to the available illumination and microwatt-scale feature-detection systems.

If the project continues, they’ll work to integrate everything needed for a self-powered wireless camera.

Samuel K. Moore is the senior editor at IEEE Spectrum in charge of semiconductors coverage. An IEEE member, he has a bachelor's degree in biomedical engineering from Brown University and a master's degree in journalism from New York University.