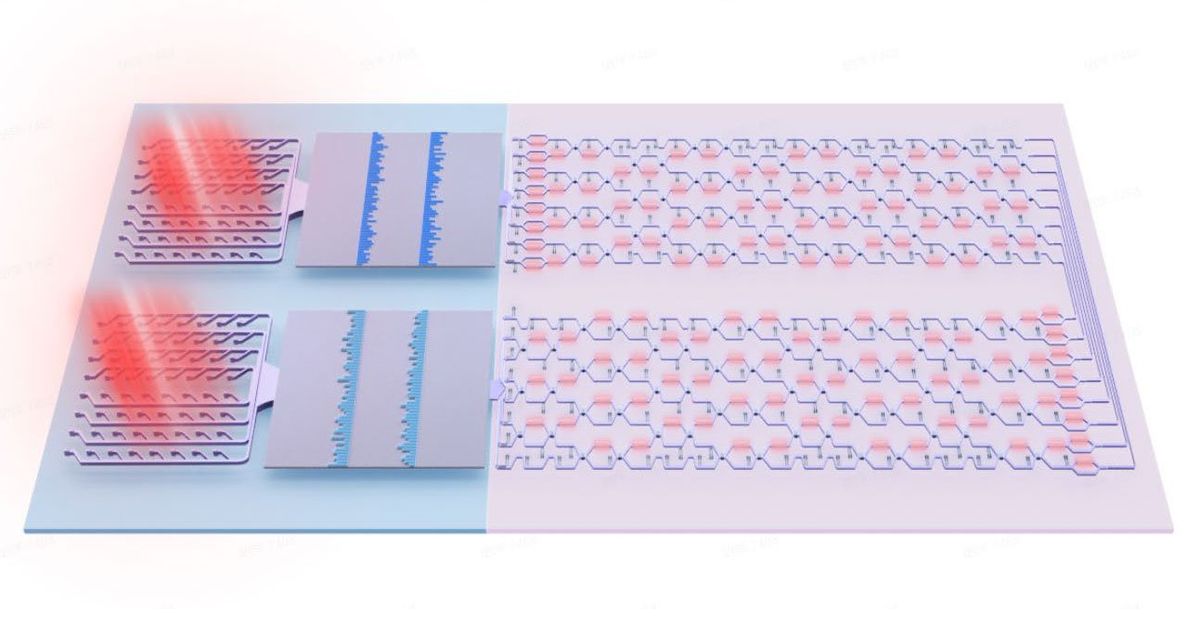

Members of the U.S. National Science Foundation's Ocean Observatories Initiative (OOI) are approaching the end of a nearly three-month-long cruise during which they installed the fiber optic cables and power lines that form the backbone of a seafloor observatory in the Pacific Ocean. The observatory will make it possible for oceanographers and other researchers to gather data about the ocean floor in real time from a network of seismometers, cameras, and other sensors hundreds of kilometers offshore and as deep as 1800 meters beneath the ocean's surface.

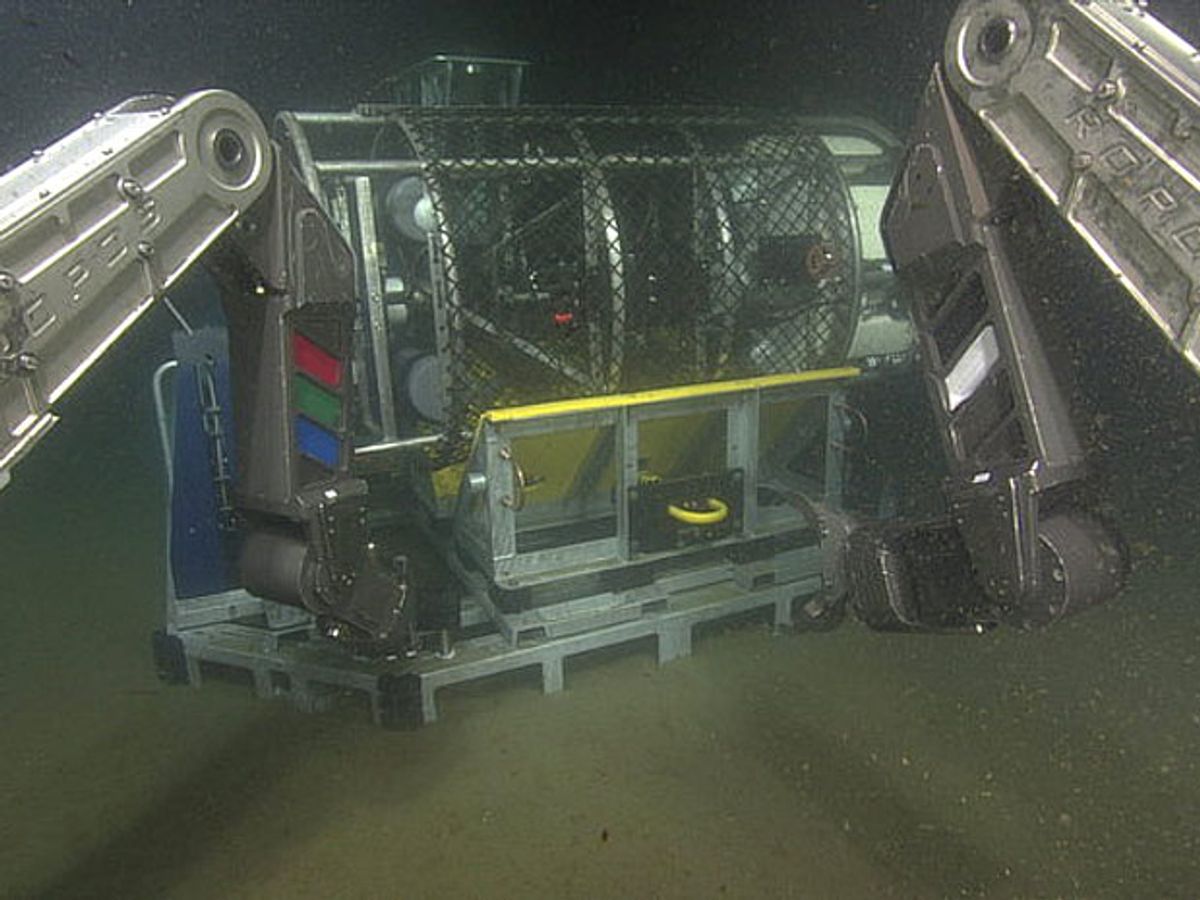

The expedition has laid thousands of meters of fiber optic cable and high-capacity power lines capable of delivering up to 200 kW of power and transmitting data at up to 240 gigabits per second. These cables will connect ocean scientists to a network of sensors dotting the seafloor of the Pacific Ocean off the coast of Washington and Oregon. The University of Washington (UW) led work on the cabled observatory, known as Regional Scale Nodes (RSN). Researchers travelled aboard the UW research vessel Thomas G. Thompson and were assisted in their work by the submersible robot ROPOS.

The now-complete RSN takes its place alongside the Pioneer Array and Endurance Array as the American arm of the NEPTUNE program, which has been in development since the late 1990s. Once all three systems become active in early 2015, their sensors will provide oceanographers an unprecedented glimpse of ocean ecosystems. When the American-led arrays go live, they will join their neighbor to the north, the NEPTUNE Canada observatory. Despite a few technical and budgetary setbacks, that project has been gathering video, sonar, and seismic data from the seafloor west of British Columbia since 2009.

The spine of the RSN system, some 869 kilometers of electro-optical cable that provides power and Internet access to the seven nodes that make up the RSN, was laid down in 2011. Over the course of three months this summer, the latest expedition out of UW, VISIONS 14, laid another 61 kilometers of extension cables and installed other pieces of infrastructure, such as junction boxes, to protect intersecting cables from damage. The expedition also installed three sensor pods, which will move up and down on moored undersea cables, taking measurements of water velocity, oxygen content, photosynthetic light, and other properties of interest to ocean researchers.

Previously, practical matters like getting space on a research vessel and hoping for good weather have limited the scope of ocean research. Oceanographers could often get only snapshots of data from a vast set of information. With RSN in place, researchers will be able to monitor tectonic activity, ocean salinity, currents, and other aspects of the world deep below the surface of the Pacific via live, 24/7 feeds. Now, instead of having to scramble research vessels to study seismic activity on the Juan de Fuca tectonic plate, for example, they will be able to parse readings from their own labs. But the new system didn't come to life without some obstacles of its own.

"We had some really interesting terrain on the seafloor to deal with, all kinds of lava flows and some big collapsed areas," said Dana Manalang, a senior engineer at UW's Applied Physics Lab, in a statement. "And so figuring out how to lay the cable in a way that we would start it and end each cable in a location that we needed to make our measurements was a challenge."

While UW has taken the lead on creating the RSN observatory, the data it gathers will be freely available to researchers across the world. The National Science Foundation will make RSN data on the Juan de Fuca plate accessible to researchers in real time (give or take an occasional couple of seconds of latency) via the Consortium for Ocean Leadership.