In the hierarchy of things that I want robots to do for me, cooking dinner is right up there with doing the laundry and driving my car. And writing all my articles. For now, the best we can do is just watch progress being made toward getting all of these things to work reliably (and affordably). We’ve seen plenty of examples of robots that can cook, but generally, they’re all following some level of pre-programmed instructions. Telling robots what to do and how to do it is one of the trickiest things about robotics, especially for end users, so it’s a good thing we can all just sit back and let them learn things by watching videos on YouTube.

This project is taking place at the University of Maryland, and this video does a very good job of not really saying all that much over the course of 2 minutes, but here it is anyway:

The research we’re talking about here is from a paper titled, “Robot Learning Manipulation Action Plans by ‘Watching’ Unconstrained Videos

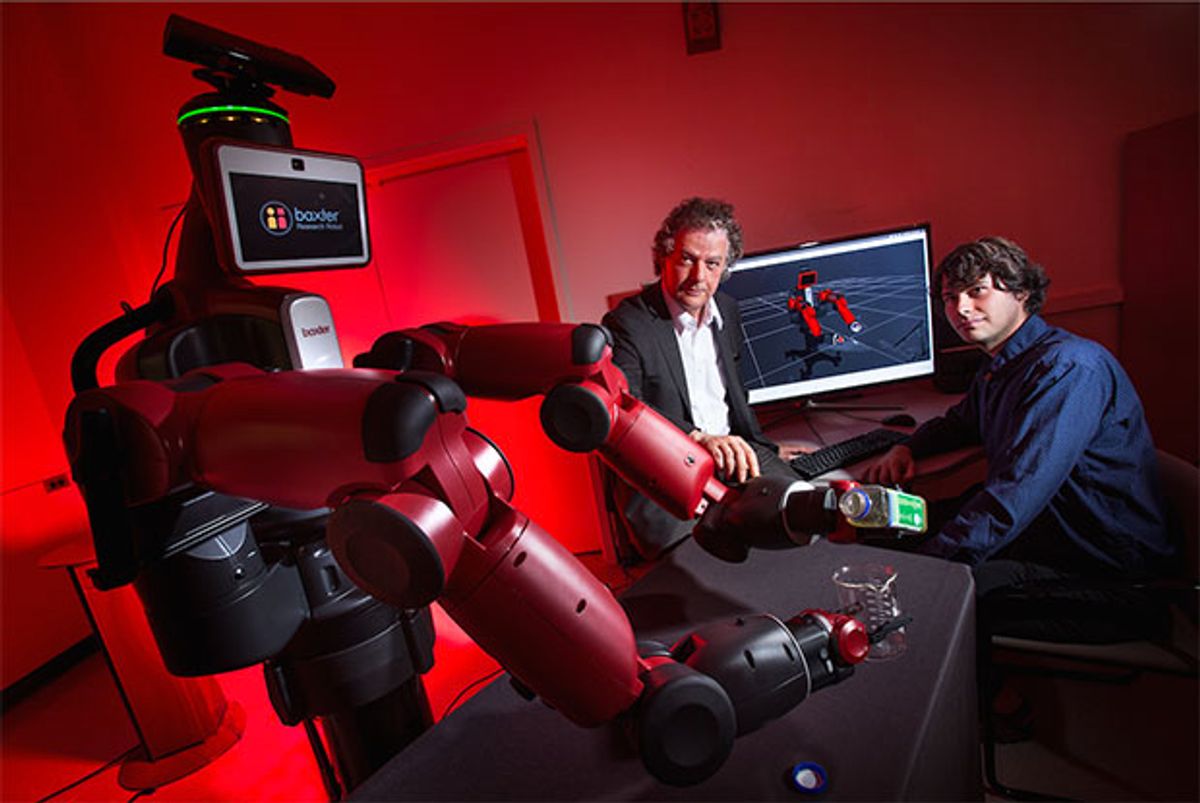

from the World Wide Web.” The paper is really about visual processing: watching a human interacting with objects in a video, and then figuring out what that human is doing and how they’re doing it, with a final step of replicating those actions using the manipulation capabilities of a robot (Baxter, in this case).

The University of Michigan has a dataset called YouCook, which consists of 88 open-source third-person YouTube cooking videos. Each video was given a set of unconstrained natural language descriptions by humans, and each video also has frame-by-frame object and action annotations. Using these data, the UMD researchers developed two convolutional neural networks: one to recognize and classify the objects in the videos, and the other to recognize and classify the grasps that the human is using.
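To make the two-network setup concrete, here is a minimal Python sketch of what happens after both classifiers have run. It assumes that each one emits a list of class scores per video frame, and it pairs the top-scoring object with the top-scoring grasp frame by frame. The shortened label lists, the `label_frames` and `argmax` helpers, and the scores are all hypothetical, and the convolutional networks themselves are not modeled here.

```python
from typing import List, Sequence, Tuple

# Hypothetical, shortened label sets for illustration only (the article
# describes 48 object classes and 6 grasp types).
OBJECT_CLASSES = ["apple", "bowl", "knife", "whisk"]
GRASP_CLASSES = ["power-small", "precision-small"]


def argmax(scores: Sequence[float]) -> int:
    """Index of the highest score (the first one wins on ties)."""
    return max(range(len(scores)), key=lambda i: scores[i])


def label_frames(
    object_scores: List[Sequence[float]],
    grasp_scores: List[Sequence[float]],
) -> List[Tuple[str, str]]:
    """Pair each frame's top object class with its top grasp class.

    The two score lists stand in for the per-frame outputs of two separate
    classifiers (one for objects, one for grasps); how those scores are
    produced is outside the scope of this sketch.
    """
    if len(object_scores) != len(grasp_scores):
        raise ValueError("both classifiers must score the same frames")
    return [
        (OBJECT_CLASSES[argmax(o)], GRASP_CLASSES[argmax(g)])
        for o, g in zip(object_scores, grasp_scores)
    ]


frames = label_frames(
    object_scores=[[0.1, 0.2, 0.6, 0.1], [0.7, 0.1, 0.1, 0.1]],
    grasp_scores=[[0.8, 0.2], [0.3, 0.7]],
)
print(frames)  # [('knife', 'power-small'), ('apple', 'precision-small')]
```

Keeping the two classifiers separate means either label set can grow (for example, to finer grasp categories) without retraining the other.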

Object recognition is a familiar problem, but recognizing grasps matters too: the robot may have different end effectors that it uses for different grasping purposes, and the grasp a person uses can also hint at what action is likely to happen next. From the paper:

> The grasp contains information about the action itself, and it can be used for prediction or as a feature for recognition. It also contains information about the beginning and end of action segments, thus it can be used to segment videos in time. If we are to perform the action with a robot, knowledge about how to grasp the object is necessary so the robot can arrange its effectors. For example, consider a humanoid with one parallel gripper and one vacuum gripper. When a power grasp is desired, the robot should select the vacuum gripper for a stable grasp, but when a precision grasp is desired, the parallel gripper is a better choice.
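The two uses of grasp information in that passage, splitting a video into action segments and choosing an end effector, can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the researchers' code: the grasp label strings, the extra `"rest"` label for frames where nothing is held, and the helper names are hypothetical, and the gripper rule simply encodes the paper's vacuum-versus-parallel example.

```python
from itertools import groupby
from typing import List, Tuple


def segment_by_grasp(frame_grasps: List[str]) -> List[Tuple[str, int, int]]:
    """Split a per-frame grasp sequence into (grasp, start, end) segments.

    A new segment begins whenever the recognized grasp changes, so segment
    boundaries are candidate action boundaries. `end` is exclusive.
    """
    segments = []
    start = 0
    for grasp, run in groupby(frame_grasps):
        length = sum(1 for _ in run)
        segments.append((grasp, start, start + length))
        start += length
    return segments


def choose_gripper(grasp: str) -> str:
    """Pick an effector for a robot with one vacuum and one parallel gripper.

    Follows the paper's example: vacuum for power grasps, parallel gripper
    for precision grasps. Label strings are hypothetical.
    """
    if grasp.startswith("power"):
        return "vacuum"
    if grasp.startswith("precision"):
        return "parallel"
    raise ValueError(f"unknown grasp type: {grasp!r}")


# "rest" is a hypothetical label for frames where the hand holds nothing.
frames = ["rest", "rest", "power-large", "power-large", "power-large",
          "precision-small", "precision-small", "rest"]
for grasp, start, end in segment_by_grasp(frames):
    if grasp != "rest":
        print(grasp, start, end, choose_gripper(grasp))
# power-large 2 5 vacuum
# precision-small 5 7 parallel
```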

For this particular case, grasps were divided into six types: power grasps and precision grasps, each for small, large, or spherical objects. Objects, meanwhile, were divided into 48 classes, ranging from “apple” to “whisk.” On the YouCook dataset, the system achieved an overall recognition accuracy of 83 percent, with a 68 percent success rate at translating the grasp and object combinations into commands that a robot could then execute.
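The six grasp types are every combination of two grip styles (power, precision) with three object categories (small, large, spherical), and the final step pairs each recognized grasp with an object. The Python sketch below enumerates that taxonomy and turns a per-frame stream of (grasp, object) labels into a short command list by collapsing consecutive repeats. The `grasp(object, type)` command format and the function names are invented for illustration; they are not the command language the researchers generated for Baxter.

```python
from itertools import product
from typing import List, Tuple

# Six grasp types: {power, precision} x {small, large, spherical}.
GRASP_TYPES = [
    f"{style}-{shape}"
    for style, shape in product(["power", "precision"],
                                ["small", "large", "spherical"])
]


def to_commands(frame_labels: List[Tuple[str, str]]) -> List[str]:
    """Collapse per-frame (grasp, object) labels into hypothetical commands.

    Consecutive frames with the same grasp and object yield one command;
    the "grasp(object, type)" string format is made up for this sketch.
    """
    commands = []
    previous = None
    for grasp, obj in frame_labels:
        if grasp not in GRASP_TYPES:
            raise ValueError(f"unknown grasp type: {grasp!r}")
        if (grasp, obj) != previous:
            commands.append(f"grasp({obj}, {grasp})")
            previous = (grasp, obj)
    return commands


print(len(GRASP_TYPES))  # 6
print(to_commands([("power-large", "bowl"), ("power-large", "bowl"),
                   ("precision-small", "whisk")]))
# ['grasp(bowl, power-large)', 'grasp(whisk, precision-small)']
```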

In future work, the researchers would like to develop finer grasp categorizations (more than just the six based on object size or shape and on whether a power or precision grip is required), and then use those categorizations to better predict what action is happening in the video, or (ideally) what action is probably going to come next. By that, we think the researchers are saying they’re scouring YouTube for a meal that they can sit back and watch their robots cook for them.

[ Paper ] via [ DARPA ] and [ Engadget ]

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.