I think the idea of designing robots that look like humans to better interact with humans is a solid “meh.” The concept is good, but the execution is usually horrible, and the more your robot tries to look like a human, the more horrible it gets. Having said that, I think that the idea of using robots with specific human features, like eyes, can be a substantial asset for human-robot interaction, if you know what you’re doing.

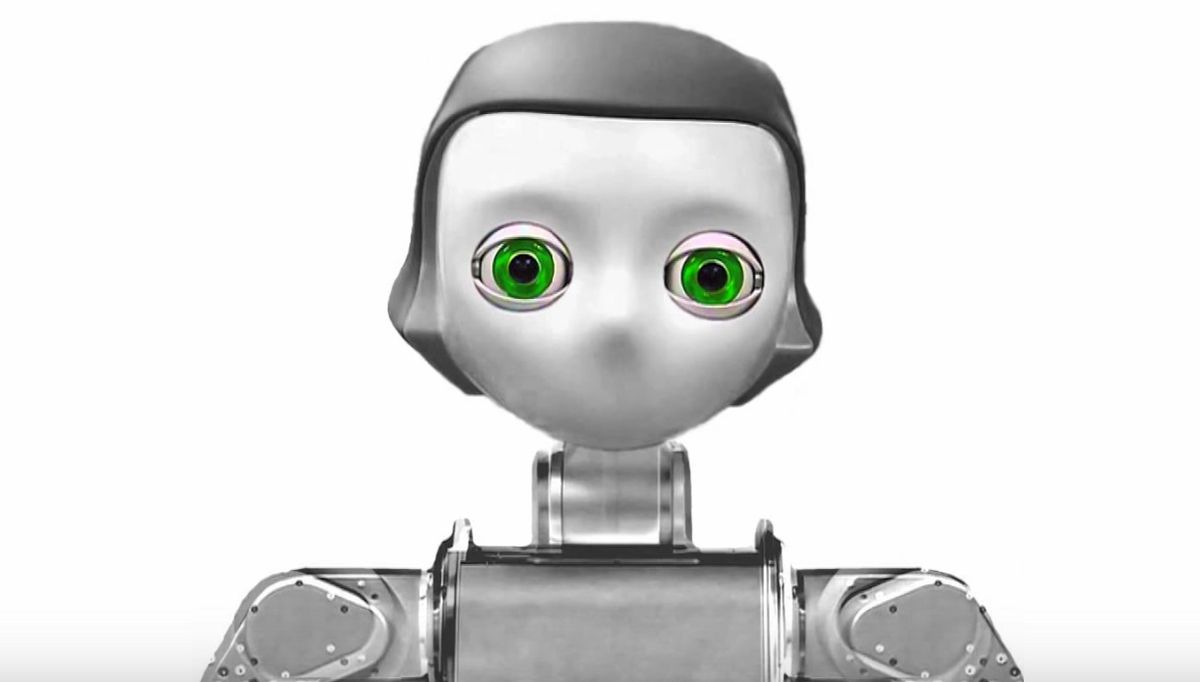

Sean Andrist, a PhD student at the University of Wisconsin-Madison (who knows what he’s doing), has been researching social gaze with robots. He’s developed algorithms that help robots look at people at the right times and in the right ways. The goal isn’t just to make robots less creepy, but to make them more helpful as well.

It’s very cool stuff, so let’s take a closer look at why gaze, and matching gaze to personality, is important.

Extroverts (you know who you are) tend to get along better with other extroverts. Introverts (you know who you are, too) tend to get along better with either other introverts, or nobody (for introverts this is often the preference). This goes farther than just who you feel comfortable hanging out with: it’s also who you’re most effective around, in terms of things like productivity and problem solving. One of the most reliable ways to tell what kind of “vert” someone is (besides whether or not they come to your parties, and if they do, whether or not they hide in a closet) is their gaze: extroverts tend to look at the people they’re talking to significantly more than introverts do. You may have noticed this “mutual gaze,” unless you’re an introvert, in which case you were probably looking somewhere else.

If you’re a humanoid robot, you can use these data to help you interact more effectively with humans. First, you can try to use the human’s gaze to determine whether they’re introverted or extroverted, and then you can match your robot’s gaze with theirs to help them feel more comfortable, and consequently make them more effective at completing cooperative tasks. The paper frames this a little bit nefariously: “Nonverbal behaviors, especially gaze, have long been recognized in social-sciences literature as useful tools in persuading others to comply with requests or demands.” Not that anyone would ever use robots for evil, of course.
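To make that two-step idea a bit more concrete, here is a rough Python sketch: estimate what kind of “vert” someone is from how much of the conversation they spend looking at their partner, then have the robot aim for a similar amount of mutual gaze. This is not the method from the paper. The 0.5 threshold, the `GazeSample` type, and every function name below are assumptions made up for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Assumed cutoff for "looks at the partner a lot". The article gives no
# number, so 0.5 is a placeholder, not a value from the paper.
MUTUAL_GAZE_THRESHOLD = 0.5


@dataclass
class GazeSample:
    """One stretch of observed gaze (hypothetical data format)."""
    duration_s: float          # how long this stretch lasted, in seconds
    looking_at_partner: bool   # True if the person was looking at the robot


def mutual_gaze_fraction(samples: List[GazeSample]) -> float:
    """Fraction of observed time the person spent looking at the partner."""
    total = sum(s.duration_s for s in samples)
    if total <= 0:
        raise ValueError("no gaze data to estimate from")
    at_partner = sum(s.duration_s for s in samples if s.looking_at_partner)
    return at_partner / total


def estimate_personality(samples: List[GazeSample],
                         threshold: float = MUTUAL_GAZE_THRESHOLD) -> str:
    """Step 1: guess 'extrovert' or 'introvert' from gaze alone (a crude heuristic)."""
    if mutual_gaze_fraction(samples) >= threshold:
        return "extrovert"
    return "introvert"


def match_robot_gaze(samples: List[GazeSample]) -> Tuple[str, float]:
    """Step 2: pick a robot gaze target that mirrors the person's own behavior.

    Returns the estimated personality and the fraction of time the robot
    should spend looking at the person (here, simply the person's own fraction).
    """
    personality = estimate_personality(samples)
    return personality, mutual_gaze_fraction(samples)


# Example: 6 seconds looking at the robot, 4 seconds looking elsewhere.
observed = [GazeSample(6.0, True), GazeSample(4.0, False)]
personality, robot_target = match_robot_gaze(observed)
print(personality, robot_target)
```

A real system would need far more than a single threshold, but the shape is the same: observe the human’s gaze, classify, then adapt the robot’s gaze to match.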

In a paper published last year entitled “Look Like Me: Matching Robot Personality via Gaze to Increase Motivation,” Andrist (along with Bilge Mutlu and Adriana Tapus) tested several hypotheses related to adaptive gaze interaction:

Hypothesis 1—Matching the robot’s personality to the user’s personality will improve the user’s subjective ratings of the robot’s performance.

Hypothesis 2—Matching the robot’s personality to the user’s personality will improve compliance with the robot’s requests to engage in the task for a longer period of time.

Participants were told that they would be completing the Tower of Hanoi puzzle under the supervision of the robot, and that the robot would provide all the necessary instructions for the rules and for progressing through the various stages of the puzzle. After initial introductions, the robot carefully explained the goal and rules of the puzzle and asked the participant to complete it. After the participant’s first successful completion, the robot explained that it would be asking the participant to complete the same puzzle several times and reminded the participant that it was up to them to decide when they would like to stop.

The results here are interesting enough that I’m just going to shut up and quote from the paper:

Our first hypothesis predicted that, in line with similarity attraction theory, participants would give higher subjective ratings to the performance of a robot that matches their personality. This hypothesis was partially supported in the experiment, in that introverted participants reported a marginal preference for the introverted robot behaviors. Extroverted participants reported no difference in ratings. Introverts may have been more consciously sensitive to the behaviors of the robot, as previous work has shown introverts have a superior detection rate and perceptual sensitivity than extroverts [7]. In a study involving the rating of other people, previous work has also found that introverts preferred other introverts on the measures of “reliable friend” and “honest and ethical,” while extroverts were ambivalent in these measures [16].

The experiment provided support for our second hypothesis, which predicted that participants would comply more with robots that matched their personality. On the measure of total participation time, both extroverts and introverts exhibited significantly greater compliance with the personality-matching robot. However, in the measures of total puzzles solved and total disks moved, only extroverts exhibited significantly greater compliance with the personality-matching robot. Previous work in HCI has shown a similar result in that matching a synthesized voice’s personality to a user’s personality improved feelings of social presence, but only for extroverts [22].

You can read the paper itself here.

What this shows is that things like gaze interaction between humans and robots have tangible effects beyond simply whether the humans feel more or less comfortable. For things like social and physical assistance, it’s going to be critical for robots to use every tool at their disposal to encourage humans to complete tasks that may be boring or repetitive. This particular study was performed on a small group of people in one specific situation, and the researchers acknowledge that personality and nonverbal behavior are significantly more complex than is reflected here. In the future, they’re planning to increase the granularity and sophistication of these gaze models, and to test them on more targeted populations who are likely to benefit most from robotic assistance.

[ Sean Andrist ]

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.