When you open Netflix and hit “play,” your computer sends a request to the video-streaming service to locate the movie you’d like to watch. The company responds with the name and location of the specific server that your device must access in order for you to view the film.

For the first time, researchers have taken advantage of this naming system to map the location and total number of servers across Netflix’s entire content delivery network, providing a rare glimpse into the guts of the world’s largest video-streaming service.

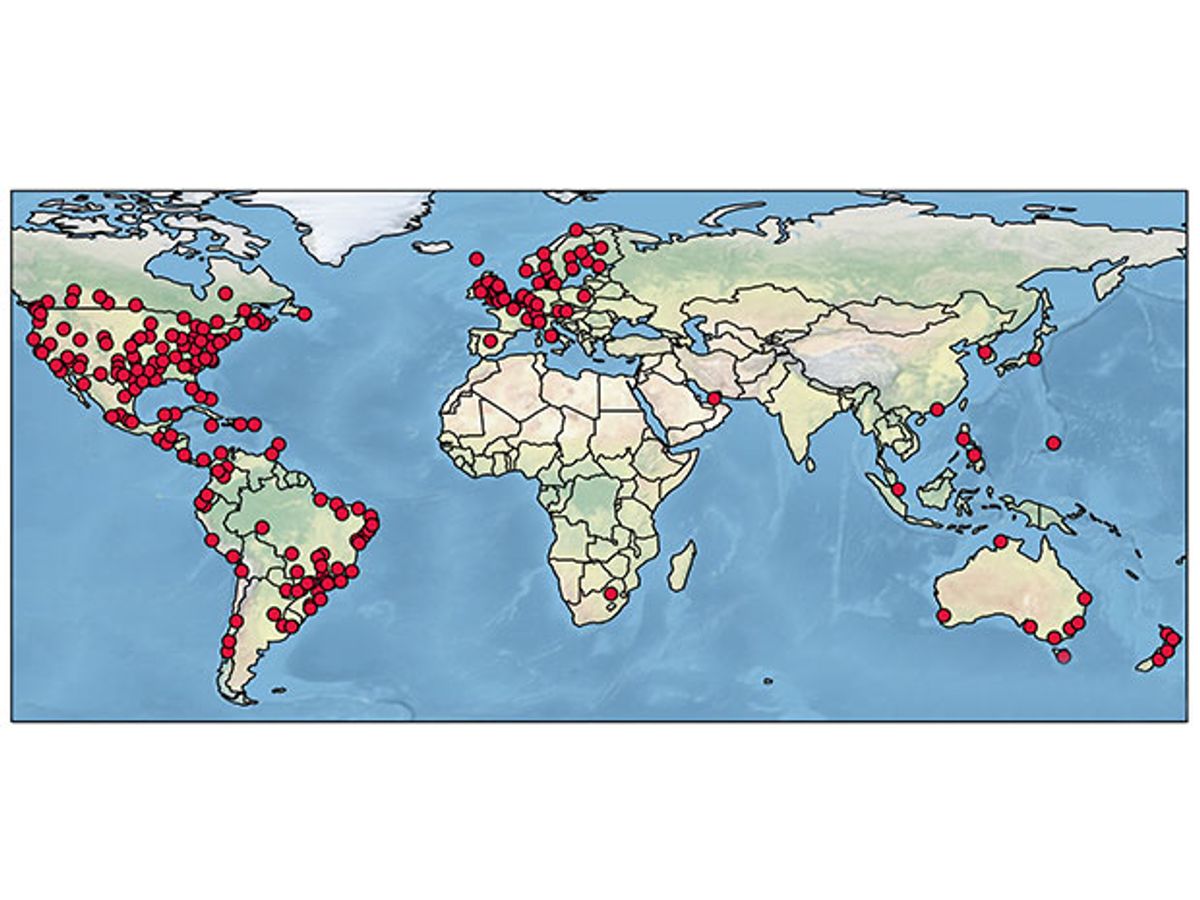

A group from Queen Mary University of London (QMUL) traced server names to identify 4,669 Netflix servers in 243 locations around the world. Most of those servers are still in the United States and Europe, even as the company works to grow its international audience. The United States also leads the world in Netflix traffic, based on the group’s analysis of the volume handled by each server. By that measure, it sees roughly eight times as much Netflix traffic as Mexico, which ranks second. The United Kingdom, Canada, and Brazil round out the top five.

The QMUL group presented its research to Netflix representatives earlier this year in a private symposium.

“I think it's a very well-executed study,” says Peter Pietzuch, a specialist in large-scale distributed systems at Imperial College London who was not involved in the research. “Netflix would probably never be willing to share this level of detail about their infrastructure, because obviously it's commercially sensitive.”

In March, Netflix did publish a blog post outlining the overall structure of its content delivery network, but did not share the total number of servers or server counts for specific sites.

Last January, Netflix announced that it would expand its video-streaming service to 190 countries, and IHS Markit recently predicted that the number of international Netflix subscribers could be greater than U.S. subscribers in as few as two years. Still, about 72 percent of Netflix customers were based in the United States as of 2014.

Steve Uhlig, the networks expert at Queen Mary University of London who led the mapping project, says repeating the analysis over time could track shifts in the company’s server deployment and traffic volumes as its customer base changes.

“The evolution will reveal more about the actual strategy they are following,” he says. “That's a bit of the frustrating part about having only the snapshot. You can make guesses about why they do things in a specific market, but it's just guesses.”

Netflix launched its streaming service in 2007 and began to design its own content delivery network in 2011. Companies that push out huge amounts of online content have two options when it comes to building their delivery networks. They may choose to place large numbers of servers at Internet exchange points (IXPs), which are like regional highway intersections for online traffic. Or they can forge agreements to deploy servers within the private networks of Internet service providers such as Time Warner, Verizon, AT&T, and Comcast, so that the servers sit even closer to customers.

Traditionally, content delivery services have chosen one strategy or the other. Akamai, for example, hosts a lot of content with Internet service providers, while Google, Amazon, and Limelight prefer to store it at IXPs. However, Uhlig’s group found that Netflix uses both strategies, and varies the structure of its network significantly from country to country.

Timm Böttger, a doctoral student at QMUL and a member of the research team, says he was surprised to find two Netflix servers located within Verizon’s U.S. network. Verizon and other service providers have argued with Netflix over whether they should allow Netflix to connect servers directly to their networks for free. In 2014, Comcast required Netflix to pay for access to Comcast’s network.

Tellingly, the group did not find any Netflix servers in Comcast’s U.S. network. As for the mysterious Verizon servers? “We think it is quite likely that this is a trial to consider broader future deployment,” Böttger says. Netflix did not respond to a request for comment.

To outline Netflix’s content delivery network, Uhlig and his group began by playing films from the Netflix library and studying the structure of server names that were returned from their requests. The researchers also used the Hola browser extension to request films from 753 IP addresses in different parts of the world in order to find even more server names than would otherwise be accessible from their London lab.

“We first tried to behave like the regular users, and just started watching random movies and took a look at the network packages that were exchanged,” says Böttger.

Their search revealed that Netflix’s server names follow a common pattern: a string of letters and numbers that includes an airport code, such as lhr001 for London Heathrow, to mark the server’s location, and a “counter,” such as c020, that numbers the individual servers at that location. A third element, written as .isp or .ix, shows whether the server is located with an Internet service provider or at an Internet exchange point.
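To make the naming scheme concrete, here is a minimal Python sketch that pulls those three elements out of a hostname. The full hostname layout it assumes (for example, the made-up name ipv4-c020.lhr001.ix.nflxvideo.net) is hypothetical, as are the names ServerName and parse_server_name. Only the airport-code, counter, and .ix/.isp elements come from the study as described here, so the sketch matches each one independently rather than relying on a fixed label order.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical layout, e.g. "ipv4-c020.lhr001.ix.nflxvideo.net".
# Each element is matched on its own because the real label order is an assumption.
COUNTER_RE = re.compile(r"(?:^|[.\-_])c(\d{3})(?=[.\-_])")            # e.g. c020
LOCATION_RE = re.compile(r"(?:^|[.\-_])([a-z]{3})(\d{3})(?=[.\-_])")  # e.g. lhr001
PLACEMENT_RE = re.compile(r"\.(ix|isp)\.")                             # .ix or .isp


@dataclass(frozen=True)
class ServerName:
    airport: str    # airport code, e.g. "lhr" for London Heathrow
    site: int       # number attached to the airport code, e.g. 1 in "lhr001"
    counter: int    # per-location server counter, e.g. 20 in "c020"
    placement: str  # "ix" (Internet exchange point) or "isp" (inside an ISP)


def parse_server_name(host: str) -> Optional[ServerName]:
    """Return the parsed elements, or None if the name doesn't fit the pattern."""
    host = host.lower().rstrip(".")
    if not host.endswith(".nflxvideo.net"):
        return None
    counter = COUNTER_RE.search(host)
    location = LOCATION_RE.search(host)
    placement = PLACEMENT_RE.search(host)
    if not (counter and location and placement):
        return None
    return ServerName(
        airport=location.group(1),
        site=int(location.group(2)),
        counter=int(counter.group(1)),
        placement=placement.group(1),
    )


print(parse_server_name("ipv4-c020.lhr001.ix.nflxvideo.net"))
# ServerName(airport='lhr', site=1, counter=20, placement='ix')
```

Returning None for names that don't fit lets a caller filter out unrelated hostnames without handling exceptions.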

Once they had figured out this naming structure, the group built a crawler to search for hostnames under the shared nflxvideo.net domain. The team supplied the crawler with a list of countries, airport codes, and Internet service providers compiled from publicly available information. After searching all possible combinations of those lists, the crawler returned 4,669 servers in 243 locations. (Though the study cites 233 locations, Böttger said in a follow-up email that 243 is the correct number.)
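The crawl amounts to brute-force enumeration: build every candidate hostname from lists of airport codes, site numbers, counters, and placements, then keep the ones that resolve in DNS. The Python sketch below assumes a hypothetical hostname template. The study’s actual templates, its country and ISP lists, and the way ISP names appear in hostnames are not given here, so ISP-specific labels are left out. The names candidate_names, crawl, and system_resolver are illustrative.

```python
import itertools
import socket
from typing import Callable, Dict, Iterable, Iterator, Optional, Sequence

# Hypothetical hostname template; the study's real templates are not given here.
TEMPLATE = "ipv4-c{counter:03d}.{airport}{site:03d}.{placement}.nflxvideo.net"


def candidate_names(
    airports: Sequence[str],
    max_site: int,
    max_counter: int,
    placements: Sequence[str] = ("ix", "isp"),
) -> Iterator[str]:
    """Yield every combination of airport, site number, counter, and placement."""
    for airport, site, counter, placement in itertools.product(
        airports, range(1, max_site + 1), range(1, max_counter + 1), placements
    ):
        yield TEMPLATE.format(
            airport=airport, site=site, counter=counter, placement=placement
        )


def system_resolver(name: str) -> Optional[str]:
    """Resolve a hostname to an IPv4 address via the OS resolver, or None."""
    try:
        return socket.gethostbyname(name)
    except OSError:  # socket.gaierror is a subclass of OSError
        return None


def crawl(
    names: Iterable[str],
    resolve: Callable[[str], Optional[str]] = system_resolver,
) -> Dict[str, str]:
    """Keep only the candidate names that resolve, mapped to their address."""
    found: Dict[str, str] = {}
    for name in names:
        address = resolve(name)
        if address is not None:
            found[name] = address
    return found


# Offline usage with a stand-in resolver (dict.get returns None for misses):
fake_dns = {"ipv4-c002.lhr001.ix.nflxvideo.net": "192.0.2.10"}
print(crawl(candidate_names(["lhr"], max_site=1, max_counter=3), resolve=fake_dns.get))
# {'ipv4-c002.lhr001.ix.nflxvideo.net': '192.0.2.10'}
```

Passing the resolver in as a parameter lets the enumeration be tested without network access. A real crawl over thousands of combinations would also need rate limiting and concurrent lookups.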

To study traffic volumes, the researchers relied on a field in the IP header that keeps a running tally of the data packets a given server has handled. By issuing multiple requests to each server and tracking how quickly the values rose, the team estimated how much traffic each server was processing at different times of day. They probed the servers at 1-minute intervals over a period of 10 days.
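The field is not named here, but a per-server running packet tally in the IP header presumably refers to the 16-bit IPv4 identification (IP ID) field on servers that increment it globally. Under that assumption, the traffic rate is the counter’s increase divided by the elapsed time, with care taken for wraparound at 65,536. The following minimal Python sketch illustrates this. The function name packets_per_second is hypothetical.

```python
from typing import List, Sequence, Tuple

# Assumption: the "running tally" is the 16-bit IPv4 identification field,
# which wraps back to 0 after 65,535.
IPID_MODULUS = 1 << 16


def packets_per_second(samples: Sequence[Tuple[float, int]]) -> List[float]:
    """Estimate packet rate between consecutive (timestamp_s, ip_id) samples.

    Assumes the server uses one global IP ID counter and that the counter
    wraps at most once between consecutive samples.
    """
    rates: List[float] = []
    for (t0, id0), (t1, id1) in zip(samples, samples[1:]):
        elapsed = t1 - t0
        if elapsed <= 0:
            raise ValueError("samples must be in strictly increasing time order")
        # The modular difference absorbs a single wrap from 65535 back to 0.
        delta = (id1 - id0) % IPID_MODULUS
        rates.append(delta / elapsed)
    return rates


# Example: the counter wraps between the first two samples.
samples = [(0.0, 65000), (1.0, 500), (2.0, 1500)]
print(packets_per_second(samples))  # [1036.0, 1000.0]
```

Because the counter wraps every 65,536 packets, samples must be close enough together that it cannot wrap more than once between them. That requirement is presumably why several requests were issued to each server in quick succession. Turning packet counts into data volume would take a further assumption about average packet size.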

Their results showed that the structure and volume of data requested from Netflix’s content delivery network varies widely from country to country. In the United States, Netflix is largely delivered through IXPs, which house 2,583 servers—far more than the 625 found at Internet service providers.

Meanwhile, there are no Netflix servers at IXPs in Canada or Mexico. Customers in those countries are served by servers within Internet service providers’ networks, and possibly also by servers at IXPs near the U.S. border. South America also relies largely on servers embedded within ISP networks. The exception is Brazil, which has Netflix servers stashed at several IXPs.

The U.K. has more Netflix servers than any other European country, and most of those servers are deployed within Internet service providers. All French customers get their films streamed through servers stationed at a single IXP called France-IX. Eastern Europe, meanwhile, has no Netflix servers, because the service only launched in those countries in January.

And the entire continent of Africa has only eight Netflix servers, all of them deployed at IXPs in Johannesburg, South Africa. That’s just twice the four Netflix servers the team found on the tiny Pacific island of Guam, which is home to the U.S.-operated Andersen Air Force Base.

“It's kind of striking to see those differences across countries,” Pietzuch says. “[Netflix’s] recent announced expansion isn't really that visible when you only look at the evolution of their CDN structure.”

Before the group’s analysis, Uhlig expected to see servers deployed mostly through Internet service providers as a way to ease the traffic burden for service providers and get as close as possible to Netflix’s 83 million customers. He was surprised to see how heavily the company relies on IXPs, despite the company’s insistence that 90 percent of its traffic is delivered through ISPs.

“If you really want to say, ‘I really want to be close to the end users,’ you need to deploy more, and we didn't see that,” he says. “I think the centralized approach is convenient because you have more control and you can scale things up or down according to what the market tells you.”

Uhlig didn’t expect to find Mexico and Brazil so high on the traffic list, even though Netflix has tried to expand its Spanish- and Portuguese-language offerings.

In March, the company said it delivers about 125 million total hours of viewing to customers per day. The researchers learned that Netflix traffic seems to peak just before midnight local time, with a second peak for IXP servers occurring around 8 a.m., presumably as Netflix uploads new content to its servers.