Unlike the past month, last week saw an overflowing cornucopia of IT-related malfunctions, errors and complications. We start off this week’s edition of IT Hiccups with a strange story that has followed in the wake of a major air traffic control outage in the U.S.—one of several incidents that aggravated air travelers around the world.

The story starts off simply enough: On Wednesday afternoon, a little after 3:00 p.m. Pacific time, a computer problem occurred with the En Route Automation Modernization (ERAM) system at the Los Angeles Air Route Traffic Control Center. The snafu, a USA Today article reported, left “controllers temporarily unable to track planes across Southern California and parts of Nevada, Arizona and Utah.” The FAA issued a ground stop on planes wanting to fly to Los Angeles for about an hour until the problem could be cleared up. However, that action caused the cancellation of some 50 flights arriving at and departing from Los Angeles International Airport and delayed another 455 flights across the country.

ERAM is part of a $2.2 billion Federal Aviation Administration (FAA) modernization effort, which, as I have written previously, has had its problems. So, while the computer problem was a major annoyance, nothing there seemed out of the ordinary. The FAA issued its usual bland statement indicating that it was investigating the issue: “The FAA will fully analyze the event to resolve any underlying issues that contributed to the incident and prevent a recurrence.”

A spokesperson for the Professional Aviation Safety Specialists, the union that represents many FAA employees, hinted at the source of the problem by telling USA Today that “There was so much information coming into the system that it overloaded.”

That seemed to be the end of the incident—well, that is until the weekend, when NBC News ran a story online citing “sources familiar with the incident” who claimed that a U-2 spy plane flying in the area triggered the problems with the ERAM computers. (The article's title said the U-2 had "fried" the system.) The NBC story reported that:

“The computers at the L.A. Center are programmed to keep commercial airliners and other aircraft from colliding with each other. The U-2 was flying at 60,000 feet, but the computers were attempting to keep it from colliding with planes that were actually miles beneath it.”

“Though the exact technical causes are not known, the spy plane’s altitude and route apparently overloaded a computer system called ERAM, which generates display data for air-traffic controllers. Back-up computer systems also failed.”

NBC News contacted the FAA, which basically reissued its previous statement and would neither confirm nor deny that the U-2 was the cause of the outage. The U.S. Air Force also declined to comment directly on the story's details, and the Pentagon was not responsive to an inquiry from Reuters. The Wall Street Journal ran a story about the incident today, again with government officials all deciding to remain mum.

When I saw the U-2 story at the NBC News website, I was more than a bit skeptical, especially over the "frying" statement. It is hard to believe that this is the first time military aircraft have passed through the LA ERAM control space at altitudes above normal commercial airline altitudes. On the other hand, I wouldn’t totally discount the possibility that some unique set of circumstances involving a military aircraft, which just happened to be a U-2, triggered an unknown problem within the ERAM software. Still, my instinct is to attribute the problem to a more prosaic explanation. If some official explanation comes out, I will update the post. However, feel free to speculate on what happened in the meantime.

Update 6 May 2014: U-2 did indeed cause the LA-area ATC problem

The FAA admitted late yesterday that a U-2 did, in fact, trigger the problems last week with the LA-area ERAM system. Piecing together the FAA explanation from various news sources, e.g., NBC News, Reuters and CNN (no one seems to have published the FAA statement yet in its entirety):

“On April 30, 2014, an FAA air traffic system that processes flight plan information experienced problems while processing a flight plan filed for a U-2 aircraft that operates at very high altitudes under visual flight rules.”

“The computer system interpreted the flight as a more typical low altitude operation, and began processing it for a route below 10,000 feet.”

“The extensive number of routings that would have been required to de-conflict the aircraft with lower-altitude flights used a large amount of available memory and interrupted the computer’s other flight-processing functions.”

“The FAA resolved the issue within an hour, and then immediately adjusted the system to now require specific altitude information for each flight plan.”

“The FAA is confident these steps will prevent a reoccurrence of this specific problem and other potential similar issues going forward.”
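To make the FAA's description a bit more concrete, here is a minimal Python sketch of the kind of input check the statement implies: refuse a flight plan that lacks an explicit altitude rather than guessing a low-altitude interpretation. This is purely illustrative and is not ERAM's actual logic; the names `FlightPlan`, `validate`, and `needs_deconfliction`, the waypoint cap, and the 1,000-foot separation threshold are all assumptions of mine, not details from the FAA statement.

```python
from dataclasses import dataclass
from typing import Optional


class FlightPlanError(ValueError):
    """Raised when a flight plan lacks information the processor needs."""


@dataclass(frozen=True)
class FlightPlan:
    callsign: str
    waypoints: tuple[str, ...]
    altitude_ft: Optional[int] = None  # None models a plan filed without an altitude


def validate(plan: FlightPlan, max_waypoints: int = 50) -> FlightPlan:
    """Reject plans that would force the processor to guess or do excessive work."""
    if plan.altitude_ft is None:
        # Refuse instead of silently assuming a low-altitude operation.
        raise FlightPlanError(f"{plan.callsign}: explicit altitude required")
    if len(plan.waypoints) > max_waypoints:
        # Hypothetical extra guard: cap the work one plan can demand.
        raise FlightPlanError(
            f"{plan.callsign}: too many waypoints ({len(plan.waypoints)})"
        )
    return plan


def needs_deconfliction(a: FlightPlan, b: FlightPlan, min_sep_ft: int = 1000) -> bool:
    """Two validated plans need route deconfliction only if vertically close.

    The 1,000 ft default is an illustrative threshold, not an FAA figure.
    """
    return abs(a.altitude_ft - b.altitude_ft) < min_sep_ft
```

With a check like this in front of the processor, a plan filed at 60,000 feet would never be compared against traffic at typical airliner altitudes, and a plan with no altitude at all would be bounced back to the filer instead of consuming memory.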

The CNN story, which provides (so far) more detail than anyone else, indicates that the ERAM system was overtaxed because of the many waypoints in the flight plan filed for the U-2. In addition, CNN reports that, “Simultaneously, there was an outage of the Federal Telecommunications Infrastructure, a primary conduit of information among FAA facilities.” CNN did not indicate what caused the FTI to go out, however.

Altogether, the complex U-2 flight plan and the FTI outage added up to what one government official said on background was a “perfect storm” that took down the ERAM system. Well, sometimes truth is stranger than fiction.

U.K. Airline Travelers Unhappy over Passport Control Failure

Los Angeles-bound passengers weren’t the only ones unhappy last Wednesday. International airports and ports across the U.K. experienced unbelievably long queues at passport control after the U.K. Border Force computers went down at around 2:30 p.m. London time; the outage lasted for some 12 hours. Some passengers at Gatwick reported waiting in line for over four hours, and fights were said to have broken out among passengers waiting in line at both Gatwick and Luton Airports. While non-EU passengers waited the longest, U.K. citizens also had to wait for up to two hours, newspapers reported.

The Home Office put out a statement that read in part, “We apologise for the delays that some passengers experienced at passport controls yesterday, but security must remain our priority at all times.” The Home Office also said that its technical staff has been asked “to look into the incident to ensure lessons are learnt.”

However, whether those lessons to be learnt will ever be publicly disclosed is still to be determined. The Home Office is refusing to disclose what caused the massive computer meltdown, which seems to be its standard operating policy.

Completing the trifecta of airport problems that occurred last Wednesday was a report that a construction crew cut a fiber optic cable at Florida’s Fort Lauderdale-Hollywood International Airport at about 4:00 p.m. Eastern time. The loss of the cable resulted in dozens of flights being canceled, delayed, or rerouted. No doubt passengers trying to travel to the U.S. West Coast from the airport thought they were snake-bit.

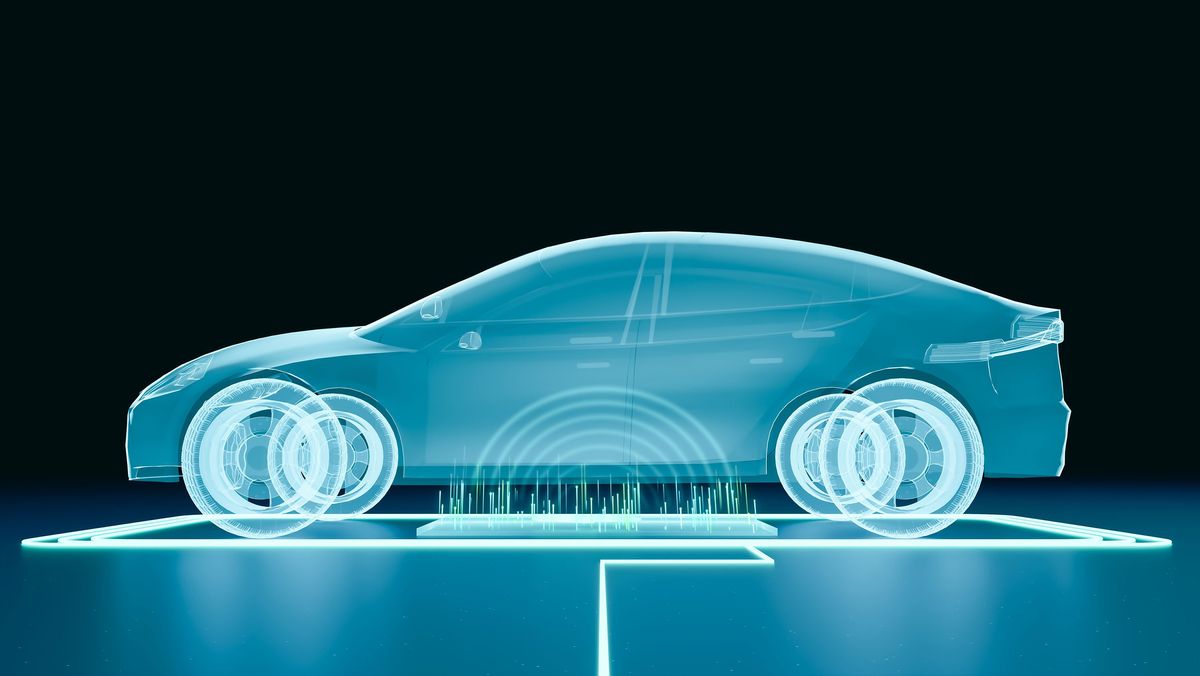

More General Motors Recalls for Software Issues

General Motors announced two more vehicle recalls to correct software issues in its vehicles. First, it is recalling some 56 400 Cadillac SRX crossover vehicles from the 2013 model year because of a software problem in the SRX transmission control module. According to the National Highway Traffic Safety Administration, “In certain driving situations, there may be a three to four second lag in acceleration due to the transmission control module programming.” No crashes have been attributed to the issue, GM reports.

In addition, General Motors said it is issuing recalls for 51 640 Buick Enclave, Chevrolet Traverse and GMC Acadia SUVs from the 2014 model year that were built between 26 March and 15 August 2013. In these vehicles, GM says, the engine control software may cause the fuel gauge to inaccurately read the amount of fuel remaining, leading to the vehicle unexpectedly running out of fuel. GM says it doesn’t know of any crashes that are linked to this problem, either.

In Other News of Interest….

U.S. Selective Service Sends Erroneous Military Draft Registration Letters to Marylanders

New Mexico’s Albuquerque Water Utility Authority Admits $9 Million Accounting Error

Washington State’s 911 Calls Routed to Colorado in Recent Outage

Virgin Mobile Australia Outage Hits 350 000 Customers

Virginia Tunnel E-Z Pass Toll Problems Continue Unabated

What’s the Wait for the Next London Tube Train? How About 939 Minutes?

Taipei Metro Suffers Fresh Problems

Some Indiana Schools Opt to Avoid Online ISTEP Tests

Michigan Hospital Still Struggling with New EHR System Installed in March

Is Australian Splendour Concert Ticketing Problem Glitch, Hack or Prank?

Computer Error Forces NY Stock Exchange to Cancel 20 000 Trades

Northern California Kaiser Permanente Says Insurance Billing Error to be Corrected Soon

Indiana’s Lebanon Utilities Trying to Correct Delayed Billing

Computer Issue Delays Paychecks for Flint Michigan Schools

Nova Scotia Power Overcharges Thousands of Customers

Computer Issue Causes Bank of Tokyo-Mitsubishi UFJ to Delay 23,000 Scheduled Wire Transfers

U.K.’s Norfolk County Council Staff Email Out for Over a Week

Computer Problems Delay Visitor Entry to Kentucky Derby

Erroneous Delinquent Tax Notices Sent to Michigan Residents

Kansas Farm Machinery Manufacturer Forced Shut by Computer Problems

UK Farmers Still Struggling with Single Payment Scheme Online System

National Australia Bank Forced to Make More Compensation in Wake of 2012 Computer Problems

British Columbia’s Pharmanet Computer System Operating Once More

Robert N. Charette is a Contributing Editor to IEEE Spectrum and an acknowledged international authority on information technology and systems risk management. A self-described “risk ecologist,” he is interested in the intersections of business, political, technological, and societal risks. Charette is an award-winning author of multiple books and numerous articles on the subjects of risk management, project and program management, innovation, and entrepreneurship. A Life Senior Member of the IEEE, Charette was a recipient of the IEEE Computer Society’s Golden Core Award in 2008.