Inspired by dog-agility courses, a team of scientists from Google DeepMind has developed a robot-agility course called Barkour to test the abilities of four-legged robots.

Since the 1970s, dogs have been trained to nimbly jump through hoops, scale inclines, and weave between poles in order to demonstrate agility. To take home ribbons at these competitions, dogs must have not only speed but keen reflexes and attention to detail. These courses also set a benchmark for how agility should be measured across breeds, which is something that Atil Iscen—a Google DeepMind scientist in Denver—says is lacking in the world of four-legged robots.

Despite great advances in the past decade, including robots such as MIT’s Mini Cheetah and Boston Dynamics’ Spot that have shown how animal-like a robot’s movement can be, the lack of standardized tasks for these robots has made it difficult to compare their progress, Iscen says.

Video: Quadruped Obstacle Course Provides New Robot Benchmark (YouTube)

“Unlike previous benchmarks developed for legged robots, Barkour contains a diverse set of obstacles that requires a combination of different types of behaviors such as precise walking, climbing, and jumping,” Iscen says. “Moreover, our timing-based metric to reward faster behavior encourages researchers to push the boundaries of speed while maintaining requirements for precision and diversity of motion.”

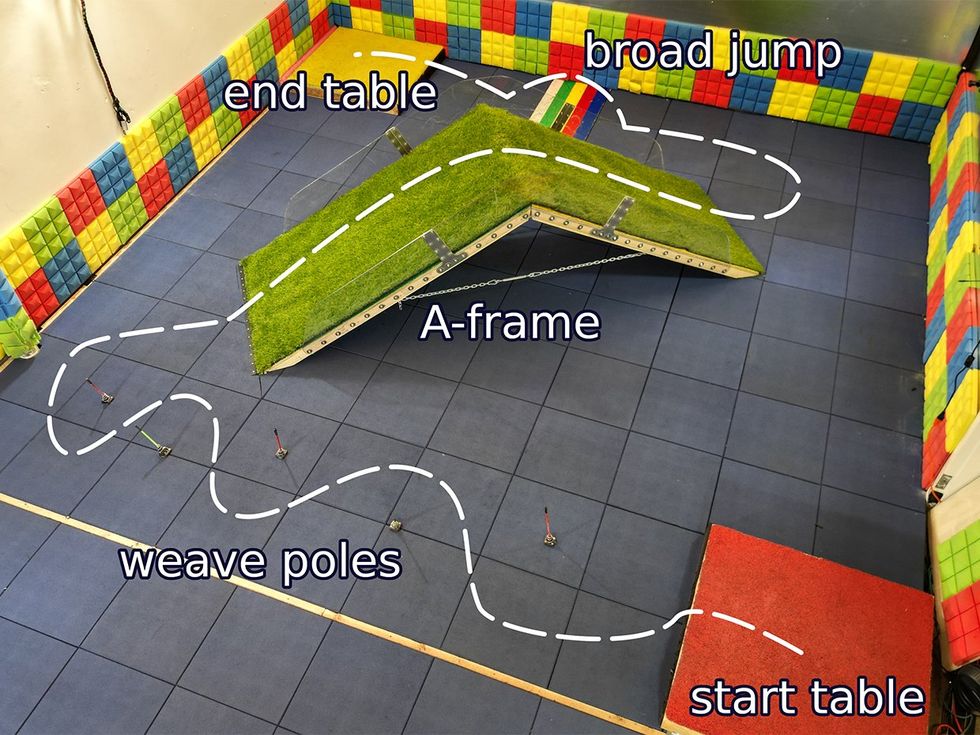

For their reduced-size agility course (the Barkour course covered 25 square meters, versus up to 743 square meters for traditional courses), Iscen and colleagues chose four obstacles from traditional dog-agility courses: a pause table, weave poles, an A-frame, and a jump.

“We picked these obstacles to [test] multiple axes of agility, including speed, acceleration, and balance,” he says. “It is also possible to customize the course further by extending it to contain other types of obstacles within a larger area.”

As in dog-agility competitions, robots that run this course lose points for failing or missing an obstacle, as well as for exceeding the course’s time limit of roughly 11 seconds. To see how difficult their course was, the DeepMind team developed two learning approaches: a specialist approach that trained separately on each type of skill needed for the course (for example, jumping or slope climbing) and a generalist approach that trained by studying simulations run using the specialist approach.
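The penalty scheme described above can be sketched in a few lines of Python. This is only an illustration, not the team's scoring code: the names `RunResult` and `agility_score` are hypothetical, and the penalty sizes (0.1 per failed or missed obstacle, 0.01 per second over the limit) are assumed placeholder values. Only the roughly 11-second time limit comes from the article.

```python
from dataclasses import dataclass


@dataclass
class RunResult:
    """One pass through the course (hypothetical structure)."""
    obstacles_failed: int  # obstacles failed or missed
    elapsed_s: float       # time taken to finish, in seconds


def agility_score(
    run: RunResult,
    time_limit_s: float = 11.0,             # from the article (approximate)
    obstacle_penalty: float = 0.1,          # assumed value, for illustration
    overtime_penalty_per_s: float = 0.01,   # assumed value, for illustration
) -> float:
    """Start from a perfect score of 1.0 and subtract penalties."""
    score = 1.0
    # Penalty for every obstacle that was failed or missed.
    score -= obstacle_penalty * run.obstacles_failed
    # Penalty only for the time spent beyond the limit.
    overtime_s = max(0.0, run.elapsed_s - time_limit_s)
    score -= overtime_penalty_per_s * overtime_s
    # Keep the result in the range [0, 1].
    return max(0.0, min(1.0, score))


if __name__ == "__main__":
    clean_fast_run = RunResult(obstacles_failed=0, elapsed_s=10.0)
    clean_slow_run = RunResult(obstacles_failed=0, elapsed_s=25.0)
    print(round(agility_score(clean_fast_run), 2))
    print(round(agility_score(clean_slow_run), 2))
```

Under these assumed penalties, a run that clears every obstacle but finishes well past the limit still scores below a clean run that finishes in time, which is the behavior a timing-based metric is meant to reward.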

After training four-legged robots with each approach, the team released them onto the course and found that robots trained with the specialist approach slightly edged out those trained with the generalist approach. The specialists completed the course in about 25 seconds, while the generalists took closer to 27 seconds. However, robots trained with either approach not only exceeded the course time limit but were also surpassed by two small dogs, a Pomeranian/Chihuahua mix and a Dachshund, that completed the course in less than 10 seconds.

“There is still a big gap in agility between robots and their animal counterparts, as demonstrated in this benchmark,” the team wrote in their conclusion.

While the robots’ performance may have fallen short of expectations, the team writes that this is actually a positive because it means there’s still room for growth and improvement. In the future, Iscen hopes that the easy reproducibility of the Barkour course will make it an attractive benchmark to be employed across the field.

“We proactively considered reproducibility of the benchmark and kept the cost of materials and footprint to be low,” Iscen says. “We would love to see Barkour setups pop up in other labs and we would be happy to share our lessons learned about building it, if other research teams interested in the work can reach out to us. We would like to see other labs adopting this benchmark so that the entire community can tackle this challenging problem together.”

As for the DeepMind team, Iscen says they’re also interested in exploring another aspect of dog-agility courses in their future work: the role of human partners.

“At the surface, (real) dog-agility competitions appear to be only about the dog’s performance. However, a lot comes to the fleeting moments of communication between the dog and its handler,” he explains. “In this context, we are eager to explore human-robot interactions, such as how can a handler work with a legged robot to guide it swiftly through a new obstacle course.”

A paper describing DeepMind’s Barkour course was published on the arXiv preprint server in May.

Sarah Wells is a science and technology journalist interested in how innovation and research intersect with our daily lives. She has written for a number of national publications, including Popular Mechanics, Popular Science, and Motherboard.