It's starting to seem like programming a robot to do anything is old and busted, and the new hotness is to program a robot to learn instead. And it makes sense: why spend a bunch of time and effort programming a robot to solve a specific problem when (with perhaps a little more time and effort) you can create a generalist that can learn to do absolutely anything?

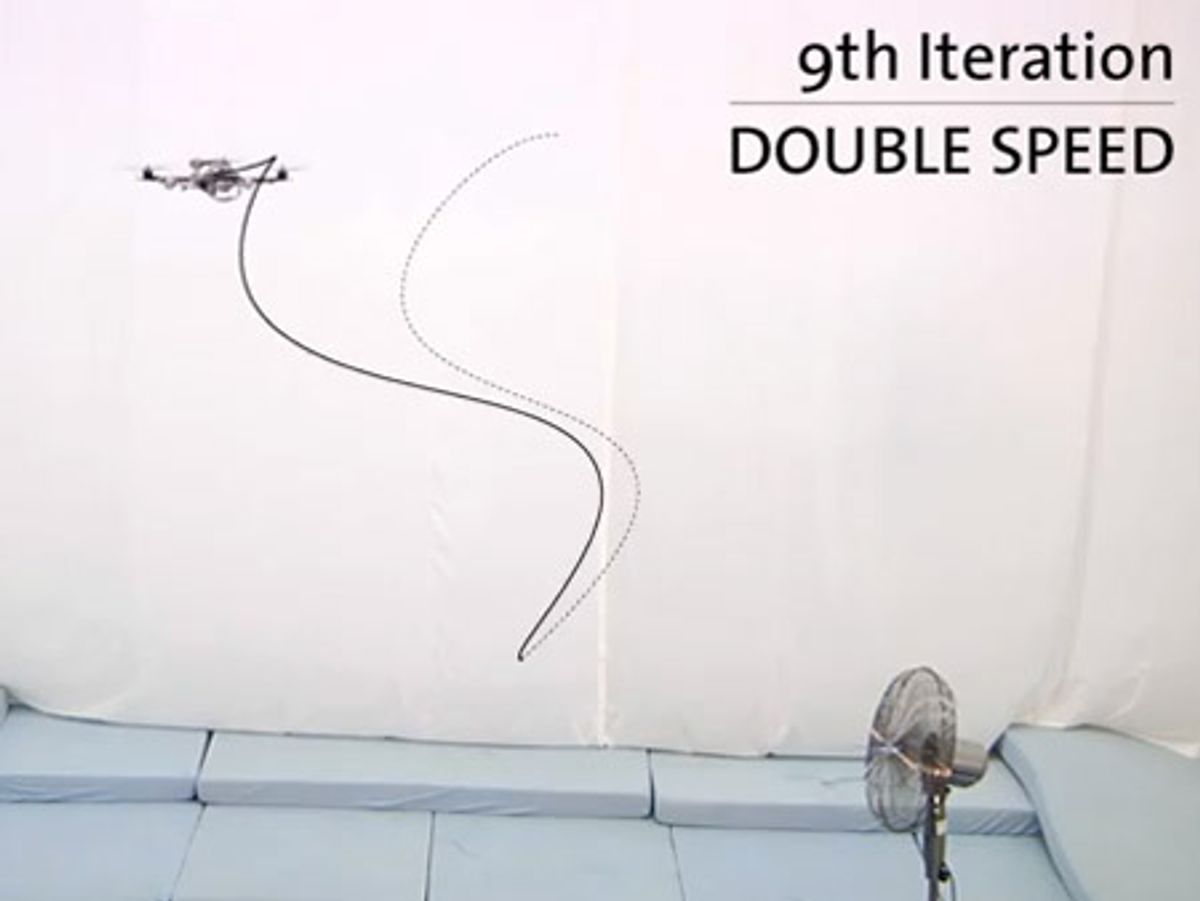

Learning is essentially just the inherent ability to adapt to a new situation, and new situations crop up disturbingly frequently out there in what they call "real life." UAVs, for example, have to deal with annoyances like wind, which has a tendency to blow them off whatever route they're supposed to be taking. While you could certainly program a UAV to follow a specific trajectory, and then program it to account for wind of varying degrees of windishness, it's much easier just to program it to follow a trajectory adaptively, learning to deal with wind (or any other type of disturbance) as it goes. Those crazy quadrotors from the Flying Machine Arena at ETH Zurich demonstrate the concept:

Just like humans, these robots start off being fairly terrible at a given task. Also like humans, they get better quickly. Quite unlike humans, robots never make the same mistake twice, never get tired or bored, and can practice and practice until they perfectly master whatever task they've been assigned. Got a new variable to introduce? No problem! Just add in a few more practice sessions and the robot will figure it out.
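To make the "practice makes perfect" idea a bit more concrete, here's a toy sketch of learning from repeated attempts. It is not the ETH Zurich controller. It's a minimal illustration that assumes a one-dimensional robot whose position is just the running sum of its commands plus a wind push, and it assumes the wind is exactly the same on every attempt. The function names (`run_trial`, `learn`) and the `gain` parameter are made up for this example.

```python
import math


def run_trial(reference, commands, wind):
    """Fly the route once and return the tracking error at every step.

    Assumed toy model: position[t+1] = position[t] + command[t] + wind[t].
    """
    position = reference[0]
    errors = [0.0]  # the robot starts exactly on the route
    for command, gust, target in zip(commands, wind, reference[1:]):
        position += command + gust
        errors.append(target - position)
    return errors


def learn(reference, wind, trials=10, gain=0.5):
    """Repeat the flight, nudging each command using the previous attempt's errors."""
    # First guess: commands that would follow the route perfectly with no wind.
    commands = [b - a for a, b in zip(reference, reference[1:])]
    worst_error_per_trial = []
    for _ in range(trials):
        errors = run_trial(reference, commands, wind)
        worst_error_per_trial.append(max(abs(e) for e in errors))
        # Correct each command by a fraction of how much the error grew at that step.
        commands = [
            command + gain * (e_next - e_prev)
            for command, e_prev, e_next in zip(commands, errors, errors[1:])
        ]
    return commands, worst_error_per_trial


# Hypothetical route and a steady breeze the robot knows nothing about.
route = [math.sin(0.1 * t) for t in range(50)]
breeze = [0.05] * (len(route) - 1)
_, worst = learn(route, breeze)
for attempt, error in enumerate(worst, start=1):
    print(f"attempt {attempt}: worst error {error:.4f}")
```

In this toy setup the robot never models the wind at all. It just keeps whatever correction reduced the error last time, so the worst-case error shrinks with every attempt. Real wind is not nearly this well behaved, which is part of why the real thing takes more than a few dozen lines.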

Eventually, the hope is that robots will be able to figure out new situations completely on their own, without even having to ask a human for help. And since networked robots can learn from the mistakes of other networked robots, all it takes is just a few adventurous non-souls to take the plunge on a given task, and robots everywhere can learn and benefit from whatever mayhem may or may not (but probably will) ensue.

[ ETH Zurich ]

Thanks, Markus!

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.