We're huge fans of the AR Drone, not just because it's dirt cheap and a huge amount of fun, and not just because it's actually being used for serious research, but because we love how Parrot keeps on making it better year after year. At CES last week, they showed us a bunch of upgrades along the path to autonomy, along with their newest toy: a camera-equipped eBee UAV from SenseFly.

Let's start with the AR Drone. Parrot has been working on two hardware add-ons: a 1,500 mAh battery pack that'll give you 50% more flying time, and (much more excitingly) a GPS receiver.

Sadly, the GPS receiver isn't set up to allow full autonomy (yet). At launch, it'll simply record a track of your AR Drone's flight and help keep the drone from drifting, but obviously, there's a lot that Parrot (or other clever blokes) should be able to do with this.

If you're looking for more immediate autonomy, Parrot is also releasing some software called Director's Mode, which gives a little taste:

All those buttons down at the bottom let you direct the drone in different ways, like translating it left and right or forward and backward, or rotating around a point. The idea is that it'll make filming things with the drone's camera a lot smoother (hence "Director's Mode"), but Parrot hinted that more advanced scriptability may be forthcoming.

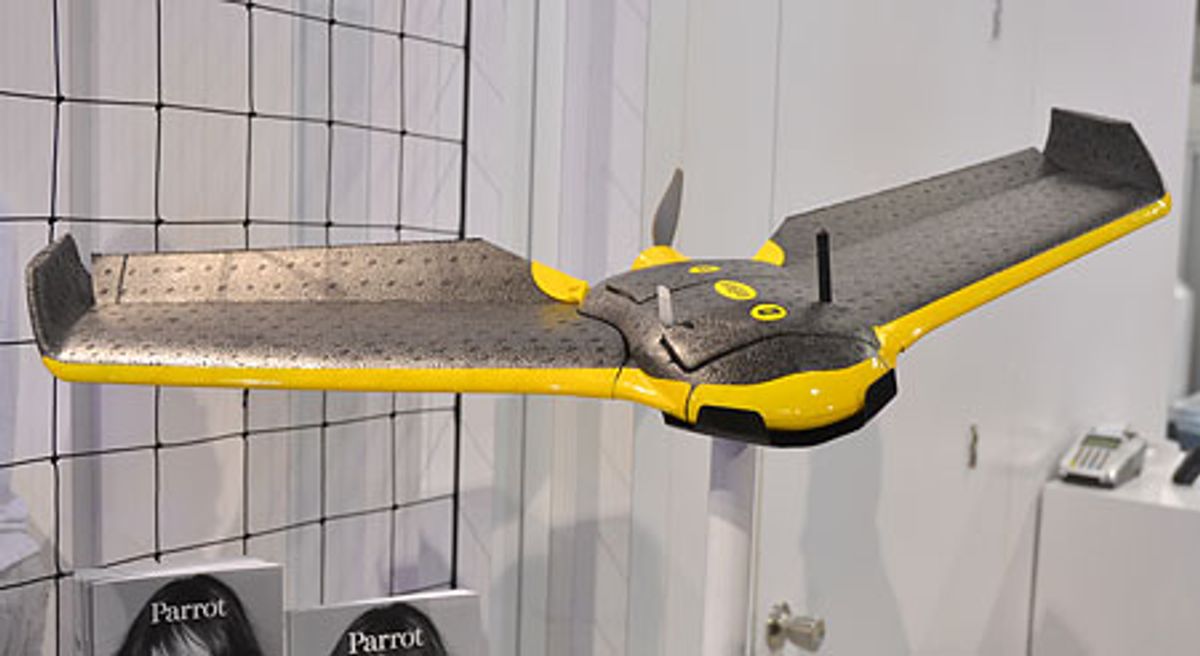

The final new thing that we saw was the eBee, part of Parrot's SenseFly acquisition from earlier this year. The eBee is fully autonomous: it uses an onboard 16-megapixel Canon PowerShot to survey sites and stitch together big georeferenced and orthorectified 3D panoramas.

It's easy to carry around, easy to launch, lands autonomously, does all of the image processing by itself, and can be yours in 2-3 months for a really-not-that-much-if-you-think-about-it $12,000.

[ Parrot AR Drone ]

[ SenseFly eBee ]

Erico Guizzo is the Director of Digital Innovation at IEEE Spectrum, and cofounder of the IEEE Robots Guide, an award-winning interactive site about robotics. He oversees the operation, integration, and new feature development for all digital properties and platforms, including the Spectrum website, newsletters, CMS, editorial workflow systems, and analytics and AI tools. An IEEE Member, he is an electrical engineer by training and has a master’s degree in science writing from MIT.