16 July 2009—T-shirts that can snap photos or carpets that are able to report a buildup of dust may one day be possible, thanks to the creation of a fiber that can detect images. Researchers at the Massachusetts Institute of Technology have created a polymer fiber that can detect the angle, intensity, phase, and wavelength of light hitting it, information that can be used to re-create a picture of an object without a lens.

“Once you have the phase and amplitude of a wave, you can then figure out what the object was that the wave emanated from,” says Yoel Fink, director of MIT’s Photonic Bandgap Fibers and Devices Group.

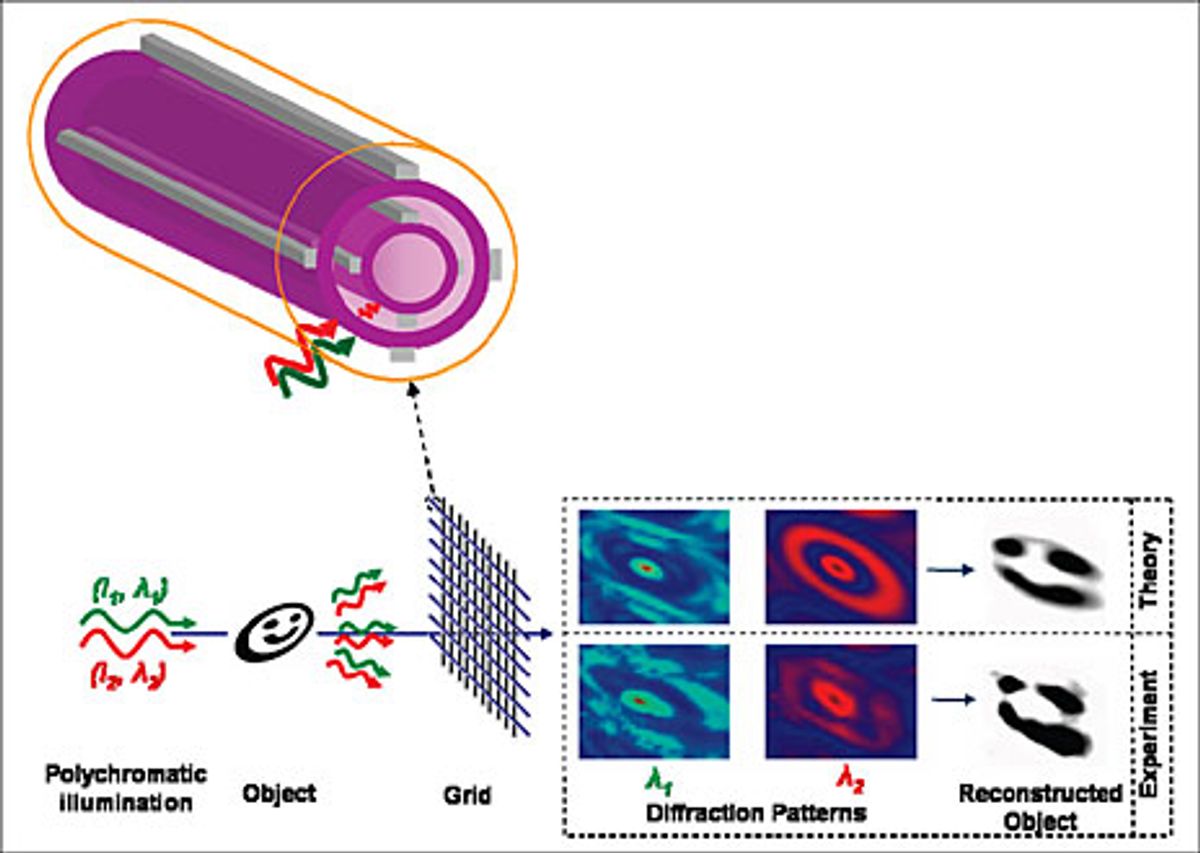

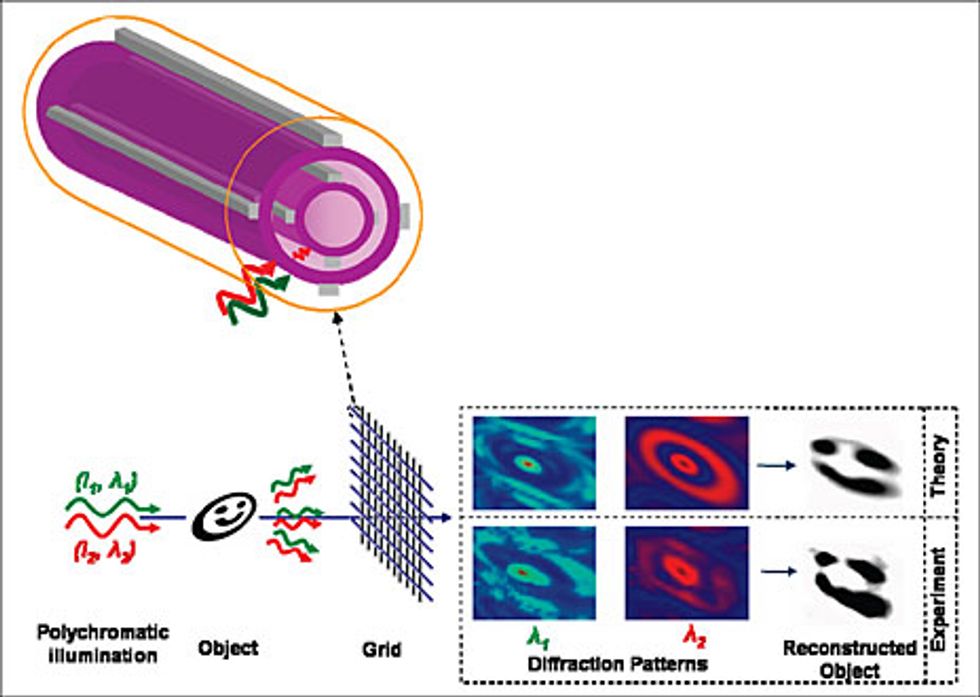

Fink and his team reported in the July issue of Nano Letters that they’d managed to build semiconductor detectors with metal contacts inside a polymer fiber. They then used a grid of those fibers to image an object, specifically a picture of a smiley face. The devices were two concentric tubes of a semiconducting glass, each with four tin contacts serving as electrodes. Each tube was embedded in insulating polyethersulfone. As is usual when creating optical fibers, the researchers started with a thick boule of the materials, which they heated and drew out into a fiber that was just micrometers in diameter.

Illustration: Fink Lab/MIT

A mesh of such fibers captures an image in a less straightforward way than a conventional digital camera does. Light striking the fiber changes the conductivity of the semiconductor, and the size of that change is a measure of the light’s intensity. Because each of the eight electrodes measures a different change, the researchers can extract information about the angle of illumination. Because there are two layers of semiconductor, the device can deduce the wavelength as well: The first layer absorbs a certain percentage of the light and the second layer absorbs another fraction, with the proportion that each absorbs depending on the wavelength. Further, by using two wavelengths, the researchers can calculate the phase of the light. Given all of that information, a computer algorithm can reconstruct the image.
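To see how two stacked layers could separate wavelength from intensity, consider the rough Python sketch below. It is not the MIT team's method. It assumes simple exponential (Beer-Lambert) absorption, two layers of equal thickness, and a made-up absorption curve that falls steadily with wavelength. Every constant and function name is hypothetical.

```python
import math

# All values below are illustrative assumptions, not figures from the paper.
ALPHA_REF = 5.0        # assumed absorption coefficient at LAMBDA_REF, per micrometer
LAMBDA_REF = 500.0     # assumed reference wavelength, nanometers
DECAY_NM = 100.0       # assumed scale over which absorption falls off, nanometers
THICKNESS_UM = 0.2     # assumed thickness of each semiconductor layer, micrometers


def absorption_coefficient(wavelength_nm):
    """Hypothetical absorption curve: weaker absorption at longer wavelengths."""
    return ALPHA_REF * math.exp(-(wavelength_nm - LAMBDA_REF) / DECAY_NM)


def layer_signals(intensity, wavelength_nm):
    """Light absorbed by the outer layer, then by the inner layer behind it."""
    transmitted = math.exp(-absorption_coefficient(wavelength_nm) * THICKNESS_UM)
    outer = intensity * (1.0 - transmitted)
    inner = intensity * transmitted * (1.0 - transmitted)
    return outer, inner


def estimate_light(outer, inner):
    """Recover (intensity, wavelength_nm) from the two layer signals."""
    # The ratio cancels the unknown intensity and leaves only the
    # wavelength-dependent transmission of a single layer.
    transmitted = inner / outer
    alpha = -math.log(transmitted) / THICKNESS_UM
    wavelength_nm = LAMBDA_REF - DECAY_NM * math.log(alpha / ALPHA_REF)
    intensity = outer / (1.0 - transmitted)
    return intensity, wavelength_nm


# Round trip: simulate the two signals, then recover what produced them.
outer_signal, inner_signal = layer_signals(intensity=2.5, wavelength_nm=620.0)
print(estimate_light(outer_signal, inner_signal))
```

The point of the sketch is that the ratio of the two signals does not depend on how bright the light is, so it can be mapped to a wavelength first and the intensity worked out afterward. A real device would need a measured absorption curve in place of the invented one here.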

Fink compares this process to using a conventional digital camera but removing the lens and using only the imaging chip to collect the light. Such a setup would produce a diffraction pattern rather than an image. In standard photography, the lens provides the phase information needed to turn the diffraction pattern into an image, whereas with the fibers, it’s the algorithm that essentially does the focusing.
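The idea of software doing the focusing can be illustrated with a toy one-dimensional example. The article does not describe the team's algorithm, so the sketch below swaps in a textbook technique, numerical back-propagation: Each detector sample's amplitude and phase is multiplied by the conjugate of the phase the light would pick up on its way from a candidate object point, and the results are summed. The geometry, units, and function names are all assumptions chosen for illustration.

```python
import cmath
import math

# Illustrative geometry in arbitrary length units (assumed, not the experiment's).
WAVELENGTH = 1.0
DISTANCE = 1000.0                      # object plane to detector plane
WAVENUMBER = 2.0 * math.pi / WAVELENGTH

detector_x = [2.0 * (j - 31.5) for j in range(64)]   # 64 detector samples
object_x = [10.0 * (k - 10) for k in range(21)]      # 21 candidate object points


def path_length(x, u):
    """Straight-line distance from object point u to detector point x."""
    return math.hypot(DISTANCE, x - u)


def propagate(object_amplitudes):
    """Complex field (amplitude and phase) arriving at each detector sample."""
    field = []
    for x in detector_x:
        total = 0j
        for u, amplitude in zip(object_x, object_amplitudes):
            r = path_length(x, u)
            total += amplitude * cmath.exp(1j * WAVENUMBER * r) / r
        field.append(total)
    return field


def back_propagate(field):
    """Undo the propagation phase in software to estimate object brightness."""
    image = []
    for u in object_x:
        total = 0j
        for x, sample in zip(detector_x, field):
            total += sample * cmath.exp(-1j * WAVENUMBER * path_length(x, u))
        image.append(abs(total))
    return image


# A single bright point at index 14 of the object grid.
scene = [0.0] * len(object_x)
scene[14] = 1.0
image = back_propagate(propagate(scene))
print(image.index(max(image)))
```

With the phase known, the contributions from every detector sample line up only at the object point the light actually came from, which is the job a lens would otherwise do optically.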

What’s more, Fink says, if the team adds a third layer of semiconductor, the fiber would likely gather enough wavelength information to reproduce color. He says the team has not yet verified that experimentally.

In fact, he says the camera’s main significance is that it contains eight separate functional devices inside a fiber. The challenge was finding the right combination of different materials with different characteristics, such as viscosity and tensile strength, and assembling them in such a way that they would maintain their geometry during the drawing process. “This is all part of a grander scheme,” Fink says. “Our goal is to achieve a very high level of sophisticated functionality in a fiber, similar to semiconductor devices but using fiber-draw techniques.”

Juan Hinestroza, director of the Textiles Nanotechnology Laboratory at Cornell University, calls Fink’s work “pretty cool.”

“The ability to impart a fiber with more functions than structural or cosmetic [ones] is very interesting,” Hinestroza says.

About the Author

Neil Savage writes about technology from Lowell, Mass. In May 2009, he reported on efforts to use evolutionary algorithms to design future transistors.