Optical atomic clocks will likely redefine the international standard for measuring a second in time. They are far more accurate and stable than the current standard, which is based on microwave atomic clocks.

Now, researchers in the United States have figured out how to convert high-performance signals from optical clocks into a microwave signal that can more easily find practical use in modern electronic systems.

Synchronizing modern electronic systems such as the Internet and GPS navigation is currently done using microwave atomic clocks that measure time based on the frequency of natural vibrations of cesium atoms. Those vibrations occur at microwave frequencies that can easily be used in electronic systems.

But newer optical atomic clocks are based on atoms such as ytterbium and strontium, which vibrate at much higher frequencies and generate optical signals. Such signals must be converted to microwave signals before electronic systems can readily make use of them.

“How do we preserve that timing from this optical to electronic interface?” says Franklyn Quinlan, a lead researcher in the optical frequency measurements group at the U.S. National Institute of Standards and Technology (NIST). “That has been the big piece that really made this new research work.”

By comparing two optical-to-electronic signal generators based on the output of two optical clocks, Quinlan and his colleagues created a 10-gigahertz microwave signal that synchronizes with the ticking of an optical clock. Their highly precise method has an error of just one part in a quintillion (a one followed by 18 zeros). The new development and its implications for scientific research and engineering are described in the 22 May issue of the journal Science.
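
To put one part in a quintillion in perspective, the arithmetic can be sketched in a few lines of Python. This is an illustrative calculation, not the team's analysis: it assumes the quoted error acts as a constant fractional frequency offset, and the function names are hypothetical.

```python
# Illustrative arithmetic only. The 10 GHz carrier and the 1e-18 fractional
# error come from the article; treating the error as a constant fractional
# frequency offset is an assumption, and the helper names are hypothetical.
from fractions import Fraction

CARRIER_HZ = 10 * 10**9                   # 10-gigahertz microwave signal
FRACTIONAL_ERROR = Fraction(1, 10**18)    # one part in a quintillion
SECONDS_PER_YEAR = 365 * 24 * 3600


def frequency_offset_hz(carrier_hz, fractional_error):
    """Absolute frequency error implied by a fractional error."""
    return carrier_hz * fractional_error


def accumulated_time_error_s(elapsed_s, fractional_error):
    """Timing error built up over elapsed_s at a constant fractional offset."""
    return elapsed_s * fractional_error


offset = frequency_offset_hz(CARRIER_HZ, FRACTIONAL_ERROR)
drift = accumulated_time_error_s(SECONDS_PER_YEAR, FRACTIONAL_ERROR)

print(f"frequency offset: {float(offset):.1e} Hz")
print(f"drift after one year: {float(drift):.3e} s")
```

Under those assumptions, the 10-gigahertz signal would be off by about 10 nanohertz, and a clock running on it would drift by roughly 32 picoseconds over a year.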

The improvement comes as many researchers expect the international standard that defines a second in time—the Système International (SI)—to switch over to optical clocks. Today’s cesium-based atomic clocks require a month-long averaging process to achieve the same frequency stability that an optical clock can achieve in seconds.

“Because optical clocks have achieved unprecedented levels of accuracy and stability, linking the frequencies provided by these optical standards with distantly located devices would allow direct calibration of microwave clocks to the future optical SI second,” wrote Anne Curtis, a senior research scientist at the National Physical Laboratory in the United Kingdom, in an accompanying article. Curtis was not involved in the research.

Optical clocks can already be linked together physically through fiber-optic networks, but this approach still limits their usage in many electronic systems. The new achievement by the U.S. research team—with members from NIST, the University of Colorado-Boulder, and the University of Virginia in Charlottesville—could remove such limitations by combining the performance of optical clocks with microwave signals that can travel in areas without a fiber-optic network.

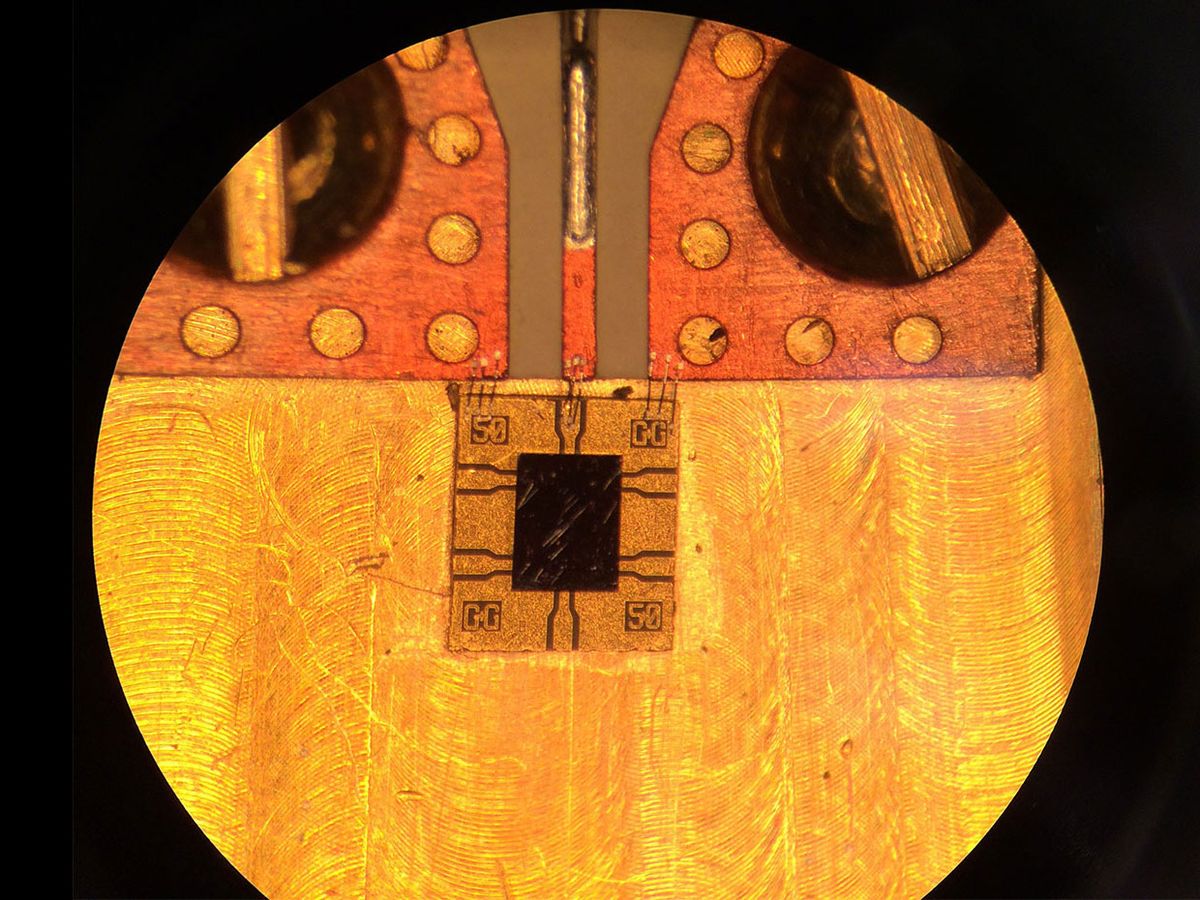

For its demonstration, the team built its own version of an optical frequency comb, a pulsed-laser device that emits very brief light pulses at a steady repetition rate. Viewed in terms of frequency, the pulse train resembles “a comb of evenly spaced frequencies or tones spanning the optical regime,” Curtis explains. Modern optical frequency combs were first developed 20 years ago and have played a starring role in both fundamental research experiments and various technological systems since that time.

By measuring the optical beats between a single comb tone and an unknown optical frequency, researchers knew they should be able to directly link faster optical frequencies to slower microwave frequencies. Doing that required a photodetector developed by researchers at the University of Virginia to carry out the optical-to-microwave conversion and generate an electrical signal. The team also wrote its own software for off-the-shelf digital sampling hardware to help digitize and extract the phase information from the optical clocks.
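
The link between a comb tone and an optical frequency follows the standard frequency-comb relation, in which tone number n sits at n times the repetition rate plus an offset frequency. The Python sketch below works through that relation with numbers chosen purely for illustration. The article does not give the team's repetition rate, offset, or beat frequencies, so every constant and function name here is hypothetical.

```python
# A sketch of the textbook comb relation f_n = n * f_rep + f_ceo.
# All values below are made up for illustration, in integer hertz.

F_REP_HZ = 250_000_000            # hypothetical repetition rate (250 MHz)
F_CEO_HZ = 20_000_000             # hypothetical comb offset frequency (20 MHz)
F_OPT_HZ = 518_300_055_000_000    # hypothetical optical frequency (~518.3 THz)


def comb_tone_hz(n, f_rep_hz, f_ceo_hz):
    """Frequency of comb tone number n."""
    return n * f_rep_hz + f_ceo_hz


def nearest_tone_index(f_opt_hz, f_rep_hz, f_ceo_hz):
    """Index of the comb tone closest to f_opt_hz (integer rounding)."""
    return (2 * (f_opt_hz - f_ceo_hz) + f_rep_hz) // (2 * f_rep_hz)


n = nearest_tone_index(F_OPT_HZ, F_REP_HZ, F_CEO_HZ)
tone = comb_tone_hz(n, F_REP_HZ, F_CEO_HZ)

# The beat is the radio-frequency difference a photodetector would see.
beat = F_OPT_HZ - tone

# Knowing n, f_rep, f_ceo and the beat recovers the optical frequency.
recovered = tone + beat

# A harmonic of the repetition rate gives a microwave tone.
microwave_hz = 40 * F_REP_HZ

print(n, beat, recovered == F_OPT_HZ, microwave_hz)
```

In this made-up example, a gap of hundreds of terahertz is bridged by counting comb tones, and the 40th harmonic of the repetition rate lands at 10 gigahertz. Whether the team's comb used these particular ratios is not stated in the article.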

“The piece that has lagged a bit is the high-fidelity conversion of optical pulses to microwave signals with the optical-to-electrical converter,” Quinlan says. “So if you have pulses where you know the timing to within a femtosecond (one quadrillionth of a second), how do you convert those photons to electrons while maintaining that level of timing stability? That has taken a lot of effort and work to understand how to do that really well.”

The researchers didn’t quite reach their original benchmark for minimizing the potential instability and errors in the microwave signals synchronized with the optical clocks. But even with the current performance, Quinlan and his colleagues realized: “Okay, great, that'll support current and next-generation optical clocks.”

Curtis describes the improved capability to synchronize microwave signals with optical clock signals as a “paradigm shift” that will impact “fundamental physics, communication, navigation, and microwave engineering.” One of the most immediate applications could involve higher-accuracy Doppler radar systems used in navigation and tracking. A more stable microwave signal can help radar detect even smaller frequency shifts that could, for example, better distinguish slow-moving objects from the background noise of stationary objects.
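
The connection between frequency stability and slow-moving targets can be seen in the basic Doppler relation for a radar that transmits and receives from the same place: the shift is twice the target's radial speed times the carrier frequency, divided by the speed of light. The Python sketch below uses that non-relativistic approximation. The 10-gigahertz carrier matches the signal in the article, but the target speed, the frequency resolution, and the function names are assumptions for illustration only.

```python
# Doppler arithmetic for a monostatic radar (non-relativistic approximation).
# Target speed and frequency resolution are hypothetical example values.

SPEED_OF_LIGHT_M_PER_S = 299_792_458


def doppler_shift_hz(radial_speed_m_per_s, carrier_hz):
    """Two-way Doppler shift for a target moving along the line of sight."""
    return 2 * radial_speed_m_per_s * carrier_hz / SPEED_OF_LIGHT_M_PER_S


def speed_from_shift_m_per_s(shift_hz, carrier_hz):
    """Radial speed that would produce the given Doppler shift."""
    return shift_hz * SPEED_OF_LIGHT_M_PER_S / (2 * carrier_hz)


CARRIER_HZ = 10e9

# A target moving at walking pace, 1 meter per second.
walking_shift = doppler_shift_hz(1.0, CARRIER_HZ)

# Slowest target distinguishable from a stationary one if the radar can
# resolve a 1-millihertz shift (a hypothetical figure).
slowest_speed = speed_from_shift_m_per_s(1e-3, CARRIER_HZ)

print(f"shift at 1 m/s: {walking_shift:.2f} Hz")
print(f"speed for a 1 mHz shift: {slowest_speed:.2e} m/s")
```

A walking-pace target shifts a 10-gigahertz carrier by only tens of hertz, so any jitter in the reference signal directly limits how slow a target the radar can separate from stationary clutter.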

Future space telescopes based on very-long-baseline interferometry (VLBI) could also benefit from the highly stable microwave signals synchronized with optical clocks. Today’s ground-based VLBI telescopes use receiver devices spread across the globe to detect microwave and millimeter-wave signals and combine them into high-resolution images of cosmic objects such as black holes. A similar VLBI telescope located in space could boost the imaging resolution while avoiding the Earth’s atmospheric distortions that interfere with astronomers’ observations. In that scenario, having optical-clock-level stability to synchronize all the signals received by the VLBI telescope could improve observation time from seconds to hours.

“Essentially you’re collecting signals from multiple receivers and you need to time-stamp those signals to combine them in a meaningful way,” Quinlan says. “Right now the atmosphere distorts the signal enough so that [it] is a limitation rather than the time-stamping from a stable clock, but if you get away from atmospheric distortions, you could do much better and then you can utilize a much more stable clock.”

There is still more work to be done before more electronic systems can take advantage of such optical-to-microwave conversion. For one thing, the sheer size of optical clocks means that nobody should expect a mobile device to have a tiny optical clock inside anytime soon. In the team’s latest research, their optical atomic clock setup occupied a lab table about 32 square feet in size (almost 3 square meters).

“Some of my coauthors on this effort led by Andrew Ludlow at NIST, as well as other folks around the world, are working to make this much more compact and mobile so that we can kind of have optical-clock-level performance on mobile platforms,” Quinlan says.

Another approach that could bypass the need for miniature optical clocks involves figuring out whether microwave transmissions could maintain the stability of the optical clock performance when transmitted across large distances. If this works, stable microwave transmissions could wirelessly synchronize performance across multiple mobile devices.

At the moment, optical clocks can be linked only through either fiber-optic cables or lasers beamed through the air. The latter often becomes ineffective in bad weather. But the team plans to explore the beaming possibility further with microwaves, especially after its initial success and with support from both NIST and the Defense Advanced Research Projects Agency.

“What would be great is if we had a microwave link that basically maintains the stability of the optical signal but can then be transmitted on a microwave carrier that doesn't suffer from rainy days and from dusty conditions,” Quinlan says. “But it's still yet to be determined whether or not such a link could actually maintain the stability of the optical clock on a microwave carrier.”

Jeremy Hsu has been working as a science and technology journalist in New York City since 2008. He has written on subjects as diverse as supercomputing and wearable electronics for IEEE Spectrum. When he’s not trying to wrap his head around the latest quantum computing news for Spectrum, he also contributes to a variety of publications such as Scientific American, Discover, Popular Science, and others. He is a graduate of New York University’s Science, Health & Environmental Reporting Program.