In-hand manipulation is one of those things that’s fairly high on the list of “skills that are effortless for humans but extraordinarily difficult for robots.” Without even really thinking about it, we’re able to adaptively coordinate four fingers and a thumb with our palm and friction and gravity to move things around in one hand without using our other hand—you’ve probably done this a handful (heh) of times today already, just with your cellphone.

It takes us humans years of practice to figure out how to do in-hand manipulation robustly, but robots don’t have that kind of time. Learning through practice and experience is still the way to go for complex tasks like this, and the challenge is finding a way to learn faster and more efficiently than just giving a robot hand something to manipulate over and over until it learns what works and what doesn’t, which would probably take about a hundred years.

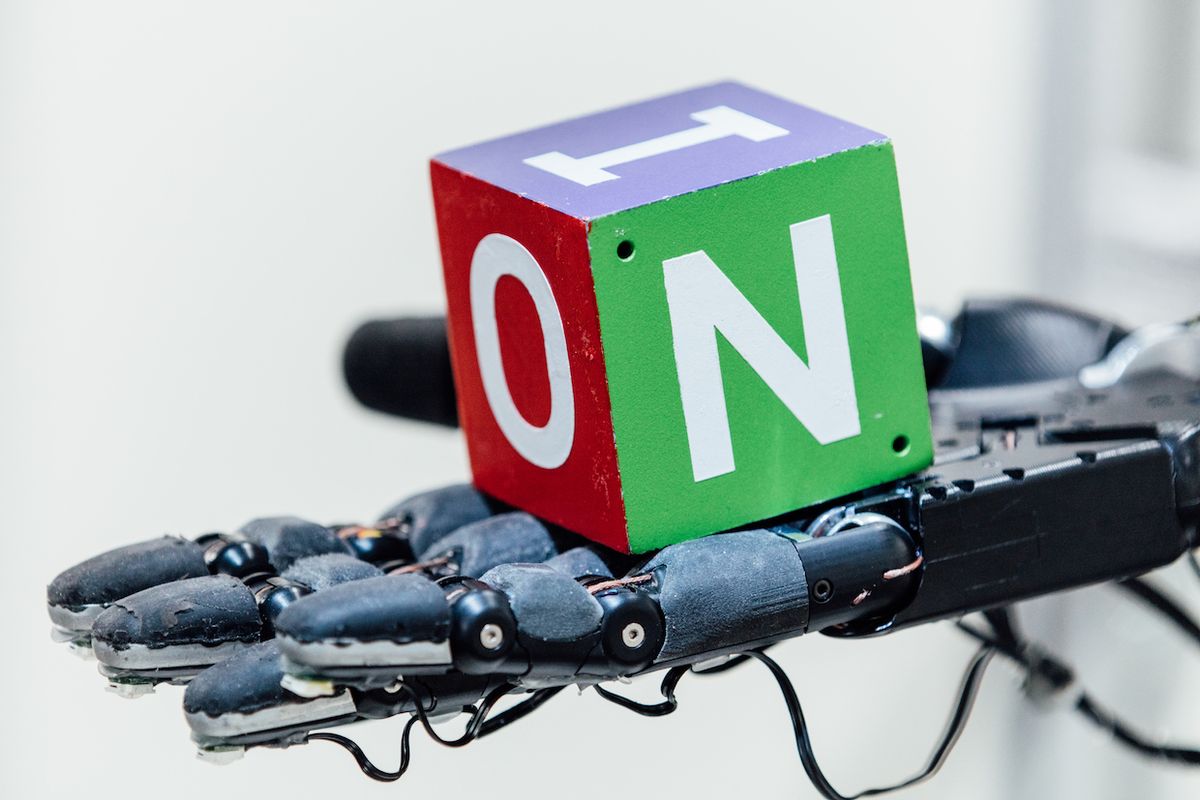

Rather than wait a hundred years, researchers at OpenAI have used reinforcement learning to train neural networks that control a five-fingered Shadow hand as it manipulates objects, all in just 50 hours. They’ve managed this by training in simulation, a technique that is notoriously “doomed to succeed.” But because they carefully randomized the simulation to better match real-world variability, a real Shadow hand was able to perform in-hand manipulation on real objects without any retraining at all.

Ideally, all robots would be trained in simulation, because simulation is something that can be scaled without having to build more physical robots. Want to train a bajillion robots for a bajillion hours in one bajillionth of a second? You can do it, with enough computing power. But try to do that in the real world, and the fact that nobody knows exactly how much a bajillion is will be the least of your problems.

The issue with using simulation to train robots is that the real world is impossible to precisely simulate, and it’s even more impossible to precisely simulate when it comes to thorny little things like friction and compliance and object-object interaction. So the accepted state of things has always been that simulation is nice, but that there’s a big scary step between simulation success and real world success that somewhat diminishes the value of the simulation in the first place. It doesn’t help that the things it would be really helpful to simulate (like in-hand manipulation) are also the things that tend to be the most difficult to simulate accurately, because of how physically finicky they are.

A common approach to this problem is to try to make the simulation as accurate as possible, in the hope that it’ll be close enough to the real world that you’ll be able to get some useful behaviors out of it. OpenAI is instead making accuracy secondary to variability, giving its moderately realistic simulations a bunch of slightly different tweaks with the goal of making the behaviors that they train robust enough to function outside of simulation as well.

The randomization process is the key to what allows the system (called Dactyl) to transition effectively from simulation to the real world. To reiterate, OpenAI is well aware that the simulations it’s using aren’t nearly complex enough to accurately model a huge pile of important things, from friction to the way that the fingertips of a real robot hand wear over time. To get the robot to generalize what it’s learning, OpenAI randomizes as many aspects of the simulation as it can, in an attempt to cover all of the variability that can’t be modeled very well. This includes the mass and dimensions of the object, the friction of both the object’s surface and the robot’s fingertips, how well the robot’s joints are damped, actuator forces, joint limits, motor backlash and noise, and more. Small random forces are also applied to the object to account for additional unmodeled dynamics. And that’s just the manipulation. There’s just as much variability in the way the vision system was trained to estimate the object’s pose from RGB camera images, which is a bit easier to visualize.

OpenAI calls this “domain randomization,” and with in-hand manipulation, OpenAI says, “we wanted to see if scaling up domain randomization could solve a task well beyond the reach of current methods in robotics.” Here’s how it went, with two independently trained networks (one for vision and one for manipulation) visually detecting the pose of the cube and then manipulating it into different orientations.
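As a rough illustration of what per-episode domain randomization can look like in code, here is a minimal Python sketch. Every parameter name and numeric range in it is a made-up assumption for illustration, not a value from OpenAI’s paper or code. The idea is simply that each training episode runs in a freshly sampled version of the physics, and that occasional small pushes on the object stand in for dynamics the simulator doesn’t model.

```python
import random

# Hypothetical ranges chosen for illustration; they are NOT OpenAI's values.
# Each entry is (low, high) for a uniform draw.
PHYSICS_RANGES = {
    "object_mass_kg": (0.06, 0.10),
    "object_size_m": (0.0545, 0.0555),   # narrow: size is easy to measure
    "object_friction": (0.5, 1.5),
    "fingertip_friction": (0.5, 1.5),
    "joint_damping": (0.3, 3.0),
    "actuator_force_scale": (0.75, 1.5),
    "joint_limit_offset_rad": (-0.05, 0.05),
    "backlash_rad": (0.0, 0.02),
    "action_noise_std": (0.0, 0.05),
}


def sample_physics(rng: random.Random) -> dict:
    """Draw one randomized simulated 'world' at the start of an episode."""
    return {name: rng.uniform(lo, hi) for name, (lo, hi) in PHYSICS_RANGES.items()}


def random_object_force(rng: random.Random, prob: float = 0.1,
                        scale_n: float = 0.05) -> tuple:
    """With probability `prob`, return a small random 3D force (newtons) to
    push the object; otherwise return zero force."""
    if rng.random() >= prob:
        return (0.0, 0.0, 0.0)
    return tuple(rng.gauss(0.0, scale_n) for _ in range(3))


rng = random.Random(0)
physics = sample_physics(rng)      # called once per episode reset
force = random_object_force(rng)   # called once per simulation step
```

A training loop would pass both values to whatever simulator is in use; no particular simulator API is assumed here.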

These cube manipulations (the system can do as many as 50 in a row successfully) are the result of 6,144 CPU cores and 8 GPUs collecting 100 years of simulated robot experience in 50 hours. The only feedback that the system gets (both in simulation and IRL) is the position and orientation of the cube and the positions of the hand’s fingertips, and the system didn’t start out with any particular notions about how a cube should be grasped or manipulated. It had to discover everything by itself, from scratch, including finger pivoting, multi-finger coordination, controlled use of gravity, and coordinated force application. It managed to come up with many of the same techniques that humans use, with some minor (and interesting) modifications:

> For precision grasps, our policy tends to use the little finger instead of the index or middle finger. This may be because the little finger of the Shadow Dexterous Hand has an extra degree of freedom compared to the index, middle and ring fingers, making it more dexterous. In humans the index and middle finger are typically more dexterous. This means that our system can rediscover grasps found in humans, but adapt them to better fit the limitations and abilities of its own body.

> We observe another interesting parallel between humans and our policy in finger pivoting, which is a strategy in which an object is held between two fingers and rotated around the axis between them. It was found that young children have not yet fully developed their motor skills and therefore tend to rotate objects using the proximal or middle phalanges of a finger. Only later in their lives do they switch to primarily using the distal phalanx, which is the dominant strategy found in adults. It is interesting that our policy also typically relies on the distal phalanx for finger pivoting.

The upshot of all of this is that it turns out you can, in fact, train robots to do complex physical things in simulation and then immediately use those skills outside of simulation. That’s a big deal, because training in simulation is much, much faster than training in the real world.
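For a sense of scale, the figures quoted above (100 years of simulated experience collected in 50 hours on 6,144 CPU cores) work out as follows. This is back-of-the-envelope arithmetic that assumes an average 365.25-day year, ignores the GPUs, and treats the experience as spread evenly across the cores; the per-core figure is an inference, not a number OpenAI reported.

```python
HOURS_PER_YEAR = 365.25 * 24            # average year, counting leap days
simulated_hours = 100 * HOURS_PER_YEAR  # "100 years of simulated experience"
wall_clock_hours = 50
cpu_cores = 6144

# Simulated time gathered per unit of wall-clock time, across the cluster.
speedup = simulated_hours / wall_clock_hours
# Rough per-core rate, assuming experience was spread evenly over the cores.
per_core = speedup / cpu_cores

print(f"{speedup:,.0f}x real time overall, about {per_core:.1f}x per core")
# prints: 17,532x real time overall, about 2.9x per core
```

Put differently, no single simulated hand needs to run dramatically faster than real time. The gain comes mostly from running thousands of them at once.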

For more details, we spoke with Jonas Schneider, member of the technical staff at OpenAI, via email.

IEEE Spectrum: Why is in-hand manipulation such a difficult problem for robots?

Jonas Schneider: All of the manipulation happens in a very compact space, using a robot with a large number of degrees of freedom. Successful manipulation strategies require that all these degrees of freedom are properly coordinated, so there’s a lower margin for error than for simpler types of interactions such as grasping. In-hand manipulation also results in a lot of contacts being made with the object. Modeling these contacts is very hard and error-prone. Errors during execution have to be corrected as the policy runs, which many traditional planning-based approaches struggle with, for example because they may rely only on linear feedback, which doesn’t capture nonlinear contact dynamics.

It sounds like randomness is key to making policies learned in simulation robust in the real world. How do you decide what things to randomize, and how much to randomize them?

We do calibration to see what the “right” physics parameters are at a rough level, and we figure out which parameters are the most important to replicate the same behavior in sim. We then set those parameters to the calibrated value and randomize around that mean. The range of the randomizations depends on our certainty: For example, the object size is only slightly randomized because we can measure it accurately.
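One simple way to turn “randomize around the calibrated mean, with a range set by our certainty” into code is to give each parameter a relative uncertainty and sample uniformly within that band. The sketch below assumes uniform sampling, and its calibration values and uncertainties are invented for illustration; they are not OpenAI’s numbers.

```python
import random


def randomization_range(calibrated: float, rel_uncertainty: float) -> tuple:
    """Uniform range centered on a calibrated value. rel_uncertainty is the
    +/- fraction we are unsure by, so well-measured parameters get narrow ranges."""
    half_width = abs(calibrated) * rel_uncertainty
    return (calibrated - half_width, calibrated + half_width)


# Hypothetical calibration results: (calibrated mean, relative uncertainty).
CALIBRATION = {
    "object_size_m": (0.055, 0.01),     # measured directly: tight band
    "joint_damping": (0.8, 0.3),
    "fingertip_friction": (1.0, 0.5),   # hard to pin down, changes with wear
}

RANGES = {name: randomization_range(mean, unc)
          for name, (mean, unc) in CALIBRATION.items()}


def sample_parameters(rng: random.Random) -> dict:
    """Draw one set of physics parameters for a training episode."""
    return {name: rng.uniform(lo, hi) for name, (lo, hi) in RANGES.items()}
```

Widening a band, as Schneider later suggests for block size, amounts to raising that parameter’s relative uncertainty.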

Some of the randomizations are based on empirical observations. For example, we saw that our policy would sometimes drop the object by lowering the wrist and not lifting it up again in time before the object would roll off. We discovered that due to issues with the low-level controller, the execution of our actions would sometimes be delayed by up to a few hundred milliseconds. While we could have spent the effort to make the controller have entirely deterministic timing, we instead opted to just randomize the duration of each controller timestep in simulation as well. On a higher level, we think this could be an interesting approach for future robot design and engineering; for some problems and applications, designing precise hardware might be prohibitively expensive, but we show these kinds of hardware deficiencies can be corrected using more capable algorithms.
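The timing fix Schneider describes, randomizing how long each controller timestep lasts instead of making the real controller deterministic, might look something like the minimal sketch below. The 80 ms nominal step, the 2 ms physics substep, and the 10 percent chance of an extra lag of up to 300 ms are all assumptions for illustration; `policy` and `sim_step` are hypothetical stand-ins for a trained network and a simulator.

```python
import random

PHYSICS_DT = 0.002          # seconds per physics substep (assumed)
NOMINAL_CONTROL_DT = 0.08   # seconds per control step (assumed)


def sample_control_duration(rng: random.Random, p_delay: float = 0.1,
                            max_delay: float = 0.3) -> float:
    """Nominal control period, occasionally stretched by a random lag,
    mimicking a low-level controller whose timing isn't deterministic."""
    extra = rng.uniform(0.0, max_delay) if rng.random() < p_delay else 0.0
    return NOMINAL_CONTROL_DT + extra


def substeps_for(duration: float) -> int:
    """Number of physics substeps an action is held for."""
    return max(1, round(duration / PHYSICS_DT))


def rollout(policy, sim_step, obs, n_control_steps: int, rng: random.Random):
    """Run the policy, holding each action for a randomized number of substeps."""
    for _ in range(n_control_steps):
        action = policy(obs)
        for _ in range(substeps_for(sample_control_duration(rng))):
            obs = sim_step(obs, action, PHYSICS_DT)
    return obs


# Toy usage: the "simulator" only advances a clock, so the result is the
# total simulated time, which varies from run to run because of the lag.
elapsed = rollout(lambda obs: 0.0, lambda obs, a, dt: obs + dt, 0.0,
                  n_control_steps=5, rng=random.Random(0))
```

A policy trained this way never gets to assume its last command took effect on schedule, which is the property that helped with the dropped-object failure.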

How much would you expect your results to improve if you used (say) 1000 years of simulated experience instead of 100?

On the concrete task, this is somewhat tough to say because we never tested beyond 50 rotations. It’s not clear what the asymptotic performance curve looks like, but we consider the project pretty much completed, since even achieving one rotation is so far beyond what current state-of-the-art methods can do. We initially chose 50 because we thought 25 would be enough to clearly demonstrate that we’d solved it, and then we added a 100% safety margin :). If you wanted to optimize for really, really long sequences and high reliability, increasing training time would probably help. Though at some point, we’d even expect the policy to overfit to simulation and perform worse in the real world, even with many randomizations; at that point you’d actually have to add more randomizations to make the simulation harder to solve again, which in turn increases the robustness of the resulting policy.

How well can your results be generalized? For example, how much retraining would be required to manipulate a smaller cube, or a cube that was squishy or slippery? What about using a different arrangement of cameras?

We actually tried manipulating a squishy and slightly smaller foam block using the same policy just for fun, and the performance doesn’t differ significantly from that on the entirely solid block. We also ran experiments with different-sized blocks in simulation (here are some tiny and giant ones), where we retrained on the new setting, which worked equally well (we haven’t tried this on the real robot, though). We also randomize the object size in training as one of the randomizations. We haven’t tried this, but I think it’s pretty likely we could increase the range of the block size randomization significantly and then be able to manipulate different-sized blocks with the same policy.

Regarding the cameras, the vision model is trained separately, and right now we only randomize the camera positions within a small margin, so we retrain whenever we move cameras. One of our summer interns, Hsiao-Yu Fish Tung, is actually working on making the vision model fully invariant to camera placement using the same basic technique of randomizing the camera position and orientation over a wide range.
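Randomizing camera placement over a wide range could be sketched as sampling a camera position anywhere on a spherical shell above the hand, aiming it at the hand, and adding a little orientation noise. This is an illustrative guess at the general technique, not Hsiao-Yu Fish Tung’s actual method; the shell radii, the upper-hemisphere restriction, and the yaw/pitch/roll convention are all assumptions.

```python
import math
import random


def sample_camera_pose(rng: random.Random, target=(0.0, 0.0, 0.0),
                       radius_range=(0.3, 0.6), max_jitter_rad=0.05):
    """Return (position, (yaw, pitch, roll)) for a camera placed at random
    on a spherical shell above `target` and aimed at it, plus small noise."""
    # Uniform random direction on the upper hemisphere: z uniform in [0, 1]
    # and azimuth uniform in [0, 2*pi) covers the surface evenly.
    z = rng.uniform(0.0, 1.0)
    azimuth = rng.uniform(0.0, 2.0 * math.pi)
    s = math.sqrt(1.0 - z * z)
    direction = (s * math.cos(azimuth), s * math.sin(azimuth), z)
    radius = rng.uniform(*radius_range)
    position = tuple(t + radius * d for t, d in zip(target, direction))

    # Yaw and pitch that point the camera's forward axis at the target,
    # with forward = (cos p * cos y, cos p * sin y, sin p); roll starts at 0.
    dx, dy, dz = (t - p for t, p in zip(target, position))
    yaw = math.atan2(dy, dx)
    pitch = math.atan2(dz, math.hypot(dx, dy))
    roll = 0.0

    # Small orientation noise so the target isn't always perfectly centered.
    yaw += rng.uniform(-max_jitter_rad, max_jitter_rad)
    pitch += rng.uniform(-max_jitter_rad, max_jitter_rad)
    roll += rng.uniform(-max_jitter_rad, max_jitter_rad)
    return position, (yaw, pitch, roll)
```

Rendering each training image from a freshly sampled pose would push a pose estimator to stop relying on any one camera placement.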

How do you think training in simulation compares to a more brute-force approach of using lots of physical robots?

One thing that’s interesting is that we started out the project questioning the idea that simulation can help advance robotics. For robotics applications in particular, we’ve seen years of work (including our own) that produced very impressive results in simulation using reinforcement learning. However, when we talked to researchers from classical robotics about them, there was a pretty constant disbelief that these kinds of methods could work in reality. The core issue is that simulators aren’t very physically accurate (even though they look good enough to the human eye). Even worse, the more accurate simulators are, the more computationally expensive they tend to get. So what we set out to do was to set a new benchmark that requires working with a very complex hardware platform, where you’re forced to confront all the limitations of simulations.

Regarding the “arm farm” approach, the core limitation of learning on physical robots is the limited scalability to complex tasks. It’s doable for a setup where you have a bunch of objects in a self-stabilizing “stateless” environment (e.g. a bin with walls), but it would be really tough to apply the same method to, say, an assembly task where after each trial, your system is now in a new state. Also, instead of setting up the environment once, you now have to set it up N times, and maintain it when e.g. the robot thrashes around and breaks something. This is all much more easily and safely done in simulation using elastic compute capacity.

In the end, I think our work strengthens the idea of learning in simulation, since we’ve shown that even for very complex robots we can solve the transfer problem. However, this doesn’t invalidate the idea of learning on the real robot; it will be tough to fix fundamental limitations of simulators to, for example, simulate deformable objects and liquids.

Where is your system the weakest?

At this point, I’d say the weakest point is the hand-designed and task-specific randomizations. A possible approach in the future could be to try to learn these randomizations instead, by having another “outer layer” of optimization that represents the process we currently do manually (“try several randomizations and see if they help”). It would also be possible to take it a step further and use self-play between a learning agent and an adversary that tries to hinder the agent’s progress (but not by too much). This dynamic could lead to very robust policies, because as the agent becomes better, the adversary has to be more clever in order to hinder it, which results in a better agent, and so on. This idea has been explored before by Pinto et al.
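The “outer layer” of optimization Schneider mentions would automate the manual loop of trying randomizations and keeping the ones that help. A minimal random-search version is sketched below. `train_and_evaluate` is a hypothetical callback (in practice, a full training run followed by some held-out evaluation), and the toy objective exists only so the sketch runs; the adversarial self-play variant he also mentions is not shown.

```python
import random


def sample_candidates(rng: random.Random, names, n: int, max_scale: float = 3.0):
    """Random search: each candidate multiplies every randomization range
    by its own factor in [0, max_scale] (0 means no randomization)."""
    return [{name: rng.uniform(0.0, max_scale) for name in names}
            for _ in range(n)]


def outer_loop_search(candidates, train_and_evaluate):
    """Score each candidate config and return (best_config, best_score).
    `train_and_evaluate` is a hypothetical callback: train a policy in sim
    with that config, then measure success on a held-out check."""
    best_config, best_score = None, float("-inf")
    for config in candidates:
        score = train_and_evaluate(config)
        if score > best_score:
            best_config, best_score = config, score
    return best_config, best_score


# Toy stand-in for an expensive training run: pretend transfer works best
# with moderate friction randomization and light damping randomization.
def toy_train_and_evaluate(config):
    return -((config["friction"] - 1.5) ** 2 + (config["damping"] - 1.0) ** 2)


rng = random.Random(0)
candidates = sample_candidates(rng, ["friction", "damping"], n=20)
best, score = outer_loop_search(candidates, toy_train_and_evaluate)
```

Because every evaluation is a full training run, plain random search like this is expensive; it is shown only because it most directly mirrors the “try several randomizations and see if they help” process.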

You say that your ultimate goal is to build robots for the real world. What has to happen before you can do that?

What we’re looking to do is expand the capabilities of robots in unconstrained environments. In such environments, it is impossible to know everything in advance and have models for each object. Additionally, placing special markers on objects outside a lab seems undesirable. Our robots thus have to learn how to deal with many situations and be able to make reasonable choices given situations that they have not encountered before.

What are you working on next?

We’re going to keep working on providing robots with more sophisticated behaviors; at this point it’s too early to say which exactly those are going to be. In the long term, we’re hoping to give robots general manipulation capabilities so that they can learn about their environment similar to how a toddler would learn, by playing with objects in their vicinity but not necessarily with adult supervision. We think that intelligence is grounded in interaction with the real world, and that in order to accomplish our mission of building safe artificial general intelligence, we have to be able to learn from real-world sensory experiences as well as from simulation data.

OpenAI has lots more detail on their blog, linked below, and the paper is available here.

[ OpenAI ]

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.