We all know how annoying real robots are. They’re expensive, they’re finicky, and teaching them to do anything useful takes an enormous amount of time and effort. One way of making robot learning slightly more bearable is to program robots to teach themselves things, which is not as fast as having a human instructor in the loop, but can be much more efficient because that human can be off doing something else more productive instead. Google industrialized this process by running a bunch of robots in parallel, which sped things up enormously, but you’re still constrained by those pesky physical arms.

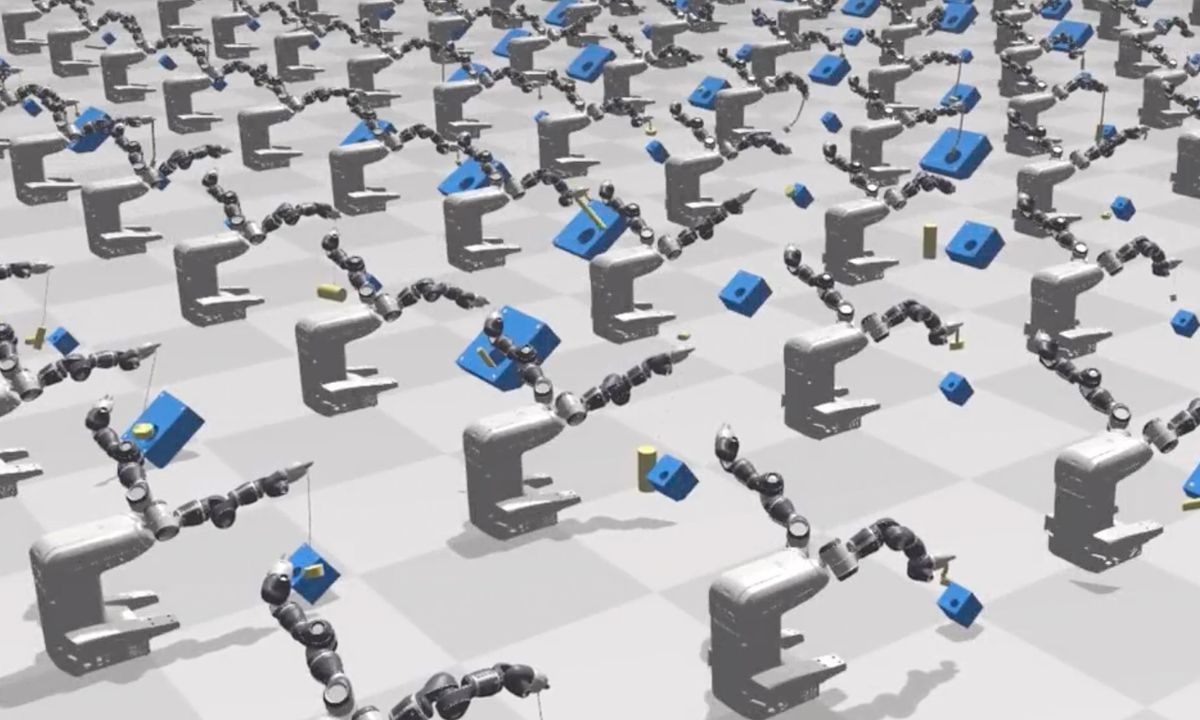

The way to really scale up robot learning is to do as much of it as you can in simulation instead. You can use as many virtual robots running in virtual environments testing virtual scenarios as you have the computing power to handle, and then push the fast-forward button so that they’re learning faster than real time. Since no simulation is perfect, it’ll take some careful tweaking to get it to actually be useful and reliable in reality, and that means that humans have to get back involved in the process. Ugh.

A team of NVIDIA researchers, working at the company’s new robotics lab in Seattle, is taking a crack at eliminating this final human-dependent step in a paper that they’re presenting at ICRA today. There’s still some tuning that has to happen to match simulation with reality, but now, it’s tuning that happens completely autonomously, meaning that the gap between simulation and reality can be closed without any human involvement at all.

Pieter Abbeel from UC Berkeley summarized this whole “sim-to-real” process when we were talking with him about the Blue robot arm a little while ago:

> For everyone who does sim-to-real research, the proof is still in running it on the real robot, and showing that it transfers. And there’s a lot of iteration there. It’s not like you train in sim, you test on the real robot, and you’re done. It’s more like, you train in sim, test on the real robot, realize it’s not generalizable, rethink your approach, and train in a new sim, and hope that now it’ll generalize on the real robot. And this process can go on for a long time before you actually get that generalization behavior that you hope for. And in that process you’re constantly testing on a real robot to see if your generalization works, or doesn’t work.

While it would be amazing if we could just remove the real robot testing completely and just go straight from simulation to deployment, we can’t, because no simulation is a good enough representation of the real world for that to work. The way to deal with this reality gap is to just “mess with” the simulation in specific ways (what is known as “domain randomization”) to build in enough resiliency that you can cope with the inherent uncertainty and occasional chaos that reality throws your way.
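To make “messing with” the simulation a bit more concrete, here is a minimal sketch of domain randomization in Python. Everything in it is an assumption for illustration: the parameter names, the ranges, and the `sample_sim_params` helper are hypothetical and do not come from the NVIDIA paper or any particular simulator. The idea is just that every training episode gets its own slightly different version of physics.

```python
import random

# Hypothetical parameter ranges (low, high). Names and values are
# illustrative assumptions, not taken from any real simulator or paper.
PARAM_RANGES = {
    "friction": (0.2, 1.0),
    "object_mass_kg": (0.05, 0.5),
    "motor_gain": (0.8, 1.2),
    "sensor_noise_std": (0.0, 0.02),
}


def sample_sim_params(ranges, rng):
    """Draw one randomized simulator configuration, uniform within each range."""
    return {name: rng.uniform(low, high) for name, (low, high) in ranges.items()}


# Each training episode would run in a simulator configured with a fresh draw.
rng = random.Random(42)
episode_params = [sample_sim_params(PARAM_RANGES, rng) for _ in range(5)]
for params in episode_params:
    print({name: round(value, 3) for name, value in params.items()})
```

A policy trained across many such draws has to work over the whole range rather than for one exact set of physics. The hard part, as the next paragraphs explain, is picking ranges that actually bracket reality.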

It’s this process of messing with (and ideally optimizing) the parameters of the simulation that requires an experienced human, and even if you know what you’re doing, it can be tedious and time-consuming. Basically, you run the simulation for a while, try the learned task on a real robot, watch exactly how it fails, and then go in and alter the simulation parameters that you think will help get things closer.

The overall goal here is that you want your algorithm to not be able to distinguish between running in simulation and running in the real world on a real robot. NVIDIA’s approach to achieving this goal without a human in the loop is to use the negative data resulting from testing on a real robot and failing, feeding that back into the simulation to refine the simulation parameters to bring them closer to observed reality.

Basically, the system trains in simulation, tests on a real robot, watches the test fail (with an off-the-shelf 3D sensor), and then says, “Oh hey, that failed real trajectory looked a lot like this particular simulated trajectory, so that simulated scenario must be closer to reality, let’s do more of that.” After a few iterations of this, the system is able to identify simulation parameters that are much closer to what it observes in the real world, leading to success in the task on the real robot.
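The loop described above can be sketched on a toy problem. This is not the researchers’ algorithm: it swaps in a simple cross-entropy-style update (sample parameters, keep the ones whose simulated trajectories best match the real one, refit the distribution), and it leaves out policy training entirely. The sliding-block “simulator,” the squared-error discrepancy, the single friction parameter, and all of the function names are assumptions made up for this example.

```python
import random
import statistics


def simulate_slide(friction, v0=2.0, dt=0.05, steps=40, g=9.81):
    """Toy simulator: positions over time of a block sliding to a stop."""
    x, v, traj = 0.0, v0, []
    for _ in range(steps):
        v = max(0.0, v - friction * g * dt)  # friction decelerates the block
        x += v * dt
        traj.append(x)
    return traj


def discrepancy(traj_a, traj_b):
    """Sum of squared position differences between two trajectories."""
    return sum((a - b) ** 2 for a, b in zip(traj_a, traj_b))


def adapt_sim_params(real_traj, mean, std, iterations=15, samples=100,
                     elites=20, seed=0):
    """Shift a Gaussian over friction toward values that reproduce real_traj."""
    rng = random.Random(seed)
    for _ in range(iterations):
        # Sample candidate simulation parameters from the current distribution.
        candidates = [max(1e-3, rng.gauss(mean, std)) for _ in range(samples)]
        # Rank candidates by how closely their simulated trajectory matches reality.
        candidates.sort(key=lambda mu: discrepancy(simulate_slide(mu), real_traj))
        best = candidates[:elites]
        # Refit the distribution to the best matches ("let's do more of that").
        mean = statistics.fmean(best)
        std = max(statistics.stdev(best), 0.01)  # floor keeps some exploration
    return mean, std


# Stand-in for the real robot: the true friction is hidden from the algorithm
# and is used only to generate the observed "real" trajectory.
TRUE_FRICTION = 0.35
real_traj = simulate_slide(TRUE_FRICTION)

mean, std = adapt_sim_params(real_traj, mean=0.8, std=0.3)
print(f"adapted friction estimate: {mean:.3f} (std {std:.3f})")
```

In a real system the observed trajectory would come from a sensor watching the robot rather than from the same simulator, and there would be many parameters instead of one, but the shape of the loop is the same: compare, reweight, repeat.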

The NVIDIA researchers say that once the initial range of parameters has been set, the rest of the process is completely hands-off. There’s a human nearby just in case the robot goes berserk and needs to be e-stopped, but otherwise, this could be running completely lights-out. The overall performance of the system, according to the researchers, is just as good as you’d get with manual tuning by a human. It’s not going to be as fast, because it doesn’t have that special human expert brain sauce, but the end result should be similar.

And remember, this is all about scaling, about leveraging simulation to train more robots on more tasks than would be physically possible otherwise, and to do that we’re going to have to stop relying on having humans in the loop all the time.

“Closing the Sim-to-Real Loop: Adapting Simulation Randomization with Real World Experience,” by Yevgen Chebotar, Ankur Handa, Viktor Makoviychuk, Miles Macklin, Jan Issac, Nathan Ratliff, and Dieter Fox from NVIDIA, University of Southern California, University of Copenhagen, and University of Washington, will be presented this week at ICRA 2019 in Montreal, Canada.

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.