Neptune Rising

The biggest undersea observatory ever conceived is taking shape off North America’s Pacific coast

Imagine trying to understand the weather “by looking out your window once a week.” That gives you an idea of what it’s like to be a modern ocean scientist, says Richard Dewey, an ocean-modeling expert at the University of Victoria, in British Columbia, Canada.

To explore the deep, most oceanographers must jockey for a slice of time on a limited number of research ships, which generally leave port in the summer, when weather is most cooperative. After many days of chugging out to a particular spot on the ocean surface, they have just a few more days to launch tethered sensors, minisubs, or divers for a close-up look at what lies below. The result is choppy and piecemeal data, making it difficult to sort out cause and effect. And what data they get, of course, is skewed to the conditions that prevail during calm seas and summer months.

Hardly anyone doubts that such data is inadequate to understanding some of the most important questions about Earth’s oceans—questions whose answers will be critical to our ability to feed a booming human population, power our cities, and fathom Earth’s climate. Technology is responding to this challenge, but the efforts so far have been incremental and inadequate.

More and more, researchers are leaving instruments behind to continue sensing after research vessels depart, but severely constricted power and communications bandwidth limit their usefulness; they run on batteries, and many deliver data via painfully slow acoustic modems. Minisubs go deeper than ever but can’t stay down long. A minisub such as the famous Alvin, operating out of Woods Hole, Mass., can take scientists down 4500 meters (a few go even deeper), but only for 5 to 10 hours. And the high cost of minisubs makes them a rare breed: by one recent count, only 40 minisubs operate today worldwide.

Dewey and his colleagues at a consortium of Canadian and U.S. universities and research labs think they’ve come up with a better way to get the job done. If they’re right, ocean science will never be the same. They are in the vanguard of a growing movement to push ocean science toward the kind of 24/7 observation that scientists on dry land take for granted.

How do they intend to do it? By literally wiring the deep, seeding thousands of square kilometers of the ocean bottom with hundreds of sensors, cameras, and instruments powered from shore via an undersea 100-kilowatt grid. Linked together, the instruments are part of a multigigabit-per-second data network designed to continuously pour information onto the Internet, where scientists on land can access it. It is called the North-East Pacific Time-Series Undersea Networked Experiments, or NEPTUNE.

If NEPTUNE is completed, 200 000 square kilometers of ocean floor off the coasts of Oregon, Washington, and British Columbia will become one vast round-the-clock online undersea observatory pumping ashore a petabyte of invaluable deep-sea data every year.

In a sense, it will be as if dozens of spots on the sea floor had Ethernet ports and power outlets. Into these, scientists will literally be able to plug in almost any kind of instrument they can think of: seismometers, water current meters, nutrient monitors, tethered robotic subs, sea floor rovers, high-definition cameras, and more. “We are providing a whole new dimension to oceanography by bringing power and the Internet to an environment that simply hasn’t had that,” says Christopher Barnes, the distinguished oceanographer and paleobiologist who is executive director for NEPTUNE Canada. This consortium, based at the University of Victoria, is leading the effort to build the northern third of NEPTUNE.

NEPTUNE will cover one of Earth’s most diverse and dynamic landscapes [see map, "NEPTUNE's Realm"]. The network will trace the outline of the Juan de Fuca tectonic plate. One of the smallest of Earth’s several dozen tectonic plates, it is bounded by the coast of Oregon, Washington, and British Columbia to the east and the gigantic Pacific tectonic plate a few hundred kilometers out to sea to the west. The plates consist of crusts of rock, tens of kilometers thick, that float atop Earth’s mantle layer. Collisions among the crusts produce a lot of important geological phenomena, such as earthquakes and tsunamis.

North America is sliding over Juan de Fuca’s eastern edge at the geologically breakneck pace of 4.5 centimeters per year and, in the process, fueling dramatic changes underwater. As the North American plate plows sediments along the ocean bottom, liquids and gases ooze from the seabed, including natural gas hydrates—a frozen mixture of methane and water. The hydrates could prove to be the last frontier for fossil fuel exploration, or they could be a potent source of greenhouse gases whose release could induce sudden climate change.

On Juan de Fuca’s southwest edge, about 400 kilometers west of Portland, Ore., rises an undersea volcanic crater that has exploded three times in the last 15 years, making it one of the most active sites in the world and a perfect laboratory to study volcanism.

A few hundred kilometers west of Vancouver Island, Juan de Fuca’s collision with the vast Pacific plate gives rise to Endeavor Ridge, a hyperactive earthquake zone whose magma chambers produce 300 °C jets of water that feed an undersea jungle teeming with bizarre flora and fauna [see photo, “Black Smokers”]. These hydrothermal vents, one of the most important biological discoveries of the past 30 years, have shattered long-held conceptions of life’s limits on this planet and the prospects for finding it elsewhere.

Seawater seeping through cracks in the strained sea floor at Endeavor hits magma-heated rock and erupts to the surface as a superhot soup carrying a corrosive mix of dissolved minerals. When the minerals meet the icy water of the ocean bottom, they precipitate out, depositing rock chimneys tens of meters high. Ancient vents like Endeavor’s are a likely source of many large ore deposits on land.

But just as important as the geology and geophysics behind the vents is the life they sustain. Before the vents were discovered, biological dogma held that all life was ultimately dependent on the sun for energy. Yet hydrothermal vents such as Endeavor’s, which lie in the darkest depths of the sea, support a riot of life. These weird ecosystems (thick mats of microbes, snails, tube worms, giant spider crabs, and stranger stuff) live off the vents’ hot chemical plumes. Endeavor is home to at least a dozen species found nowhere else on Earth, including a microbe that thrives at 121 °C, making it the planet’s heat-tolerance champion.

Ecosystems like Endeavor’s give hope that life may find a way in seemingly inhospitable extraterrestrial environments, such as Jupiter’s icy moon Europa. But exploring Endeavor’s hot vents and Juan de Fuca’s other treasures has been a slow and often frustrating process. There is never enough time below with submersibles. And getting positioned to observe the plate at its most dynamic moments is a game of hit or miss with today’s ship-based oceanography.

Consider the swarm of earthquakes that rattled Endeavor early this year. U.S. Navy hydrophones detected 3742 earthquakes over a six-day period, a sign that the plate’s crust was stretching. It was an excellent opportunity to measure how fast the crust is moving—a key parameter needed to predict the Pacific Northwest’s next killer earthquake. Scrambling a research ship, Seattle-based University of Washington seismologists set out to deploy more-sensitive instruments. But they arrived one week after the swarm began—and one day after it ended.

Such frustrations inspired the pioneers behind NEPTUNE. In the early 1990s, John Delaney, a professor of oceanography at the University of Washington, began to work on the idea of making a cabled observatory at Endeavor Ridge that would provide continuous power and telecommunications to instruments there. He found an enthusiastic ally in University of Victoria biologist Verena J. Tunnicliffe, who made some of the earliest discoveries about the vents’ ecology and who was equally frustrated by the piecemeal science she was restricted to on a ship.

By 2000, Delaney and Tunnicliffe had assembled a steering committee from five institutions to try to make NEPTUNE a reality. It included their home institutions as well as the Monterey Bay Aquarium Research Institute; the Woods Hole Oceanographic Institution, which brought experience in developing underwater research technology; and the NASA Jet Propulsion Laboratory. JPL, in Pasadena, Calif., contributed its expertise in engineering for long-term operation in the most remote environment of all: space.

Together, researchers at these institutions have forged a plan for 3000 km of power and fiber-optic cable emanating from shore stations on Vancouver Island and the Oregon coast and linking some 30 science stations, or nodes [see again, "NEPTUNE's Realm"]. Data and power lines radiate from the nodes to dozens of sensors. This integrated network of instruments should be capable of monitoring every shake and shudder of the tectonic plate, each puff from volcanic vents, and the shifting circulation of the sea above without an oceanographer donning so much as a sweater, let alone a wet suit.

With NEPTUNE, oceanographers are aiming for an observatory infrastructure that is robust enough to last 30 years and versatile enough to provide power to, and stream data from, essentially any kind of instrument an ocean scientist might need. Scientists will help design instruments to be installed on NEPTUNE nodes and may get some brief exclusive use, but in short order the instruments—and their data streams—will become available to their peers and perhaps even to the public. Doing science with NEPTUNE will be more like mining a fast-growing database than signing up for a few days aboard Alvin.

Today, after five years of work, the network is launching a little more slowly than expected. The Juan de Fuca plate straddles the Canadian-U.S. border, with a smaller part in Canada. So U.S. partners were to bear the brunt of the cost: 70 percent. But the U.S. funding has stalled, forcing NEPTUNE’s proponents to start small.

Instead of lighting up the entire Juan de Fuca plate, NEPTUNE will at first cover just its northern third. What was originally planned as a 3000-km network touching 30 sites of interest will launch as an 800-km loop with instruments at two or three sites along it. Yet even in its truncated state, NEPTUNE poses a daunting technological challenge. “There’s significant innovation in almost every facet,” says Peter Phibbs, associate director, engineering and operations, for the Canadian team. “Making a communications system work in 3000 meters of water is not trivial.” The power engineers, adds Phibbs, had to start from scratch.

Recognizing the complexity and ambition of the task—particularly in light of tight budgets—the NEPTUNE collaborators hatched two preliminary projects, called MARS and VENUS, to test the parts before tackling the whole. MARS stands for the Monterey Accelerated Research System and VENUS for the Victoria Experimental Network Under the Sea. The real action begins this month as these preliminary projects go into the water.

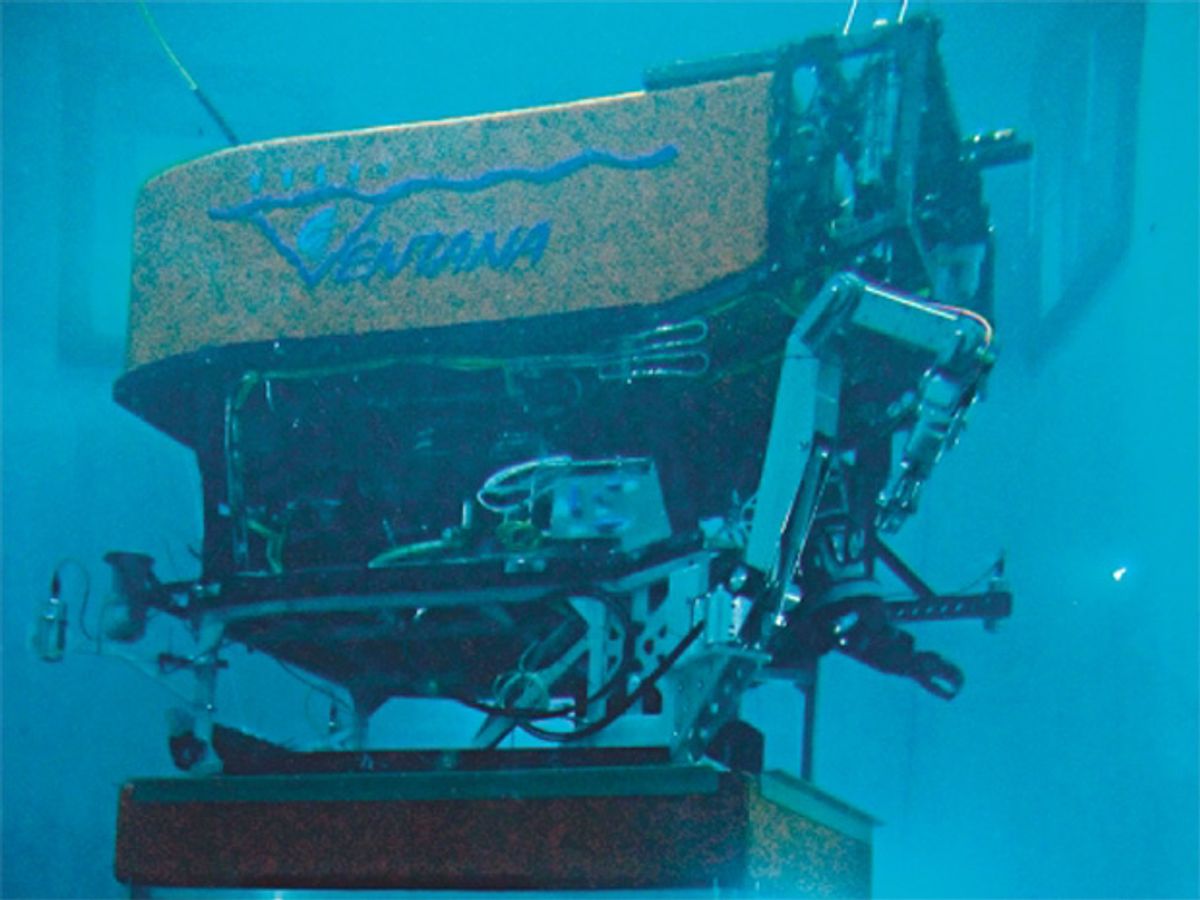

In a sleepy inlet off the southeast shore of Vancouver Island, VENUS engineers from the University of Victoria will test the interface between the power and data infrastructure planned for NEPTUNE and a cluster of scientific instruments: hydrophones, seismometers, and the like. Meanwhile, MARS engineers from the Monterey Bay Aquarium Research Institute, in Moss Landing, Calif., will subject the NEPTUNE infrastructure to crushing pressures in an 800-meter-deep canyon west of the aquarium [see photo, “Coming In for a Landing”].

If these smaller projects work, NEPTUNE itself could hit the water in 2007, setting oceanography on a new course. Scientists around the world are watching its progress and planning their own cabled observatories. Japan’s proposed ARENA observatory, for one, is of similar scale to NEPTUNE, and Europe’s ESONET would outline the entire continent.

While NEPTUNE is not the first attempt to build a cabled undersea observatory, it is by far the biggest and would deliver the infrastructure and engineering knowledge needed to make such observatories routine. If NEPTUNE fails, it would knock the wind out of the cabled observatory movement, and ocean science could remain stuck in its half-blind state for many years to come.

MARS is a trial run of a science node carrying NEPTUNE’s full power and bandwidth in deep water. If all goes according to plan, this month engineers will sink the node and the electric and fiber-optic cable that will link it to shore, turn it on, and hope for the best.

The node is a waterproof titanium vault protected by a truncated pyramid of steel the size of a small car. Packed within the titanium is communications equipment to translate optical signals to electronic ones, route the signals, and then send them out again on copper cables to nearby instruments or via laser along optical fibers to other undersea nodes [see illustration, “Inside a Node”].

But MARS’s main purpose is to demonstrate power conversion at the high voltage needed for NEPTUNE. It’s not uncommon to transport power over long distances as a very high dc voltage to limit resistive losses in the cable. What is uncommon is to convert that power to lower voltage for use under nearly a kilometer’s depth of water. Like NEPTUNE nodes, MARS must step down 10 kilovolts of dc power on the cable from the shore to a more useful 400 volts.
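A back-of-the-envelope sketch shows why the transmission voltage matters so much. The 10-kilovolt and 400-volt levels come from the article; the 5-kW load and the 50-ohm cable resistance are assumed round numbers chosen only for illustration, and the simple model ignores the voltage drop along the cable itself.

```python
def cable_loss_watts(load_watts, line_volts, cable_ohms):
    """Resistive loss in the cable, using the simple model I = P / V and loss = I^2 * R."""
    current_amps = load_watts / line_volts
    return current_amps ** 2 * cable_ohms


LOAD_WATTS = 5_000.0   # ASSUMED illustrative load
CABLE_OHMS = 50.0      # ASSUMED total cable resistance, not a NEPTUNE figure

loss_at_10kv = cable_loss_watts(LOAD_WATTS, 10_000.0, CABLE_OHMS)
loss_at_400v = cable_loss_watts(LOAD_WATTS, 400.0, CABLE_OHMS)

print(f"loss at 10 kV: {loss_at_10kv} W")
print(f"loss at 400 V: {loss_at_400v} W")
print(f"ratio: {loss_at_400v / loss_at_10kv}")
```

Under these assumptions the cable wastes about 12.5 W at 10 kV, while at 400 V the computed loss would exceed the load itself, which is another way of saying the low-voltage option is unworkable over such a cable. Whatever resistance is assumed, the ratio of the two losses is the square of the voltage ratio: 25 squared, or 625.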

Designing and building a converter to do this fell to Harold Kirkham, a principal engineer at JPL’s Center for In-Situ Exploration and Sample Return, and Vatché Vorpérian, JPL senior engineer and an IEEE Fellow [see photo, “Masters of MARS”].

The design constraints were tight. The MARS and NEPTUNE converters must handle 2 to 5 kW each—about what it would take to power a dozen big-screen plasma TVs—and fit in a meter-long pressure tube. JPL’s design keeps the converter to a manageable size by stringing together 48 small high-frequency converter modules rather than using one or a few large low-frequency converters.

The modules operate on the same principle as the switched-mode power supply you might find in any modern piece of consumer electronics. Within each module, high-frequency transistors switch the dc to ac, a small transformer steps the voltage down, and a rectifier circuit turns the ac back to dc. Each of the 48 modules performs a 200- to 50-V conversion, and the outputs are tied together in groups of eight to deliver 400 V.
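The module arithmetic can be checked with a short sketch. The counts and voltages are the article's; the wiring is an interpretation, not a documented JPL schematic: it assumes the 48 inputs are stacked in series across the cable voltage, that the eight outputs in each group add in series, and that the resulting six groups are paralleled onto one bus.

```python
N_MODULES = 48
MODULE_IN_VOLTS = 200
MODULE_OUT_VOLTS = 50
GROUP_SIZE = 8

# ASSUMPTION: all module inputs are stacked in series across the cable voltage.
input_stack_volts = N_MODULES * MODULE_IN_VOLTS

# ASSUMPTION: the eight outputs within a group add in series.
group_output_volts = GROUP_SIZE * MODULE_OUT_VOLTS

# ASSUMPTION: the groups are paralleled onto a single output bus.
n_groups = N_MODULES // GROUP_SIZE


def per_module_watts(total_watts):
    """Share of the load each module carries if the load divides evenly."""
    return total_watts / N_MODULES


print(input_stack_volts, group_output_volts, n_groups)
print(round(per_module_watts(5_000.0), 1))
```

On this reading the input stack adds up to 9600 V, close to the nominal 10 kV on the cable, and each group of eight delivers the 400 V the node needs. At the top of the 2-to-5-kW range, each module would handle only about 100 W, which is consistent with keeping the individual converters small.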

“You bolt together the outputs, so they’re forced to be the same voltage, but unfortunately that doesn’t mean that the inputs have to be the same,” says Kirkham. And the inputs are the real danger: the slightest loss of coordination among the high-frequency switches can expose one module to the entire 10-kV input, which would instantly fry it. Vorpérian found a solution that prevents such an undersea barbecue by using a single controller reading a single output signal to coordinate all 48 modules.

Getting repairs done in 1000 meters of water is not easy—when it is possible at all. So the converter’s reliability was a top priority. The converter is designed to be able to lose any one of its 48 modules without failing. And the node carries within its titanium pressure case two complete converters; if one shuts down, the backup automatically kicks in. “We think the failure rate from a converter should be extraordinarily low,” says Kirkham. “It might not even be measurable.”

That is, once it’s ready to launch. As late as this past September, JPL engineers were still troubleshooting start-up problems plaguing the power converter, and Kirkham was grumbling over MARS’s oceanography-scale budget, which precluded the full degree of quality engineering and fault analysis that would be lavished on space-bound equipment.

MARS’s plan is to start with a technology demonstration and add science instruments to its experimental node in the years to come. In contrast, the other NEPTUNE test bed, VENUS, led by the University of Victoria’s Tunnicliffe, will do lots of science right from the start [see photos, “Victorians”]. The point of VENUS is to demonstrate a system for sharing 400 V of dc power and high-speed communications among a bevy of underwater instruments. The number of instruments that can be integrated will determine how much science each of NEPTUNE’s nodes will be able to deliver.

VENUS is basically a smaller version of the MARS node that does only data conversion rather than both data and power. It turns electronic data from the science instruments into optical signals for transport to the shore and routes commands coming down from shore to the right instruments. Tunnicliffe’s team plans to install VENUS this month in 80 meters of water in the Saanich Inlet, 1.5 kilometers offshore of the southernmost part of Vancouver Island. Beginning this coming winter, a cable carrying an optical fiber and a 400-V dc line will power 15 instruments attached to the VENUS node. A second node planned for the nearby Strait of Georgia should interface with more than 40 instruments by the fall of 2006.

Most of VENUS’s complement of scientific equipment is adapted from standard oceanographic fare. However, the project is also financing one novel device specially built to exploit VENUS’s high-bandwidth two-way communications: a remarkably versatile array of hydrophones designed to triangulate and track a much broader range of sounds than any previous instrument. VENUS’s array will pinpoint sounds ranging from the low grumble of container ships (thought to disturb the local killer whale population) to the high-frequency sound of wind, rain, lightning, and the chirp of herring passing gas.

The VENUS designers initially envisioned wet-mating most instruments directly to the nodes—that is, plugging them in underwater. But that scheme looked unrealistic once they discovered that the required connectors cost roughly US $8500 per pair.

Their solution is a custom-built interface that will sit between the node and the instruments: the Science Instrument Interface Module, or SIIM. Several SIIMs can be wet-mated to a node, and they act as platforms that can serve 10 or more instruments hard-wired to them. So instead of needing one wet-mate connector per instrument, NEPTUNE and VENUS may need only one for every 10. Importantly, converting the node’s 400 V to the instruments’ 24 V is performed in the SIIM rather than the node, reducing complexity and heat in the node and making it more reliable.
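A rough count suggests what the SIIM saves in connectors alone. The US $8500 price per connector pair and the figure of 10 instruments per SIIM come from the article; the sketch assumes each SIIM needs exactly one wet-mate pair and ignores the cost of the SIIM itself, so it is an upper bound on the savings rather than a budget.

```python
import math

CONNECTOR_PAIR_USD = 8_500  # price per wet-mate pair quoted in the article


def wet_mate_pairs(n_instruments, instruments_per_siim=None):
    """Connector pairs needed: one per instrument, or one per SIIM if SIIMs are used."""
    if instruments_per_siim is None:
        return n_instruments
    # ASSUMPTION: one wet-mate pair per SIIM, each SIIM filled before adding another.
    return math.ceil(n_instruments / instruments_per_siim)


def connector_cost_usd(n_instruments, instruments_per_siim=None):
    return wet_mate_pairs(n_instruments, instruments_per_siim) * CONNECTOR_PAIR_USD


# The 15 instruments planned for the first VENUS node
print(connector_cost_usd(15))        # direct wet-mating
print(connector_cost_usd(15, 10))    # via SIIMs
```

For the 15 instruments planned for the first VENUS node, direct wet-mating would take 15 connector pairs (US $127 500), while two SIIMs would take 2 pairs (US $17 000) under these assumptions.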

Technologically, at least, VENUS and MARS are halfway to NEPTUNE. Imagine several VENUS nodes and their science instruments plugged into two dozen MARS nodes all spread out over hundreds of thousands of square kilometers, and you begin to get an idea of the potential scale of a fully deployed NEPTUNE. The final challenge is to build a data and power backbone to link the far-flung nodes.

The NEPTUNE teams based their initial backbone designs on terrestrial networks. The communications system design, led by Woods Hole senior scientist Alan Chave, mirrored the mix of optical fiber and electronics employed by the Internet. At each node, the incoming optical signals would be converted to electronic data packets and fed to an electronic router. The router would then send individual packets to the node’s scientific equipment or to laser transceivers for beaming down another stretch of cable to a neighboring node.

NEPTUNE’s power designers, though lacking a terrestrial model of a dc distribution network to follow, nevertheless stuck to the principles of terrestrial networks. The scheme for protecting the power system from shorts and other troubles relied on several layers of relays—being used essentially as fuses—and extensive communication with the shore station.

Both designs had a potentially fatal flaw: dependence on complex electronic equipment in the nodes deep underwater. This dependence violated the credo of undersea telecommunications engineers, according to NEPTUNE Canada’s Peter Phibbs, a 20-year veteran of the undersea cable industry. “The basic theory of submarine telecom systems is to put nothing underwater!” he says, a bit facetiously.

Experts at Alcatel Submarine Networks SA, in Nozay, France, the company responsible for many of the world’s undersea optical-fiber data cables, drew attention to the problem. Alcatel had invited the NEPTUNE communications team to Greenwich, England, to consider a concept that would let data keep flowing through the backbone even if power were lost at a node.

Part of an optical fiber’s amazing ability to transmit torrents of data comes from the fact that it can carry many wavelengths of light at once. In the original scheme, all the wavelengths of optical signals employed by NEPTUNE were to be converted to electronic ones and then transformed back into optical data again within each node. So losing power at that node would stop the flow to other nodes downstream from it.

Alcatel proposed that, instead, each node’s data should be encoded on one or a few dedicated wavelengths of light. Then, passive optical filters in the backbone, which require no power to operate, would divert just the wavelengths intended for a node to that node, allowing the rest to continue on to other nodes. So, rather than have the data line pass through a node, it would run alongside it, with only that node’s data diverted in. Similarly, data generated by the node’s instruments would be encoded on that same wavelength and fed back into the backbone’s optical fiber for transport to shore.
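The difference between the two schemes can be illustrated with a toy model. Everything here is hypothetical: the node names, the single linear chain running out from shore, and the one-wavelength-per-node assignment are simplifications for illustration, not the NEPTUNE network layout.

```python
def passive_drop(fiber_channels, node_wavelength):
    """Model of an unpowered optical filter: split off one wavelength, pass the rest."""
    dropped = {w: d for w, d in fiber_channels.items() if w == node_wavelength}
    passed = {w: d for w, d in fiber_channels.items() if w != node_wavelength}
    return dropped, passed


def reachable_original(chain):
    """Original scheme: every node regenerates all traffic, so the first
    unpowered node cuts off itself and everything downstream of it."""
    reachable = []
    for name, powered in chain:
        if not powered:
            break
        reachable.append(name)
    return reachable


def reachable_passive(chain):
    """Passive-filter scheme: the fiber bypasses each node, so every powered
    node can still exchange data on its own wavelength."""
    return [name for name, powered in chain if powered]


# Hypothetical chain of nodes, listed from shore outward; node B has lost power.
chain = [("A", True), ("B", False), ("C", True), ("D", True)]

print(reachable_original(chain))
print(reachable_passive(chain))
print(passive_drop({"lambda_A": "cmd", "lambda_C": "cmd"}, "lambda_A"))
```

With node B dark, the original design would leave only node A in contact with shore, whereas the passive design keeps A, C, and D online. The `passive_drop` function captures the key property of the filter: it needs no state and no power, only a fixed wavelength assignment.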

Noting a similar overdependence on complex electronics in NEPTUNE’s power system, one of Alcatel’s senior power engineers, Phil Lancaster, and JPL’s Harold Kirkham decided that the NEPTUNE system that protected nodes against power outages and overloads needed to be reworked as well.

The resulting redesign “really opens a new frontier for power engineering,” says IEEE Fellow Chen-Ching Liu, a professor and associate dean at the University of Washington who is an expert in power grid protection and a member of NEPTUNE’s power group. As with the optical fiber, the power line in Lancaster and Kirkham’s alternative design no longer runs through the node. In their approach, the backbone contains its own breakers and their simple controls, while the node resides on a spur that branches off from the backbone. The innovation that makes that possible is in how the system handles a fault.

“The traditional concept says if a segment of the cable is bad, then I try to disconnect that segment to keep the rest operating,” says Liu. The result is a partial outage with much of the system unaffected. “The new concept says we can afford to shut down [the whole system], but then we bring it back as quickly as we can.”

Imagine that a fishing trawler breaks open a cable and short-circuits it. The current on the cable will surge, and the shore station will automatically shut off the power. The question then is how to find and isolate the damaged part of the cable and bring the rest of the system back online. NEPTUNE’s first design would have relied on instructions delivered via the communications equipment within each node to test the condition of each segment of the cable and set the system’s breakers—opening them near the damage, closing them everywhere else. That presents a catch-22, because the node’s rather complex communications system needs to be powered and operational for the scheme to work.

In the redesign, the breakers automatically determine whether they should be open or closed with a little help from a signal sent from the shore station along the power line itself: a low-voltage pulse of reversed polarity. When the voltage reverses, a timer associated with each breaker starts counting down. The timer is set to a time proportional to the voltage at the breaker. The breaker closest to the damage will have the lowest voltage among them all, so its timer will expire soonest and it will trip first, thereby isolating the trawler-torn segment. Meanwhile, the shore stations measure the total resistance of the cables and feed that information to a system simulator to determine the location of the fault, so a repair ship can be dispatched.
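The timer logic can be sketched with a simple voltage-divider model. This is an illustration of the principle only, not the published protection scheme: it assumes a single cable fed from one shore station, uniform resistance per kilometer, a fault modeled as a fixed resistance to the seawater return, and it considers only the breakers between the shore and the fault. All numeric values are invented for the example.

```python
def breaker_voltages(shore_volts, ohms_per_km, fault_km, fault_ohms, breaker_km):
    """Voltage magnitude seen by each breaker between shore and fault
    while the shore station applies its low-voltage test pulse."""
    current_amps = shore_volts / (ohms_per_km * fault_km + fault_ohms)
    return {
        km: shore_volts - current_amps * ohms_per_km * km
        for km in breaker_km
        if km <= fault_km  # ASSUMPTION: only breakers upstream of the fault are modeled
    }


def first_to_trip(voltages, seconds_per_volt):
    """Each timer is proportional to local voltage; the shortest timer trips first."""
    timers = {km: volts * seconds_per_volt for km, volts in voltages.items()}
    return min(timers, key=timers.get), timers


# All values below are ASSUMED for illustration.
voltages = breaker_voltages(
    shore_volts=500.0,
    ohms_per_km=1.0,
    fault_km=350.0,
    fault_ohms=50.0,
    breaker_km=[100.0, 200.0, 300.0, 400.0, 500.0],
)
tripped_km, timers = first_to_trip(voltages, seconds_per_volt=0.01)

print(voltages)
print(tripped_km)
```

In this example the voltage falls steadily along the cable toward the fault, so the breaker at 300 km, the last one before the damage at 350 km, sees the lowest voltage and gets the shortest timer. No breaker needs to communicate with shore or with its neighbors to reach that outcome, which is the point of the redesign.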

As impressive as its engineering has been, NEPTUNE faces an uncertain future. Whereas Ottawa awarded Canadian $62.4 million (US $51 million) to the University of Victoria to build the 30 percent of NEPTUNE that lies in Canadian waters, funds to build and install the U.S. side of NEPTUNE have yet to materialize.

The latest in a series of bureaucratic roadblocks is the National Science Board, in Arlington, Va., which controls the release of funds for “big science” projects in the United States. The board recently bumped ocean observatories, NEPTUNE included, down a notch. It seems the Arctic science research ship that took NEPTUNE’s place in the funding queue enjoys vigorous support from Alaska’s influential congressional delegation.

As a result, the NEPTUNE concept that began in the United States has become—at least for now—a largely Canadian venture. And the Canadians are plowing ahead. This summer the University of Victoria selected Alcatel to lead the final engineering, construction, and installation of their equipment, and data could start flowing to the Internet in a little over two years. “The Canadians have done brilliantly well at getting their act together and have shamed the U.S.,” says JPL’s Kirkham.

Going it alone is a decidedly mixed blessing for the Canadians. On the one hand, they have gained design freedom. Case in point: NEPTUNE Canada accepted Alcatel’s communications redesign even though the Americans initially rejected it. On the other hand, NEPTUNE Canada must settle for a considerably smaller system than it had hoped to field. Bearing 100 percent of system design costs that the United States was supposed to share leaves a lot less cash to build and equip the system—about Canadian $15 million (US $12.3 million) less, in Phibbs’s estimation. Barring a new infusion of cash, the Canadians’ 800-km loop will host just 2 or 3 science nodes instead of 8 to 10. “We’ve paid the money to get in the game, and now we don’t have very much left to play the game,” Phibbs laments.

Nevertheless, even two nodes could produce spectacular results. In addition to Endeavor Ridge, NEPTUNE Canada will field equipment at Barkley Canyon, which boasts outcroppings of natural gas hydrate the size of small trucks. The outcroppings are the edges of methane-rich strata in the seabed whose stability, or lack thereof, could be one of the factors contributing to the pace of climate change. The hydrates themselves have also long been recognized as a potentially enormous source of energy.

Seismic surveying equipment on the NEPTUNE node should provide the first precise pictures of the underground hydrates, while a tethered robotic crawler assembled at the University of Bremen, in Germany, will monitor the hydrates’ response to changing environmental conditions, such as the Northeast Pacific’s rapidly warming waters.

“Today things are happening, and you do not know they are happening,” says Barnes. When NEPTUNE comes online, scientists will be aware of every twitch and tremble in the hydrate field, and they’ll have a front-row seat.

NEPTUNE’s ambition is emboldening others. Thanks to NEPTUNE, the proposed Japanese and European observatories should benefit from answers to several open questions that are contributing to the U.S. funding delay. How much will cabled observatories cost to operate? What unintended consequences may arise? And how quickly will scientists shift their approach to their work to take advantage of cabled observatories?

The cost of operation, of course, includes maintaining and replacing science instruments. Marcia McNutt, president and CEO of the Monterey Bay Aquarium Research Institute, says she is confident that NEPTUNE’s infrastructure will last 30 years but says the scientific packages plugged into it may be another story. “We don’t know how long the instruments are going to last, how much servicing they are going to need, or what that servicing will cost. That’s a very legitimate concern,” she says. The VENUS hydrophone array is a case in point. Its inventors hope it will last five years, but contractually the hydrophone is guaranteed to operate for just one year.

In the category of unintended consequences are tensions between NEPTUNE and VENUS management and the Canadian and U.S. navies. What concerns the militaries is the possibility that their foes will employ publicly available data from the systems’ sophisticated hydrophones to identify vessels and track their comings and goings. (Canada’s entire Pacific fleet docks at Vancouver Island, just west of Victoria, while a group of U.S. nuclear submarines calls nearby Puget Sound, Wash., home.)

The VENUS organizers granted the Canadian military the power to squelch VENUS’s acoustic data whenever the navy deems national security to be at risk. That worries some NEPTUNE researchers. “For observatories expecting to have a 24/7 feed, that could be very disruptive to the science,” says Benoît Pirenne, the assistant director of information technology for NEPTUNE Canada, who is designing NEPTUNE’s data and control system.

Then there is the question of how, and whether, scientists will use cabled observatories. Pirenne, who previously handled data management for the Hubble Space Telescope, says oceanographers will have to feel their way through the same transition that astronomers experienced several decades ago in learning how to use data mining to exploit remote and online research facilities. “It’s going to be hard. Even the visionaries, the people who are very close to NEPTUNE, are still thinking in terms of real-time, hands-on experiments. They’re talking about controlling cameras with a joystick,” says Pirenne. “That’s over. It’s time for people to put together powerful algorithms that will pore through the data.”

Many of NEPTUNE’s proponents, such as Barnes and McNutt, see this change in thinking as the biggest hurdle facing ocean observatories such as NEPTUNE. McNutt says it has been a struggle trying to engage oceanography’s leaders, scientists who have profited under the old way of doing things. “It’s not going to be the boon for the leaders in the field who are already very comfortable with yesterday’s technology,” she says. Rather, she believes, NEPTUNE is for tomorrow’s oceanographers: “It’s for the visionaries who are not set in their ways, for the young people who will see ways to use it that we can’t even imagine.”

About the Author

PETER FAIRLEY writes about energy, technology, and the environment from Victoria, B.C., Canada. He wrote about bringing electric light to remote communities in rural Bolivia in “Lighting Up the Andes,” in the December 2004 issue of IEEE Spectrum.

To Probe Further

To follow NEPTUNE’s progress, check NEPTUNE Canada’s Web site at https://www.neptunecanada.ca. The site links to the consortium’s preliminary projects, MARS and VENUS.

The redesigned power system for NEPTUNE is described in “North-East Pacific Time-Series Undersea Networked Experiments (NEPTUNE): Cable Switching and Protection,” by Mohamed A. El-Sharkawi et al., in IEEE Journal of Oceanic Engineering, January 2005, pp. 232–40.