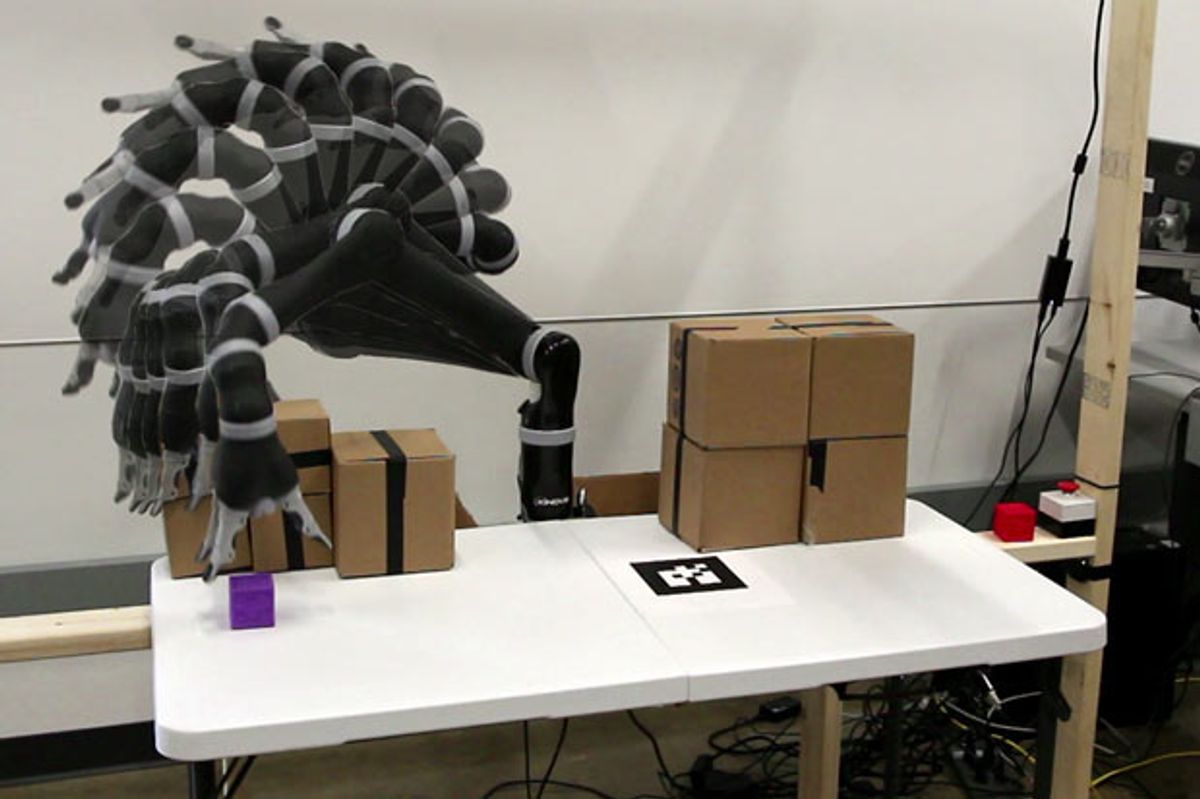

If you’ve seen a robot manipulation demo, you’ve almost certainly noticed that the robot tends to spend a lot of time looking like it’s not doing anything. It’s tempting to say that the robot is “thinking” when this happens, and that might even be mostly correct: Odds are that you’re waiting for some motion-planning algorithm to figure out how to get the robot’s arm and gripper to do what it’s supposed to do without running into anything. This motion-planning process is one of the most important skills a robot can have, and it’s also one of the most time consuming.

Researchers at Duke University, in Durham, N.C., have found a way to speed up motion planning by three orders of magnitude while using one-twentieth the power. Their solution is a custom processor that can perform the most time-consuming part of the job—checking for all potential collisions across the robot’s entire range of motion—with unprecedented efficiency.

Motion planning for something like a robotic arm usually involves first generating a probabilistic road map, or PRM. A PRM is a graph consisting of points in obstacle-free space, with lines called “edges” connecting points where direct movement between them doesn’t result in a collision. Essentially, motion planning consists of picking a starting point and an end point in the PRM, and then figuring out the most efficient path of edges to follow to get from one to the other.

In practice, however, you also have to consider the fact that you’re dealing with a physical robot arm, and that when the arm’s gripper (the bit you care about) moves from one place to another, the rest of the arm (which you don’t care about as much) may run into things. The area that a robot arm moves through is called the swept volume.

This is what motion-planning algorithms struggle with most: determining whether a swept volume will result in a collision with an obstacle, even if the path that the gripper takes doesn’t. One study showed that collision detection consumed 99 percent of a motion planner’s computing time.

To streamline this process, researchers at Duke used a combination of “aggressive precomputation and massive parallelism.” Precomputation happens when you first set up your robot. You generate a single massive PRM that consists of something like 150,000 edges representing possible robot motions while avoiding self-collisions and collisions with things that don’t change position, such as the floor.

Unfortunately, 150,000 edges were way too many for Duke’s prototype system to work with. A more reasonable number is closer to 1,000, so the researchers had to find a way to prune the PRM down.

To do that, they first simulated some 10,000 scenarios with different numbers of randomly located obstacles of varying size, and then they checked to see which edges in the PRM are used for planning. Infrequently used edges are dropped from the PRM, and after a couple of iterations of pruning and retesting, the PRM in one example was reduced to fewer than 1,000 edges without affecting its ability to solve sample motion-planning problems.

Getting the number of edges in the PRM down to something manageable is important because of the limits of the processor that handles the planning. The processor, a field programmable gate array, is programmed with an array of collision-detection circuits, each one of which corresponds to one of the edges in the PRM. So the size of the PRM is limited to the number of such circuits that can fit on the FPGA—a few thousand at most.

In operation, each circuit in the FPGA simultaneously accepts as an input the 3D location of a single pixel in a depth image. The circuit then outputs a single bit that reflects whether the edge that the circuit represents collides with the pixel’s location. If there’s a collision, the chip removes that edge from the PRM. Once the chip has run through all of the pixels in the image, what’s left is a PRM consisting only of collision-free paths. The robot then just picks the shortest one.

The speedup is substantial. No matter how many edges you start with, the FPGA will take just 50 nanoseconds per pixel to determine all the potential collisions. In one particularly complex example, the FPGA took a little over 0.6 millisecond to come up with a plan, and a software-based planner running on a quad-core Intel Xeon processor clocked at 3.5 gigahertz took 2,738 ms—nearly 3 seconds.

Dinesh Manocha, a professor at the University of North Carolina at Chapel Hill who has been working on real-time motion planning with GPUs, agrees that FPGAs have the potential to be much more efficient at motion-planning tasks. “Currently, industrial robots do not use motion planners,” Manocha explains. “As robots are increasingly used in new, uncertain environments, the role of motion planning will increase. I feel that this work is very exciting and provides a very practical solution.”

The researchers at Duke, including professors George Konidaris and Daniel J. Sorin with grad students Sean Murray, William Floyd-Jones, and Ying Qi, are now exploring ways of applying similar techniques to the next bottleneck: finding the shortest path through the PRM.

A startup called Realtime Robotics will be commercializing the Duke technology. That will involve moving from FPGAs to application-specific integrated circuits (ASICs) that contain much larger PRMs (100,000 edges or more), allowing robots to handle a variety of different environments.

“We’ve been talking with many companies in the robotics space,” Sorin says. “There’s great interest in this. Motion-planning software has been a huge limiter to the adoption of robotics, and if you can do real-time motion planning, suddenly robots can now operate in dynamic, unstructured environments. That’s what we’re hoping to enable.”