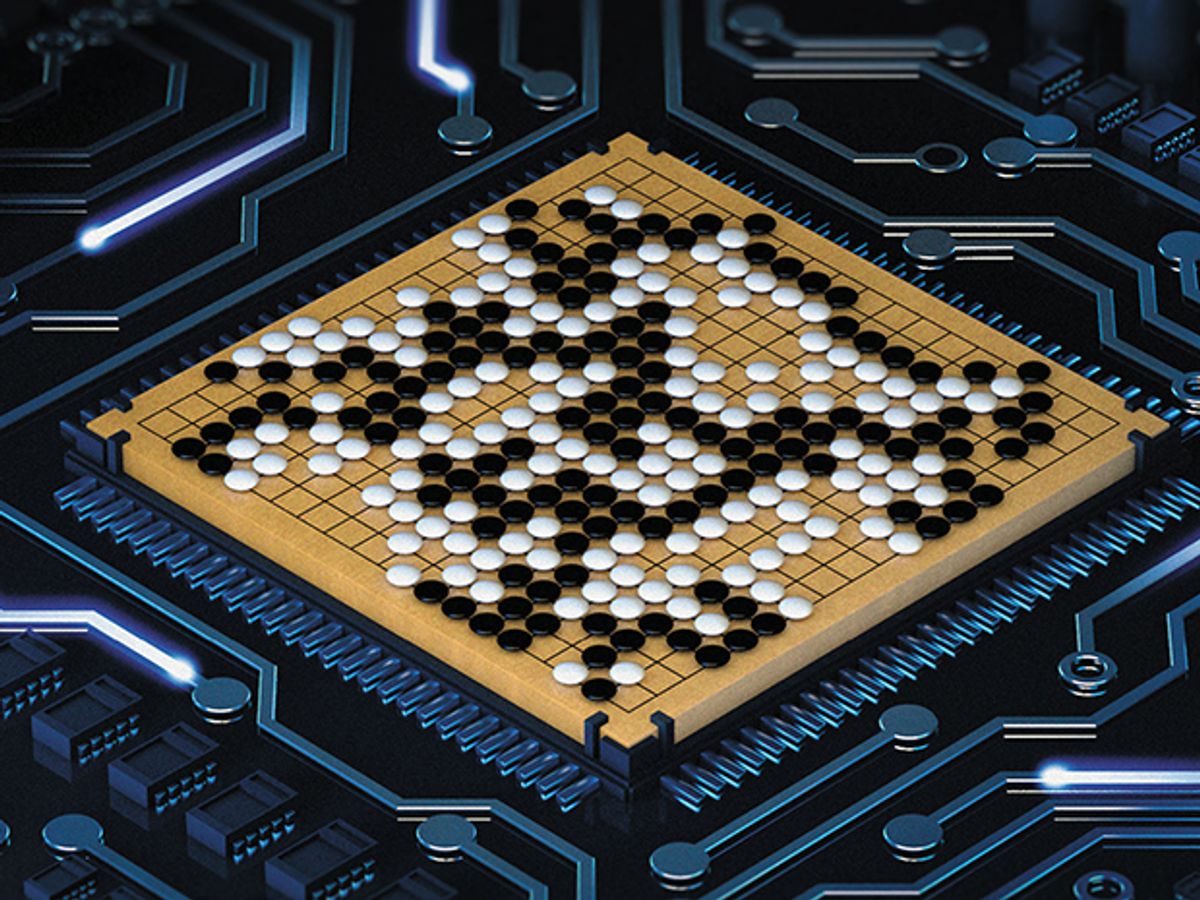

A computer program has defeated a master of the ancient Chinese game of Go, achieving one of the loftiest of the Grand Challenges of AI at least a decade earlier than anyone had thought possible.

The programmers, at Google’s DeepMind laboratory, in London, write in today’s issue of Nature that their program AlphaGo defeated Fan Hui, the European Go champion, 5 games to nil, in a match held last October in the company’s offices. Earlier, the program had won 494 out of 495 games against the best rival Go programs.

AlphaGo’s creators now hope to seal their victory at a 5-game match against Lee Se-dol, the best Go player in the world. That match, for a $1 million prize fund, is scheduled to take place in March in Seoul, South Korea.

The program’s victory marks the rise not merely of the machines but of new methods of computer programming based on self-training neural networks. In support of their claim that this method can be applied broadly, the researchers cited their success, which we reported a year ago, in getting neural networks to learn how to play an entire set of video games from Atari. Future applications, they say, may include financial trading, climate modeling and medical diagnosis.

Not all of AlphaGo’s skill is self-taught. First, the programmers jump-started the training by having the program predict moves in a database of master games. It eventually predicted the master’s move 57 percent of the time, versus 44 percent for the best rival programs.

Then, to go beyond mere human performance, the program conducted its own research through a trial-and-error approach that involved playing millions of games against itself. In this fashion it discovered, one by one, many of the rules of thumb that textbooks have been imparting to Go students for centuries. Google DeepMind calls the self-guided method reinforcement learning; paired with many-layered neural networks, it falls under “deep learning,” the current AI buzzword.

Not only can self-trained machines surpass the game-playing powers of their creators, they can do so in ways that programmers can’t even explain. It’s a different world from the one that AI’s founders envisaged decades ago.

Commenting on the death yesterday of AI pioneer Marvin Minsky, Demis Hassabis, the lead author of the Nature paper, said, “It would be interesting to see what he would have said. I suspect he would have been pretty surprised at how quickly this has arrived.”

That’s because, as programmers would say, Go is such a bear. Then again, even chess was a bear, at first. Back in 1957, the late Herbert Simon famously predicted that a computer would beat the world champion at chess within a decade. But it was only in 1997 that World Chess Champion Garry Kasparov lost to IBM’s Deep Blue—a multimillion-dollar, purpose-built machine that filled a small room. Today you can download a $100 program to a decently powered laptop and watch it utterly cream any chess player in the world.

Go is harder for machines because the positions are harder to judge and there are a whole lot more positions.

Judgement is harder because the pieces, or “stones,” are all of equal value, whereas those in chess have varying values—a Queen, for instance, is worth nine times more than a pawn, on average. Chess programmers can thus add up those values (and throw in numerical estimates for the placement of pieces and pawns) to arrive at a quick-and-dirty score of a game position. No such expedient exists for Go.
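The adding-up trick for chess can be sketched in a few lines. This is a minimal illustration, not any real engine's evaluator: the function name `material_score` and the string encoding of a position are hypothetical, and the piece values are the usual textbook convention (pawn 1, knight and bishop 3, rook 5, queen 9), assumed here.

```python
# Conventional piece values in pawns (a common textbook convention, assumed here).
PIECE_VALUES = {"P": 1, "N": 3, "B": 3, "R": 5, "Q": 9, "K": 0}


def material_score(pieces: str) -> int:
    """Score a position from White's point of view.

    `pieces` is a string of piece letters: uppercase for White,
    lowercase for Black. Any other characters are ignored.
    """
    score = 0
    for ch in pieces:
        value = PIECE_VALUES.get(ch.upper())
        if value is None:
            continue  # not a piece letter
        score += value if ch.isupper() else -value
    return score


# White has king and queen, Black has a bare king.
print(material_score("KQk"))
```

A Go position offers no such shortcut: every stone would score the same, so a simple count says little about who is ahead.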

There are vastly more positions to judge than in chess because Go offers on average 10 times more options at every move and there are about three times as many moves in a game. The number of possible board configurations in Go is estimated at 10 to the 170th power, “more than the number of atoms in the universe,” said Hassabis.
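To see how those two multipliers compound, here is a rough back-of-the-envelope sketch. The chess baseline (about 35 options per move over about 80 moves) is an assumption made for illustration; the Go figures simply apply the 10-times and three-times multipliers above. Note that this counts sequences of moves, which is a different quantity from the number of board configurations.

```python
import math


def log10_game_tree_size(branching: float, depth: float) -> float:
    """Return log10 of branching**depth, a rough count of move sequences."""
    return depth * math.log10(branching)


# Assumed chess baseline (illustrative round numbers, not from the article).
CHESS_BRANCHING, CHESS_DEPTH = 35, 80

chess = log10_game_tree_size(CHESS_BRANCHING, CHESS_DEPTH)
# Go: 10 times the options per move, three times the moves per game.
go = log10_game_tree_size(10 * CHESS_BRANCHING, 3 * CHESS_DEPTH)

print(f"chess: about 10^{chess:.0f} move sequences")
print(f"go:    about 10^{go:.0f} move sequences")
```

Under these assumptions the exponent itself grows roughly fivefold, which is why brute-force look-ahead that works for chess is hopeless for Go.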

Some researchers tried to adapt to Go some of the forward-search techniques devised for chess; others relied on random simulations of games in the aptly named Monte Carlo method. The Google DeepMind people leapfrogged them all with deep, or convolutional, neural networks, so named because they imitate the brain (up to a point).
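The Monte Carlo idea is easy to show on a toy game. The sketch below is not a Go program: it uses a hypothetical take-away game (players alternately remove one to three stones, and whoever takes the last stone wins), and the function names are invented for illustration. Each candidate move is judged by finishing the game many times with random moves and counting the wins.

```python
import random


def random_playout(stones: int, my_turn: bool, rng: random.Random) -> bool:
    """Play random legal moves to the end; return True if 'we' take the last stone."""
    while True:
        stones -= rng.randint(1, min(3, stones))
        if stones == 0:
            return my_turn  # whoever just moved took the last stone and wins
        my_turn = not my_turn


def monte_carlo_move(stones: int, playouts: int = 2000, seed: int = 0) -> int:
    """Pick the move whose random playouts win most often."""
    rng = random.Random(seed)
    best_move, best_rate = 1, -1.0
    for take in range(1, min(3, stones) + 1):
        remaining = stones - take
        if remaining == 0:
            rate = 1.0  # taking the last stone wins outright
        else:
            # The opponent moves next, so my_turn starts as False.
            wins = sum(random_playout(remaining, False, rng) for _ in range(playouts))
            rate = wins / playouts
        if rate > best_rate:
            best_move, best_rate = take, rate
    return best_move


print(monte_carlo_move(5))
```

No knowledge of the game's strategy is built in; the statistics of random games stand in for judgment.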

A neural network links units that are the computing equivalent of a brain’s neurons, first by putting them into layers, then by stacking the layers. AlphaGo’s are 12 layers deep. Each “neuron” connects to its neighbors in its own layer and also those in the layers directly above and below it. A signal sent to one neuron causes it to strengthen or weaken its connections to other ones, so over time, the network changes its configuration.

To train the system you first expose it to input data. Next, you test the output signal against the metric you’re using—say, predicting a master’s move—and reinforce correct decisions by strengthening the underlying connections. Over time, the system produces better outputs. You might say that it learns.
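That loop of exposing, testing and adjusting can be sketched with a single artificial neuron. This is a deliberately tiny illustration, nothing like AlphaGo's 12-layer networks: the function names, the learning rate and the toy task (learning the logical OR of two inputs) are all assumptions chosen for brevity.

```python
import math


def predict(weights, bias, inputs):
    """Output of one artificial neuron: a weighted sum squashed into (0, 1)."""
    z = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1.0 / (1.0 + math.exp(-z))


def train(examples, epochs=2000, lr=0.5):
    """Nudge each connection weight to shrink the gap between output and target."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in examples:
            error = target - predict(weights, bias, inputs)
            # Strengthen or weaken each connection in proportion to its input.
            for i, x in enumerate(inputs):
                weights[i] += lr * error * x
            bias += lr * error
    return weights, bias


# Toy task: output 1 if either input is 1.
OR_EXAMPLES = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]
weights, bias = train(OR_EXAMPLES)
for inputs, target in OR_EXAMPLES:
    print(inputs, target, round(predict(weights, bias, inputs)))
```

Nothing in the code states the rule for OR; the weights drift toward values that reproduce it.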

AlphaGo has two networks. “The policy network cuts down on the number of moves to look at, and the evaluation network allows you to cut short the depth of that search,” or the number of moves the machine must look ahead, Hassabis said. “Both neural networks together make the search tractable.”
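The division of labor Hassabis describes can be illustrated with a toy search. This sketch is not AlphaGo's algorithm: it uses a plain depth-limited look-ahead on a hypothetical take-away game (remove one to three stones; taking the last stone wins), and the `policy` and `value` functions are hand-written stand-ins for trained networks. The `top_k` parameter limits how many moves are tried at each turn, and `depth` limits how far ahead the search looks before trusting `value`.

```python
def legal_moves(stones):
    return list(range(1, min(3, stones) + 1))


def value(stones):
    # Stand-in for a value network: +1 if the player to move is winning, -1 if losing.
    return -1.0 if stones % 4 == 0 else 1.0


def policy(stones, take):
    # Stand-in for a policy network: prefer moves that leave a multiple of four.
    return 1.0 if (stones - take) % 4 == 0 else 0.0


def search(stones, depth, top_k, counter):
    """Return the position's value for the player to move; counter[0] tallies positions visited."""
    counter[0] += 1
    moves = legal_moves(stones)
    if depth == 0 or not moves:
        return value(stones)  # stop looking ahead and trust the evaluation
    ranked = sorted(moves, key=lambda m: policy(stones, m), reverse=True)
    # Only the top_k moves favored by the policy are explored.
    return max(-search(stones - m, depth - 1, top_k, counter) for m in ranked[:top_k])


wide, narrow = [0], [0]
full = search(10, depth=10, top_k=3, counter=wide)      # every move, to the end of the game
pruned = search(10, depth=2, top_k=1, counter=narrow)   # one move per turn, two moves deep
print(full, wide[0])
print(pruned, narrow[0])
```

Both searches reach the same verdict, but the pruned one visits only a handful of positions. With trained networks the stand-ins would be imperfect, which is the reason to keep some search rather than rely on either network alone.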

The main difference from the system that played Atari is the inclusion of a search-ahead function: “In Atari you can do well by reacting quickly to current events,” said Hassabis. “In Go you need a plan.”

After exhaustive training the two networks, taken by themselves, could play Go as well as any program did. But when the researchers coupled the neural networks to a forward-searching algorithm, the machine was able to dominate rival programs completely. Not only did it win all but one of the hundreds of games it played against them, it was even able to give them a handicap of four extra moves, made at the beginning of the game, and still beat them.

About that one defeat: “The search has stochastic [random] element, so there’s always a possibility that it will make a mistake,” David Silver said. “As we improve, we reduce probability of making a mistake, but mistakes happen. As in that one particular game.”

Anyone might cock an eyebrow at the claim that AlphaGo will have practical spin-offs. Games programmers have often justified their work by promising such things, but so far they’ve had little to show for their efforts. IBM’s Deep Blue did nothing but play chess, and IBM’s Watson, designed to win at the television game show Jeopardy!, will need laborious retraining to be of service in its next appointed task of helping doctors diagnose and treat patients.

But AlphaGo’s creators say that because of the generalized nature of their approach, direct spin-offs really will come—this time for sure. And they’ll get started on them just as soon as the March match against the world champion is behind them.

Philip E. Ross is a senior editor at IEEE Spectrum. His interests include transportation, energy storage, AI, and the economic aspects of technology. He has a master's degree in international affairs from Columbia University and another, in journalism, from the University of Michigan.