Every time you open your eyes, a magnificent feat of low-power pattern matching begins in your brain. But it’s very difficult to replicate that same system in conventional computers.

Now researchers led by Wei Lu at the University of Michigan have designed hardware specifically to run brain-like “sparse coding” algorithms. Their system learns and stores visual patterns, and can recognize natural images while using very little power compared to machine learning programs run on GPUs and CPUs. Lu hopes these designs, described this week in the journal Nature Nanotechnology, will be layered on image sensors in self-driving cars.

The key, he says, is thinking about hardware and software in tandem. “Most approaches to machine learning are about the algorithm,” says Lu. Conventional processors use a lot of energy to run these algorithms, because they are not designed to process large amounts of data, he says. “I want to design efficient hardware that naturally fits with the algorithm,” he says. Running a machine-learning algorithm on a powerful processor can require 300 watts of power, says Lu. His prototype uses 20 milliwatts to process video in real time. Lu says that’s due to a few years of careful work modifying the hardware and software designs together.

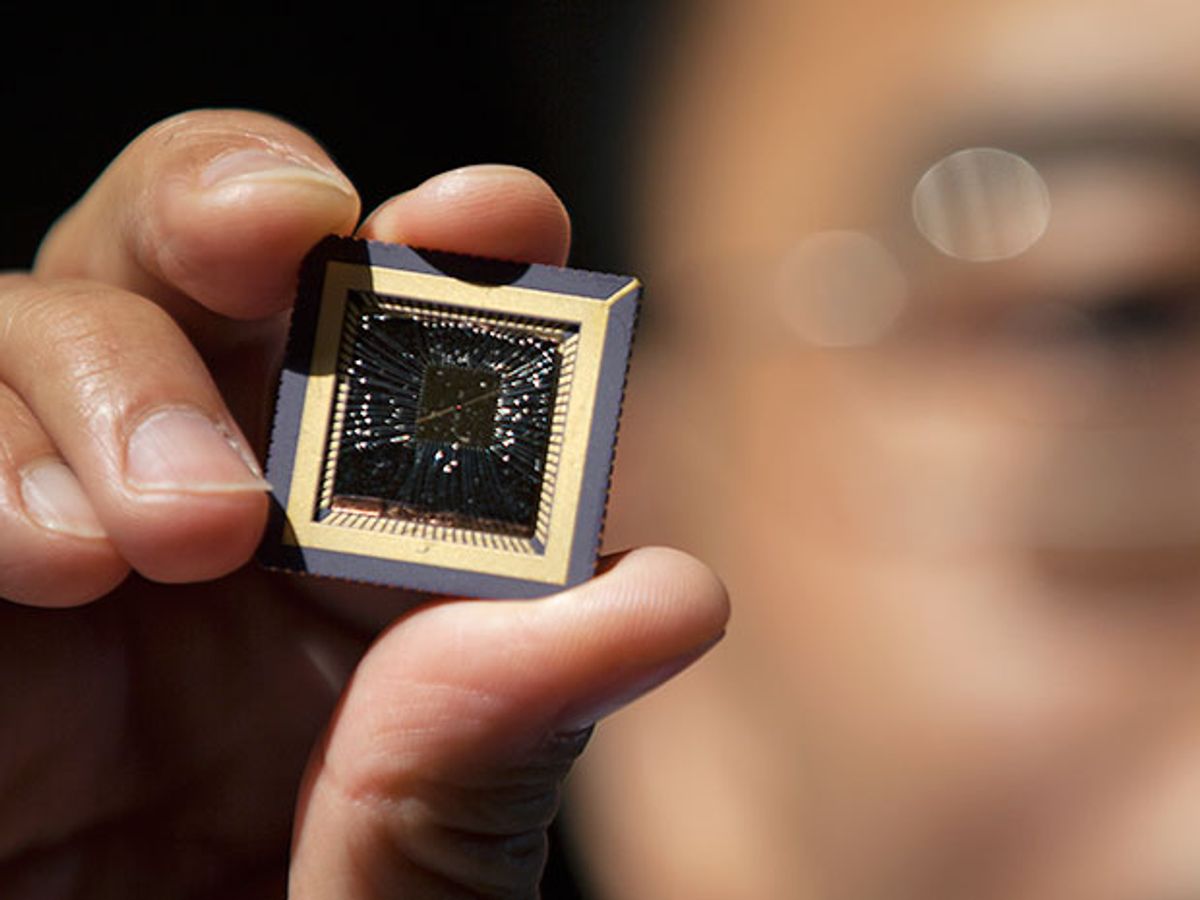

The device is built around a 32-by-32 array of resistive RAM cells based on tungsten oxides. The resistance of these cells can be changed by applying a voltage. “As memory, the device is already pretty mature; it’s available commercially at a large scale,” says Lu. (He co-founded Crossbar, a company that sells resistive RAM.) In a traditional memory application, high resistance in a resistive RAM cell might represent a 0 and low resistance a 1.

These cells can also be operated in analog mode, taking advantage of a continuum of electrical resistance. This allows them to behave as memristors, a kind of electronic component with a memory. In memristors, the resistance of the cell can be used to modulate signals—in other words, they can both store and process data. That contrasts with conventional computing, where there is a strict delineation between logic and memory.

The Michigan group used the memristor arrays to run a kind of algorithm that performs pattern matching. The algorithm is based on vector multiplication, a way of checking the stored data against incoming data. “The vector multiplication process directly tells you which stored pattern matches the input pattern,” says Lu.
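To make the idea concrete, here is a minimal Python sketch of pattern matching by multiplication. It is an illustration only, not the Michigan group's code: the function name `match_pattern` and the four-value patterns are hypothetical. In a memristor array the stored values would typically be cell conductances and the multiply-and-sum would happen in analog hardware; ordinary arithmetic stands in for that here.

```python
def match_pattern(stored_patterns, input_vector):
    """Score the input against every stored pattern with a dot product.

    Returns the index of the highest-scoring pattern and the full score list.
    """
    scores = [
        sum(weight * value for weight, value in zip(pattern, input_vector))
        for pattern in stored_patterns
    ]
    best_index = max(range(len(scores)), key=scores.__getitem__)
    return best_index, scores


# Hypothetical stored patterns, one per row (illustrative values only).
stored = [
    [1.0, 0.0, 1.0, 0.0],
    [0.0, 1.0, 0.0, 1.0],
    [1.0, 1.0, 0.0, 0.0],
]

# Hypothetical incoming data that most resembles the first stored pattern.
incoming = [0.9, 0.1, 0.8, 0.0]

best_index, scores = match_pattern(stored, incoming)
print("best match:", best_index)
print("scores:", scores)
```

The largest score marks the stored pattern that overlaps most with the input, which is the sense in which the multiplication “directly tells you” the match.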

Then the Michigan group took things a step further, programming the memristor array using a brain-inspired approach called sparse coding to save energy. “The firing of neurons is sparse,” Lu says. In the brain, only a small number of neurons fire in response to an image when matching it to something you’ve seen before. In Lu’s system, only the memory cells storing the relevant visual patterns become active. The sparse code was “mapped” onto the memristor array by training it against a set of sample images.
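A rough way to see what “sparse” means here is to describe a signal using as few stored patterns as possible. The sketch below uses greedy matching pursuit, a simple textbook technique chosen for illustration; the article does not say which sparse coding algorithm the group ran, and the dictionary, signal, and `sparse_encode` function are all hypothetical.

```python
def dot(a, b):
    """Dot product of two equal-length lists."""
    return sum(x * y for x, y in zip(a, b))


def sparse_encode(dictionary, signal, max_active=2, tolerance=1e-6):
    """Greedy matching pursuit over a small dictionary of patterns.

    Assumes every dictionary pattern has unit length. At each step the
    pattern that best matches what is still unexplained (the residual)
    becomes active, and its contribution is subtracted from the residual.
    """
    coefficients = [0.0] * len(dictionary)
    residual = list(signal)
    for _ in range(max_active):
        scores = [dot(pattern, residual) for pattern in dictionary]
        best = max(range(len(scores)), key=lambda i: abs(scores[i]))
        if abs(scores[best]) < tolerance:
            break  # nothing left worth explaining
        coefficients[best] += scores[best]
        residual = [
            r - scores[best] * p for r, p in zip(residual, dictionary[best])
        ]
    return coefficients, residual


# Hypothetical dictionary: four single-pixel patterns plus one flat pattern.
dictionary = [
    [1.0, 0.0, 0.0, 0.0],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
    [0.0, 0.0, 0.0, 1.0],
    [0.5, 0.5, 0.5, 0.5],
]
signal = [3.0, 2.0, 2.0, 2.0]

coefficients, residual = sparse_encode(dictionary, signal)
active = [i for i, c in enumerate(coefficients) if c != 0.0]
print("coefficients:", coefficients)
print("active patterns:", active)
```

Only two of the five patterns end up with nonzero coefficients, and the rest stay silent. In hardware terms, that corresponds to only a few cells contributing to the output, which is where the energy saving is expected to come from.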

Lu says these memristor arrays can be stacked on an image sensor, since they don’t use much energy. Instead of sending all the image data to the processor, as existing designs do, the sparse coding hardware could sort out the most important parts and pass those along. He expects this will enable faster, more energy-efficient video systems for self-driving cars. Lu’s group is currently working on integrated designs.

Katherine Bourzac is a freelance journalist based in San Francisco, Calif. She writes about materials science, nanotechnology, energy, computing, and medicine—and about how all these fields overlap. Bourzac is a contributing editor at Technology Review and a contributor at Chemical & Engineering News; her work can also be found in Nature and Scientific American. She serves on the board of the Northern California chapter of the Society of Professional Journalists.