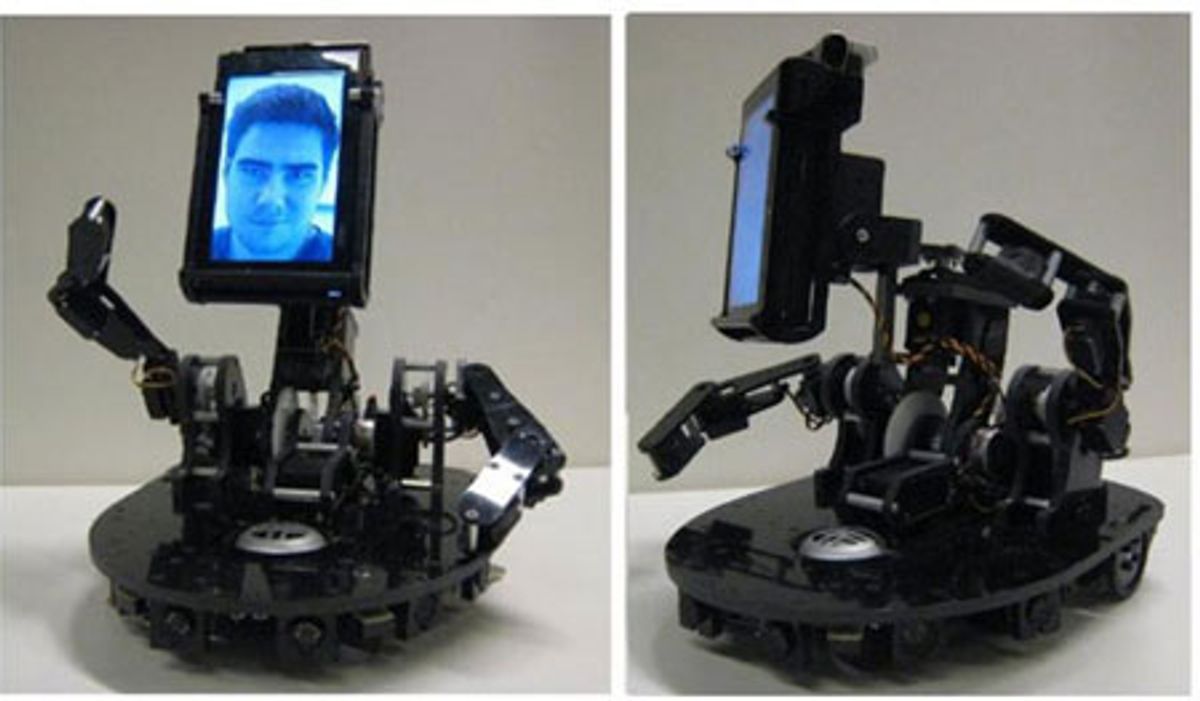

Most telepresence robots (with a few exceptions) aren’t especially presence-y, in that you can see people, and people can see you, but you’re pretty much just a head on a screen on a robotic stick with wheels. MeBot, a project from the Personal Robotics Group at MIT, adds a little bit of personality to telepresence by providing ways for users to communicate non-verbally, through things like head movement, arm movement, and posture.

The clever bit is that you, as the user, don’t need to tell the robot to do any of the expressive stuff that it does with its screen. It watches what you’re doing with your head, and duplicates those socially expressive movements with the robot. Is it effective? You bet:

> We conducted an experiment that evaluated how people perceived a robot-mediated operator differently when they used a static telerobot versus a physically embodied and expressive telerobot. Results showed that people felt more psychologically involved and more engaged in the interaction with their remote partners when they were embodied in a socially expressive way. People also reported much higher levels of cooperation both on their own part and their partners as well as a higher score for enjoyment in the interaction.

Even though it has those little 3-DoF arms, MeBot isn’t designed to do anything in particular with its additional axes of motion. You currently control them sympathetically using a second set of arms, the positions and movements of which are duplicated by the arms on the robot. Conceivably, you could add some grippers to the robot and a more comprehensive control system on the other end, but that would defeat a large part of the purpose (and the beauty) of MeBot: it’s designed to be purely expressive, implying a natural simplicity that requires no extra effort or skill. It just does its thing while you do yours, which is how all the best systems (hardware and software alike) tend to function.
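For the curious, here's roughly what that kind of sympathetic arm control could look like in code. This is a minimal sketch and not MeBot's actual software: the function name `mirror_arm`, the `JOINT_LIMITS` values, and the use of degrees are all assumptions made up for illustration. The idea is simply that each joint angle read from the controller arm gets copied to the matching joint on the robot, clamped so the robot never tries to move past its own range.

```python
# Hypothetical joint limits in degrees for one 3-DoF arm.
# These numbers are illustrative only, not MeBot's real specifications.
JOINT_LIMITS = ((-90.0, 90.0), (-45.0, 45.0), (0.0, 120.0))


def mirror_arm(controller_angles, limits=JOINT_LIMITS):
    """Return robot joint targets that copy the controller arm's angles.

    Each angle is clamped to the corresponding (low, high) limit so the
    robot arm is never commanded outside its assumed range of motion.
    """
    if len(controller_angles) != len(limits):
        raise ValueError("expected exactly one angle per joint")
    return tuple(
        min(max(angle, low), high)
        for angle, (low, high) in zip(controller_angles, limits)
    )


# Example: the second and third joints exceed the assumed limits and get clamped.
targets = mirror_arm((30.0, -60.0, 150.0))
print(targets)  # (30.0, -45.0, 120.0)
```

Note that there's no task logic here at all, no grasp planning or inverse kinematics. The operator moves, the robot copies, and that's the whole control loop, which fits the purely expressive design.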


Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.