Mars For The Rest Of Us

Better cameras, greater bandwidth, and bigger displays put Mars within reach of armchair explorers

This is part of IEEE Spectrum’s Special Report: Why Mars? Why Now?

I slowly scan the nearby rocks and crags, letting my gaze drift up toward the horizon. My eyes are searching for a recognizable shape—anything that’s not a rock—to give me a sense of scale. I come up empty.

Mars is all around me, here in the StarCAVE, a virtual reality enclosure at the University of California, San Diego. Five projectors transform a room the size of a walk-in closet into a 360-degree panorama of the view from the basin of the large Gusev Crater.

For the near future at least, only robots will touch Martian soil. But even after the rusty surface becomes a trampled mess of human boot prints, we—you and I—probably won’t qualify for the trip. So even if “mankind” one day reaches the Red Planet, most of us are destined, at best, to experience its exploration secondhand.

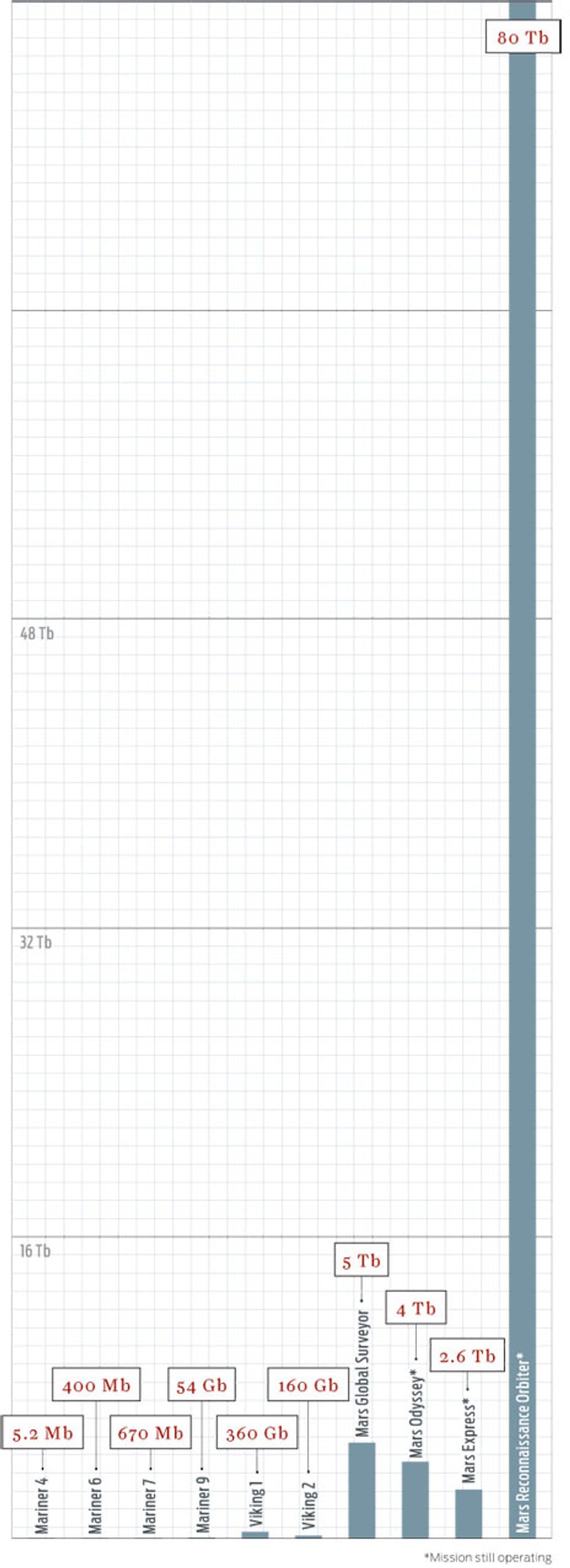

Communications and storage advances have allowed each new orbiter to outdo its predecessors

The good news is that such secondhand participation is likely to be a lot better than it is today. Improved communications, imaging, and visualization technologies will allow NASA to bring much of the experience of being on Mars back to those of us stuck on Earth.

To obtain the view in the StarCAVE, for instance, the Mars rover Spirit parked on a small hill near the Home Plate plateau and began snapping a few pictures a day as it waited out the Martian winter in 2006. Over the next four months, it gathered more than 1400 images, which NASA digitally stitched together into a single 130-megapixel panorama. Standing in the middle of it is so immersive that I immediately feel the urge to explore the scene, to peer around rocks and see what’s behind them.

“You can go into a room, and you’re on Mars,” says Larry Smarr, director of the California Institute for Telecommunications and Information Technology, or Calit2, which runs the StarCAVE. Re-creating Mars here may sound like a mere novelty, but it is more than fantasy: only by maximizing what can be done from the ground can NASA make Mars exploration politically sustainable and financially worthwhile.

From the start, the U.S. and Soviet space agencies recognized the value of connecting with the public directly. When Sputnik became the first artificial satellite, in 1957, it carried a radio transmitter instead of a scientific payload. If you had a shortwave radio, you could hear the beeps from the craft as it passed overhead, proving beyond all doubt that the Soviet Union had conquered space. The Apollo 11 moon landing had similar public-relations value. It would have been considered a great engineering feat in any case, but the event became a shared global experience when its live video broadcast brought the lunar surface into living rooms around the world.

Since winning the space race, however, NASA has abandoned such showmanship in favor of more rational, pragmatic, and scientific pursuits: remotely exploring the solar system and learning how to live in orbit. The unfortunate side effect is that the public’s engagement with the space program has waned, even if inherent interest in space hasn’t. The draw of Mars, in particular, goes back centuries, and every time a new technology has provided better access to the most Earth-like planet in the solar system, the public has embraced it.

Take the Pathfinder mission, which carried the first rover to Mars in 1997. Individual shots from the lander didn’t look much better than the photos the Viking missions had gathered two decades earlier. But this time there was one big difference: the emergence of the World Wide Web. NASA put the Pathfinder photos online as soon as they came back from Mars, sparking an Internet sensation. The images attracted 47 million hits in a single day, one of the biggest 24-hour bursts of traffic in the history of the Internet to that point.

We’re now on the cusp of another revolution in Mars exploration, where public outreach and scientific investigation will go hand in hand. Increasingly sophisticated imaging systems will allow robots to transmit not just individual photos but also enough data to create huge panoramas and virtual environments for anyone to explore. The sheer amount of information will require and reward more human scrutiny than professionals alone can provide. NASA is also learning, if a bit haphazardly, how to leverage Web 2.0 technologies to make missions interactive. Directly connecting with constituents in this way will be no easy task, but it’s NASA’s best opportunity to create a sustainable future for the space program.

The two Viking missions, in the 1970s, were a great success, providing more than 50 000 images of Mars, including the earliest photos from the surface. But the most powerful imagers, the twin cameras on each Viking orbiter, had a resolution that was no better than what you’d find on a cellphone camera today. Compare that with the Mars Reconnaissance Orbiter (MRO), which has been circling the planet since 2006. Among its science instruments is the High-Resolution Imaging Science Experiment (HiRISE), a camera capable of taking 1200-megapixel black-and-white images and resolving features as small as a meter in size (including the tracks left by the rovers).

MRO sends the images to Earth via the fastest connection in deep space, capable of transmitting nearly 6 megabits per second. It has already sent back 80 terabits of data, more than all the other deep-space missions combined. After it’s finished collecting data, MRO is slated to remain in orbit to serve as a high-speed communications link for future missions.
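To put the 80-terabit figure in perspective, here is a back-of-the-envelope calculation in Python. It assumes the link ran nonstop at a flat 6 megabits per second, which it does not (the real rate falls as Earth and Mars drift apart, and the ground antennas are shared with other missions), so the result is only a lower bound on transmission time. The function name is invented for illustration.

```python
SECONDS_PER_DAY = 86_400


def transmit_days(total_bits: float, rate_bps: float) -> float:
    """Days of continuous transmission needed to move total_bits at a fixed rate."""
    return total_bits / rate_bps / SECONDS_PER_DAY


if __name__ == "__main__":
    total_bits = 80e12   # 80 terabits, as reported in the article
    rate_bps = 6e6       # assumption: a flat 6 Mb/s, roughly the link's peak
    print(f"{transmit_days(total_bits, rate_bps):.0f} days")  # 154 days
```

At that idealized rate the transfer works out to roughly 154 days of uninterrupted downlink; any real schedule takes longer.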

Once Mars pictures make it to Earth, however, NASA faces an enviable problem: The stitched-together panoramas contain so many pixels that agency scientists have no way to view them in full on conventional monitors. Around the corner from the StarCAVE, Calit2 has another display, called Varrier. It consists of 60 liquid-crystal displays arranged in a half-cylinder with a total of 115 million pixels. A photographic-film screen affixed to a glass panel is mounted in front of each LCD and, combined with a head-tracking system, provides stereoscopic three-dimensional images without the need for special glasses. “When you map a panorama into this,” Smarr says, “you see the global structure of this place but also the fine details.”
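A quick pixel count shows the scale of the problem. The Python sketch below divides the 130-megapixel Spirit panorama by the pixels on a single 1920 × 1080 monitor; that monitor size is an assumption standing in for a “conventional” display, and the count ignores aspect ratio and bezels, so it gives a floor rather than an actual screen layout.

```python
import math


def monitors_needed(image_pixels: float, width: int, height: int) -> int:
    """Minimum number of width x height monitors whose pixels add up to the image.

    Counts pixels only; real tiling also depends on aspect ratio and bezels.
    """
    return math.ceil(image_pixels / (width * height))


if __name__ == "__main__":
    panorama_pixels = 130e6   # Spirit panorama, from the article
    varrier_pixels = 115e6    # Varrier total, from the article
    varrier_panels = 60       # number of LCDs in the Varrier, from the article
    print(monitors_needed(panorama_pixels, 1920, 1080))  # 63 (assumed 1080p monitor)
    print(panorama_pixels > varrier_pixels)              # True
    print(f"{varrier_pixels / varrier_panels / 1e6:.1f} MP per panel")  # 1.9 MP per panel
```

By raw pixel count, the panorama would need at least 63 such monitors, and it still exceeds the Varrier’s 115 million pixels, whose 60 panels average roughly 1.9 megapixels each.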

Starting with a view of the whole planet, I use a wireless handheld controller to zoom in and swoop down inside Valles Marineris, a vast chasm that puts the Grand Canyon to shame. I can sense the depths of the canyon from the steep walls at the periphery of my vision. In February, Calit2 and the NASA Lunar Science Institute set up a smaller three-by-three grid of screens—which they call an OptIPortal—giving NASA its first 40-million-pixel display. It may be small compared to the Varrier, but it’s a huge improvement over any other NASA screen. The agency is interested in building more.

But NASA is not alone in noticing the potential of the new technologies. The U.S. Congress also seems to have some sense of how these technologies might benefit the people who, after all, pay the space program’s bills. In the 2008 NASA Authorization Act, the House of Representatives stipulated that the agency “develop a technology plan to enable dissemination of information to the public to allow the public to experience missions to the moon, Mars, or other bodies within our solar system by leveraging advanced exploration technologies.”

“There are people who view projects as having a scientific part and an outreach part. To me, the boundary is artificial and not particularly useful,” says Michael Sims, an intelligent-systems scientist at NASA’s Ames Research Center, in Mountain View, Calif. Sims helped develop the Mars rover that captured the images projected in the StarCAVE. The seamless vistas came courtesy of the Pancam, a robotic pan-and-tilt camera system created in a collaboration between Ames, Carnegie Mellon University, and Google. (The team created a commercial spin-off of the technology, called GigaPan, in 2008.)

As he talks, Sims sits in front of his computer, manipulating a photo that looks like the mouth of a cave. It’s actually a GigaPan image of a lava tube in New Mexico. He begins zooming in—and in and in—and within a second, the picture has gone from one that shows the tube opening and its desert surroundings to one that fills the monitor with the details of a single rock. “Everyone is not going to go into caves [even] where we have wonderful caving,” says Sims. “But there’s no reason why we can’t all experience that.”

Probing Martian images, however, can be an enormous job. “If I showed each HiRISE image for 10 seconds, it would take me about four years to show them all,” says Alfred McEwen, the instrument’s principal investigator. There’s simply too much data for the agency to handle by hand, and computers are notoriously bad at image analysis. NASA scientists will need assistance from the public, and some of them have already begun experimenting with crowd sourcing to help process large amounts of image data.

Through NASA’s Clickworkers program, users look at tiny slices of HiRISE images and mark off features such as channels, gullies, dust-devil tracks, boulder fields, and lava flows. This helps NASA scientists spot interesting features in unexpected places that they would otherwise miss. Users can also examine older photos from the Mars Orbiter Camera (MOC), which flew on the Mars Global Surveyor from 1997 to 2006, and even suggest targets for HiRISE to reimage.

Taking a closer look at old images can certainly pay off: When NASA scientists compared MOC images of the same crater taken in 1999 and 2005, they found a new gully deposit, the first evidence that liquid water still occasionally flows on the surface.

Another such effort to engage the public involves the Stardust spacecraft, which flew through the dusty coma of comet Wild 2 in 2004, collecting particles in aerogel. On its way there, the probe also passed through regions of interstellar dust. Mission scientists took more than 1.6 million photographs of the gel under a microscope, but they expect to find only about 45 grains of interstellar dust. The Stardust@home project provides users with a Web browser–based virtual microscope to search for the telltale tracks in the images.

The project directly contributes to science: The user who first identifies a speck that turns out to be interstellar dust will be automatically included as a coauthor on the scientific publication of the results. Part of what makes Stardust@home work is its scoring system, which rewards users for corroborating the findings of other participants. And scoring high requires skill—each image is, in fact, a collection of scans taken with focal planes set at different depths in the gel, which lets users do the virtual equivalent of adjusting the microscope’s focus as they examine a speck to decide whether it’s truly interstellar dust.
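The article does not spell out how Stardust@home’s scoring works, so the Python sketch below is purely hypothetical. It shows one simple way to reward corroboration: a user earns a point whenever their call on an image (dust track or not) matches the majority of the other people who viewed the same image. The function name, the user names, and the data layout are all invented for illustration.

```python
def corroboration_scores(votes: dict[str, dict[str, bool]]) -> dict[str, int]:
    """Hypothetical scoring: reward users who agree with other viewers.

    votes maps image_id -> {user_id: True if that user flagged a dust track}.
    A user earns one point per image where their call matches the majority
    of the *other* users who saw that image. Ties, and images nobody else
    has viewed yet, earn no points.
    """
    scores: dict[str, int] = {}
    for calls in votes.values():
        for user, call in calls.items():
            scores.setdefault(user, 0)
            others = [c for u, c in calls.items() if u != user]
            yes = sum(others)          # True counts as 1
            no = len(others) - yes
            if yes == no:              # tie, or no other viewers (0 == 0)
                continue
            if call == (yes > no):     # agrees with the others' majority
                scores[user] += 1
    return scores


if __name__ == "__main__":
    votes = {
        "img1": {"ana": True, "ben": True, "cy": True, "dee": False},
        "img2": {"ana": False, "ben": False, "cy": True, "dee": False},
        "img3": {"ana": True},  # only one viewer so far
    }
    print(corroboration_scores(votes))  # {'ana': 2, 'ben': 2, 'cy': 1, 'dee': 1}
```

Comparing each user against everyone else, rather than against a majority that includes their own vote, keeps a user from corroborating themselves. A real system would likely need further safeguards, for example images whose answers are already known, so that a crowd agreeing on a wrong call is not rewarded.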

But even the excitement of improving your score can eventually wear off as you adjust the focus on the 20th grayscale image filled with nothing but bubbles and imperfections. Would people actually want to help out with all the tedious work of science? Yes—or so they claim.

Dittmar Associates, a consulting firm, has conducted surveys for NASA over the past five years to understand how the public perceives the agency. While most respondents generally support the space program, they’ve said they want to feel more engaged, more a part of the action. The surveys asked disengaged 18- to 25-year-olds, “What would get you interested in and excited by NASA?” The top answers: being able to go into space themselves, participating in missions in some other way, and having the ability to view what robots and astronauts are seeing in real time (or as quickly as NASA gets the images).

The first request is difficult to grant, but Stardust@home’s crowd-sourcing program addresses the second wish. The project has attracted more than 26 000 “dusters,” who have collectively analyzed some 40 million images. The Clickworkers program, which initially involved the much duller task of marking the edges of craters, attracted more than 80 000 participants [see sidebar of participatory programs, “Make Your Mark”].

But what about being able to see what the robots see? NASA may just be able to go one better, by placing you in the rover’s treads, so to speak.

“In the past 25 years in field robotics, one of the greatest advances in autonomy is virtual environments,” says Sims, the scientist at NASA Ames. Sims and his colleagues developed a program called Viz, which uses images from the stereo cameras on the Mars Exploration Rovers Spirit and Opportunity to create a virtual version of the rovers’ surroundings. “It’s a way to give a better perspective on what you’re seeing in images,” he says. Round-trip communication with Mars can take up to 40 minutes, so operators can’t steer the rovers in real time. Instead, while they wait for new data, they can fly around the virtual environments and plan the rovers’ next moves.

The Pathfinder mission was one of the first to use virtual worlds to improve rover operation. When its Sojourner rover was preparing to roll off the lander and onto Martian soil, one of the ramps to the surface was stuck in midair—a fact that was obvious in the virtual environment but difficult to discern from images alone. In another instance, an examination of the virtual world prevented the rover from gouging itself on an overhang it was approaching.

Besides just avoiding unseen obstacles, modeling a robot’s surroundings can help NASA do real science. With Spirit and Opportunity, planetary scientists have used virtual environments to investigate how rock strata formed and changed over time. Sims compares this approach with the utility of multiple viewpoints in a video game. “For problem solving, it’s nice to be able to have the bird’s-eye view,” he says. The fact that NASA is already creating gamelike virtual environments is good news for the rest of us.

Mainstream audiences got their first glimpse of the virtual-reality future when Google added Mars to its Google Earth application in February. Microsoft’s WorldWide Telescope program plans to follow suit shortly. For a more up-close look at the surface, NASA has used the virtual world Second Life to build a visualization of Mars’s Victoria Crater. It’s rendered at nearly one-third its actual scale, making it one of the largest features that virtual visitors can fly over and explore. NASA even has plans for a massively multiplayer online game that may incorporate planetary environments from real data.

The visualizations created for Spirit and Opportunity were available only to the team controlling the rovers. The next step is to make such spaces more sharable. “You can imagine scientists working in these virtual worlds and planning the mission in real time,” Smarr says.

Already Calit2 and the Lunar Science Institute have connected their giant displays via dedicated fiber optics to view huge lunar vistas while simultaneously teleconferencing in high-definition video. If the same connectivity extended beyond the professional network, “everyone in the world could be in that room with them, making contributions in real time,” says Smarr. But before we can entertain such a notion, NASA will have to figure out how to make sense of so many voices.

Twitter, the microblogging service, provides one means to deal with cacophony by at least keeping comments short. Veronica McGregor, a communications officer at the Jet Propulsion Laboratory, in Pasadena, Calif., started a Twitter feed for the Mars Phoenix mission, a lander that traveled to Mars last year. In a stroke of inspiration, she decided to tweet in the first person. What started as a way to save characters (“I” instead of “the lander” or “Phoenix”) soon gave birth to the first Mars robot with personality.

Tweets such as “Are you ready to celebrate? Well, get ready: We have ICE!!!!! Yes, ICE, *WATER ICE* on Mars! woot!!! Best day ever!!” eventually attracted more than 40 000 followers, making @marsphoenix the 30th most popular account on Twitter at one point during the mission.

That represents a different type of participatory exploration—where Web 2.0 technologies make it easier to have personal contact with the space program. Twitter’s short format allowed McGregor to respond in real time to hundreds of questions from readers who followed the feed.

Since then, almost all NASA missions and centers have started Twitter accounts. But only a few have really recaptured the same magic. Viral success is hard to repeat, and not every communications officer has McGregor’s flair. What NASA needs is a group that can help teach the agency how to break out of its insular traditions.

With that objective in mind, S. Pete Worden, the director of NASA Ames, decided in 2006 to turn loose a bunch of twentysomethings on the center. They formed the Collaborative Space Exploration Laboratory, or CoLab, which became the focal point of the participatory exploration movement within NASA. In June 2007, CoLab and NASA Ames hosted the Participatory Exploration Summit, which sought to link like-minded projects from across the agency with partners “outside the gates.”

Drawing many of its goals and ideals from the interactivity of Web 2.0 applications, CoLab has tried to spark collaborations that go beyond the traditional aerospace companies with NASA contracts. Lab members have used the experience of Stardust@home and similar projects to help the scientists running new missions connect with amateurs. CoLab is also behind the CosmosCode project, which is designed to provide an open-source collection of aeronautics and astronautics software.

Somewhat fittingly, CoLab’s biggest presence has been in that most ephemeral of digital spaces: Second Life. Volunteers helped build CoLab Island, which serves as the virtual location of weekly meetings between NASA and outside volunteers.

But making space accessible is difficult. Web 2.0 platforms—Twitter, Facebook, Second Life—are a great way to reach a certain type of Web-savvy amateur, but they leave out a big part of the potential audience. There’s no one-size-fits-all solution to engaging the public. While the Clickworkers project is as easy a way to kill time as playing solitaire, the work eventually gets repetitive. Contributing to NASA’s open-source software is much more intellectually stimulating, but it’s an option only for those who know how to code.

Another problem is that even innovative programs have trouble shaking off the stigma of being mere “outreach and education” activities—in other words, not important. “We’re going for ‘inreach’ as well,” CoLab project coordinator Delia Santiago told me when I visited in October, referring to contributions from the outside that demonstrably help NASA rather than just cost it money. But she knew that CoLab still needed to prove its case. “We have to show our relevance,” she said. “We have to show that we actually add value.”

The CoLab staff was seeking no less than a cultural change within NASA, quite an undertaking in a big, lumbering bureaucracy. Since my visit, the CoLab program has “paused for a bit,” according to Santiago, although many of the programs it championed live on.

Maybe NASA wasn’t quite ready for such a big shift. Take the case of Ariel Waldman. She was hired at CoLab specifically for her social-networking skills, but the contractor she worked under had standard rules that expressly prohibited using social-networking sites at work. After three months of trying, without success, to get permission to use the tools she needed, Waldman gave up and started her own Web site, Spacehack.org. It collects and organizes the disparate and jumbled set of events, projects, and communities for amateurs interested in space—and it does so better than any NASA site.

It’s a long-held axiom among some segments of the space community that while robotic missions are great for doing science, you have to have a human program, because that’s what excites constituents and Congress enough to pay the bills. Others perceive a true need for people in space.

“Robots discover. Humans explore,” Kent Joosten, a systems engineer at Johnson Space Center, said at a space conference last year. “Exploration is a personal endeavor. There are hundreds of thousands of boot prints on the moon.” He has a point—astronauts on Mars could think on their feet, without relying on delayed radio instructions. Even roboticists agree. “Some aspects of exploration will never be done by a robot,” Sims says.

But rather than relying on human spaceflight to rally support for the rest of NASA, the agency may find that interactive robotics becomes the new public crowd-pleaser, if it hasn’t already. Dittmar Associates’ surveys of 18- to 25-year-olds found that they had little interest in or knowledge about either NASA or Constellation, the program to return to the moon, but they were excited by Spirit and Opportunity.

Generation Y represents only about 15 percent of the U.S. workforce today, but experts predict that share will nearly double within five years. Persuading this group to take part in virtual space exploration could extend political support for the U.S. space program beyond Texas and Florida.

And the next generation of Mars robots is likely to be even better, eventually providing streaming high-definition video and more. “The vision is sort of the Star Trek holodeck,” says NASA Ames’s Worden. “What does the Martian wind feel like on your face?” he asks, a question that can be answered only in virtual reality, not in Mars’s thin carbon dioxide atmosphere.

Assuming that NASA realizes the benefits of bringing Mars back to Earth, we might eventually have thousands of answers to that question.

For more articles, go to Special Report: Why Mars? Why Now?