Make Way for Flexible Silicon Chips

We need them because thin, pliable organic semiconductors are too slow to serve in tomorrow’s 3‑D chips

Imagine rising from bed to catch an early flight. As you head for the shower, still groggy, a tiny, flexible sensor chip in yesterday’s clothes reminds you that they need to be washed. At breakfast, you check on your flight status and then stream the latest news to a tablet-size flexible display, flipping through pages of text and video. A message from your doctor pops up, reminding you to wear your medical diagnostic patch and pack your medication. As you leave your house, tiny sensors in the carpet and wallpaper put some appliances into standby mode. At the airport, a flexible electronic ticket guides you to the right gate, and a wireless interface between your ticket, your passport, and a retinal scanner gives you immediate clearance.

Such seamless integration of computing into everyday objects isn’t quite here yet, in large part because we still don’t have cheap, thin, flexible electronics. But the technology is already on a path toward ubiquity: radio frequency identification (RFID) tags are used to track goods (and, increasingly, pets and people), flexible sensors in car seats warn parents not to leave their babies behind when they go shopping, and bendable displays are on the way for e-readers. These inherently flexible products can be mass-produced, and some can even be printed, inkjet style, to create large displays.

Made primarily from nonsilicon organic and inorganic semiconductors, including polymers and metal oxide semiconductors, flexible chips are an exciting alternative to rigid silicon circuits in simple products like photovoltaic cells and television screens, because they can be made for a fraction of the cost. But today’s flexible electronics just don’t perform as well as silicon chips made the old-fashioned way. For example, in February 2011 the first microprocessor made with organic semiconductors was introduced, but the 4000-transistor, 8-bit logic circuit operated at a clock frequency below 10 Hz. Compare that with the Intel 4004, introduced in 1971, which worked at 100 kilohertz and above, at least four orders of magnitude faster.
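As a quick check on that comparison, the gap can be worked out from the two clock rates quoted above. This is only a sketch: the variable names are illustrative, and because the organic processor ran below 10 Hz, the 10 Hz figure is an upper bound, so the result is a lower bound on the real gap.

```python
import math

# Clock rates quoted in the text. The organic chip ran "below 10 Hz",
# so 10 Hz is an upper bound and the true gap is at least this large.
organic_clock_hz = 10.0
intel_4004_clock_hz = 100e3  # 100 kilohertz

ratio = intel_4004_clock_hz / organic_clock_hz
orders_of_magnitude = math.log10(ratio)

print(f"speed ratio: at least {ratio:.0f}x")
print(f"orders of magnitude: at least {orders_of_magnitude:.1f}")
```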

A new technique for creating ultrathin silicon chips, though, could lead to many high-performance flexible applications, including displays, sensors, wireless interfaces, energy harvesting, and wearable biomedical devices. Silicon is an ideal semiconductor for such chips because its ordered structure allows for well-behaved switches that are far faster than organic alternatives.

So how do we get the best of both worlds? By combining today’s cheap, large-scale flexible electronics with silicon that’s just as powerful as the best available today—but thinner.

Today’s silicon chips are usually built on wafers up to a millimeter thick. In this bulky state, the wafers are stiff and stable enough to survive the fabrication process. Slimmed down to thicknesses of 100 to 300 micrometers, silicon wafers are still stiff, but they must be handled carefully. Between 50 and 100 µm, a wafer may fracture under its own weight.

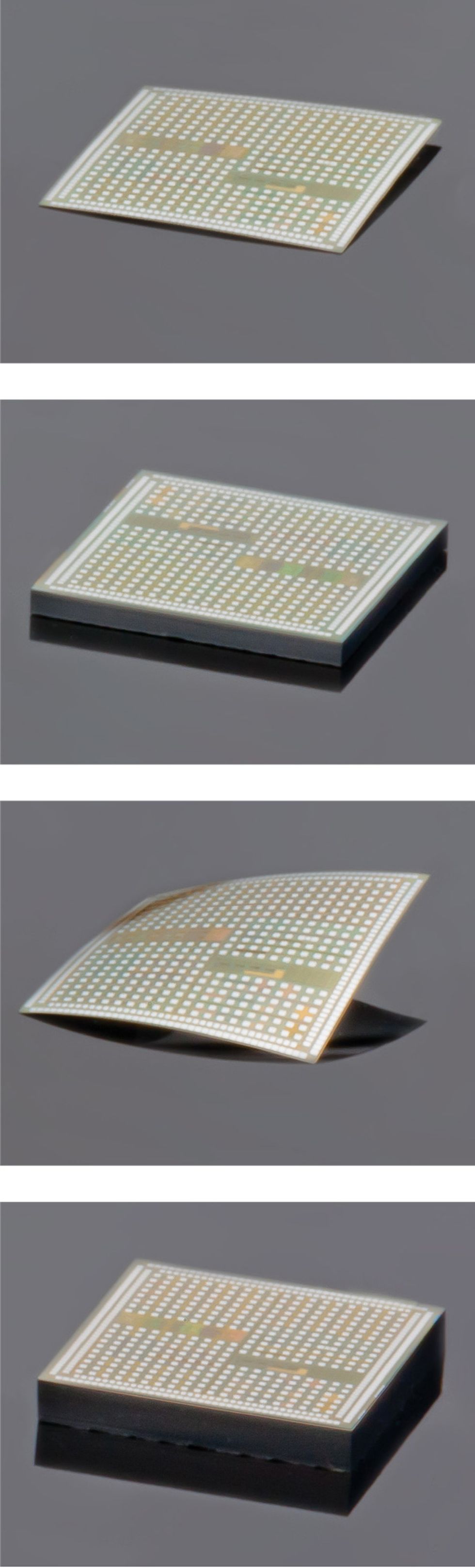

Strangely, though, below 50 µm, silicon chips hit a sweet spot: They get more flexible and more stable. Below 10 µm, a silicon chip even becomes optically transparent, which eases the alignment of chips during assembly and allows for their use as sensors on windows and other transparent surfaces. These sub-50-µm chips are ideal for the futuristic thin-film electronic applications I described above. They’re able to bend, twist, and roll up, yet they’re as strong as stainless steel—after all, they’re still made of high-performance crystalline silicon.
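The thickness ranges described above can be summarized as a small lookup. This is a minimal sketch, not a materials model: the function name and the wording of the labels are illustrative, and the treatment of exact boundary values (for instance, whether a 100-µm wafer counts as fragile) is an assumption, since the text gives only approximate ranges.

```python
def handling_regime(thickness_um: float) -> str:
    """Map a silicon thickness in micrometers to the behavior described in the text.

    Boundary handling is an assumption; the article gives approximate ranges only.
    """
    if thickness_um <= 0:
        raise ValueError("thickness must be positive")
    if thickness_um < 10:
        return "flexible, stable, and optically transparent"
    if thickness_um < 50:
        return "flexible and stable"
    if thickness_um <= 100:
        return "may fracture under its own weight"
    if thickness_um <= 300:
        return "stiff, but must be handled carefully"
    return "stiff and stable"


for t in (5, 30, 75, 200, 1000):
    print(f"{t:>5} um: {handling_regime(t)}")
```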

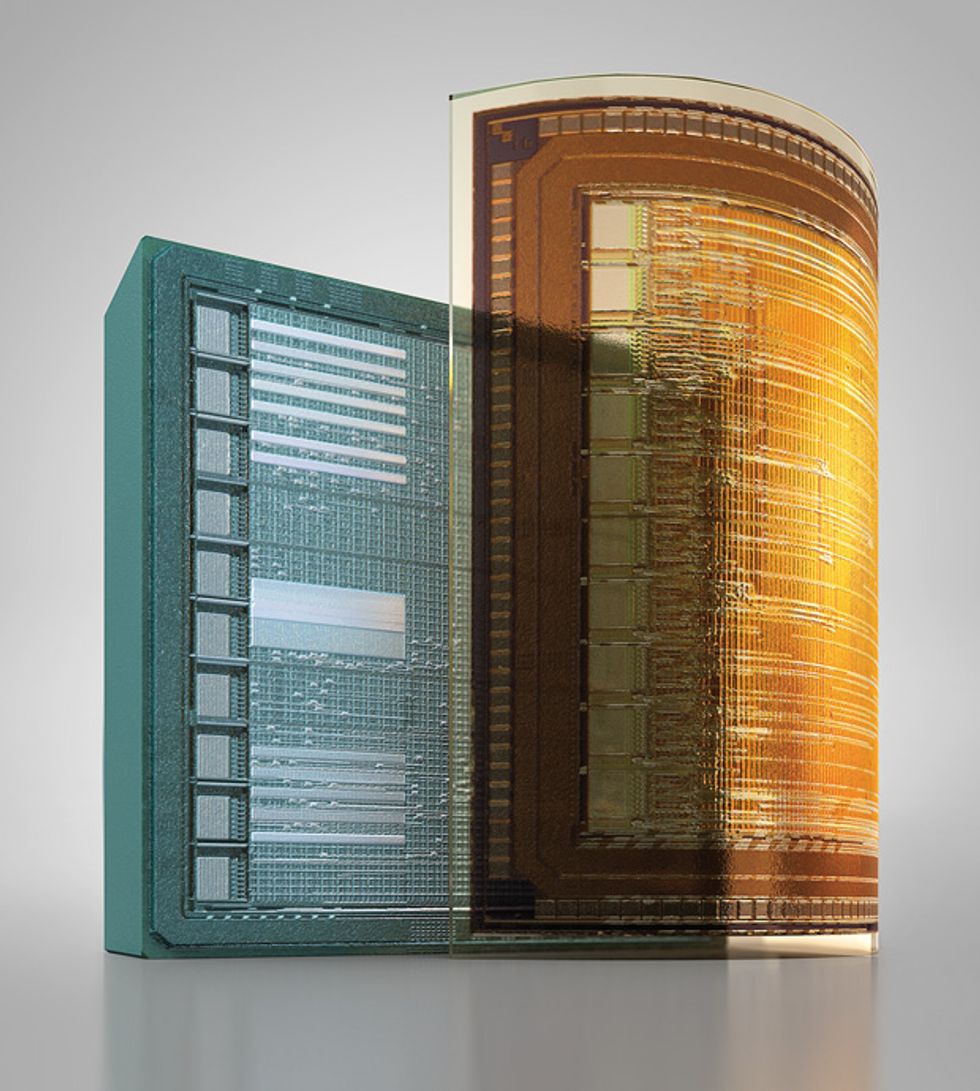

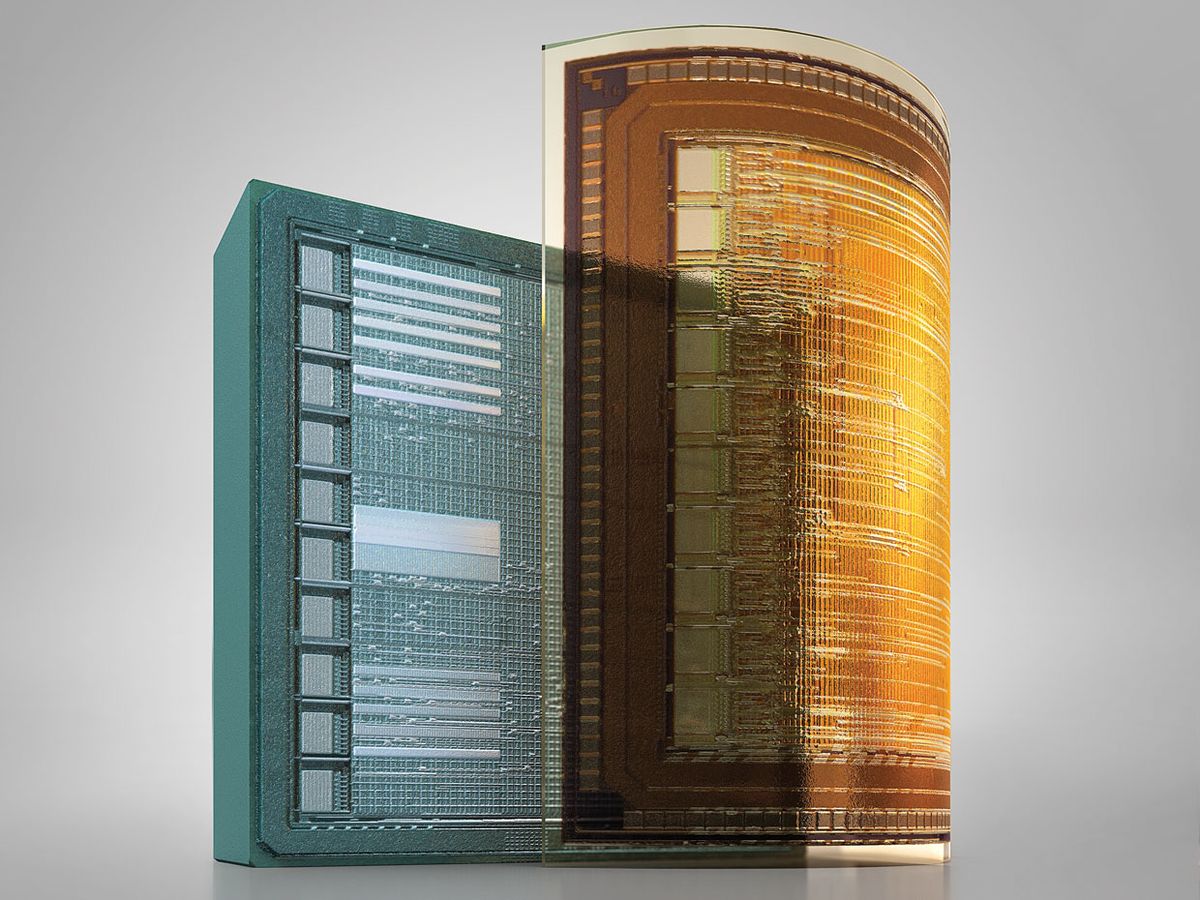

And thinness enhances stackability. This is a critical attribute, given the advent of the three-dimensional integrated circuit, or 3-D IC. As chips become more complex and dense with transistors, the metal interconnects between the transistors grow longer and more convoluted. The purpose of stacking is to shorten the distance between transistors by connecting them vertically using through-silicon vias, thus speeding performance. That’s just what flash memory designers are trying to do right now.

The current generation of 3-D flash memory ICs is built from stacks of 30-µm-thick chips. But to accommodate the smaller transistors that are on the way, chips will need to get even thinner. When that happens, they’ll be ready to support a whole new world of applications. In combination with thin-film electronics, ultrathin silicon chips can be placed on a flexible substrate (commonly a polymer foil, but also paper or even cloth) to form a hybrid system-in-foil (SiF) device. The resulting thin but highly integrated circuits can provide all the muscle needed to diagnose our ills, choreograph our household appliances, and ease our early morning travel.

Obtaining ultrathin silicon chips isn’t a simple proposition. Typically, the chips are cut from large, pizza-size wafers of ultrapure silicon. The electronics reside in the upper 5 to 10 µm of the disk—the top 1 percent, that is. The rest serves as a sturdy base that can withstand the rigors of the automated fabrication line.

The most straightforward way to make a thin chip is to grind away that base. Conveniently, this can be done after the circuits are drawn and before the wafers are diced into chips, so that the sturdy substrate is still present during processing. This subtractive technique was the first strategy suggested by the International Technology Roadmap for Semiconductors in 2005, when it began to account for wafer thinning.

But grinding and dicing aren’t gentle; they introduce crystalline defects and cracks at the edges of the wafer. There are work-arounds that can protect the wafer, but below 50 µm these steps become prohibitively expensive. As wafers get thinner, it’s also more difficult to grind them to a uniform thickness across their entire diameter. Chipmakers must stabilize extremely thin wafers, gluing them temporarily to silicon or glass wafers, known as carriers; mounting and removing those carriers is an expensive process.

Another way to slim down a chip is by building a thin film of silicon on a layer of oxide, which itself lies on a thick silicon substrate. These silicon-on-insulator (SOI) wafers—so-called because silicon oxide is a wonderful insulator—lend themselves to processes that remove silicon selectively and uniformly. SOI wafers cost about 10 times as much as conventional wafers, however, and as with the wafer-grinding technique, they require extra substrates during handling. On top of these practical difficulties, both subtractive techniques waste 99 percent of the silicon wafer. It’s like a baker throwing away the bottoms of his muffins and selling only the crunchy tops.
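The 99 percent figure follows from the numbers given earlier: the electronics occupy only the top 5 to 10 µm of a wafer up to a millimeter thick. The sketch below assumes a 1000-µm wafer and ignores silicon lost to dicing; the function name is illustrative.

```python
def wasted_fraction(wafer_thickness_um: float, active_layer_um: float) -> float:
    """Fraction of the wafer thickness discarded when only the active layer is kept."""
    if not 0 < active_layer_um <= wafer_thickness_um:
        raise ValueError("active layer must be positive and no thicker than the wafer")
    return 1.0 - active_layer_um / wafer_thickness_um


# Assumed wafer thickness: 1000 um (the "up to a millimeter" case in the text).
for active_um in (5, 10):
    share = wasted_fraction(1000, active_um)
    print(f"{active_um} um active layer: {share:.1%} of the wafer thickness discarded")
```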

The alternative is to build the thin chip from the ground up. It’s a radical solution, one that runs counter to half a century of chipmaking, but as applications require thinner and thinner chips, the much less wasteful additive technique looks better and better.

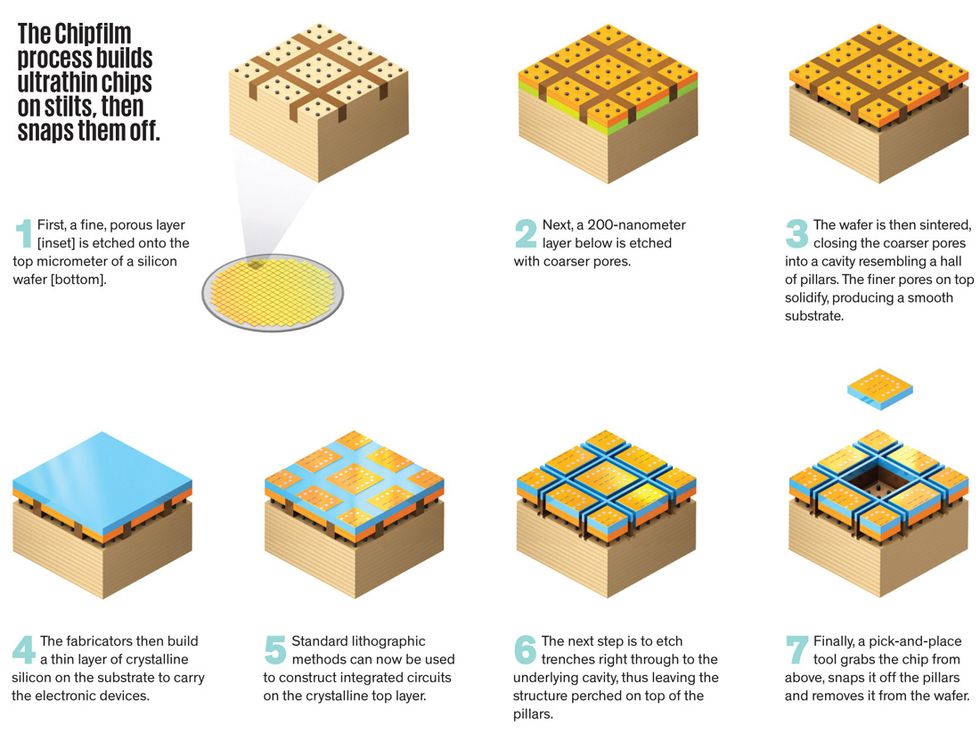

At the Institut für Mikroelektronik Stuttgart, in Germany, we are developing just such an additive technique, under the trade name Chipfilm. It entails growing crystalline silicon, layer by layer, on a foundation laced with sealed cavities. For this scheme, we attach the crystalline silicon layer to an array of small anchors, ensuring that the foundation will be strong enough to support the chip throughout the processing but weak enough to let us snap the finished chip off the top of the wafer. Then we can reuse the bulk of the silicon as a substrate.

We begin by etching a 1-µm layer of porous silicon onto a solid wafer and then a second, 200-nanometer layer of more coarsely porous silicon underneath that. Next, we sinter both layers at high temperature, which causes the nanopores in the coarse layer to merge, like tiny soapsuds fusing into larger bubbles. That fusion closes the coarser pores in the lower layer, producing one continuous cavity interrupted by vertical pillars.

The surface layer serves as a seed for the crystalline silicon, which we grow over the entire surface of the wafer to the desired thickness. Then the chip goes through typical processing—the hundreds of steps needed to create the electronic transistor functions and metal interconnects in and on the chip. After fabrication is complete, the surface layer is still firmly attached to the thick silicon wafer by the array of pillars within the buried cavity.

To snap the chip off the base, we etch a deep trench at the edges of the chip and down into the cavity. That leaves the chip attached to—and supported by—the pillars alone. Our process then makes novel use of a well-known instrument, the pick-and-place tool. This vacuum gripper is typically used to pick up and transfer chips, but we use it to grab the chip and tug it, snapping the vertical pillars with mechanical force. It’s not hard to do, because of the presence of the trench.

By that point, the pillars will have done their job, which is to keep the chip intact during the many fabrication steps that would otherwise ruin a chip as thin as ours. As the chip is always attached to something—either a substrate or the vacuum gripper—it never breaks or rolls up. The pick-and-place tool can then place each chip onto a stack or a flexible substrate with other thin-film components.

After removing the chips, we can polish and recondition the original wafer so that the process can begin again. Each repetition thins down the wafer, but the recycling can go on for quite a while before the wafer becomes too delicate to handle. A 1-millimeter-thick wafer could be whittled down to as little as 400 µm, producing about 50 layers, each of which yields many thin chips. We throw out the remaining stub, but with this technique we waste far less silicon than we do with the subtractive techniques I mentioned earlier.
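Those figures imply a silicon budget per reuse cycle, which can be sketched as follows. The function names are illustrative, and the per-cycle figure lumps together everything consumed in one pass (the grown chip layer, the porous layers, and whatever polishing removes); the text does not break that total down.

```python
def silicon_per_cycle_um(start_um: float, stop_um: float, cycles: int) -> float:
    """Average wafer thickness consumed per reuse cycle, in micrometers."""
    if cycles <= 0 or stop_um >= start_um:
        raise ValueError("need at least one cycle and start_um > stop_um")
    return (start_um - stop_um) / cycles


def reuse_cycles(start_um: float, stop_um: float, per_cycle_um: float) -> int:
    """Whole number of cycles before the wafer reaches its minimum thickness."""
    if per_cycle_um <= 0:
        raise ValueError("per-cycle consumption must be positive")
    return int((start_um - stop_um) // per_cycle_um)


# Figures from the text: 1 mm wafer, 400 um floor, about 50 layers.
print(silicon_per_cycle_um(1000, 400, 50))
print(reuse_cycles(1000, 400, 12))
```

Under these numbers, each cycle consumes about 12 µm of the wafer on average.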

Subtractive techniques do sometimes have a logistical advantage, because they can be applied after the chip has been fabricated. But because a fully processed silicon wafer is about 100 times as valuable as a virgin silicon wafer, this advantage is realized only if the downstream grinding processes have a high success rate. Those success rates, as we’ve pointed out, decline as chips get thinner and thinner. By contrast, an additive approach shifts most of the thinning steps to the beginning, when any mistakes would ruin an unprocessed wafer rather than a valuable completed one. Another thing: When you’re building from the ground up, the thinner the chip, the cheaper it is to make. That relationship is precisely the opposite of what you get with the subtractive techniques.
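The risk argument can be illustrated with a simple expected-loss comparison. Only the 100-to-1 value ratio comes from the text; the failure rate below is a purely hypothetical placeholder, and the model deliberately ignores later steps such as the final chip release, so it is a sketch of the reasoning rather than a cost model.

```python
VIRGIN_WAFER_VALUE = 1.0                             # arbitrary unit
PROCESSED_WAFER_VALUE = 100.0 * VIRGIN_WAFER_VALUE   # ratio stated in the text


def expected_loss(failure_rate: float, value_at_risk: float) -> float:
    """Expected value lost per wafer for a step that fails with the given probability."""
    if not 0.0 <= failure_rate <= 1.0:
        raise ValueError("failure_rate must be between 0 and 1")
    return failure_rate * value_at_risk


# Hypothetical failure rate, chosen only for illustration.
hypothetical_failure_rate = 0.02

# Subtractive: thinning happens after fabrication, so a finished wafer is at risk.
subtractive_loss = expected_loss(hypothetical_failure_rate, PROCESSED_WAFER_VALUE)
# Additive: most thinning steps happen up front, so an unprocessed wafer is at risk.
additive_loss = expected_loss(hypothetical_failure_rate, VIRGIN_WAFER_VALUE)

print(f"subtractive: {subtractive_loss:.2f} units lost per wafer")
print(f"additive:    {additive_loss:.2f} units lost per wafer")
```

At equal failure rates, the expected loss differs by the same factor of 100 as the wafer values, which is why the subtractive approach depends so heavily on a high success rate.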

Put it all together and the costs and benefits still favor subtractive techniques for chips that are more than 100 µm thick—about the thickness used in today’s smart cards. But for chips less than 30 µm thick—a size ideal for both 3-D ICs and large, flexible SiF applications—the additive approach appears to be more cost-effective.

Introducing a new technology is always a chicken-and-egg problem: Which should come first, the technology or the application? In the case of ultrathin chips, that question has already been answered, to a certain degree, by the industry’s commitment to 3-D ICs. To sustain the miniaturization of microelectronics, the industry road map calls for 5- to 10-µm-thick chips to be used as layers in 3-D stacks by 2020. Chipmakers have a way to go to meet that goal; the thinnest chips currently being made by subtractive techniques in an industrial setting are 50 µm thick.

Our Chipfilm process can make chips as thin as 10 µm, but it isn’t yet ready for large-scale production. For example, because the sintering process leaves some tiny bumps on the upper seed surface, the crystalline silicon that grows on it can develop slight defects or stacking faults. By optimizing the sintering conditions along with the thickness and porosity of the upper silicon layer, we will improve the quality of silicon that grows above it and the circuits we can make. We’re also optimizing the final-stage cracking technique, which damages 5 percent of the chips, to improve yields even further.

Even after our ultrathin chips are finished, however, there are further hurdles for them to clear. Their prized flexibility makes them hard to attach to a substrate. Bubbles can form between the substrate and the chip, like the ones that sometimes get trapped between a protective film and a touch screen. If pressure is then applied to the chip, those bubbles can fracture the die.

These challenges should be surmountable in the seven years the road map for 3-D chips gives us. Meanwhile, the race to develop such chips will drive other applications of ultrathin chips. By 2020, the market for e-paper tablets and other high-performance displays will likely be large enough to call for ultrathin silicon chips. In the meantime, we can greatly improve the reliability of flexible-chip applications like RFID and electronic tags for currency and security documents if the chips are as thin and flexible as the foil patch on which they’re mounted.

Then come the truly mind-boggling applications. Because ultrathin chips can be cut in many shapes, they could be especially useful for biomedical applications. Circular chips are desirable for retinal implants, miniature endoscopic cameras, and diagnostic video pills. Thin, flexible chips may even have the potential to interface with the brain, collecting or stimulating neural activity, perhaps even augmenting our mental powers. Such an interface would be very hard to implement, but one thing is clear: It would require chips of surpassing thinness, the only kind that can move with brain tissue without harming it.

Those applications are many years off. But until then, I’ll be happy to settle for a dirty-laundry alert.

This article originally appeared in print as "You Can’t Be Too Thin or Too Flexible."

About the Author

Joachim N. Burghartz, an IEEE Fellow, is a professor at the University of Stuttgart, in Germany, and directs the Institut für Mikroelektronik Stuttgart.