Loser: D-Wave Does Not Quantum Compute

D-Wave Systems' quantum computers look to be bigger, costlier, and slower than conventional ones

This is part of IEEE Spectrum's special report: Winners & Losers VII

D-Wave Systems, a Canadian start-up, recently booted up a custom-built, multimillion-dollar, liquid-helium-cooled beast of a computer that it says runs on quantum mechanics.

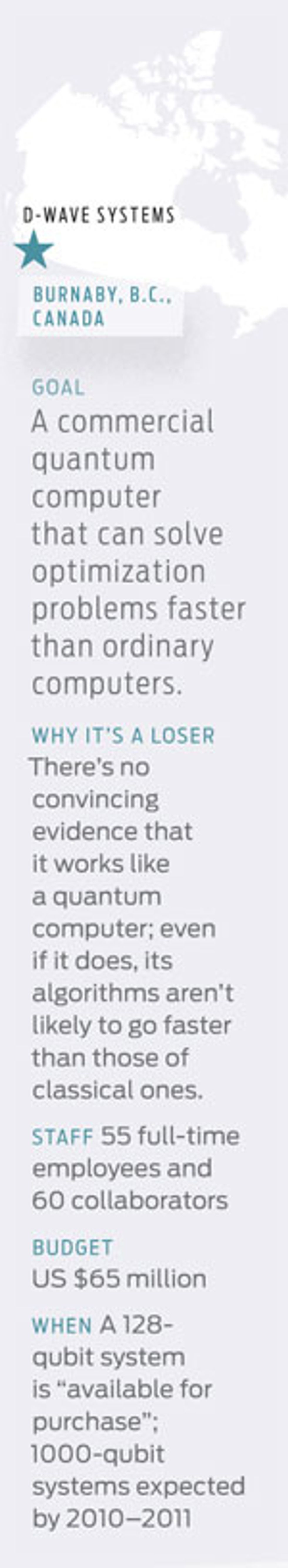

That's right. D-Wave, a 55-person company operating out of an office park in Burnaby, B.C., claims to have built that almost mythical machine, that holy grail of computing, the stuff of sci-fi novels and technothrillers—the quantum computer. Such a system would exploit the bizarre physics that apply on ridiculously small scales to compute ridiculously fast, solving problems that could stymie today's supercomputers for the lifetime of the universe.

Now, building a practical quantum computer has proved hard. Really hard. Despite efforts by some of the world's top physicists and engineers and the likes of IBM, HP, and NEC, progress has been slow. Ask the experts and they'll tell you these systems are a decade—or five—away.

Yet D-Wave believes it can build them now. It has raised some US $65 million from investors that include Goldman Sachs and Draper Fisher Jurvetson, enlisted collaborators from Google and NASA, amassed 50 patents, and transformed its offices into a world-class quantum lab.

Is Schrödinger's cat really out of the bag?

To put things in perspective, consider that one of the most celebrated feats in quantum computing is the factoring of the number 15 (yep, that'd be 3 times 5). The problem is that today's state-of-the-art quantum systems can juggle only a handful of quantum bits, or qubits—the fundamental units of information in quantum computers. Whereas a conventional bit can be in one of two states, 0 or 1, a qubit can be 0, 1, or a superposition of 0 and 1. By linking and manipulating qubits, you can carry out quantum algorithms that solve problems in fewer steps and thus faster than with regular computers. With enough qubits—hundreds to thousands—quantum computers would be able to crack some of the hardest codes, search databases superquickly, and simulate complex quantum systems such as biomolecules.
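To make the bit-versus-qubit distinction concrete, here is a minimal sketch in Python with NumPy. It is a textbook state-vector model, not anything specific to D-Wave's hardware, and the helper names (`superposition`, `measurement_probabilities`, `joint_state`) are made up for this illustration. A qubit is represented as two complex amplitudes, and the probability of reading out 0 or 1 is the squared magnitude of the corresponding amplitude.

```python
import numpy as np

# Basis states |0> and |1> written as two-component vectors.
ZERO = np.array([1.0, 0.0], dtype=complex)
ONE = np.array([0.0, 1.0], dtype=complex)

def superposition(alpha, beta):
    """Return the normalized state alpha|0> + beta|1> (illustrative helper)."""
    state = alpha * ZERO + beta * ONE
    return state / np.linalg.norm(state)

def measurement_probabilities(state):
    """Born rule: each outcome's probability is |amplitude|^2."""
    return np.abs(state) ** 2

def joint_state(*qubits):
    """Combine single-qubit states with the tensor (Kronecker) product."""
    result = np.array([1.0], dtype=complex)
    for q in qubits:
        result = np.kron(result, q)
    return result

# An equal superposition of 0 and 1.
plus = superposition(1, 1)
probs = measurement_probabilities(plus)

# Three such qubits together are described by 2**3 = 8 amplitudes.
register = joint_state(plus, plus, plus)
```

The register's length doubles with every added qubit, which is one way to see why a few hundred well-behaved qubits would be hard to imitate on a conventional machine.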

Rather than build a multipurpose quantum computer, D-Wave says it is building a specialized one, designed to solve specific math problems that have applications in science and business. Its current system has 128 qubits, which the company claims are enough for a research device, one that probably won't beat a powerful PC. To solve larger problems and outperform conventional computers, D-Wave plans to scale up to tens of thousands of qubits in the next two years, eventually reaching millions of qubits.

But experts are skeptical that D-Wave's quantum computer is really, well, quantum.

"If this were the real thing, we would know about it," says Christopher Monroe, a quantum-computing researcher at the University of Maryland, in College Park. He says D-Wave hasn't demonstrated "signatures" believed to be essential to quantum computers, such as entanglement, a coupling between qubits.

Paul Benioff, a physicist who pioneered quantum computing at Argonne National Laboratory, in Illinois, notes that even the best prototypes can't keep more than 10 qubits in entangled states for long. "Because of this I am very skeptical of D-Wave's claims that it has produced a 128-qubit quantum computer," he says, adding that talk of reaching 10 000 qubits at this point is "advertising hype."

Anthony Leggett, a physicist at the University of Illinois at Urbana-Champaign and a Nobel laureate in physics, says that D-Wave has made claims that "have not been generally regarded as substantiated in the community."

But it's all for real, says Geordie Rose, the cofounder and chief technology officer of D-Wave. "We are making good progress," he says, explaining that they are currently testing three 128-qubit systems, to be installed at institutions that will use them for research.

D-Wave's system uses a chip with little loops of niobium metal containing Josephson junctions—two superconductors separated by an insulator. When the chip is cooled to very low temperatures, tiny electrical currents flowing around the loops exhibit quantum properties, and you can use the direction to represent the states of a qubit: Counterclockwise represents 0, clockwise represents 1, and current flowing both ways represents a superposition of 0 and 1.

D-Wave's superconducting qubits are not new, and other groups use similar devices. But whereas most groups are trying to build the quantum logic gates from which all computing operations can be derived—an approach known as the gate model—D-Wave has adopted a different approach, called adiabatic quantum computation. Here's the gist: You initialize a collection of qubits to their lowest energy state. You then ever so gently (or adiabatically) turn on interactions between the qubits, thus encoding a quantum algorithm. In the end, the qubits drift to a new lowest-energy state. You then read out the qubits to get the results.
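The recipe just described can be imitated numerically on a toy scale. The sketch below is an idealized, noise-free simulation of two qubits in Python with NumPy, and everything specific in it is an assumption made for illustration: the starting Hamiltonian `H_START`, the made-up cost table `COSTS`, the straight-line schedule, and the run time. None of it is taken from D-Wave's actual design. The qubits begin in the lowest-energy state of `H_START`, the problem is switched on gradually, and the final readout probabilities are returned.

```python
import numpy as np

X = np.array([[0, 1], [1, 0]], dtype=complex)   # Pauli X (bit-flip) operator
I2 = np.eye(2, dtype=complex)

# Hypothetical cost of each 2-bit answer, in the order 00, 01, 10, 11.
# The lowest cost belongs to "10", so that is the answer we hope to read out.
COSTS = np.array([3.0, 1.0, 0.0, 2.0])

# Starting Hamiltonian: its lowest-energy state is an equal superposition
# of all four answers, which is easy to prepare.
H_START = -(np.kron(X, I2) + np.kron(I2, X))
# Problem Hamiltonian: diagonal, with the costs as energies.
H_PROBLEM = np.diag(COSTS).astype(complex)

def ground_state(h):
    """Lowest-energy eigenvector of a Hermitian matrix."""
    _, vecs = np.linalg.eigh(h)
    return vecs[:, 0]

def anneal(total_time=50.0, steps=1000):
    """Evolve under H(s) = (1 - s) * H_START + s * H_PROBLEM, s from 0 to 1."""
    dt = total_time / steps
    state = ground_state(H_START)
    for k in range(steps):
        s = (k + 0.5) / steps                    # midpoint of this time slice
        h = (1 - s) * H_START + s * H_PROBLEM
        energies, vecs = np.linalg.eigh(h)
        # Apply exp(-i * h * dt) using the eigendecomposition of h.
        state = vecs @ (np.exp(-1j * energies * dt) * (vecs.conj().T @ state))
    return np.abs(state) ** 2                    # readout probabilities

probs = anneal()
best = format(int(np.argmax(probs)), "02b")      # most likely 2-bit answer
```

Calling `anneal` with a much shorter `total_time` leaves the qubits closer to their starting superposition, so the lowest-cost answer is read out less reliably. That is the sense in which the process has to be run gently.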

With enough qubits, D-Wave believes it could beat today's best methods for approximating the solution to difficult optimization problems in financial engineering, logistics, machine learning, and bioinformatics, either by getting the same answer faster or getting a more exact solution.

EXPERT CALLS

"This will never work, if you define ‘never’ as ‘not in 20 years.’" – Robert W. Lucky

And herein lies the $65 million question for the company. By its own admission, D-Wave will have to go bigger than 128 qubits. But can its system scale up?

Qubits are fragile entities, and stray magnetic fields and other environmental disturbances easily destroy their quantumness, or coherence. David DiVincenzo, a leading quantum computing expert at IBM's T.J. Watson Research Center, in Yorktown Heights, N.Y., says that "there has yet to be an established methodology for how [adiabatic quantum computation] could function fault tolerantly," that is, with effective error correction.

Umesh Vazirani, a computer scientist at the University of California, Berkeley, says D-Wave hasn't taken into account the need to control the rate of the adiabatic process. "Running the adiabatic algorithm without this 'tuning' gives no speedup," he says.

For its part, D-Wave hasn't backtracked. Rose, the CTO, says the company is working on new experiments and simulations that should confirm whether its system operates as a quantum computer.

D-Wave's investors are happy with the company's progress. "Quite happy," says Steve Jurvetson, a director at Draper Fisher Jurvetson.

Hartmut Neven, a Google scientist who is using D-Wave's computer to design and test image-recognition algorithms, says the company is taking a "very sensible approach" and has "a very good chance at getting it to work."

Rose says the collaboration with Google shows that the company is tackling real-world problems, even if it's at the proof-of-concept level. "Our ultimate objective is to build systems with spectacular performance on these sorts of problems," he says.

But when asked whether things are still on track to reach tens of thousands of qubits in the next couple of years, Rose dodges the question. "Right now we are concentrating all our resources on getting the 128-qubit systems up and operational and delivering them to customers," he says.

Which means D-Wave still has a long way to go before it can build a quantum computer that can solve large real-world problems, and one that companies would pay good money for.

Looks like Schrödinger's cat is still in the bag after all.

This article originally appeared in print as "Does Not Quantum Compute."

For all of 2010's Winners & Losers, visit the special report.