At IROS 2012, Gill Pratt declared that grasping was solved, which was a bit of a surprise for all the people doing grasping research. Grasping, after all, is the easiest thing ever, as long as you know absolutely everything there is to know about the thing that you want to grasp. The tricky bit now is perception: recognizing what the object that you want to grasp is, where it is, and how it’s oriented. This is why robots are festooned with all sorts of sensing things, but if all you care about is manipulating an object that you’re familiar with already, dealing with vision is a lot of work.

Liatris is an open-source hardware and software project (led by roboticist Mark Silliman) that does away with vision completely. Instead, you can determine the identity and pose of slightly modified objects with just a touchscreen and an RFID reader. It’s simple, relatively inexpensive, and as long as you’re not trying to deal with anything new, it works impressively well.

To get around the perception problem, Liatris uses a few clever tricks. First, each object has an RFID tag attached to it with a unique identifier, so that the robot can wirelessly detect what it’s working with. Once the robot has scanned the RFID tag, it looks the identifier up in an open source, global database of objects and downloads a CAD model and a grasp pose that “defines the ideal pose for the gripper prior to grasping the object.”

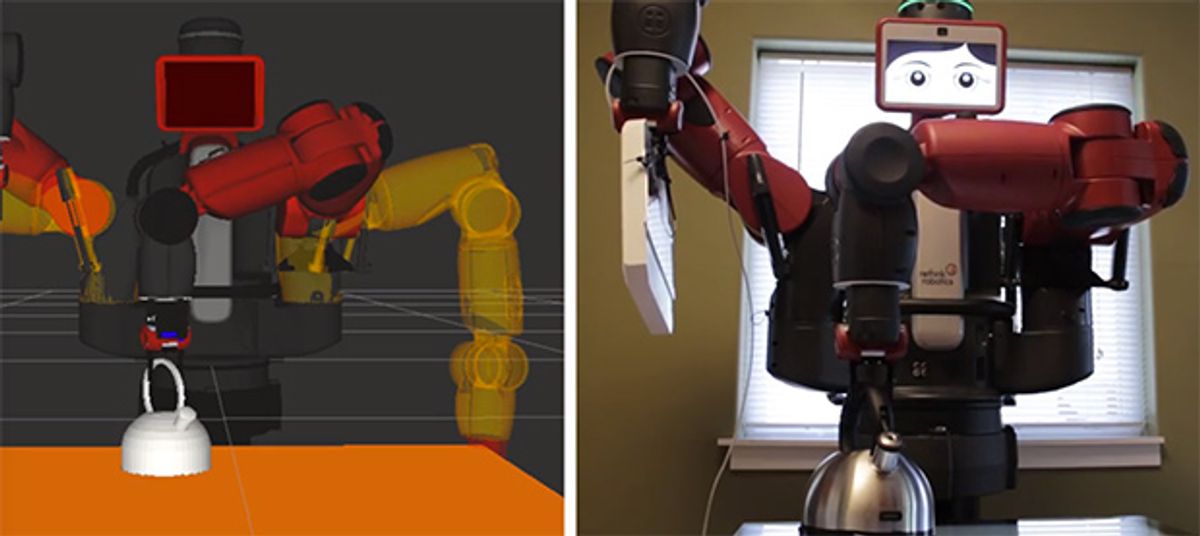

So now that you know what the object is and how to grasp it, you just need to know exactly where it is and what orientation it’s in. You can’t get that sort of information very easily from an RFID tag, so this is where the touchscreen comes in: Each object is (slightly) modified with an isosceles triangle of conductive points on the base, giving the touchscreen an exact location for the object, as well as the orientation, courtesy of the pointy end of the triangle. With this data, the robot can accurately visualize the CAD model of the object on the touchscreen, and as long as it knows exactly where the touchscreen is, it can then grasp the real object based solely on the model. The robot doesn’t have to “see” anything: you just need the touchscreen and an RFID reader, and a headless robot arm can grasp just about whatever you want it to.
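To make the triangle trick a little more concrete, here’s a minimal Python sketch of how three touch points could be turned into a position and a heading. This is not Liatris’s actual code: the function name `pose_from_touches`, the choice of the triangle’s centroid as the object’s reference point, and the convention that the heading points from the middle of the base toward the pointy end are all assumptions made for illustration.

```python
import math


def pose_from_touches(points):
    """Estimate (x, y, theta) from three touch points forming an isosceles triangle.

    Hypothetical helper, not part of Liatris. Assumes the triangle is
    isosceles but not equilateral, so the apex (pointy end) is unambiguous.
    """
    if len(points) != 3:
        raise ValueError("expected exactly three touch points")

    def leg_mismatch(i):
        # Difference between the two sides that meet at vertex i.
        # At the apex, both sides are the equal legs, so this is ~0.
        a = points[i]
        b, c = (points[j] for j in range(3) if j != i)
        return abs(math.dist(a, b) - math.dist(a, c))

    apex_index = min(range(3), key=leg_mismatch)
    apex = points[apex_index]
    b, c = (points[j] for j in range(3) if j != apex_index)

    # Heading: from the midpoint of the base toward the apex, in radians.
    base_mid = ((b[0] + c[0]) / 2, (b[1] + c[1]) / 2)
    theta = math.atan2(apex[1] - base_mid[1], apex[0] - base_mid[0])

    # Position: centroid of the three points (an assumed reference point).
    x = sum(p[0] for p in points) / 3
    y = sum(p[1] for p in points) / 3
    return x, y, theta


if __name__ == "__main__":
    # Touch coordinates in screen units; the apex here is (1, 3).
    print(pose_from_touches([(0, 0), (2, 0), (1, 3)]))
```

The result is in touchscreen coordinates, so a real system would still need to transform it into the robot’s frame using the known location of the touchscreen.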

Here’s a video of the Liatris project in action; keep in mind that this is a proof of concept, which is why it looks kind of like a lot of it is held together with electrical tape:

All of this runs under ROS, using MoveIt! for motion planning. Again, it’s a proof of concept, and things like the open source, global database of objects that it depends on don’t entirely exist yet (although similar things do exist already). In terms of hardware, all you need is a touchscreen and an RFID reader: the equipment used in the demo will run you maybe $1,600 in total. It only works with rigid, conductive objects right now because the touchscreen is capacitive, but good multi-touch resistive touchscreens might fix that.

I know we’ve been kind of bashing vision this whole time, but future improvements to Liatris could add cameras to identify object states once the objects are known, for example detecting whether a pot has a lid on it, and whether it’s filled with anything. And with enough touchscreens in enough places, it could make collaborative robots happen without having to rely on vision that we haven’t gotten totally figured out yet, as Mark Silliman and his collaborators explain on the project’s website:

The workspace could have many capacitive touch screens covering all work spaces. The exact location and identity of each touch screen would be passed to the local network, allowing a mobile robot to navigate to the specific touch screen and interact with objects on it. This means that any robot, potentially one of many in this workspace, would know exactly where everything is in the building. The result would be a true “internet of things” experience with humans and robots working together.

[ Liatris ]

UPDATE: We failed to mention some quite relevant related research by Travis Deyle, presented at ICRA in 2013. Travis was able to get a robot to locate and grasp an object using short-range RFID tags embedded in the robot’s gripper, and you can read all about it here.

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.