This week, the first meeting of the Convention on Conventional Weapons (CCW) Group of Governmental Experts on lethal autonomous weapons systems is taking place at the United Nations in Geneva. Organizations like the Campaign to Stop Killer Robots are encouraging the U.N. to move forward on international regulation of autonomous weapons. That is great, because discussing how these issues will shape the future of robotics and society is important.

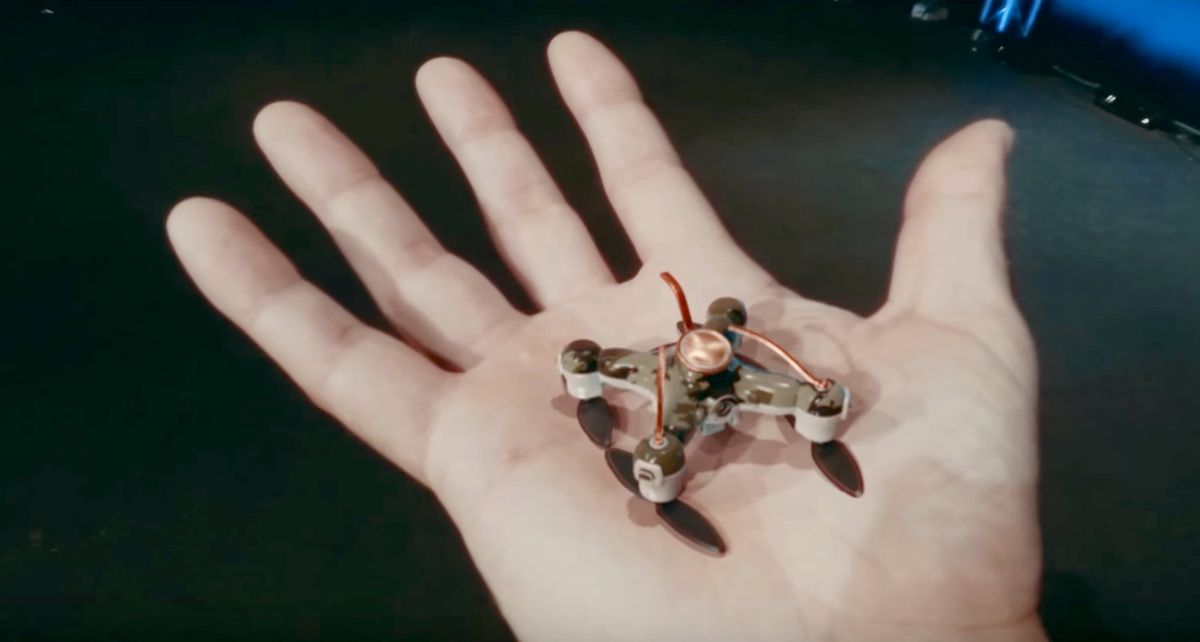

Over the weekend, however, I came across a video that struck me as a disturbing contribution to the autonomous weapons debate. The video, called Slaughterbots and produced with support from Elon Musk’s Future of Life Institute, combines graphic violence with just enough technical plausibility to sketch a very bleak scenario: a fictional near future in which autonomous, explosive-carrying microdrones kill thousands of people around the world.

We are not going to embed the video here because it contains a number of violent scenes, including a terrorist attack in a classroom (!). You can find it on the Future of Life Institute website.

It’s very disappointing to me that robotics and AI researchers whom I otherwise greatly respect would support this kind of sensationalism, which seems designed to shock and scare people rather than impart any useful information about what the actual problem is. The message here seems to be that if you’re interested in having a discussion about these issues, or if you think there might be other, potentially more effective ways of shaping the future of autonomous weapons besides calling for a ban, then you’re siding with terrorists. This is a dismally familiar form of rhetoric, one that has proven effective when the objective is not to communicate facts but to exploit emotions and fears. Another problem is that videos like this, created and promoted by academics, might make the public more distrustful and afraid of robots in general, which is something the robotics community has long been fighting against.

Personally, I am not for “killer robots.” I am not for “autonomous weapons beyond meaningful human control,” either. But I find it difficult to support an outright ban at this point, because I think it would be a potentially ineffective solution to a complex problem that has not yet been fully characterized. AI and arms-control experts are still debating what, specifically, should be regulated or banned, and how it would be enforced. Saying “we have an opportunity to prevent the future you just saw,” as UC Berkeley professor Stuart Russell, one of the creators of Slaughterbots, does at the end of the video, is an oversimplification. In my opinion, a ban on autonomous weapons won’t prevent the miniaturization of drones, won’t prevent advances in facial-recognition technology, and likely won’t prevent the integration of the two, which is precisely the scenario that Slaughterbots presents.

Asked whether the film relies on fearmongering, one of Russell’s colleagues, Toby Walsh, an AI professor at the University of New South Wales, disagrees. “It’s not fearmongering,” he says. “The Russian ambassador to the U.N. just said to the CCW meeting that we don’t have to worry about lethal autonomous weapons because they’re too distant to necessitate worrying about. To the contrary, the film was designed to demonstrate how near we are to autonomous weapons. It shows what happens if you put together some existing technologies and the misuses to which such technology could be put.”

Two years ago, I responded to the first open letter calling for a ban on offensive autonomous weapons by presenting an alternate perspective about why autonomous weapons might also be beneficial. Russell and Walsh, along with MIT professor Max Tegmark, kindly responded in an article published in IEEE Spectrum, which started with the following:

“We welcome Evan Ackerman’s contribution to the discussion on a proposed ban on offensive autonomous weapons. This is a complex issue and there are interesting arguments on both sides that need to be weighed up carefully.”

I totally agree. Having this discussion now is the best way to work toward a more peaceful future, but to do that, we have to have the discussion, not make scary videos designed to vilify the people we disagree with while reinforcing the unrealistic and increasingly negative perception that many people already have about robotics.

For a variety of expert perspectives on both sides of the autonomous lethal weapons issue and the future of AI, here are links to some of our past coverage:

- Do We Want Robot Warriors to Decide Who Lives or Dies?
- Why the United Nations Must Move Forward With a Killer Robots Ban
- We Should Not Ban ‘Killer Robots,’ and Here’s Why
- Why We Really Should Ban Autonomous Weapons: A Response
- Warfighting Robots Could Reduce Civilian Casualties, So Calling for a Ban Now Is Premature
- Why Should We Ban Autonomous Weapons? To Survive
- Ban or No Ban, Hard Questions Remain on Autonomous Weapons
- Industry Urges United Nations to Ban Lethal Autonomous Weapons in New Open Letter
- Interview: Max Tegmark on Superintelligent AI, Cosmic Apocalypse, and Life 3.0
- Will Superintelligent AIs Be Our Doom?

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.