Two weeks ago, we posted about quadrotors that were able to autonomously navigate outdoors, relying solely on IMUs and simple vision systems. What we found notable was that the robots didn’t need either GPS or a motion tracking system, implying that they could go out and do their thing in what some people like to call “the real world.” At ICRA 2012 yesterday, MIT’s Adam Bry presented a paper (and video!) demonstrating a micro air vehicle capable of the same sort of self-contained navigation, but indoors and impressively fast.

The background here is that whenever we see “aggressive maneuvering” by an aerial vehicle, there’s almost always a motion tracking system involved to provide constant feedback as to the exact location of said vehicle. There’s nothing really wrong with that (besides that it’s sort of cheating), but practical applications are limited since you’ll never get it to work outside of your lab. On the other hand, when you get autonomous aircraft navigating around outside on their own, they’re either moving very slowly, or their owners have taken care to set them loose as far away as possible from anything that they might accidentally smash into.

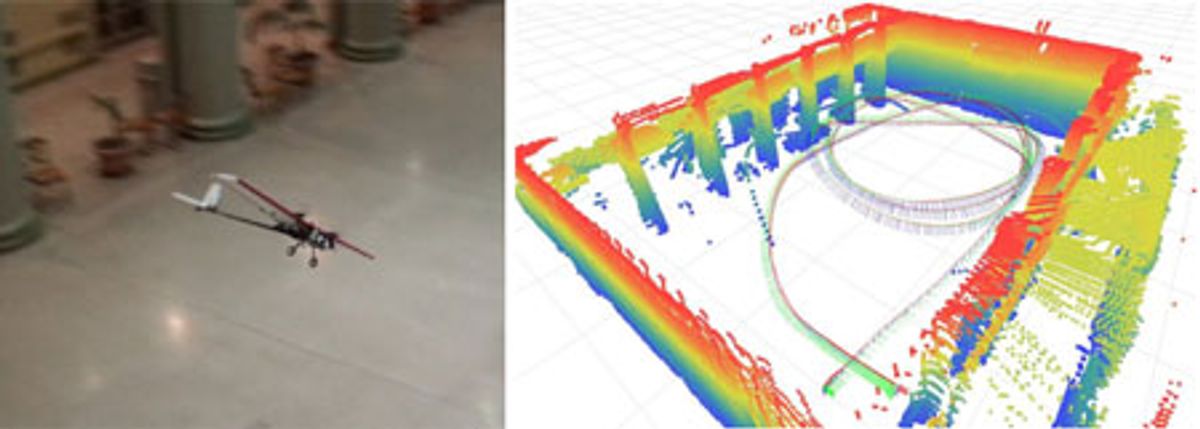

Researchers at MIT CSAIL have decided that slow and obstacle-free flight is boring, so they’ve come up with a way to get MAVs navigating at high speed, indoors, around obstacles, without needing motion tracking or GPS or beacons or any of that nonsense. All they need is a little aircraft carrying a planar laser rangefinder and an IMU, plus a pre-existing 3D occupancy map that the MAV can localize itself in, and you get results like this (the good bits are towards the end):

Now, the caveat here is that the robot already has a 3D map of the space that it’s flying in, so it’s just using the laser rangefinder and IMU to estimate its location within that space as fast and as accurately as possible. The MAV is not mapping the space as it goes. That doesn’t make things easy, though: getting a robot this size to localize itself fast enough and accurately enough to fly around columns in an underground parking garage is no small feat, and it required a clever algorithm for integrating sensor data that could get the job done on nothing more than a 1.6 GHz Atom onboard processor.
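To make the idea of localizing in a known map a bit more concrete, here is a minimal Python sketch. It is not the algorithm from the MIT paper: it’s a toy 2D version that assumes the heading is already known (standing in for what the IMU provides), simulates a planar laser scan by ray casting into a made-up occupancy grid, and weights randomly sampled candidate positions by how well their predicted scans agree with the measured one. The grid, the beam layout, the noise value, and every function name are illustrative assumptions.

```python
import math
import random

# Hypothetical 2D slice of a known occupancy map: '#' is occupied, '.' is free.
# Each cell is 1 m x 1 m. Nothing here comes from the paper.
GRID = [
    "##########",
    "#........#",
    "#...##...#",
    "#........#",
    "#........#",
    "##########",
]

# Eight beams spread evenly around the scan plane (an assumed layout).
BEAMS = [i * math.pi / 4 for i in range(8)]


def occupied(x, y):
    """True if the point (x, y), in metres, is in an occupied or out-of-map cell."""
    col, row = int(math.floor(x)), int(math.floor(y))
    if row < 0 or row >= len(GRID) or col < 0 or col >= len(GRID[0]):
        return True
    return GRID[row][col] == "#"


def cast_ray(x, y, heading, max_range=12.0, step=0.05):
    """Step along a ray until it reaches an occupied cell; return the distance."""
    dist = 0.0
    while dist < max_range:
        if occupied(x + dist * math.cos(heading), y + dist * math.sin(heading)):
            return dist
        dist += step
    return max_range


def scan(x, y, yaw):
    """Predicted range readings for a vehicle at (x, y) with the given yaw."""
    return [cast_ray(x, y, yaw + beam) for beam in BEAMS]


def log_likelihood(measured, expected, sigma=0.2):
    """Gaussian log-likelihood (up to a constant) of a scan given a predicted scan."""
    return -sum((m - e) ** 2 for m, e in zip(measured, expected)) / (2 * sigma ** 2)


def localize(measured, yaw, n_particles=2000, seed=0):
    """Weight random free-space positions by scan agreement; return the weighted mean."""
    rng = random.Random(seed)
    particles = []
    while len(particles) < n_particles:
        px, py = rng.uniform(1, 9), rng.uniform(1, 5)
        if not occupied(px, py):
            particles.append((px, py))
    logw = [log_likelihood(measured, scan(px, py, yaw)) for px, py in particles]
    top = max(logw)  # subtract the maximum before exponentiating to avoid underflow
    weights = [math.exp(lw - top) for lw in logw]
    total = sum(weights)
    est_x = sum(w * p[0] for w, p in zip(weights, particles)) / total
    est_y = sum(w * p[1] for w, p in zip(weights, particles)) / total
    return est_x, est_y


# Simulate a noise-free scan from a "true" pose, then recover the position from it.
true_x, true_y, yaw = 2.5, 3.5, 0.3
estimate = localize(scan(true_x, true_y, yaw), yaw)
print("estimated position:", estimate)
```

This only does a single measurement update from a uniform guess. A real system flying this fast would also have to propagate its estimate between scans using the IMU, cope with sensor noise, and work in three dimensions, all within a tight compute budget.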

As for the 3D map that this MAV needs to do what it does, there are plenty of ways to go about using robots to generate one. For example, you could use an autonomous quadrotor that’s set up to do SLAM (simultaneous localization and mapping) with a Kinect or one or more LIDAR sensors. Conceivably, the MAV might even be able to do its own SLAM, but that would likely require some hardware compromises and it would certainly require that the MAV be flown less aggressively, at least until it figures out where it is in relation to all those troublesome obstacles.

The upshot of all this is that MIT has managed to put together a MAV that’s capable of treating environments without motion tracking or GPS like environments that have those things available for localization, which opens up all kinds of options for those aggressive maneuvers that we know and love to take place farther from labs festooned with Vicon cameras and closer to my living room.

“State Estimation for Aggressive Flight in GPS-Denied Environments Using Onboard Sensing,” by Adam Bry, Abraham Bachrach, and Nicholas Roy from the Massachusetts Institute of Technology, was presented yesterday at ICRA 2012 in St. Paul, Minnesota.

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.