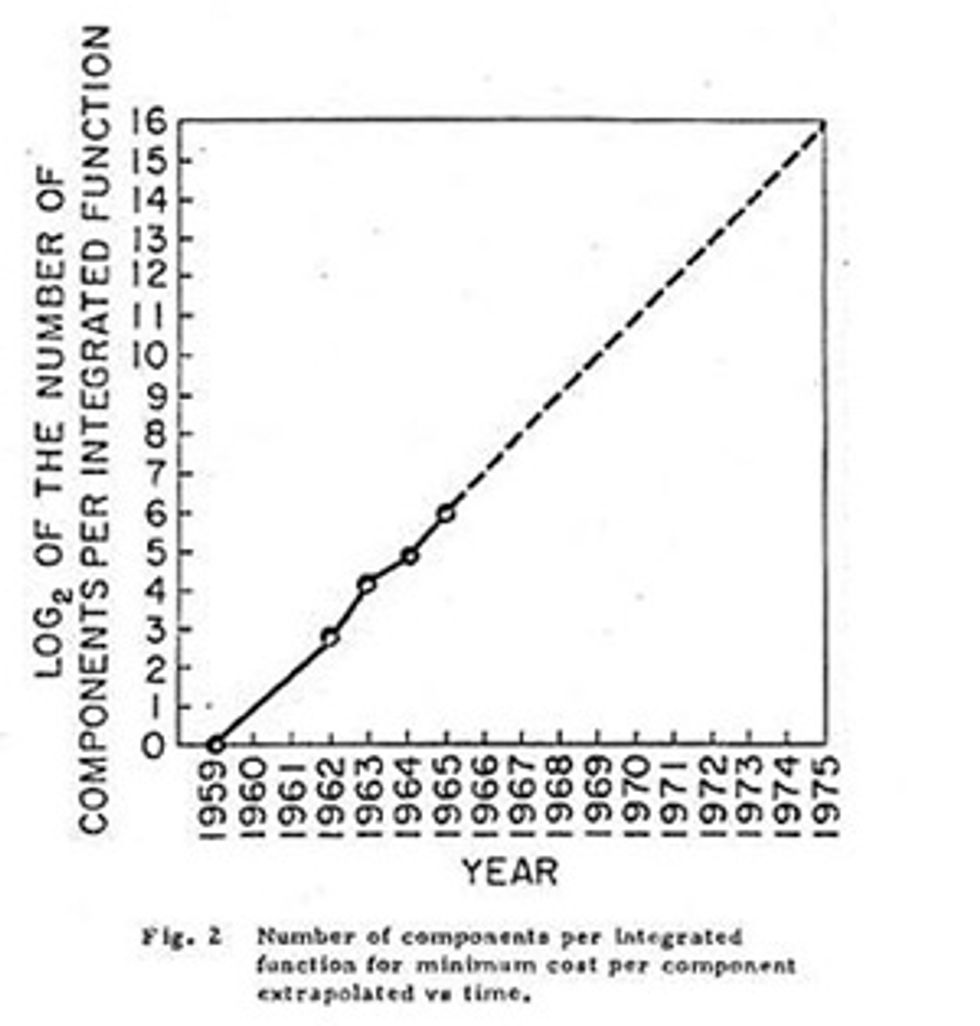

In the 19 April 1965 issue of Electronics, Gordon Moore published a forecast about the future of electronics that, with time, has become famous. His now 50-year-old article predicted that the silicon integrated circuit would continue to develop over the coming decade exactly as it had over the preceding five years. To illustrate his prediction, he drew a plot that has now become almost as famous: a straight line on a logarithmic scale showing that the complexity of microchips had doubled every year since their commercial introduction, climbing from about 8 components (transistors, resistors, and the like) in 1962 to around 64 components apiece in 1965. Moore extended this straight line out for 10 additional years. By 1975, he projected, each microchip would contain some 65,000 components.
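The arithmetic behind that straight line is easy to check. The sketch below only illustrates the doubling rule described above; it is not Moore's own calculation. The function name and its parameters are hypothetical, and the starting values (about 8 components in 1962, doubling every year) are taken from the text.

```python
def projected_components(year, base_year=1962, base_count=8, doubling_years=1.0):
    """Extrapolate component count assuming a fixed doubling period.

    All defaults are illustrative: 8 components in 1962, doubling yearly.
    """
    doublings = (year - base_year) / doubling_years
    return base_count * 2 ** doublings


# Print the projection for the years mentioned in the article.
for year in (1962, 1965, 1975):
    print(year, round(projected_components(year)))
```

Ten further doublings from 64 components give 65,536, which is the "some 65,000" figure for 1975.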

Moore’s prediction proved spot-on and, with one revision to a doubling every two years, Moore’s Law has held true to the present day. But in commemorating Moore’s Law, we should note that 1965 isn’t the only anniversary we could celebrate. While few realize it, Gordon Moore actually published the key reasoning behind his Electronics paper two years earlier.

As my coauthors and I detail in a new biography of Gordon Moore, this earlier publication is a long article he wrote titled “Semiconductor Integrated Circuits.” It appears in Microelectronics: Theory, Design, and Fabrication, a 1963 book of highly technical articles edited by an electrical engineer named Edward Keonjian. The core of the nearly 100-page chapter is a 10-page section called “Cost and Reliability,” in which Moore carefully walks the reader through an exacting analysis—complete with several formulas—of the economics of silicon microchip manufacturing, what I typically call a “chemical printing” technology. A feeling for Moore’s rigor is captured here:

The total microcircuit production cost $C_1$ can be considered as made up from the cost of a good die plus the cost of assembly as modified by the yield at final test. That is, $C_1 = (C_d + C_a)\,Y_f^{-1}$.
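To make the quoted relationship concrete, here is a minimal sketch of it: total cost equals die cost plus assembly cost, divided by the yield at final test. The function name and the dollar figures are hypothetical and chosen only to make the arithmetic visible. They do not come from Moore's chapter.

```python
def total_production_cost(die_cost, assembly_cost, final_test_yield):
    """Cost per good circuit: (die cost + assembly cost) / final-test yield."""
    if not 0 < final_test_yield <= 1:
        raise ValueError("final_test_yield must be in the interval (0, 1]")
    return (die_cost + assembly_cost) / final_test_yield


# Hypothetical inputs: a $0.50 good die, $1.00 of assembly, 75% final-test yield.
print(total_production_cost(0.50, 1.00, 0.75))
```

With these made-up inputs, each good circuit costs $2.00 rather than $1.50, because the units that fail final test still had to be paid for.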

After running through the implications of this and other equations and conditions, he draws several conclusions. First, he notes that integrated circuits were already about as cheap as comparable sets of individually made components:

Even at the circuit-complexity level of the micrologic gate—three transistors, four resistors, and six lead bonds—the cost of producing the integrated circuit is approximately equal to the cost of producing the individual components in equivalent quantities.

Then he noted a central premise, repeated later in his 1965 paper, that the state of the chemical printing technology determined how tightly engineers might pack circuit components on a microchip. Generally speaking, the more components per microchip, the lower the cost per component. At a certain density, though, this packing would get increasingly tough. The number of defects would rise, and the yield—the proportion of good circuits in the batch—would start to fall. Cost would rise. But over time, Moore said, this pain point would get pushed out to greater complexity as improvements were made to the manufacturing technology:

As the complexity is increased, microcircuits are favored more and more strongly, until one reaches the point where the yield because of the complexity falls below a production-worthy value. The point at which this occurs will continue to push rapidly in the direction of increasing complexity. As the technology advances, the size of a circuit function that is practical to integrate will increase rapidly.
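The trade-off in this passage can be illustrated with a toy model. Everything in it is an assumption made for illustration: a fixed overhead cost per chip, and a yield that falls off exponentially as components are added. None of these names or numbers comes from Moore's chapter. The sketch shows only the qualitative point, that a lower defect rate moves the lowest-cost complexity outward.

```python
import math


def cost_per_component(n, chip_overhead=1.0, defect_rate=0.02):
    """Toy model: a fixed per-chip cost spread over n components,
    inflated by an assumed yield of exp(-defect_rate * n)."""
    yield_fraction = math.exp(-defect_rate * n)
    return chip_overhead / (n * yield_fraction)


def optimum_complexity(defect_rate, max_components=5000):
    """Brute-force search for the component count with the lowest cost each."""
    return min(
        range(1, max_components + 1),
        key=lambda n: cost_per_component(n, defect_rate=defect_rate),
    )


# A tenfold improvement in the assumed defect rate shifts the optimum outward.
for rate in (0.02, 0.002):
    print(rate, optimum_complexity(rate))
```

In this toy model the optimum sits at the reciprocal of the defect rate, so it moves from 50 components to 500 as the assumed process improves.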

What Moore had realized was that the technology for producing silicon microchips was open to incredible extension. There would be a huge economic reward to firms that invested in the manufacturing technology, a smorgasbord of processes that encompasses crystal growing, wafer polishing, photolithography, epitaxy, metals deposition, diffusion, ion implantation, and more. Pushing these areas to newer heights would progressively redefine the optimum microchip: the chip with both the highest-performing electronics available and the lowest-cost components.

From his analysis of these fundamentals, Moore predicted the rise of “larger and more complex systems than ever before practical,” with advantages that would carry over into all of electronics, including consumer products.

This was, despite its mild tone, a radical position. Silicon microchips had just emerged as a product, and they were expensive. Nearly all were consumed by the U.S. government for applications such as the onboard guidance computers of nuclear missiles and the Apollo spacecraft. The most pervasive consumer electronics of the day were radios and televisions that contained, at most, several individual transistors. In computers, IBM was on the cusp of releasing its System/360 mainframe, which would allow IBM to capture an astounding share of the computer market in the 1960s, and which, famously, was built from discrete transistors. Integrated circuits were new, and Moore’s claim that microchips would not only enable more powerful mainframes but also find their way into consumer goods was a bold one.

Given Moore’s 1963 analysis and his radical conclusions, do we have the anniversary of Moore’s Law wrong? My best answer is “It depends.” I’m not being wishy-washy. I think the answer touches on an essential difficulty in agreeing on precise dates for discoveries and inventions in general. It’s difficult because definitions are, by their nature, conventions. Pinning Moore’s Law to a particular date depends on exactly what we mean when we talk about it. If we choose to emphasize the explicit, regular-doubling prediction, then Moore’s Law surely dates to that publication of 19 April 1965. If we conceive of it more broadly as Moore’s insights into the fundamentals of silicon microchips—how investments in manufacturing technology would allow ever more complex microchips and make electronics profoundly cheaper—then we can safely say that Moore’s Law dates to 1963, with the publication of his book chapter.

It’s also worth noting that Moore’s Law didn’t arise in a vacuum. Moore came to his fundamental understanding of microchips from his deep immersion in silicon electronics manufacturing. It should come as little surprise, then, that others similarly steeped would come to share viewpoints similar to Moore’s. For example, in 1964, Westinghouse’s C. Harry Knowles (publishing in IEEE Spectrum) and Bell Labs’ Bernard T. Murphy (writing in Proceedings of the IEEE) both independently noted that the current manufacturing technology defined an optimal point where microchip complexity would be high and the cost per component at its lowest.

But their analyses were firmly rooted in the present moment. They didn’t take the next step, as Moore had, to find that both economics and chemical printing technology would favor continual investment, leading to more complex microchips and cheaper electronics, and that there was nothing fundamental—yet—standing in the way of this trend.

And Gordon Moore did not simply rest on his analysis and vision. He directly acted on it. He saw that advantage lay in continued and continuous investment in manufacturing technology. It was an approach that he and, eventually, thousands of his colleagues at Intel and the global semiconductor industry as a whole would dramatically exploit and benefit from for decades.

About the Author

David C. Brock is a historian with the Chemical Heritage Foundation, in Philadelphia. He coauthored a biography of Gordon Moore (Moore’s Law: The Life of Gordon Moore, Silicon Valley’s Quiet Revolutionary), which will be released by Basic Books in May.

David C. Brock is a historian of technology, director of curatorial affairs at the Computer History Museum, and director of CHM’s Software History Center. He focuses on histories of computing and semiconductors as well as on oral history and is occasionally lucky enough to use the restored Alto in the museum’s Shustek Research Archives. He is the coauthor of Moore’s Law: The Life of Gordon Moore, Silicon Valley’s Quiet Revolutionary (Basic Books, 2015) and Makers of the Microchip (The MIT Press, 2022). He is on Twitter @dcbrock and on the Fediverse @dcbrock@federate.social.