Researchers at Intel and Cornell University report that they’ve made an electronic nose that can learn the scent of a chemical after just one exposure to it and then identify that scent even when it’s masked by others. The system is built around Intel’s neuromorphic research chip, Loihi, and an array of 72 chemical sensors. Loihi was programmed to mimic the workings of neurons in the olfactory bulb, the part of the brain that distinguishes different smells. The system’s inventors say it could one day watch for hazardous substances in the air, sniff out hidden drugs or explosives, or aid in medical diagnoses.

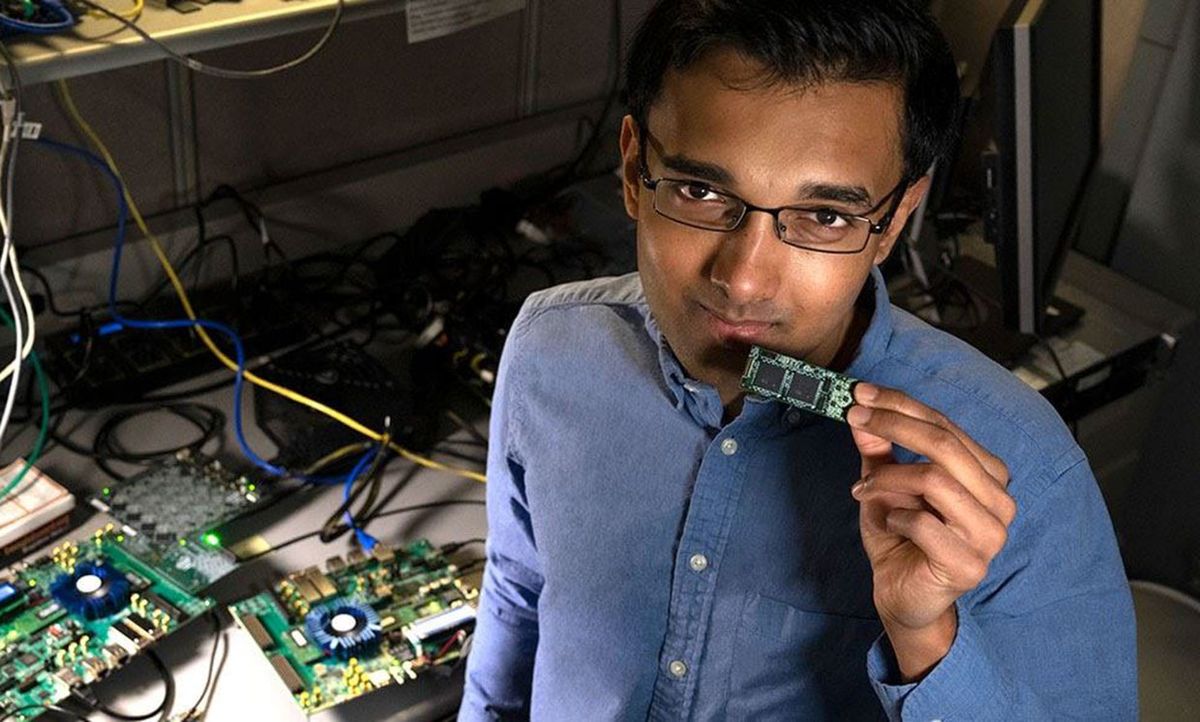

Loihi’s chip architecture is meant to match the way the brain works more closely than the architectures of CPUs or even the new accelerator chips designed to speed up deep learning. Researchers hope that such neuromorphic chips will be able to do things that today’s AI systems can’t do, or at least can’t do without consuming a lot of power or taking too much time.

One of those things is called “one-shot” learning. Your nose can smell something once, and your brain will immediately recognize it again. But today’s AI systems, which often use deep-learning artificial neural networks, must be trained on a huge number of previously labeled examples. That makes training a time-consuming, power-hungry process. Even worse, most trained AI systems cannot easily learn a new category without damaging their memory of the old ones, so they must be completely retrained on all the categories.
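To make the contrast concrete, here is a minimal Python sketch of one-shot learning using a conventional nearest-prototype classifier. It is not Loihi’s olfactory-bulb circuit, and it makes no attempt to handle scents masked by other odors, the harder problem the researchers tackled. It assumes each sniff arrives as a vector of sensor readings; the real array has 72 sensors, while the 4-sensor readings and scent labels below are made up. Learning a scent simply stores one reading, so adding a new scent leaves the earlier ones intact.

```python
import math


class OneShotScentMemory:
    """Nearest-prototype classifier that stores one example per scent.

    Illustrative only: this is not the Loihi olfactory-bulb algorithm.
    """

    def __init__(self):
        self.prototypes = {}  # scent name -> sensor-response vector

    def learn(self, name, reading):
        # A single exposure suffices: keep the reading as the prototype.
        # Other prototypes are not modified, so old scents are not forgotten.
        self.prototypes[name] = list(reading)

    def identify(self, reading):
        # Return the learned scent whose prototype is most similar (cosine).
        if not self.prototypes:
            raise ValueError("no scents learned yet")
        return max(self.prototypes,
                   key=lambda name: _cosine(self.prototypes[name], reading))


def _cosine(a, b):
    # Cosine similarity between two equal-length vectors (0.0 if either is all zeros).
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Hypothetical 4-sensor readings with made-up labels.
memory = OneShotScentMemory()
memory.learn("acetone", [0.9, 0.1, 0.0, 0.2])
memory.learn("ammonia", [0.1, 0.8, 0.3, 0.0])
print(memory.identify([0.85, 0.15, 0.05, 0.2]))  # acetone

# Adding a third scent later requires no retraining of the first two.
memory.learn("toluene", [0.0, 0.2, 0.9, 0.4])
print(memory.identify([0.12, 0.75, 0.35, 0.0]))  # ammonia
```

Storing raw readings as prototypes is the simplest possible way to avoid overwriting old categories; it would likely be far less robust to noise and mixtures than the brain-inspired approach described in the article.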

Unlike the artificial neurons in today’s AI, Loihi’s neurons carry information in the timing of digitally represented spikes, which is more analogous to what goes on in your brain.
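As a rough illustration of timing-based coding, the Python sketch below simulates a leaky integrate-and-fire neuron, a common simplified spiking model, and reports when it first fires for a constant input. The stronger the input, the earlier the spike, so the timing itself carries information about intensity. The model, its parameters (`threshold`, `tau`, `dt`, `t_max`) and the intensity values are assumptions for illustration, not Loihi’s actual neuron configuration or the encoding used in the scent experiments.

```python
def first_spike_time(current, threshold=1.0, tau=10.0, dt=0.01, t_max=100.0):
    """Simulate a leaky integrate-and-fire neuron driven by a constant input.

    Returns the time (arbitrary units) of the first spike, or None if the
    membrane potential never reaches threshold within t_max.
    """
    v = 0.0
    steps = int(t_max / dt)
    for step in range(1, steps + 1):
        # Euler step: the potential leaks toward zero and is pushed up by the input.
        v += dt * (-v / tau + current)
        if v >= threshold:
            return step * dt
    return None


# Hypothetical normalized sensor intensities: a stronger input fires sooner.
for intensity in (0.5, 0.2, 0.05):
    print(intensity, first_spike_time(intensity))
```

Here the 0.5 input spikes first and the 0.2 input later, while the 0.05 input never fires: its potential levels off at `current * tau = 0.5`, below the threshold of 1.0.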

The scent-learning experiments required only one Loihi chip, but Intel designed the chips to be linked seamlessly into much larger systems. The company reported this week that it had produced a multi-board, 768-chip, 100-million-neuron system. The largest Loihi system prior to that comprised 64 chips and the equivalent of 8 million neurons.

According to Intel senior research scientist Nabil Imam, the next step is “to generalize this approach to a wider range of problems—from sensory scene analysis (understanding the relationships between objects you observe) to abstract problems like planning and decision-making. Understanding how the brain’s neural circuits solve these complex computational problems will provide important clues for designing efficient and robust machine intelligence.”

However, there are challenges to overcome first. In particular, the system needs to be able to group different but closely related aromas into a common category. For example, it needs to recognize that strawberries from California and strawberries from Europe are the same fruit. “These are challenges in olfactory signal recognition that we're working on and that we hope to solve in the next couple of years before this becomes a product that can solve real-world problems beyond the experimental ones we have demonstrated in the lab,” Imam said in a press release.

Imam and Cornell University olfactory expert Thomas A. Cleland reported the new system this week in Nature Machine Intelligence.

This post was updated on 19 March to include mention of the new 100-million neuron Loihi system.

Samuel K. Moore is the senior editor at IEEE Spectrum in charge of semiconductors coverage. An IEEE member, he has a bachelor's degree in biomedical engineering from Brown University and a master's degree in journalism from New York University.