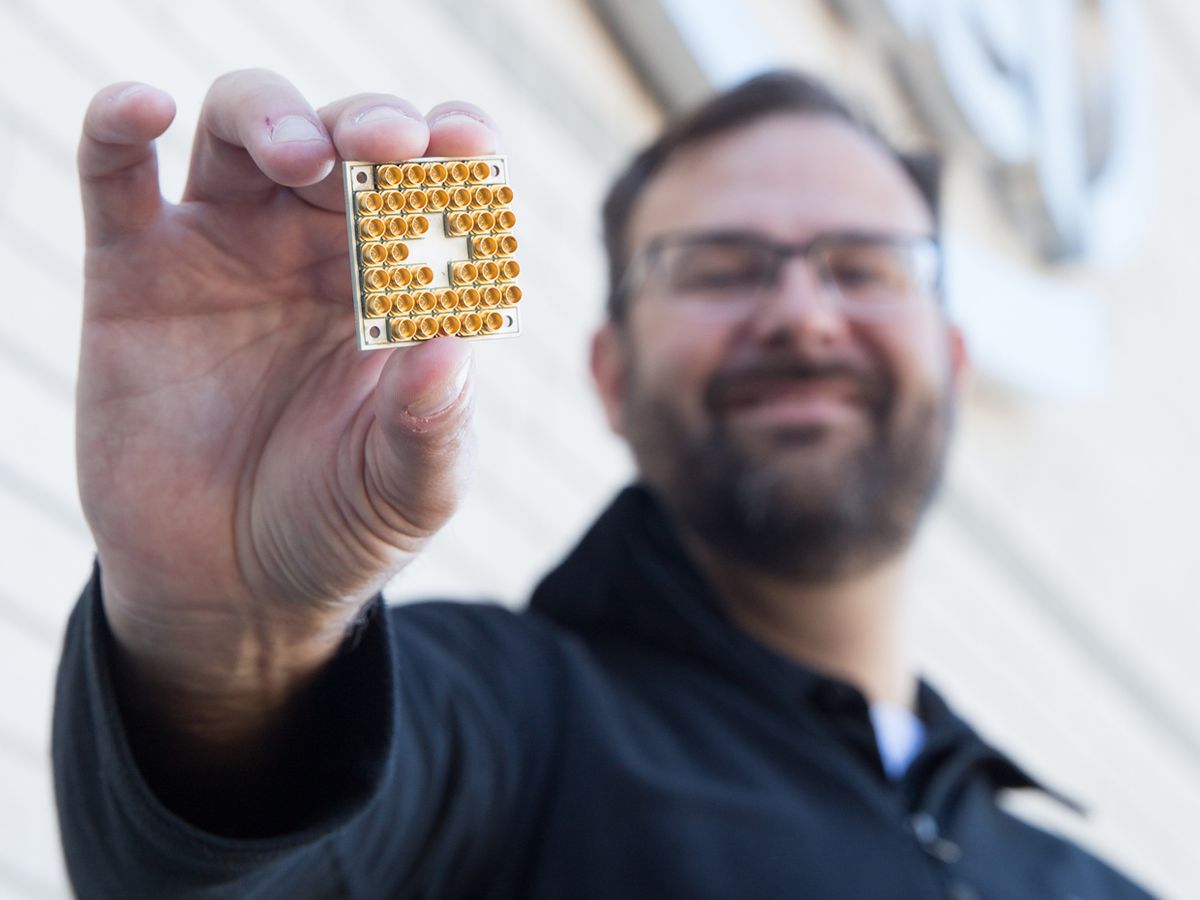

Intel says it is shipping an experimental quantum computing chip to research partners in the Netherlands today. The company hopes to demonstrate that its packaging and integration skills give it an edge in the race to produce practical quantum computers.

The chip contains 17 superconducting qubits, the quantum computer's fundamental component. According to Jim Clarke, Intel's director of quantum hardware, the company chose 17 qubits because that is the minimum needed to perform surface code error correction, an error-correction scheme thought to be necessary for scaling quantum computers up to useful sizes.
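Where the number 17 likely comes from can be sketched with a little arithmetic. Assuming the common "rotated" surface code layout (often called Surface-17), a patch of code distance $d$ uses $d^2$ data qubits plus $d^2 - 1$ measurement (ancilla) qubits. Distance 3 is the smallest distance that can correct an arbitrary error on any single qubit, which gives:

$$
N(d) = \underbrace{d^2}_{\text{data}} + \underbrace{\left(d^2 - 1\right)}_{\text{measurement}} = 2d^2 - 1,
\qquad
N(3) = 9 + 8 = 17
$$

This is an illustration under that layout assumption; the article does not describe how Intel or QuTech arrange the qubits on the chip.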

Intel's research partners at QuTech, the quantum research center founded by TU Delft and TNO, will be testing the individual qubits' abilities as well as performing surface code error correction and other algorithms.

Clarke says that much of the innovation in Intel’s chip has to do with a field that is rarely written about in the popular press (or in IEEE Spectrum): packaging. “With superconducting quantum chips, it’s all about the packaging,” he says. Qubits are extremely sensitive to RF interference, something packaging can help prevent. More importantly, superconducting qubits operate at temperatures of 20 millikelvin. “These are very harsh conditions for semiconductors and packaging,” adds Clarke.

Other superconducting qubit chips use a technology called wire bonding to get signals on and off the chip. Here, micrometer-scale wires link bond pads at the top of the chip to the package's pins, which are then soldered to a circuit board. Though still very much in use, wire bonding is an older technology with limits on how many interconnections can be made.

For the new quantum chip, Intel adapted so-called flip-chip technology to work at millikelvin temperatures. Flip-chip bonding involves adding a dot of solder to each bond pad, flipping the chip upside down atop the circuit board, and then melting the solder to bond the two. The result is a smaller, denser, lower-inductance connection.

Being able to increase the number of connections is key to scaling up to practical million-qubit quantum computers, says Clarke, as each qubit needs at least one control line and probably several. Such a chip would also need control electronics situated close to the qubits to reduce latency. "If we had a million-qubit chip today, we wouldn't have the means to run it," says Clarke. "It'd make a nice paperweight."

By developing the infrastructure technology along with quantum computing chips, “we’re putting in the work to deliver something that looks more like a computer,” says Clarke.

In terms of the pace of development, Intel’s superconducting qubit project has a clear lead over projects using other kinds of qubits. But there’s plenty of competition. Earlier this year, Google tested chips featuring 6 and 9 superconducting qubits on its way to a 49-qubit machine it hopes to have ready by the end of the year. The 49-qubit machine is meant to demonstrate “quantum supremacy”—definitively showing that a quantum system can do something faster than any classical computer.

IBM is also in the superconducting qubit game. And startup Rigetti has launched a fab near Silicon Valley so that others can build superconducting qubit chips too.

“We’re in mile one of a marathon, and Intel is in the lead pack,” Clarke says.

Samuel K. Moore is the senior editor at IEEE Spectrum in charge of semiconductors coverage. An IEEE member, he has a bachelor's degree in biomedical engineering from Brown University and a master's degree in journalism from New York University.