Quantum computers are some of the most fiendishly complex machines humans have ever built. But exactly how complex you make them has significant impacts on their performance and scalability. Industry leaders, perhaps not surprisingly, sometimes take very different approaches.

Consider IBM. As of this week (8 August), all of Big Blue's quantum processors will use a hexagonal layout that features considerably fewer connections between qubits—the quantum equivalent of bits—than the square layout used in its earlier designs and by competitors Google and Rigetti Computing.

This is the culmination of several years of experimentation with different processor topologies, which describe a device's physical layout and the connections between its qubits. The company's machines have seen a steady decline in the number of connections, despite the fact that its own measure of progress, which it dubs "quantum volume," gives significant weight to high connectivity.

That's because connectivity comes at a cost, says IBM researcher Paul Nation. Today's quantum processors are error-prone, and the more connections between qubits, the worse the problem gets. Scaling back that connectivity resulted in an exponential reduction in errors, says Nation, which the company thinks will help it scale faster to the much larger processors that will be required to solve real-world problems.

"In the short term it's painful," says Nation. "But the thinking is not what is best today, it's what is best for tomorrow."

IBM first introduced the so-called "heavy-hex" topology last year, and the company has been gradually retiring processors with alternative layouts. After this week, all of the more than 20 processors available on the IBM Cloud will rely on the design. And Nation says heavy hex will be used in all devices outlined in its quantum roadmap, at least up until the 1,121-qubit Condor processor planned for 2023.

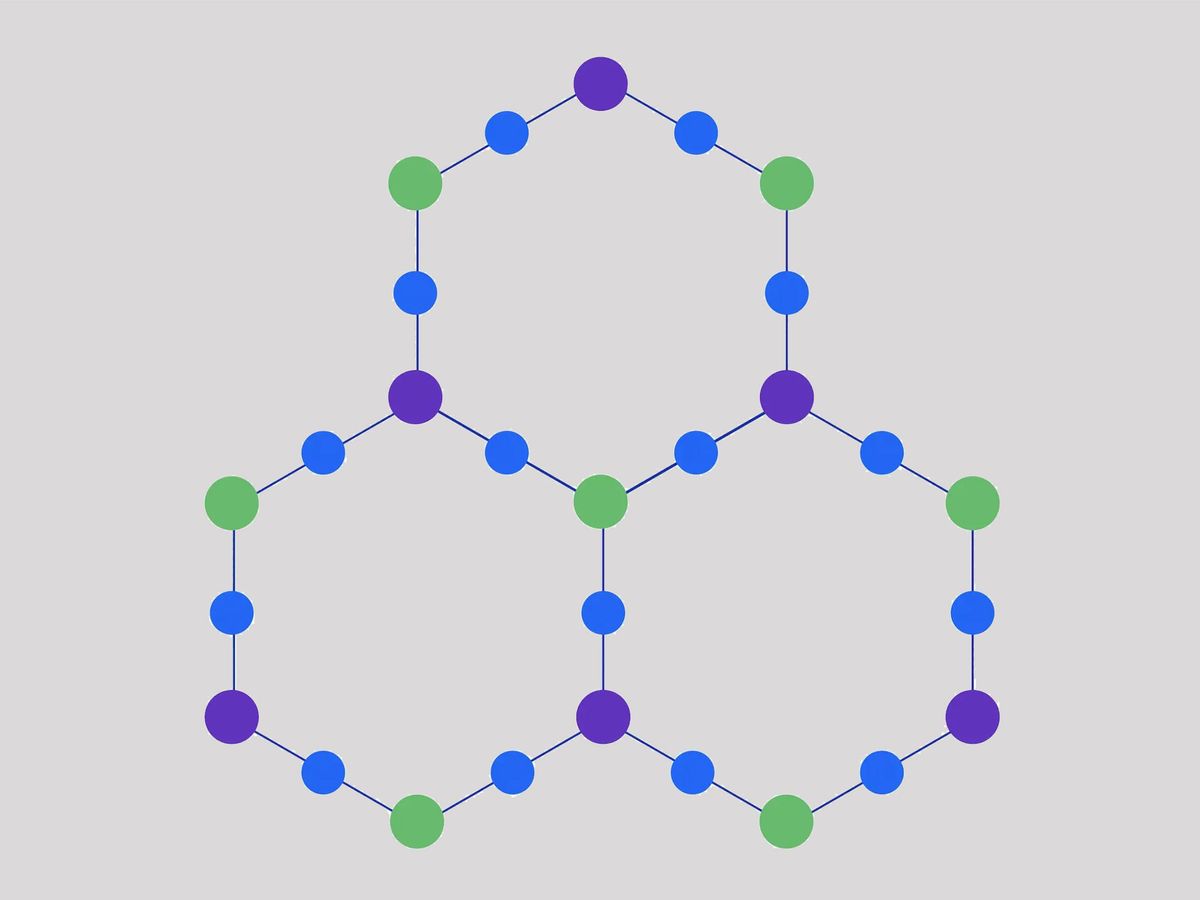

The basic building block is 12 qubits arranged in a hexagon, with a qubit on each point and another on each flat edge. The qubits along the edges only connect to their two closest neighbors, while the ones on the points can connect to a third qubit, which makes it possible to tile hexagons alongside each other to build larger processors.
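To make the difference in connectivity concrete, here is a minimal Python sketch that models one 12-qubit cell as a ring and compares it with a small square grid. The function names and the qubit numbering (even indices for the points, odd indices for the flat edges) are assumptions made for illustration, not IBM's own conventions.

```python
from collections import defaultdict


def heavy_hex_cell():
    """One 12-qubit cell modeled as a ring (hypothetical numbering).

    Even indices stand for qubits on the hexagon's points, odd indices
    for qubits on its flat edges. Within a single cell every qubit has
    two neighbors; only point qubits may take a third link when tiling.
    """
    adj = defaultdict(set)
    for i in range(12):
        j = (i + 1) % 12
        adj[i].add(j)
        adj[j].add(i)
    return dict(adj)


def square_grid(rows, cols):
    """Square lattice in which interior qubits link to four neighbors."""
    adj = defaultdict(set)
    for r in range(rows):
        for c in range(cols):
            for dr, dc in ((0, 1), (1, 0)):
                rr, cc = r + dr, c + dc
                if rr < rows and cc < cols:
                    adj[(r, c)].add((rr, cc))
                    adj[(rr, cc)].add((r, c))
    return dict(adj)


def average_degree(adj):
    """Mean number of connections per qubit."""
    return sum(len(nbrs) for nbrs in adj.values()) / len(adj)


cell = heavy_hex_cell()
grid = square_grid(4, 4)
print("heavy-hex cell:", max(len(n) for n in cell.values()), average_degree(cell))
print("4x4 square grid:", max(len(n) for n in grid.values()), average_degree(grid))
```

In this toy model no qubit in the cell has more than two neighbors, and tiling would raise only the point qubits to three, whereas interior qubits in the square grid already have four.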

The layout represents a significant reduction in connections from the square lattice used in the company's earlier processors—as well as most other quantum computers that rely on superconducting qubits. In that topology qubits typically connect to four neighbors to create a grid of squares. Quantum computers that use trapped-ion qubits like those made by Honeywell and IonQ go even further and permit interactions between any two qubits, though the technology comes with its own set of challenges.

The decision was driven by the kind of qubits that IBM uses, says Nation. The qubits used by companies like Google and Rigetti can be tuned to respond to different microwave frequencies, but IBM's are fixed at fabrication. This makes them easier to build and reduces control system complexity, says Nation. But fixed frequencies also make it harder to avoid frequency clashes when controlling multiple qubits at once. In addition, connections can never be completely turned off, so qubits still exert a weak influence on their neighbors even when not involved in an operation.

Both phenomena can throw calculations off, but by shifting to a topology with fewer connections, IBM researchers were able to significantly reduce both effects, which led to an exponential decline in errors.

Less connectivity makes implementing circuits considerably harder, says Franco Nori, chief scientist at the Theoretical Quantum Physics Laboratory at the Riken research institute in Japan. If two qubits aren't directly linked, getting them to interact involves a series of swap operations that pass their values from qubit to qubit until they are next to each other. The fewer direct connections, the more operations required, and because each is susceptible to errors, the process can become like a game of "telephone," says Nori.

"You whisper the information to your neighbor, but by the time it gets to the other side, the probability of it being crap is huge," he says. "You don't want to have many intermediaries."

The reduced connectivity does push up the number of operations required, says Nation. But the team found the overhead remains constant as devices scale up. If the team keeps up the exponential reduction in errors for each operation, the impact of that overhead will quickly diminish, he adds. "You pay a price," he says. "But if you can continue to improve your two-qubit performance, over time you will more than make up for that cost."
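The trade-off between routing overhead and per-operation error can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than a model of any real device: the 12-qubit ring, the helper names, and the 2 percent swap error rate are all hypothetical, and errors are treated as independent.

```python
from collections import deque


def distance(adj, start, goal):
    """Breadth-first search for the shortest hop count between two qubits."""
    seen = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node == goal:
            return seen[node]
        for nxt in adj[node]:
            if nxt not in seen:
                seen[nxt] = seen[node] + 1
                queue.append(nxt)
    raise ValueError("qubits are not connected")


def swaps_needed(adj, a, b):
    """Swaps required to bring two qubits next to each other."""
    return max(distance(adj, a, b) - 1, 0)


def chain_success(swaps, swap_error):
    """Chance that every swap succeeds, assuming independent errors."""
    return (1.0 - swap_error) ** swaps


# Hypothetical 12-qubit ring, like a single cell with no tiling.
ring = {i: {(i - 1) % 12, (i + 1) % 12} for i in range(12)}

n = swaps_needed(ring, 0, 6)  # qubits on opposite sides of the ring
print(n, chain_success(n, 0.02))
```

Because the success probability falls off as a power of the number of swaps, lowering the per-operation error rate shrinks the penalty for every extra hop, which is the bet Nation describes.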

Whether exponential reductions in error will continue is unclear. Nation admits many of the gains came from the reduction in connectivity, an avenue that has now been exhausted. Further progress will need to come from advances in other areas, such as materials science and hardware design.

The trade-off IBM has made makes sense though, says Fred Chong, a professor at the University of Chicago who studies quantum computing. While Google's tunable qubits can support more connections, they are also more complicated to build, he says, which makes scaling them up harder. Google didn't respond to an interview request.

Edd Gent is a freelance science and technology writer based in Bengaluru, India. His writing focuses on emerging technologies across computing, engineering, energy and bioscience. He's on Twitter at @EddytheGent and email at edd dot gent at outlook dot com. His PGP fingerprint is ABB8 6BB3 3E69 C4A7 EC91 611B 5C12 193D 5DFC C01B. DM for Signal info.