Traditional supercomputers, built to perform calculations at blazing speeds, have fallen behind when it comes to sifting through huge amounts of “Big Data.” That is why IBM has redesigned the next generation of supercomputers to minimize the need to shuffle data between the memory that stores it and the processors that do the computing work. The tech giant recently won US $325 million in federal contracts to build the world’s most powerful supercomputers for two U.S. government labs by 2017.

The first of the two new supercomputers, called “Summit,” will help researchers at the U.S. Department of Energy’s Oak Ridge National Laboratory in Tennessee crunch the data needed to simulate a nuclear reactor and model climate change. The second, named “Sierra,” will provide the predictive power that researchers at Lawrence Livermore National Laboratory in California need to conduct virtual testing of the aging nuclear weapons in the U.S. stockpile. Both supercomputers rely on what IBM calls a “data-centric” architecture, which spreads computing power throughout the system so that data is processed at many different points rather than moved back and forth within the system. The company did not, however, detail how it would accomplish this.

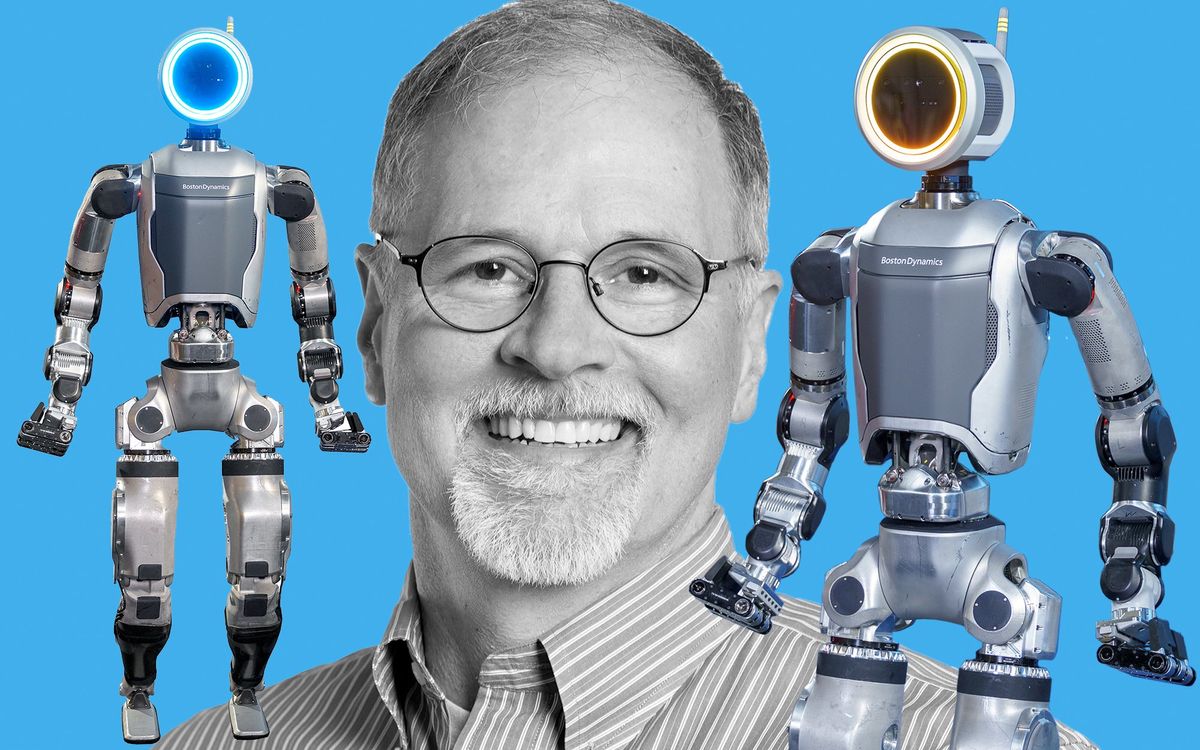

In IBM’s design, the usual torrent of data being shuffled back and forth becomes “like a mighty river that gets siphoned off along the way until it becomes a trickle once it reaches the final destination,” says Dave Turek, vice president of Technical Computing OpenPower at IBM.

If Summit and Sierra were complete today, they would easily be the most powerful supercomputers yet built, with peak performance of more than 100 petaflops (100 thousand trillion calculations per second). Thanks to the new IBM architecture, they would deliver roughly five to 10 times the performance of today’s supercomputers, such as Oak Ridge National Laboratory’s Titan.

IBM’s new supercomputing architecture emphasizes efficiency in both power consumption and physical size. Compared with Titan, Summit would use only slightly more power (perhaps 10 percent more) while delivering five to 10 times the computing power and taking up just one-fifth of the physical space. Power efficiency in particular is crucial for future supercomputer architectures: under the old model, the continuous shuffling of data between chips, boards, and racks would drive power consumption to impractical levels in machines targeting more than an exaflop (one million trillion calculations per second).
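Taking IBM’s rough figures at face value, a back-of-the-envelope calculation shows what they imply for efficiency relative to Titan. The 1.10 power factor is an assumption read from the “perhaps 10 percent” estimate, not a published specification, so the results are only indicative.

```latex
% Assumes Summit draws ~1.10x Titan's power (from "perhaps 10 percent"),
% delivers 5 to 10x Titan's performance, and occupies 1/5 of Titan's floor space.
\begin{aligned}
\frac{\text{performance per watt (Summit)}}{\text{performance per watt (Titan)}}
  &\approx \frac{5 \text{ to } 10}{1.10} \approx 4.5 \text{ to } 9.1 \\
\frac{\text{performance per unit floor space (Summit)}}{\text{performance per unit floor space (Titan)}}
  &\approx \frac{5 \text{ to } 10}{1/5} = 25 \text{ to } 50
\end{aligned}
```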

When necessary, the new Summit and Sierra supercomputers will also be able to zip data around at more than 17 petabytes per second, roughly equivalent to moving more than 100 billion Facebook photos in a second. Such systems would take advantage of NVIDIA’s new NVLink interconnect technology, which will allow IBM’s Power CPUs and NVIDIA’s next-generation GPUs, code-named Volta, to exchange data five to 12 times faster than is possible today. (GPUs tend to beat CPUs when applying a single operation to a large set of data.)
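The photo comparison can be sanity-checked by dividing the bandwidth by the number of photos. Assuming decimal units (1 petabyte = 10^15 bytes, 1 kB = 10^3 bytes), it implies an average photo size of roughly 170 kilobytes; the photo size behind IBM’s comparison is not stated, so this figure is derived here rather than reported.

```latex
% Assumes decimal SI prefixes; the ~170 kB average photo size is inferred, not an IBM figure.
\frac{17 \times 10^{15}\ \text{bytes/s}}{10^{11}\ \text{photos/s}}
  = 1.7 \times 10^{5}\ \text{bytes} \approx 170\ \text{kB per photo}
```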

IBM has also partnered with Mellanox to use that company’s interconnect technology to intelligently manage the flow of data within the future supercomputing systems. Ultimately, though, IBM hopes its new architecture will reduce the rivers of data continuously flowing within the supercomputers to small streams.

Data-centric architecture also represents IBM’s answer to the challenge that so-called Big Data problems pose even for powerful supercomputers. The old supercomputing model, which retrieves data from memory and moves it to a processor for analysis, can no longer keep up with the huge amounts of data being generated every day. Such problems involve datasets of real-world human behavior on a grand scale, ranging from customer purchases on Amazon to the metadata of calls and texts tracked by the U.S. National Security Agency.

The supercomputing challenge of managing mountains of data has been widely recognized by researchers in both academia and industry. It even gave birth to a new benchmark list for supercomputers, called Graph 500, which measures how well supercomputers can sift through heaps of data and make connections between data points. The traditional Top 500 supercomputer list, by comparison, ranks machines on calculation speed.

“Nobody actually cares how fast you can make an algorithm run; they care how fast you can solve a problem,” Turek says. “If the problem is that you’re being overwhelmed by data movement and data management, it’s extraordinarily short-sighted to have a single focus on making faster microprocessors.”

Instead of grasping for a new “magic widget” to fix the problem, IBM took supercomputing back to the drawing board starting in 2009. The company and its coalition of partners still face some engineering challenges in building the new supercomputers, but IBM remains confident that its design philosophy can make the new systems a reality on schedule. Such an architecture could help pave the way toward an exascale supercomputer, the company claims.

But customers won’t have to wait until 2017. IBM says it is already building elements of the new architecture, such as merging computing power with storage, into existing supercomputing systems based on the older computing model. Potential customers such as oil and gas companies, life science firms, financial services companies, and research institutions have begun talking with IBM about using early components of the data-centric architecture to tackle their own Big Data problems, according to the company.

Even smaller research groups without the deep pockets of a U.S. government lab or a huge corporation could soon benefit from IBM’s new architecture, says Turek. That’s because the data-centric architecture can scale down to smaller supercomputing systems just as well as it can scale up beyond the blueprints for Summit and Sierra. “Our intention is to actually tackle the total opportunity space for supercomputing,” Turek says. “This is not just limited to high-end customers.”

Jeremy Hsu has been working as a science and technology journalist in New York City since 2008. He has written on subjects as diverse as supercomputing and wearable electronics for IEEE Spectrum. When he’s not trying to wrap his head around the latest quantum computing news for Spectrum, he also contributes to a variety of publications such as Scientific American, Discover, Popular Science, and others. He is a graduate of New York University’s Science, Health & Environmental Reporting Program.