“We have successfully built a 20-qubit and a 50-qubit quantum processor that works,” Dario Gil, IBM’s vice president of science and solutions, told engineers and computer scientists at IEEE Rebooting Computing’s Industry Forum last Friday. The development both ups the size of commercially available quantum computing resources and brings computer science closer to the point where it might prove definitively whether quantum computers can do something classical computers can’t.

“It’s been fundamentally decades in the making, and we’re really proud of this achievement,” said Gil.

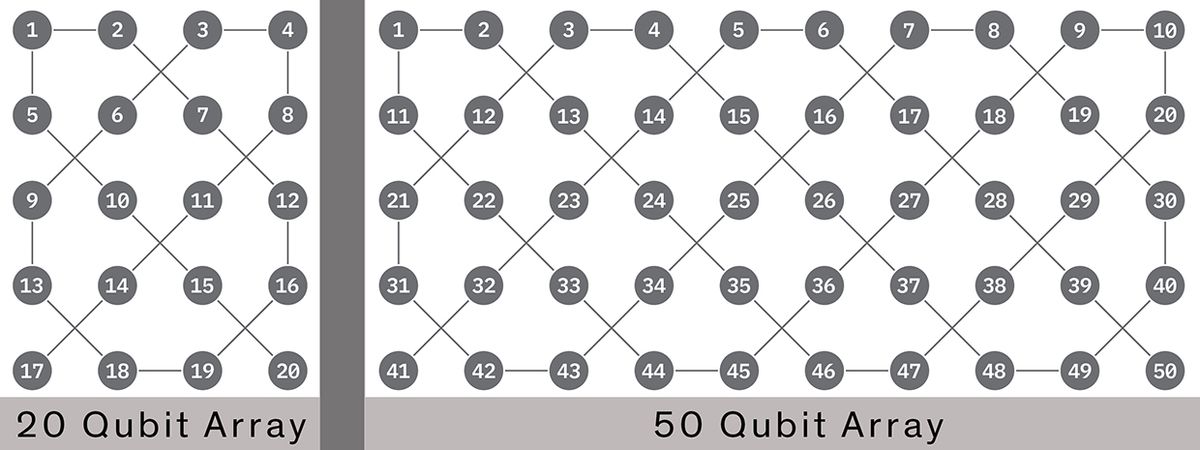

More interconnected qubits translate to exponentially more computing power, so companies have been racing to increase the number of qubits in their experimental processors. The 20-qubit machine, made from improved superconducting qubits that operate at a frigid 15 millikelvin, will be made available to IBM clients through the company’s IBM Q program by the end of 2017. The company first made a 5-qubit machine available in 2016, and then a 16-qubit machine earlier this year.

The 50-qubit device is still a prototype, and Gil did not provide any details regarding when it might become available.

The qubits in the new processors are more stable than those in previous generations. Stability is measured in “coherence time,” the average length of time a qubit will stay in a quantum state of superposition before environmental influences cause it to collapse to either a 1 or 0. The longer the coherence time, the longer the processor has to complete its calculations. The quantum bits in IBM’s 5- and 16-qubit machines averaged 50 and 47 microseconds, respectively, Gil said. The new 20- and 50-qubit machines hit 90 microseconds.
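To get a rough feel for what those coherence figures mean, here is a minimal Python sketch. It assumes, purely for illustration, that coherence decays as a simple exponential with the quoted time as its time constant, and that a calculation takes a hypothetical 10 microseconds; neither the decay model nor the 10-microsecond figure comes from IBM, and `coherent_fraction` is a made-up helper name.

```python
import math

# Coherence times quoted by Gil, in microseconds.
COHERENCE_TIMES_US = {
    "5-qubit": 50.0,
    "16-qubit": 47.0,
    "20- and 50-qubit": 90.0,
}

# Assumption for illustration only: a calculation lasting 10 microseconds.
CALCULATION_TIME_US = 10.0


def coherent_fraction(elapsed_us: float, coherence_time_us: float) -> float:
    """Fraction of coherence remaining after elapsed_us.

    Assumes a simple exponential decay, exp(-t / T), which is a
    simplification and not a model taken from the article.
    """
    return math.exp(-elapsed_us / coherence_time_us)


for machine, t_coherence in COHERENCE_TIMES_US.items():
    fraction = coherent_fraction(CALCULATION_TIME_US, t_coherence)
    print(f"{machine}: {fraction:.1%} coherence left after {CALCULATION_TIME_US:.0f} microseconds")
```

Under these assumptions, the machines with the longer coherence time retain more of their quantum state over the same calculation, which is the sense in which they have longer to finish the job.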

Apart from wanting to achieve practical quantum computing, industry giants, Google in particular, have been hoping to hit a number of qubits that will allow scientists to prove definitively that quantum computers are capable of solving problems that are intractable for any classical machine. Earlier this year, Google revealed plans to field a 49-qubit processor by the end of 2017 that would do the job. But recently, IBM computer scientists showed that it would take a bit more than that to reach a “quantum supremacy” moment. They simulated a 56-qubit system using the Vulcan supercomputer at Lawrence Livermore National Laboratory; their experiments showed that quantum computers will need to have at least 57 qubits.
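Why is simulating a few dozen qubits such a stretch for a supercomputer? The following Python sketch gives a back-of-the-envelope answer. It assumes the most naive approach, in which the classical machine stores all 2^n complex amplitudes of an n-qubit state at 16 bytes apiece (double-precision complex numbers). That is an illustrative assumption, not a description of how the IBM team ran its simulation, and the helper names are hypothetical.

```python
# Assumption: each amplitude is a double-precision complex number (16 bytes).
BYTES_PER_AMPLITUDE = 16


def state_vector_bytes(num_qubits: int) -> int:
    """Bytes needed to hold every amplitude of an n-qubit state at once."""
    return (2 ** num_qubits) * BYTES_PER_AMPLITUDE


def human_readable(num_bytes: int) -> str:
    """Format a byte count using binary (1024-based) units."""
    units = ["B", "KiB", "MiB", "GiB", "TiB", "PiB", "EiB", "ZiB"]
    value = float(num_bytes)
    for unit in units:
        if value < 1024 or unit == units[-1]:
            return f"{value:.0f} {unit}"
        value /= 1024


# Qubit counts mentioned in the article.
for n in (5, 16, 20, 49, 50, 56):
    print(f"{n:2d} qubits: {human_readable(state_vector_bytes(n))}")
```

Each added qubit doubles the memory required. Under this naive scheme a 20-qubit state fits in 16 MiB, while 49 qubits would need 8 PiB and 56 qubits a full exbibyte, which is why classical simulation at this scale depends on cleverer techniques than simply storing the whole state.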

“There’s a lot of talk about a supremacy moment, which I’m not a fan of,” Gil told the audience. “It’s a moving target. As classical systems get better, their ability to simulate quantum systems will get better. But not forever. It is clear that soon there will be an inflection point. Maybe it’s not 56. Maybe it’s 70. But soon we’ll reach an inflection point” somewhere between 50 and 100 qubits.

(Sweden is apparently in agreement. Today it announced an SEK 1 billion program with the goal of creating a quantum computer with at least 100 superconducting qubits. “Such a computer has far greater computing power than the best supercomputers of today,” Per Delsing, professor of quantum device physics at Chalmers University of Technology and the initiative's program director, said in a press release.)

Gil believes quantum computing turned a corner during the past two years. Before that, we were in what he calls the era of quantum science, when most of the focus was on understanding how quantum computing systems and their components work. But 2016 to 2021, he says, will be the era of “quantum readiness,” a period when the focus shifts to technology that will enable quantum computing to actually provide a real advantage.

“We’re going to look back in history and say that [this five-year period] is when quantum computing emerged as a technology,” he told the audience.

Samuel K. Moore is the senior editor at IEEE Spectrum in charge of semiconductors coverage. An IEEE member, he has a bachelor's degree in biomedical engineering from Brown University and a master's degree in journalism from New York University.