Computers have no trouble controlling huge swarms of robots, because the computer can just treat the swarm as a bunch of individually controllable units. But what happens when you have a swarm of really dumb robots, where they're all listening to the exact same controller? Like, you input a command to go left, and every single robot goes left? It seems like this would severely limit what can be done with the swarm, but thanks to some sophisticated algorithms and real world randomness, researchers from Rice University have shown that you can steer a swarm of robots like this into any arrangement you want.

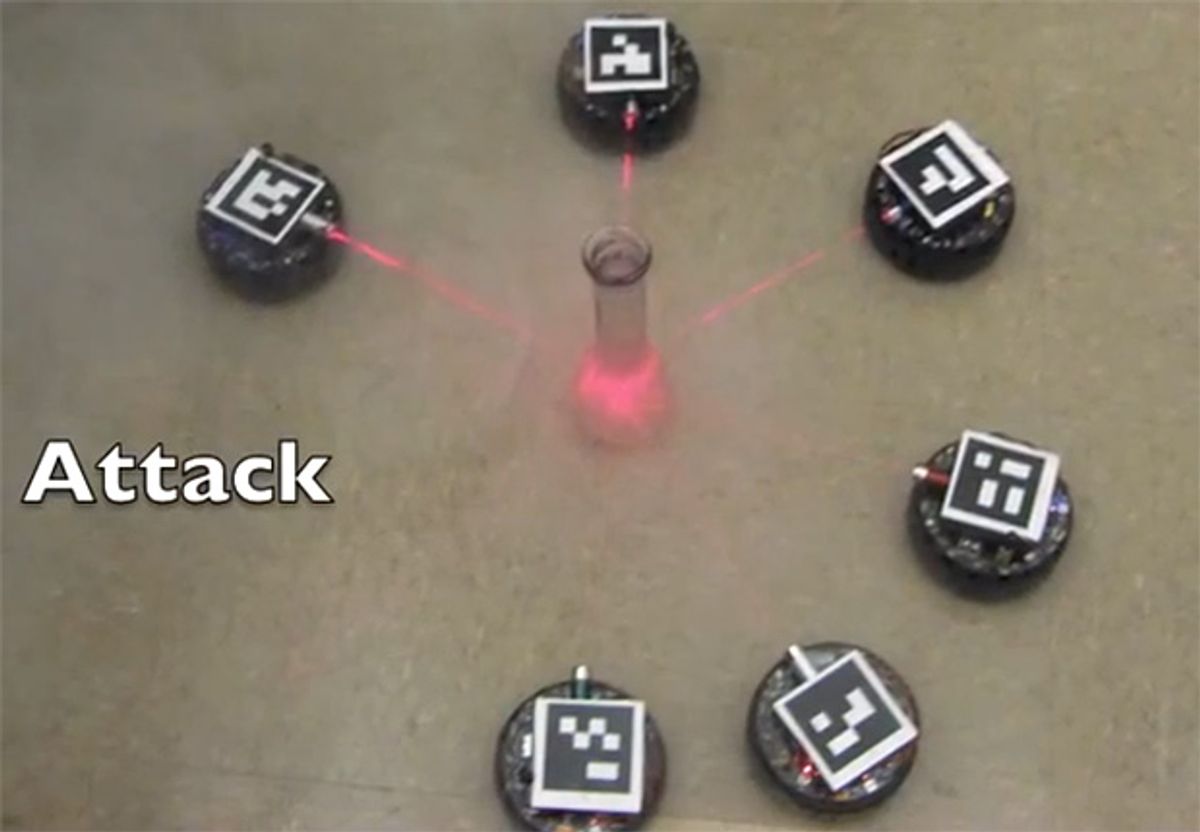

And also, the robots are equipped with laser turrets. Laser turrets.

Here's the problem, as illustrated by Bill Amend in Foxtrot:

The issue with the comic strip is that (shockingly) real life doesn't work this way. While it's certainly possible to control many robots with a single remote, you can't easily control them relative to each other, making it nearly impossible to (say) surround a target.

Inspired by this comic, Aaron Becker set out to show that it is, in fact, possible to do what Jason Fox has done: use one remote to steer a bunch of similar (but not identical) robots into arbitrary positions and orientations, with just forward, backward, and rotate commands that affect all the robots equally. The secret, it turns out, is to take advantage of the rotational noise of the robots. When you send a turn command to your robot swarm, factors like random wheel slip cause each robot to turn a slightly different amount. A computer can track this, and with a clever algorithm, it can exploit it to gradually move robots relative to each other. This video shows the algorithm in action:

The robots in the video form a circle around their target, which isn't how it looks in the comic, but that's fine. The researchers proved that they can take robots from any starting position and get them into any ending position you want, given enough time. Here are a few more examples of that, in simulation and with real robots:

This research is particularly relevant for situations where you have a bunch of robots that can only accept control input from a single source. The best example of this is probably those magnetic nanobots that can zip around inside your eyeballs. Since you've only got one magnetic field to manipulate, using more than one nanobot is going to involve some sort of clever technique like this one.
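To make the turn-noise trick a little more concrete, here is a minimal simulation sketch. It is not the researchers' algorithm: the function names `steer_swarm` and `total_error`, the Gaussian noise model, and the greedy rule for picking the drive distance are all assumptions made for illustration. Every robot receives the same turn command and the same drive command. The only thing that differs between robots is the random error in how far each one actually turns, and the computer uses the observed headings to pick the one shared drive distance that shrinks the total distance-to-goal the most.

```python
import math
import random


def total_error(positions, goals):
    """Sum of squared distances between each robot and its own goal."""
    return sum((p[0] - g[0]) ** 2 + (p[1] - g[1]) ** 2
               for p, g in zip(positions, goals))


def steer_swarm(starts, goals, steps=2000, turn=1.0, noise=0.3, seed=0):
    """Drive every robot with the same commands; return final positions and
    the squared error after each step.

    Assumptions (hypothetical, for illustration only): turn error is
    Gaussian, driving is noise-free, and the computer can observe every
    robot's position and heading.
    """
    rng = random.Random(seed)
    pos = [list(p) for p in starts]
    heading = [0.0] * len(pos)
    n = len(pos)
    errors = [total_error(pos, goals)]

    for _ in range(steps):
        # One broadcast turn command; wheel slip makes each robot turn
        # a slightly different amount.
        for i in range(n):
            heading[i] += turn + rng.gauss(0.0, noise)

        # Project each robot's position error onto its current heading.
        proj = sum((pos[i][0] - goals[i][0]) * math.cos(heading[i]) +
                   (pos[i][1] - goals[i][1]) * math.sin(heading[i])
                   for i in range(n))

        # Shared drive distance that minimizes the summed squared error
        # (negative means "everyone back up").
        d = -proj / n

        # One broadcast drive command; every robot moves the same distance
        # along its own heading.
        for i in range(n):
            pos[i][0] += d * math.cos(heading[i])
            pos[i][1] += d * math.sin(heading[i])

        errors.append(total_error(pos, goals))

    return pos, errors


if __name__ == "__main__":
    starts = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
    goals = [(0.0, 1.0), (2.0, 2.0), (1.0, -1.0)]
    final, errors = steer_swarm(starts, goals)
    print("squared error before:", errors[0])
    print("squared error after: ", errors[-1])
```

Under this greedy rule the total error can never go up, since driving zero distance is always an option. How fast it shrinks depends on how much the headings have drifted apart, which is why the noise helps rather than hurts.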

The researchers (including Aaron Becker and James McLurkin) have devised an online game you can play to help them optimize their algorithms; check it out here. And you can read the paper they'll be presenting at IROS 2013 here.

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.