Based on conversations we’ve had with iRobot CEO Colin Angle, we’re expecting that within the next six months or so, robot vacuums will be able to understand our homes on a much more sophisticated and useful level than ever before. Specifically, they’ll be able to generate maps that persist between cleaning sessions, and these maps will allow the robots to identify and remember specific rooms and adjust their cleaning behavior accordingly. (Neato is also implementing this kind of capability.) For example, if your robot vacuum knows where your kitchen is, it can respond to commands like “Go clean the kitchen,” or autonomously clean there as often as it needs to.

At IROS in September, we got a bit of a sneak peek into how iRobot is going to make this happen, and how much of a difference it can make to the speed and efficiency of home navigation. It’s a big difference, and it can even work on your older (and affordable) Roomba that only has bump sensors on it.

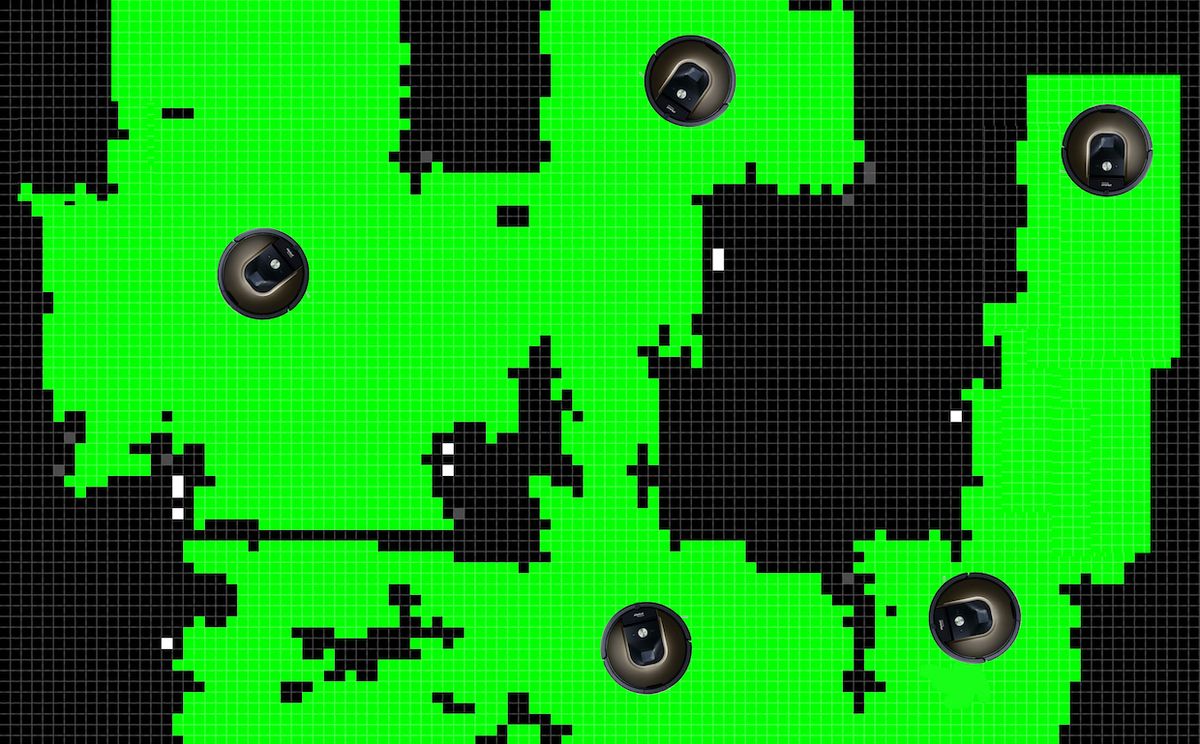

The problem that iRobot is trying to solve here is how to turn a cluttered, messy occupancy grid into something useful. An occupancy grid is a sort of binary map, a representation of whether a given space has something in it or not. As a robot like a Roomba roams around, it adds to the occupancy grid whenever it bumps into something, whether that thing is a wall, a table leg, or a shoe. As you might expect, the occupancy grid that a robot vacuum creates isn’t a very accurate representation of the rooms in your house, but with a little image processing, it doesn’t look all that far off.
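To make the idea concrete, here is a minimal Python sketch of a bump-driven occupancy grid. The class name, the 10 cm cell size, and the coordinates are illustrative assumptions, not details from iRobot.

```python
import math


class OccupancyGrid:
    """Binary map: a cell is occupied once the robot has bumped into something there.

    Hypothetical illustration only; cell size and API are assumptions.
    """

    def __init__(self, cell_size_m=0.1):
        self.cell_size_m = cell_size_m
        self.occupied = set()  # set of (col, row) cells known to contain an obstacle

    def _to_cell(self, x_m, y_m):
        # Convert a position in meters to integer grid coordinates.
        return (math.floor(x_m / self.cell_size_m),
                math.floor(y_m / self.cell_size_m))

    def record_bump(self, x_m, y_m):
        # Called whenever the bump sensor fires at the robot's estimated position.
        self.occupied.add(self._to_cell(x_m, y_m))

    def is_occupied(self, x_m, y_m):
        return self._to_cell(x_m, y_m) in self.occupied


grid = OccupancyGrid(cell_size_m=0.1)
grid.record_bump(1.23, 0.48)  # a table leg, say
grid.record_bump(1.27, 0.41)  # a second bump that falls in the same 10 cm cell
```

Both bumps land in one cell, so the grid only knows that something is there, not what it is. That is why the raw map looks cluttered: a shoe and a wall leave the same kind of mark.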

The next step is the tricky one. Using the kind of CPU power that even old Roombas have, the occupancy grid needs to be segmented into a bunch of different rooms in a way that would make sense to a human. Once that’s done, the robot can plan the most efficient path possible.

iRobot has developed a method called RoomsSeg that’s able to turn a cluttered occupancy grid like the one in the picture above into a nicely segmented map of all the different rooms and hallways in your house. There are existing techniques that can do similar things, but they tend to be computationally expensive, meaning that the robot would need to send maps up to the cloud for processing. This is time-consuming and requires the robot to send data about your home out over the Internet, which may make people uncomfortable. An on-robot solution is far more elegant and efficient.

Rather than just break a floor plan up into chunks, RoomsSeg tries to make sure that the end product is semantically meaningful. For example, it might be tempting for an algorithm to split up long corridors, or to subdivide large rooms that aren’t rectangles. The researchers developed a set of rules to keep things tidy: Corridors are identified as “regions typically of similar width relative to the main direction of the corridor,” while rooms are recognized based on which large open regions are attached to each other through connections wider than the average doorway.
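The doorway rule can be illustrated with a small sketch. This is not RoomsSeg itself, just one way to read the rule: open regions joined by an opening wider than a doorway are treated as a single room. The region names, opening widths, and the 0.9 m doorway threshold are all assumptions made for the example.

```python
DOORWAY_WIDTH_M = 0.9  # assumed "average doorway" width; not taken from the paper


def merge_open_regions(regions, connections, doorway_width_m=DOORWAY_WIDTH_M):
    """Group open regions into rooms.

    regions: list of region names.
    connections: list of (region_a, region_b, opening_width_m) tuples.
    Regions joined by an opening wider than a doorway end up in the same room.
    """
    parent = {region: region for region in regions}

    def find(region):
        # Union-find lookup with path halving.
        while parent[region] != region:
            parent[region] = parent[parent[region]]
            region = parent[region]
        return region

    for region_a, region_b, width_m in connections:
        if width_m > doorway_width_m:
            parent[find(region_a)] = find(region_b)

    rooms = {}
    for region in regions:
        rooms.setdefault(find(region), []).append(region)
    return sorted(sorted(members) for members in rooms.values())


# Hypothetical floor plan: A and B form an L-shaped living room,
# while C and D sit behind ordinary doorways.
regions = ["A", "B", "C", "D"]
connections = [("A", "B", 2.4), ("B", "C", 0.8), ("C", "D", 0.85)]
rooms = merge_open_regions(regions, connections)
print(rooms)  # [['A', 'B'], ['C'], ['D']]
```

The wide 2.4 m opening keeps the L-shaped space together as one room, while the two narrower openings are treated as doorways that separate rooms.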

In the short term, generating maps like these will help Roombas to clean in a systematic way that makes sense to humans and is also significantly faster and more efficient than the pseudo-random approach taken by Roombas without maps, as iRobot engineers write in their IROS paper:

While conventional systematic cleaning needed in total 2.32 hours, of which 0.52 hours were for path following and 1.8 hours for cleaning, room-by-room cleaning needed only 1.9 hours of which 0.3 hours were for path following and 1.6 hours for cleaning. Overall, the total execution time decreased to 82%, path following to 63%, and turning down to 66%.
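As a quick sanity check, the ratios can be recomputed from the rounded hours quoted above. The helper name is ours, and because the inputs are rounded, the results will not necessarily match every percentage in the paper.

```python
def percent_of(new_hours, old_hours):
    """Return new_hours as a whole-number percentage of old_hours."""
    return round(100 * new_hours / old_hours)


# Hours as quoted from the paper (rounded values).
conventional = {"total": 2.32, "path_following": 0.52, "cleaning": 1.8}
room_by_room = {"total": 1.9, "path_following": 0.3, "cleaning": 1.6}

ratios = {
    phase: percent_of(room_by_room[phase], conventional[phase])
    for phase in conventional
}
print(ratios)  # {'total': 82, 'path_following': 58, 'cleaning': 89}
```

The total matches the quoted 82%. The path-following ratio from these rounded hours comes to about 58% rather than the quoted 63%, so the paper's figure was presumably computed from unrounded measurements.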

At this point, iRobot would be relying on users to manually label the segmented rooms—you have to tell the robot that one particular room is a kitchen, one is a living room, one is a bedroom, and so forth. This is something you’d do through the iRobot app. However, we’re guessing that the robots (especially the ones with more sophisticated sensors) are collecting enough data as they go to make educated guesses about what room they happen to be in based on what obstacles they encounter. Either way, once the rooms are segmented and labeled, you’ll be able to schedule cleaning for specific rooms at specific times, and it’ll also open up all kinds of new smart home integration possibilities, as Colin Angle alluded to in our last interview. (iRobot has also announced that Roomba now supports IFTTT functionality.)

As to when all of this might be available, iRobot’s IROS paper says RoomsSeg “has been internally tested on several thousand individual grid maps.” The implication here is that the method seems to be working on iRobot’s side, so in principle, the company could just flip a switch and give us the persistent, intelligent mapping we’ve been waiting for—and we’re more optimistic than usual that it’s a feature we’ll be seeing very soon.

“A Solution to Room-by-Room Coverage for Autonomous Cleaning Robots,” by Alexander Kleiner, Rodrigo Baravalle, Andreas Kolling, Pablo Pilotti, and Mario Munich from iRobot, was presented at IROS 2017 in Vancouver, Canada.

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.