Fifty million posts full of falsehoods about the coronavirus were disseminated on Facebook in April. And 2.5 million ads for face masks, COVID-19 test kits, and other coronavirus products have tried to circumvent an advertising ban since 1 March. The ban was designed to stop scammers and others trying to profit from people’s fears about the pandemic.

Those are just the pieces of coronavirus content that were identified as problematic and flagged or removed by Facebook during the period. The flood of misinformation is certainly larger, as not every fake or exploitative post is easily detectable.

“These are difficult challenges, and our tools are far from perfect,” said a blog post reporting the statistics that was posted today as part of Facebook’s quarterly Community Standards Enforcement Report. The company regularly provides data on its efforts to fight hate speech and other problematic content; today’s report was the first to specifically address coronavirus policy violations.

While Facebook relies on human fact-checkers (it works with 60 fact-checking organizations around the world), the report indicated that the company uses AI to supplement the scrutiny done by human eyes. The 50 million posts flagged were based on 7,500 false articles identified by fact-checkers. (When the company detects misinformation, it flags it with a warning label, which, Facebook indicates, keeps about 95 percent of users from clicking through to view it.)

Some of the tools Facebook deployed were already in place to deal with general misinformation; some were new.

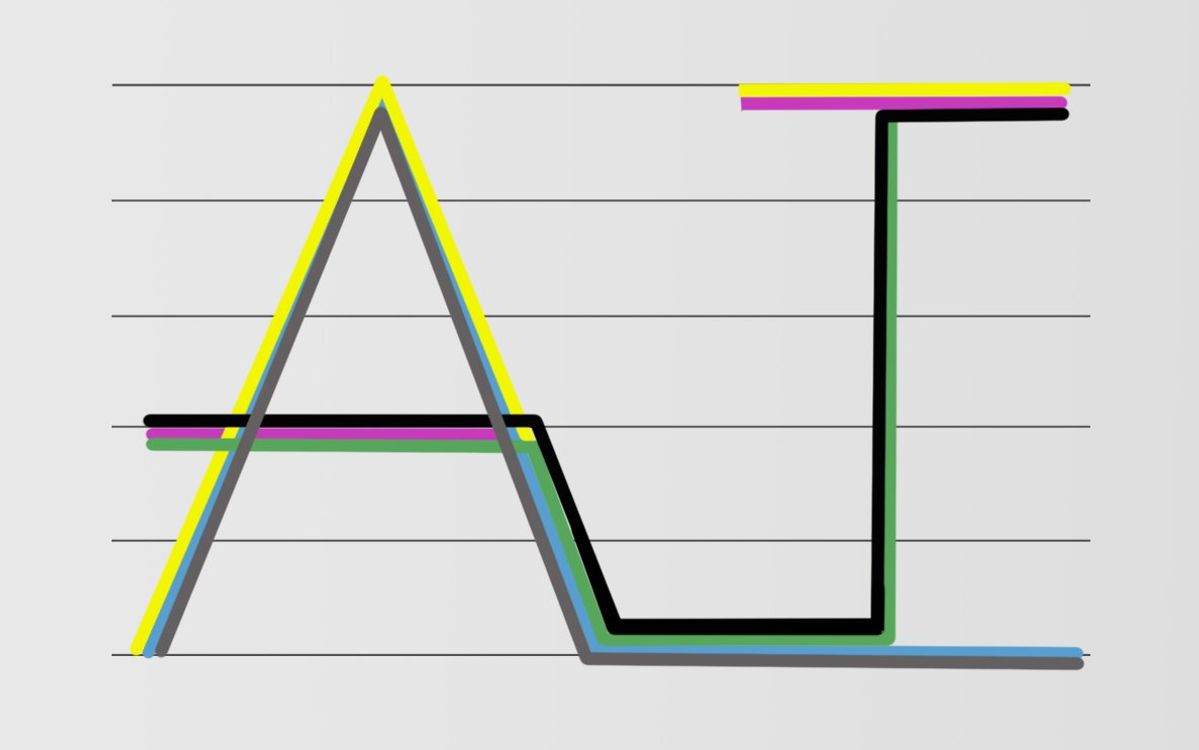

To identify misinformation related to articles spotted by fact-checkers, Facebook reported, its systems had to be trained to detect images that appear alike to a person but typically not to a computer. A good example would be a screenshot of an image from an existing post. To a computer, the pixels are very different, but to most humans, they seem the same. The AI also had to detect the difference between images that are essentially the same in terms of the misinformation being presented but have also been tweaked by the addition of a logo or other overlay. To do this, it launched a new “similarity detector,” SimSearchNet.
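To make the idea of image similarity concrete, here is a minimal Python sketch of a much simpler, well-known technique: an average hash over grayscale pixel grids. It is not SimSearchNet, whose internals are not described here. The function names, the toy 4-by-4 images, and the two-bit tolerance are all assumptions chosen for illustration.

```python
from typing import List

Image = List[List[int]]  # grayscale pixel values, 0-255 (toy stand-in for a real image)


def average_hash(img: Image) -> int:
    """One bit per pixel: 1 if the pixel is brighter than the image's mean, else 0."""
    pixels = [p for row in img for p in row]
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of bit positions in which two hashes differ."""
    return bin(a ^ b).count("1")


def looks_alike(a: Image, b: Image, max_bits: int = 2) -> bool:
    """Treat two images as near-duplicates if their hashes differ in few bits.

    max_bits is an illustrative tolerance, not a value from Facebook's report.
    """
    return hamming(average_hash(a), average_hash(b)) <= max_bits


# A toy "original" image.
ORIGINAL: Image = [
    [10, 10, 200, 200],
    [10, 10, 200, 200],
    [200, 200, 10, 10],
    [200, 200, 10, 10],
]

# A "screenshot" of it: every pixel slightly brighter, plus a one-pixel overlay.
TWEAKED: Image = [[min(255, p + 7) for p in row] for row in ORIGINAL]
TWEAKED[0][0] = 255

# An unrelated image with a different layout of light and dark areas.
UNRELATED: Image = [
    [0, 0, 0, 0],
    [0, 0, 0, 0],
    [255, 255, 255, 255],
    [255, 255, 255, 255],
]

if __name__ == "__main__":
    print("exact pixel match:", ORIGINAL == TWEAKED)
    print("near-duplicate of original:", looks_alike(ORIGINAL, TWEAKED))
    print("unrelated flagged as duplicate:", looks_alike(ORIGINAL, UNRELATED))
```

In this toy case an exact pixel comparison fails on the tweaked copy, while the hash comparison still pairs it with the original and leaves the unrelated image alone. A production system would have to cope with far more varied edits than this sketch does.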

Said Mike Schroepfer, Facebook chief technology officer, in a press conference Tuesday morning, “Our previous systems were accurate, but fragile and brittle; [if someone changed] a small number of pixels, we wouldn’t mark it and take it down.”

Facebook is also applying its existing multimodal content analysis tools, which look at both text and images together to interpret a post.

To block coronavirus product ads, Facebook launched a new system that extracts objects from images known to violate its policy, adds those to a database, and then automatically checks objects in any new images posted against the database.
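The extract, store, and check loop described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Facebook's implementation: the `ObjectDatabase` class, the three-number feature vectors, and the 0.95 similarity threshold are all hypothetical, and the object-extraction step is assumed to have already turned each object into a feature vector.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]  # hypothetical feature vector describing one extracted object


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity between two feature vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class ObjectDatabase:
    """Stores features of objects taken from images known to violate policy."""

    def __init__(self, threshold: float = 0.95) -> None:
        self.threshold = threshold  # illustrative value, not from the report
        self._banned: List[List[float]] = []

    def add_violating_image(self, object_features: Sequence[Vector]) -> None:
        """Record every object extracted from a known-violating image."""
        self._banned.extend(list(f) for f in object_features)

    def matches(self, object_features: Sequence[Vector]) -> bool:
        """True if any object in a new image closely resembles a stored object."""
        return any(
            cosine(obj, banned) >= self.threshold
            for obj in object_features
            for banned in self._banned
        )


# Toy data: one stored "face mask" object, then two new ads.
db = ObjectDatabase()
db.add_violating_image([[0.9, 0.1, 0.0]])

BACKGROUND = [0.0, 0.2, 0.9]
NEW_AD_WITH_MASK = [BACKGROUND, [0.88, 0.12, 0.02]]  # mask on a new background
NEW_AD_CLEAN = [BACKGROUND]

if __name__ == "__main__":
    print("ad with mask blocked:", db.matches(NEW_AD_WITH_MASK))
    print("clean ad blocked:", db.matches(NEW_AD_CLEAN))
```

Because the check runs per object rather than per image, changing the background of the first ad does not hide the stored object, which is the property the object-level approach is meant to provide.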

“This local feature-based solution is…more robust to common adversarial modification tactics like cropping, rotation, occlusion, and noise,” Facebook indicated in the blog post. The database also allowed it to train its classifier to find specific objects—like face masks or hand sanitizer—in new images, rather than relying entirely on finding image matches, the company reported. To improve accuracy, Facebook included what it calls a negative image set, for example, images that are not face masks—a sleep mask, a handkerchief—that the classifier might mistake for a face mask.
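Why a negative image set helps can be shown with a toy classifier. The Python sketch below uses a one-nearest-neighbor rule on made-up two-dimensional features; the classifier choice, the feature values, and every name in it are assumptions for illustration, and nothing here reflects how Facebook's classifier is actually built.

```python
from typing import List, Tuple

Point = Tuple[float, float]      # hypothetical 2-D feature for one image
Example = Tuple[Point, bool]     # (feature, is_face_mask)


def classify(query: Point, training: List[Example]) -> bool:
    """One-nearest-neighbor: return the label of the closest training example."""
    def sq_dist(p: Point) -> float:
        return (p[0] - query[0]) ** 2 + (p[1] - query[1]) ** 2

    _, label = min(training, key=lambda ex: sq_dist(ex[0]))
    return label


# Positive examples: face masks.
FACE_MASKS: List[Example] = [((0.9, 0.8), True), ((0.8, 0.9), True)]

# Easy negatives: images that look nothing like a mask.
EASY_NEGATIVES: List[Example] = [((0.1, 0.1), False), ((0.0, 0.2), False)]

# Hard negatives: look-alikes such as a sleep mask or a handkerchief.
HARD_NEGATIVES: List[Example] = [((0.7, 0.4), False), ((0.6, 0.5), False)]

WITHOUT_HARD = FACE_MASKS + EASY_NEGATIVES
WITH_HARD = FACE_MASKS + EASY_NEGATIVES + HARD_NEGATIVES

SLEEP_MASK_QUERY: Point = (0.65, 0.5)   # a new look-alike image
FACE_MASK_QUERY: Point = (0.85, 0.9)    # a new genuine face mask

if __name__ == "__main__":
    print("look-alike, no hard negatives:", classify(SLEEP_MASK_QUERY, WITHOUT_HARD))
    print("look-alike, with hard negatives:", classify(SLEEP_MASK_QUERY, WITH_HARD))
    print("real mask, with hard negatives:", classify(FACE_MASK_QUERY, WITH_HARD))
```

Trained without the look-alikes, the toy classifier labels the sleep-mask query a face mask; with them in the training set, it rejects the look-alike and still accepts the genuine mask.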

“We didn’t have a classifier sitting on the shelf that recognized face masks,” Schroepfer said, but “we were able to build this quickly because we had been working on this [problem] for a long time.”

Instead of looking at pixels, he said, the company is now looking at objects, and it has built an object-level database as a different way to attack the problem of people trying to upload similar images.

“This is an adversarial system,” Schroepfer explained. “People upload an ad; it gets blocked. So they edit it and try to upload it again, to find one that works.” Naive AI systems, he explained, can be tricked by a mask placed on a background of a similar texture or color, but by focusing on the objects, Facebook’s new system can ignore the pixels around them.

This system, he indicated, is more aggressive in taking down content than the systems that look for misinformation in posts by ordinary users. “When dealing with ads,” he said, “we are willing to take more false positives.”
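The trade-off Schroepfer describes comes down to where a decision threshold is set. The short Python sketch below uses invented classifier scores and two invented thresholds (none of these numbers come from Facebook) to show how a lower bar for ads catches more violations at the cost of more false positives.

```python
from typing import List, Tuple

# (classifier score, actually violates policy?) -- all values are made up.
SCORED: List[Tuple[float, bool]] = [
    (0.95, True),
    (0.80, True),
    (0.65, True),
    (0.70, False),
    (0.40, False),
    (0.20, False),
]

POST_THRESHOLD = 0.75  # hypothetical, stricter bar before acting on user posts
AD_THRESHOLD = 0.60    # hypothetical, more aggressive bar for ads


def errors_at(threshold: float, scored: List[Tuple[float, bool]]) -> Tuple[int, int]:
    """Return (false positives, false negatives) when flagging scores >= threshold."""
    false_pos = sum(1 for score, bad in scored if score >= threshold and not bad)
    false_neg = sum(1 for score, bad in scored if score < threshold and bad)
    return false_pos, false_neg


if __name__ == "__main__":
    print("posts (fp, fn):", errors_at(POST_THRESHOLD, SCORED))
    print("ads   (fp, fn):", errors_at(AD_THRESHOLD, SCORED))
```

On this toy data the stricter threshold blocks nothing by mistake but lets one violation through, while the lower threshold catches every violation and wrongly blocks one legitimate item.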

While AI is a huge help in amplifying the efforts of the 35,000 human moderators employed by the company, Schroepfer stressed that people will stay in the loop and in control.

“I’m not naive,” he said. “I don’t think AI is the solution to every problem. But with AI, we can take the drudgery out and give people power tools, instead of looking at similar images day after day.”

Much work remains to be done, Facebook’s blog post indicated. “But we are confident,” the post said, that “we can build on our efforts so far, further improve our systems, and do more to protect people from harmful content related to the pandemic.”

Tekla S. Perry is a senior editor at IEEE Spectrum. Based in Palo Alto, Calif., she's been covering the people, companies, and technology that make Silicon Valley a special place for more than 40 years. An IEEE member, she holds a bachelor's degree in journalism from Michigan State University.