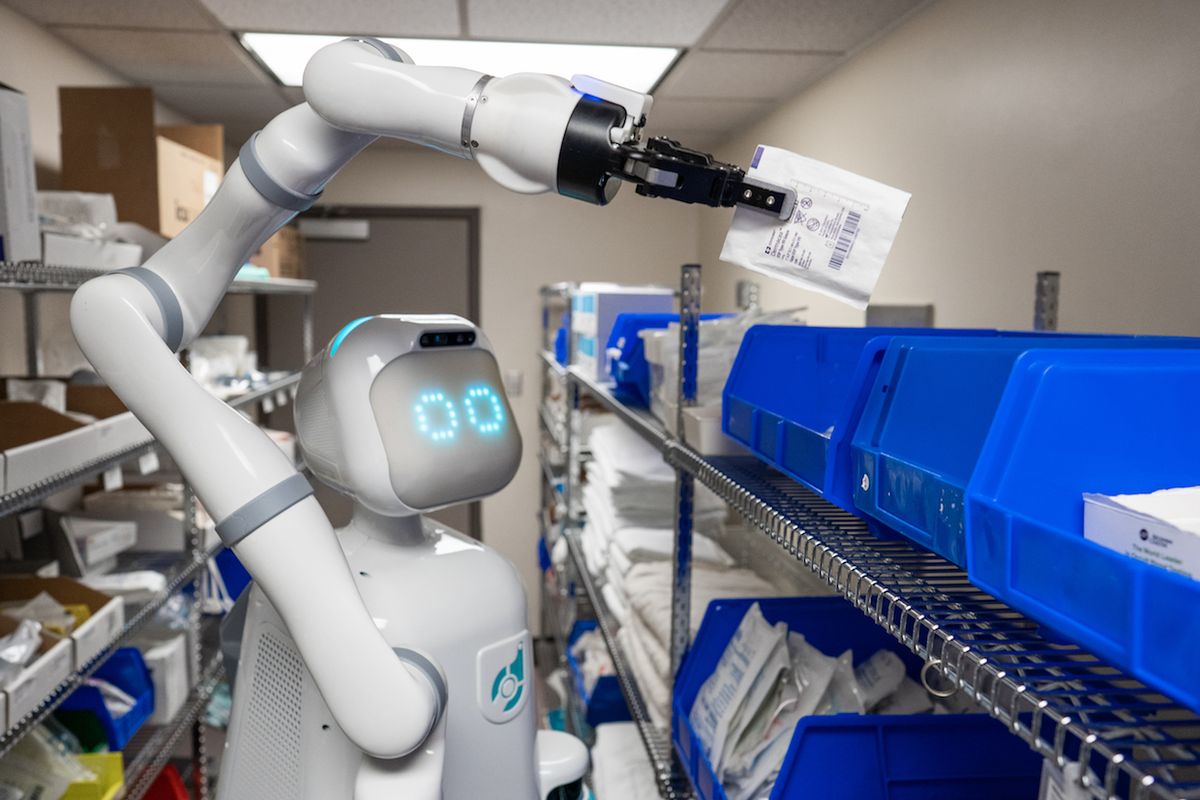

For the last several years, Diligent Robotics has been testing out its robot, Moxi, in hospitals in Texas. Diligent isn’t the only company working on hospital robots, but Moxi is unique in that it’s doing commercial mobile manipulation, picking supplies out of supply closets and delivering them to patient rooms, all completely autonomously.

A few weeks ago, Diligent announced US $10 million in new funding, which comes at a critical time, as the company addressed in their press release:

Now more than ever hospitals are under enormous stress, and the people bearing the most risk in this pandemic are the nurses and clinicians at the frontlines of patient care. Our mission with Moxi has always been focused on relieving tasks from nurses, giving them more time to focus on patients, and today that mission has a newfound meaning and purpose. Time and again, we hear from our hospital partners that Moxi not only returns time back to their day but also brings a smile to their face.

We checked in with Diligent CEO Andrea Thomaz last week to get a better sense of how Moxi is being used at hospitals. “As our hospital customers are implementing new protocols to respond to the [COVID-19] crisis, we are working with them to identify the best ways for Moxi to be deployed as a resource,” Thomaz told us. “The same kinds of delivery tasks we have been doing are still just as needed as ever, but we are also working with them to identify use cases where having Moxi do a delivery task also reduces infection risk to people in the environment.”

Since this is still something that Diligent and their hospital customers are actively working on, it’s a little early for them to share details. But in general, robots making deliveries means that people aren’t making deliveries, which has several immediate benefits. First, it means that overworked hospital staff can spend their time doing other things (like interacting with patients), and second, the robot is less likely to infect other people. It’s not just that the robot can’t get a virus (not that kind of virus, at any rate), but it’s also much easier to keep robots clean in ways that aren’t an option for humans. Besides wiping them down with chemicals, without too much trouble you could also have them autonomously disinfect themselves with UV, which is both efficient and effective.

While COVID-19 only emphasizes the importance of robots in healthcare, Diligent is tackling a particularly difficult set of problems with Moxi, involving full autonomy, manipulation, and human-robot interaction. Earlier this year, we spoke with Thomaz about how Moxi is starting to make a difference to hospital staff.

IEEE Spectrum: Last time we talked, Moxi was in beta testing. What’s different about Moxi now that it’s ready for full-time deployment?

Andrea Thomaz: During our beta trial, Moxi was deployed for over 120 days total, in four different hospitals (one of them was a children’s hospital, the other three were adult acute-care units), working alongside more than 125 nurses and clinicians. The people we were working with were so excited to be part of this kind of innovative research, and to see how this new technology is going to actually impact workloads. Our focus in the beta trials was to try any idea that a customer had of how Moxi could provide value. If it seemed at all reasonable, then we would quickly try to mock something up and try it.

I think it validates our human-robot interaction approach to building the company, of getting the technology out there in front of customers to make sure that we’re building the product that they really need. We started to see common workflows across hospitals (there are different kinds of patient care happening, but the kinds of support and supplies and things that are moving around the hospital are similar), and so then we felt that we had learned what we needed to learn from the beta trial and we were ready to launch with our first customers.

The primary function that Moxi has right now, of restocking and delivery, was that there from the beginning? Or was that something that people asked for and you realized, oh, well, this is how a robot can actually be the most useful?

We knew from the beginning that our goal was to provide the kind of operational support that an end-to-end mobile manipulation platform can do, where you can go somewhere autonomously, pick something up, and bring it to another location and put it down. With each of our beta customers, we were very focused on opportunities where that was the case, where nurses were wasting time.

We did a lot of that kind of discovery, and then you just start seeing that it’s not rocket science: there are central supply places where things are kept around the hospital, and nurses are running back and forth to these places multiple times a day. We’d look at some particular task like admission buckets, or something else that nurses have to do every day, and then we’d say, where are the places that automation can really fit in? Some of that support is just navigation tasks, like going from one place to another; some actually involves manipulation, like you need to press this button or you need to pick up this thing. But with Moxi, we have a mobility and a manipulation component that we can put to work, to redefine workflows to include automation.

You mentioned that as part of the beta program you were mocking things up to try all kinds of customer ideas. Was there something that hospitals really wanted the robot to do, that you mocked up and tried but that just didn’t work at all?

We were pretty good at not setting ourselves up for failure. I think the biggest thing would be, if there was something that was going to be too heavy for the Kinova arm, or the Robotiq gripper, that’s something we just can’t do right now. But honestly, it was a pretty small percentage of things that we were kind of asked to manipulate that we had to say, oh no, sorry, we can’t lift that much or we can’t grip that wide. The other reason that things that we tried in the beta didn’t make it into our roadmap is if there was an idea that came up with only one of the beta sites. One example is delivering water: One of the beta sites was super excited about having water delivered to the patients every day, ahead of medication deliveries, which makes a lot of sense, but when we start talking to hospital leadership or other people, in other hospitals, it’s definitely just a “nice to have.” So for us, from a technical standpoint, it doesn’t make as much sense to devote a lot of resources into making water delivery a real task if it’s just going to be kind of a “nice to have” for a small percentage of our hospitals. That’s more how that R&D went—if we heard it from one hospital we’d ask, is this something that everybody wants, or just an idea that one person had.

Let’s talk about how Moxi does what it does. How does the picking process work?

We’re focused on very structured manipulation; we’re not doing general purpose manipulation, and so we have a process for teaching Moxi a particular supply room. There are visual cues that are used to orient the robot to that supply room, and then once you are oriented you know where a bin is. Things don’t really move around a great deal in the supply room; the bigger variability is just how full each of the bins is.

The things that the robot is picking out of the bins are very well known, and we make sure that hospitals have a drop off location outside the patient’s room. In about half the hospitals we were in, they already had a drawer where the robot could bring supplies, but sometimes they didn’t have anything, and then we would install something like a mailbox on the wall. That’s something that we’re still working out exactly—it was definitely a prototype for the beta trials, and we’re working out how much that’s going to be needed in our future roll out.

These aren’t supply rooms that are dedicated to the robot—they’re also used by humans who may move things around unpredictably. How does Moxi deal with the added uncertainty?

That’s really the entire focus of our human-guided learning approach—having the robot build manipulation skills with perceptual cues that are telling it about different anchor points to do that manipulation skill with respect to, and learning particular grasp strategies for a particular category of objects. Those kinds of strategies are going to make that grasp into that bin more successful, and then also learning the sensory feedback that’s expected on a successful grasp versus an unsuccessful one, so that you have the ability to retry until you get the expected sensory feedback.
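The retry behavior Thomaz describes, attempting a grasp, comparing the sensor readings with what a successful grasp should feel like, and trying again if they don't match, can be sketched in a few lines of Python. This is only an illustrative sketch and not Diligent's implementation: the `GraspFeedback` fields, the tolerance values, the strategy names, and the `try_grasp` callback are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence, Tuple


@dataclass
class GraspFeedback:
    """Hypothetical sensor readings taken after the gripper closes."""
    gripper_width_mm: float  # how far apart the fingers ended up
    grip_force_n: float      # force measured at the fingertips


def looks_successful(fb: GraspFeedback, expected_width_mm: float,
                     tolerance_mm: float = 5.0,
                     min_force_n: float = 1.0) -> bool:
    """True when the readings match what a good grasp of this item feels like."""
    width_ok = abs(fb.gripper_width_mm - expected_width_mm) <= tolerance_mm
    return width_ok and fb.grip_force_n >= min_force_n


def grasp_with_retry(try_grasp: Callable[[str], GraspFeedback],
                     strategies: Sequence[str],
                     expected_width_mm: float,
                     max_attempts: int = 4) -> Optional[Tuple[str, int]]:
    """Cycle through grasp strategies until the feedback looks right.

    Returns (strategy, attempt_number) on success, or None if every
    attempt produced unexpected feedback.
    """
    for attempt in range(1, max_attempts + 1):
        strategy = strategies[(attempt - 1) % len(strategies)]
        feedback = try_grasp(strategy)
        if looks_successful(feedback, expected_width_mm):
            return strategy, attempt
    return None


if __name__ == "__main__":
    # Stand-in for the real robot: the top-down grasp closes on nothing,
    # while the angled grasp ends up holding a roughly 40 mm wide item.
    def fake_try_grasp(strategy: str) -> GraspFeedback:
        if strategy == "angled":
            return GraspFeedback(gripper_width_mm=40.0, grip_force_n=3.0)
        return GraspFeedback(gripper_width_mm=0.0, grip_force_n=0.0)

    print(grasp_with_retry(fake_try_grasp, ["top_down", "angled"], 42.0))
```

In a real system the expected feedback would presumably be learned per object category rather than hard-coded, which is the part the human-guided learning approach is meant to supply.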

There must also be plenty of uncertainty when Moxi is navigating around the hospital, which is probably full of people who’ve never seen it before and want to interact with it. To what extent is Moxi designed for those kinds of interactions? And if Moxi needs to be somewhere because it has a job to do, how do you mitigate or avoid them?

One of the things that we liked about hospitals as a semi-structured environment is that even the human interaction that you’re going to run into is structured as well, more so than somewhere like a shopping mall. In a hospital you have a kind of idea of the kind of people that are going to be interacting with the robot, and you can have some expectations about who they are and why they’re there and things, so that’s nice.

We had gone into the beta trial thinking, okay, we’re not doing any patient care, we’re not going into patients’ rooms, we’re bringing things to right outside the patient rooms, we’re mostly going to be interacting with nurses and staff and doctors. We had developed a lot of the social capabilities, little things that Moxi would do with the eyes or little sounds that would be made occasionally, really thinking about nurses and doctors that were going to be in the hallways interacting with Moxi. Within the first couple weeks at the first beta site, the patients and general public in the hospital were having so many more interactions with the robot than we expected. There were people who were, like, grandma is in the hospital, so the entire family comes over on the weekend, to see the robot that happens to be on grandma’s unit, and stuff like that. It was fascinating.

We always knew that being socially acceptable and fitting into the social fabric of the team was important to focus on. A robot needs to have both sides of that coin—it needs to do something functional, be a utility, and provide value, but also be socially acceptable and something that people want to have around. But in the first couple weeks in our first beta trial, we quickly had to ramp up and say, okay, what else can Moxi do to be social? We had the robot, instead of just going to the charger in between tasks, taking an extra social lap to see if there’s anybody that wants to take a selfie. We added different kinds of hot word detections, like for when people say “hi Moxi,” “good morning, Moxi,” or “how are you?” Just all these things that people were saying to the robot that we wanted to turn into fun interactions.

I would guess that this could sometimes be a little problematic, especially at a children’s hospital where you’re getting lots of new people coming in who haven’t seen a robot before—people really want to interact with robots and that’s independent of whether or not the robot has something else it’s trying to do. How much of a problem is that for Moxi?

That’s on our technical roadmap. We still have to figure out socially appropriate ways to disengage. But what we did learn in our beta trials is that there are even just different navigation paths that you can take, by understanding where crowds tend to be at different times. Like, maybe don’t take a path right by the cafeteria at noon, instead take the back hallway at noon. There are always different ways to get to where you’re going. Houston was a great example—in that hospital, there was this one skyway where you knew the robot was going to get held up for 10 or 15 minutes taking selfies with people, but there was another hallway two floors down that was always empty. So you can kind of optimize navigation time for the number of selfies expected, things like that.
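The idea of trading travel time against expected selfie stops can be illustrated with a small route chooser. This is a minimal sketch under stated assumptions, not how Moxi actually plans paths: the `Route` class, the travel times, and the hourly delay table are invented for the example, with only the 10-to-15-minute skyway holdup taken from the interview.

```python
from dataclasses import dataclass, field
from typing import Dict, Sequence


@dataclass
class Route:
    """A candidate path with a fixed travel time and hour-dependent crowd delay."""
    name: str
    travel_min: float
    # Hypothetical table: hour of day (0-23) -> expected minutes lost to crowds.
    crowd_delay_min: Dict[int, float] = field(default_factory=dict)

    def expected_minutes(self, hour: int) -> float:
        # Hours missing from the table are assumed to have no crowd delay.
        return self.travel_min + self.crowd_delay_min.get(hour, 0.0)


def pick_route(routes: Sequence[Route], hour: int) -> Route:
    """Choose the route with the lowest expected total time at this hour."""
    return min(routes, key=lambda r: r.expected_minutes(hour))


if __name__ == "__main__":
    routes = [
        # Shorter, but at noon the robot is assumed to lose about 12.5 minutes.
        Route("skyway", travel_min=4.0, crowd_delay_min={12: 12.5}),
        # Longer detour two floors down that is assumed to stay empty.
        Route("back hallway", travel_min=7.0),
    ]
    for hour in (3, 12):
        print(hour, pick_route(routes, hour).name)
```

With these made-up numbers the chooser prefers the skyway at 3 a.m. and the back hallway at noon, which mirrors the cafeteria and skyway examples in the answer above.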

To what extent is the visual design of Moxi intended to give people a sense of what its capabilities are, or aren’t?

For us, it started with the functional things that Moxi needs. We knew that we’re doing mobile manipulation, so we’d need a base, and we’d need an arm. And we knew we also wanted it to have a social presence, and so from those constraints, we worked with our amazing head of design, Carla Diana, on the look and feel of the robot. For this iteration, we wanted to make sure it didn’t have an overly humanoid look.

Some of the previous platforms that I used in academia, like the Simon robot or the Curie robot, had very realistic eyes. But when you start to talk about taking that to a commercial setting, now you have these eyeballs and eyelids and each of those is a motor that has to work every day all day long, so we realized that you can get a lot out of some simplified LED eyes, and it’s actually endearing to people to have this kind of simplified version of it. The eyes are a big component—that’s always been a big thing for me because of the importance of attention, and being able to communicate to people what the robot is paying attention to. Even if you don’t put eyeballs on a robot, people will find a thing to attribute attention to: They’ll find the camera and say, “oh, those are its eyes!” So I find it’s better to give the robot a socially expressive focus of attention.

I would say speech is the biggest one that we have drawn the line on. We want to make sure people don’t get the sense that Moxi can understand the full English language, because I think people are getting really used to speech interfaces, and we don’t have an Alexa or anything like that integrated yet. That could happen in the future, but we don’t have a real need for it right now, so it’s not there. We want to make sure people don’t think of the robot as an Alexa or a Google Home or a Siri that you can just talk to, so we make sure that it just does beeps and whistles, and that kind of makes sense to people. They get that you can say stuff like “hi Moxi,” but that’s about it.

Otherwise, I think the design is really meant to be socially acceptable. We want to make sure people are comfortable, because like you’re saying, this is a robot that a lot of people are going to see for the first time. We have to be really sensitive to the fact that the hospital is a stressful place for a lot of people. You’re already there with a sick family member and you might have a lot going on, and we want to make sure that we aren’t contributing additional stress to your day.

You mentioned that you have a vision for human-robot teaming. Longer term, how do you feel like people should be partnering more directly with robots?

Right now, we’re really focused on looking at operational processes that hit two or three different departments in the hospital and require a nurse to do this and a patient care technician to do that and a pharmacy or a materials supply person to do something else. We’re working with hospitals to understand how that whole team of people is making some big operational workflow happen and where Moxi could fit in.

Some places where Moxi fits in, it’s a completely independent task. Other places, it might be a nurse on a unit calling Moxi over to do something, and so there might be a more direct interaction sometimes. Other times it might be that we’re able to connect to the electronic health record and infer automatically that something’s needed and then it really is just happening more in the background. We’re definitely open to both explicit interaction with the team where Moxi’s being called to do something in particular by someone, but I think some of the more powerful examples from our beta trials were ones that really take that cognitive burden off of people—where Moxi could just infer what could happen in the background.

In terms of direct collaboration, like a side-by-side working together kind of thing, I do think there are just such vast differences. If you’re talking about a human and a robot cooperating on some manipulation task, it’s going to be a while before a robot is going to be as capable. If you already have a person there, doing some kind of manipulation task, it’s going to be hard for a robot to compete, and so I think it’s better to think about places where the person could be used for better things and you could hand something else off entirely to the robot.

So how feasible in the near-term is a nurse saying, “Moxi, could you hold this for me?” How complicated or potentially useful is that?

I think that’s a really interesting example. We did a little bit of that kind of on-demand work in some of our beta trials (you know, “hey Moxi, come over here and do this thing”) just to look at on-demand versus pre-planned activities. If you can find things in workflows that can be automated, so that what the robot is going to be doing can be inferred, we think that’s going to be the biggest bang for your buck in terms of the value that the robot’s able to deliver.

I think that there may come a day where every clinician’s walking around and there’s always a robot available to respond to “hey, hold this for me,” and I think that would be amazing. But for now, the question is whether the robot being like a third hand for any particular clinician is the most valuable thing that this mobile manipulation platform could be doing, when it could instead be working all night long to get things ready for the next shift.

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.