Looking at a stereoscopic 3-D display takes some mental gymnastics. When you look at objects in real life, your brain expects the area of focus to be the same as where your eyes need to converge. But in order to see in stereoscopic 3-D—in which a different image is presented to each eye—you focus on the screen but your eyes converge where the image appears to be. For some, this is a headache-inducing dilemma.

Holograms get around that by projecting light right to the spot where your eyes would focus: The light beams travel through that point and hit your eyes just as if they’d come from an object that was actually there. Even better, holograms work from any angle and don’t require glasses. Up until now this type of display has been a weighty affair, requiring large projectors and screens or a very restricted viewing angle. But two companies, Ostendo Technologies and Hewlett-Packard spin-off Leia, promise to put such holographic displays—more properly called light-field displays—in your pocket within a year or two. It might not be Princess Leia projected from an astromech droid, but it’s close.

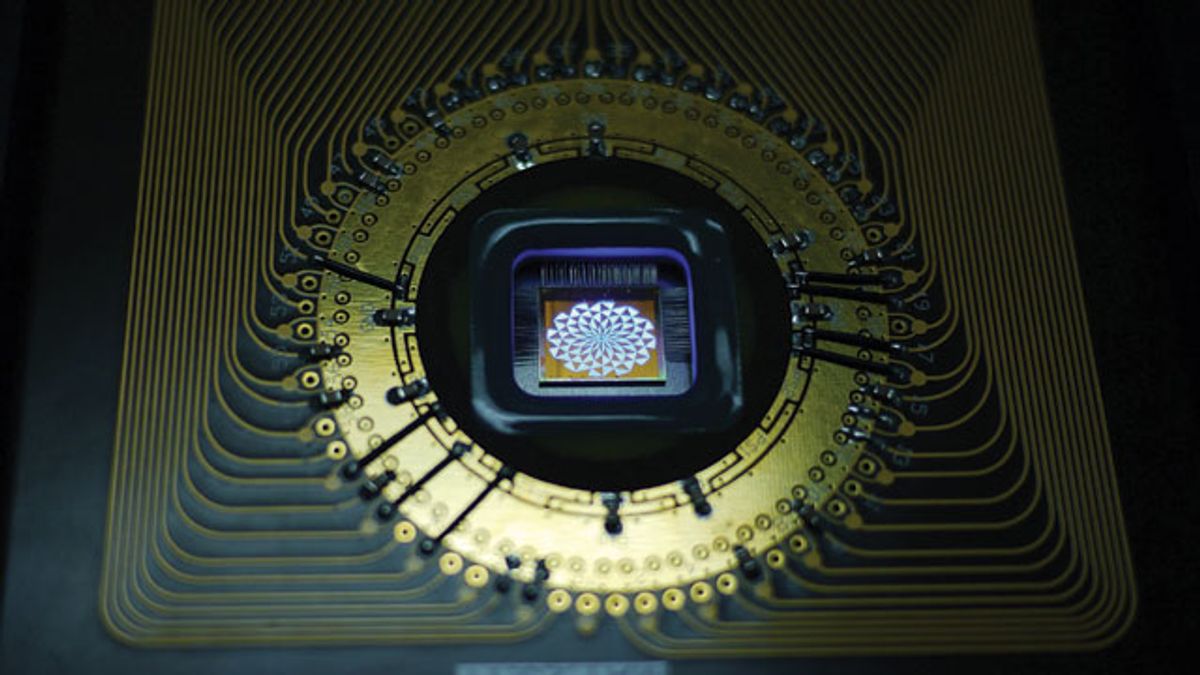

At Display Week in June, Ostendo demonstrated the culmination of nine years of work, an array of eight Quantum Photonic Imager (QPI) chips in a grid projecting three spinning green dice—one seemingly floating behind the display, one at chip level, and the third in front of the chips.

“Almost every display you see emits light that goes everywhere,” says Hussein El-Ghoroury, Ostendo’s CEO. “In contrast, the QPI collimates the light to a very narrow angle before emitting it, so you can emit different images in different directions.” Ostendo’s 3-D images are viewable from 2,500 perspectives.

Each of the 1 million pixels on Ostendo’s little chip consists of a layer each of red, green, and blue micro-LEDs (or lasers, in some iterations) sitting on top of its own small silicon image processor. The pixels are between 5 and 10 micrometers on a side. By modulating the power to the individual layers, each pixel can send out any color of light in a thin, focused beam. Multiple vertical waveguides carry the light out from the layers and modulate its direction (company representatives won’t specify exactly how), and an array of microlenses focuses and directs the beam further. Having an image processor under each pixel saves power and lightens the overall computational load, which is considerable for complex images because they must be simultaneously rendered for viewing from thousands of different perspectives.

“You need to pack a lot of pixels into a small area to create this light-field effect,” says Martin Banks, a professor of optometry and vision science at the University of California, Berkeley, who uses light-field displays in his research on vision. (Banks helped with Ostendo’s application for a U.S. government grant by evaluating the QPI chip’s capabilities.) “That’s what’s promising about their technology—small, low-power-consumption devices that can generate a lot of light with very tightly packed pixels.”

Banks says that along with the high computational load for such a display, another challenge is the geometry of the display itself. Manufacturing microlenses for placement in front of light-field display pixels is difficult because the shapes and positions must be just right to steer the beams at the correct angles. And with so many viewing perspectives to produce, resolution can be a real problem, says Gordon Wetzstein, a researcher at the MIT Media Lab, who is working to address the issues of data volume and resolution that affect all small-scale, glasses-free 3-D technology. “It’s really hard to give multiview or light-field images at high resolution,” he says. “If you want to have 10 views, each of the views is 10 times lower resolution than the original display.” Wetzstein is developing software to weave different views together so that 3-D displays will be less computationally intensive and won’t have to sacrifice as much resolution.
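Wetzstein’s arithmetic can be made concrete with a rough sketch. It assumes a panel’s pixels are divided evenly among the views, which real designs may not do exactly. The full-HD panel size and the function name `per_view_pixels` are illustrative assumptions, not figures or code from any company mentioned here.

```python
def per_view_pixels(total_pixels: int, views: int) -> int:
    """Pixels left for each view if the panel's pixels are split evenly.

    Assumption: a simple even split; actual displays may share or
    weave pixels between views differently.
    """
    if views < 1:
        raise ValueError("views must be at least 1")
    return total_pixels // views


# Hypothetical full-HD panel, chosen only for illustration.
PANEL_PIXELS = 1920 * 1080

if __name__ == "__main__":
    for views in (1, 10, 64):
        print(f"{views:>2} views: {per_view_pixels(PANEL_PIXELS, views):,} pixels per view")
```

Under that assumption, 10 views leave each view with a tenth of the panel’s roughly 2 million pixels, and 64 views leave about 32,000 pixels apiece, which is why packing many tiny pixels into a small area matters so much for these displays.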

“Everybody wants to put 3-D on a smartphone,” says David Fattal, founder of Leia, a light-field display start-up. “And customers aren’t going to want to compromise between a holographic 3-D phone that has mediocre 2-D performance and a normal phone. They’re going to want the best of the best.”

Leia’s 3-D display works by putting a grid of gratings behind an ordinary LCD. The gratings point the light beneath them in different directions, creating up to 64 different viewing angles for a 3-D image or video. Fattal’s goal is a system that would be easy to scale up and integrate with existing screens or even with transparent displays. The company is planning its first commercial product for 2015.

The light-field display “is going to be the next big revolution in displays,” says UC Berkeley’s Banks. “But the public won’t accept it if it doesn’t have good color, doesn’t look high resolution. Right now, it’s not feasible with cost-effective equipment. But computers are getting faster, and people are getting smarter and learning shortcuts, so someday that will be achievable.”

“That day will come,” says El-Ghoroury. “Maybe two to three years, not much longer.”