Halfway to Mars

How a hardy band of researchers braved freezing nights, bad food, and high winds in the Chilean desert to test the next generation of planetary rovers

Out on the rocky horizon, the robot has stopped dead in its tracks. “Uh, Dave, I got a big problem out here,” a voice crackles over the radio.

“OK,” David Wettergreen replies carefully, peering off in the direction of the machine. “Define ‘big.’”

“Big” turns out to be a new part for the robot that doesn’t quite fit and so prevents the robot’s cameras, its eyes, from turning properly. Back at the laboratory, this would be a quick fix, but the robot, Wettergreen, three geologists, two software engineers, two sociologists, an electrical engineer, a mechanical engineer, and a biologist are all out in the middle of Chile’s vast Atacama Desert [see map], many hours’ drive from civilization.

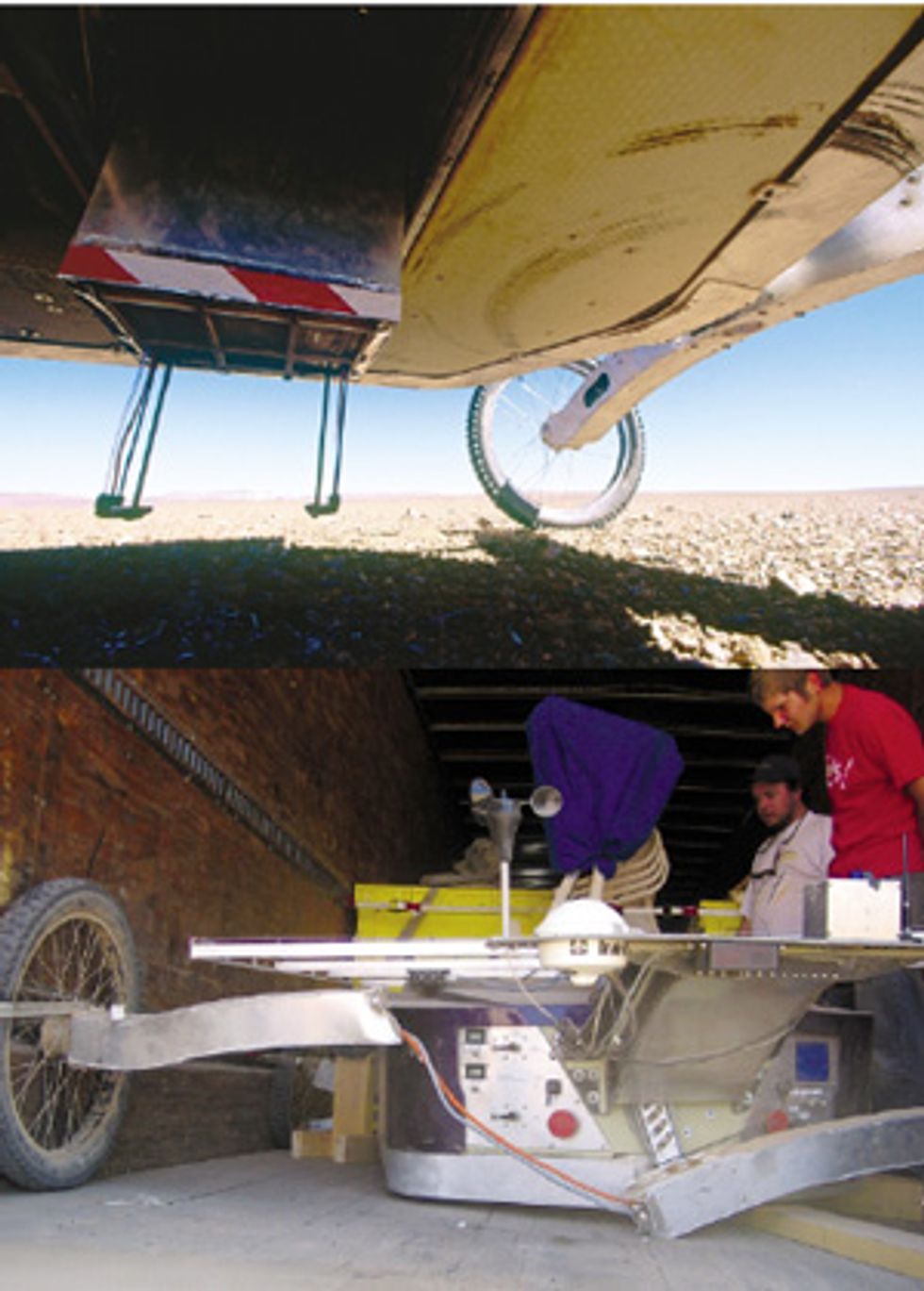

As he strides off to investigate, you get the sense Wettergreen’s enjoying himself. For the better part of an hour, he and two colleagues will wrestle with the aberrant part [see photo, "All in a Day's Work"]. The tedious work produces the standard amount of swearing, but they also joke—when one of them wields his drill stick-’em-up style, Wettergreen gamely throws up his hands. Finally they conclude that the job would be better handled back at the base camp.

Wettergreen, an associate research professor at Carnegie Mellon University’s Robotics Institute, in Pittsburgh, and his team have been roughing it here in the Atacama since August, and they’ll remain until November, just as South America’s spring gives way to summer. They’ve come to test out new concepts and designs for the next generation of planetary rover, because this place, more than any other on Earth, approximates the barren, arid rockiness of the Red Planet. Testing the rover means pushing the technology to its limits, and sometimes beyond. The robot is so unusual and so new that breaking down is, for now, anyway, what it’s supposed to do. “A hundred percent success means we’re not really trying hard enough,” Wettergreen says.

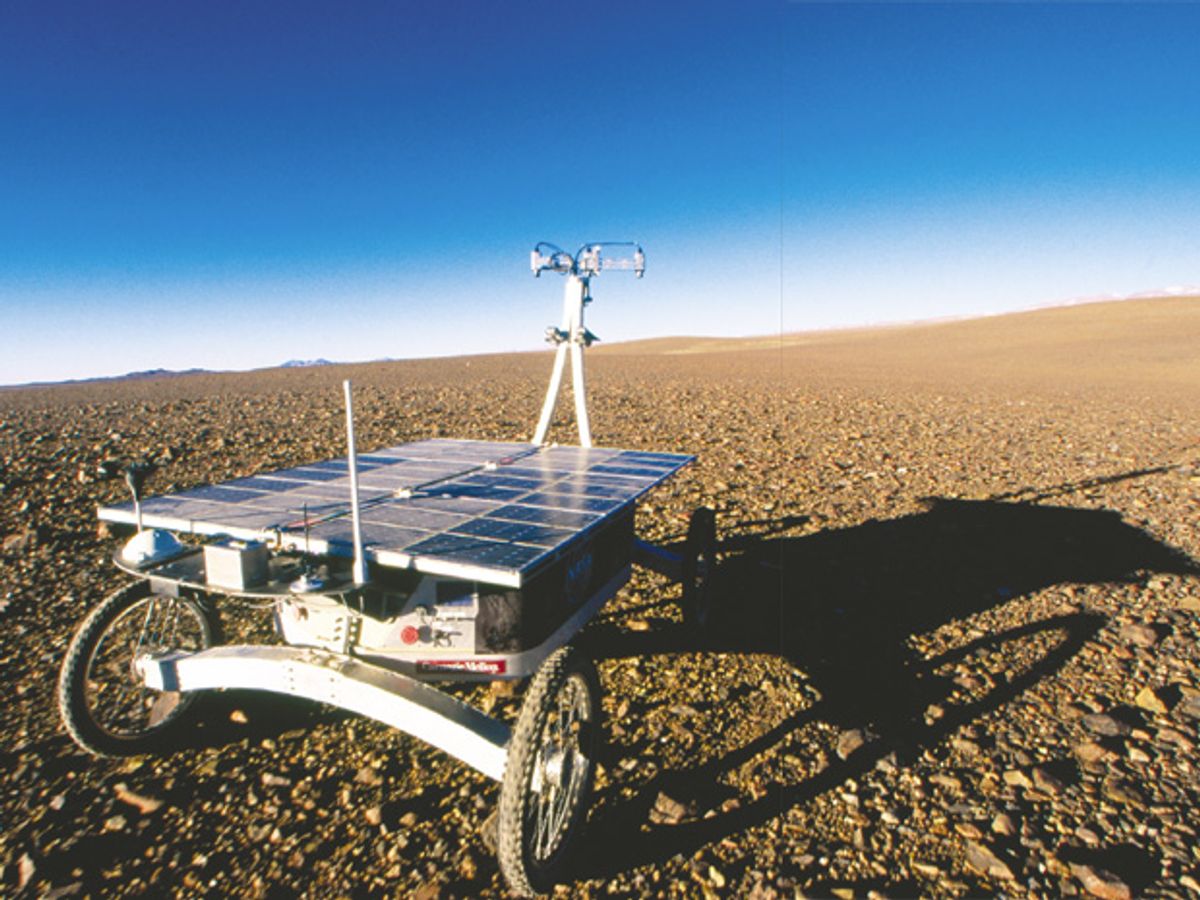

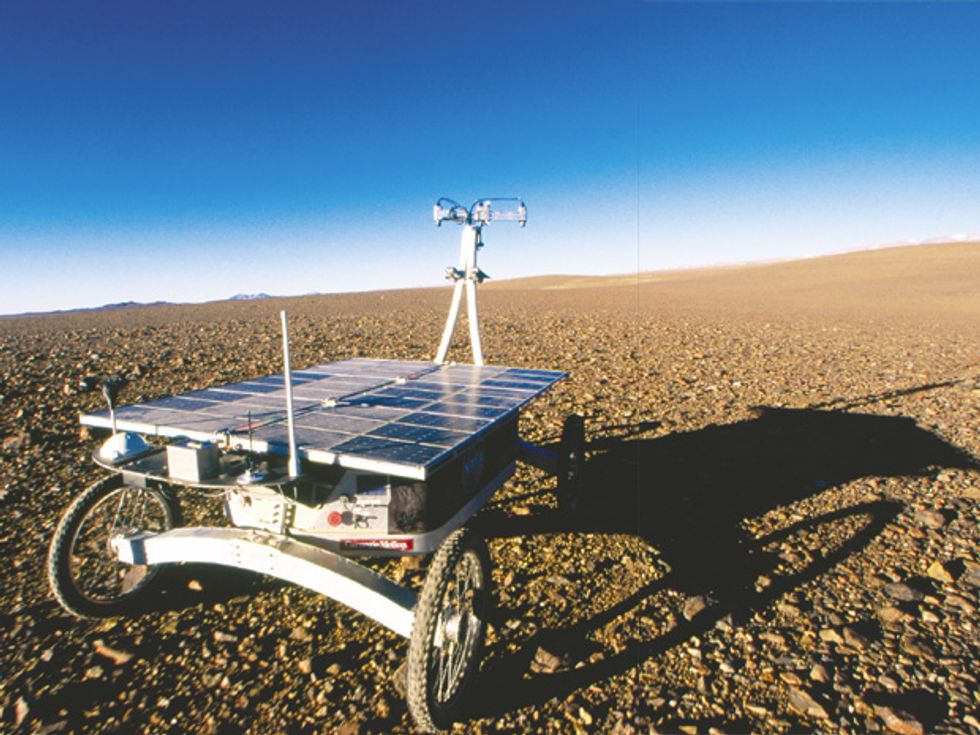

It isn’t the most elegant-looking machine ever built. Weighing in at 180 kilograms, the rover, dubbed Zoë, looks something like a motorized, overgrown ice cream cart. But it is beautiful in the one way that really matters to planetary scientists: unlike all the rovers built thus far, Zoë can roam autonomously [see photo, "Autopilot"]. Its lesser rover cousins still need human drivers back on Earth to issue steady streams of commands that enable the robots to pick their way gingerly among the boulders, slopes, and ridges that constantly threaten to trap or upend them; one false move could terminate an interplanetary mission costing hundreds of millions of dollars [see “Meanwhile, Back on Mars...”]. Zoë is smarter: it can sense, among other things, when it’s on an incline of more than 30 degrees or nearing a too-precipitous drop-off; in such situations, it is programmed to seek an easier route.

The rover can even make some rudimentary decisions about what terrain to explore. In a set of experiments conducted in Chile, Zoë successfully determined which tests to run at a given location. It started by taking an initial image of the spot; based on the density and types of rocks it was seeing, it calculated the probability of finding life there. When it figured the probability to be high enough, it ran through a sequence of tests to look for chlorophyll; when it detected chlorophyll, it went on to check for carbohydrates, proteins, and other signs of life.
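The staged testing described above can be pictured as a short decision cascade. The following is only a minimal sketch, not Zoë's actual software: the function name `plan_tests`, the `detect` callback, and the 0.5 probability threshold are all assumptions made for illustration.

```python
from typing import Callable, List


def plan_tests(life_probability: float,
               detect: Callable[[str], bool],
               threshold: float = 0.5) -> List[str]:
    """Return the ordered list of tests run at one location.

    life_probability: estimate derived from the initial image (hypothetical input).
    detect: hypothetical callback that runs one test and reports a detection.
    threshold: assumed cutoff; the article does not give the real value.
    """
    tests_run: List[str] = []
    if life_probability < threshold:
        return tests_run  # not promising enough, so no tests are run

    # Chlorophyll fluoresces without dye, so it is checked first.
    tests_run.append("chlorophyll")
    if not detect("chlorophyll"):
        return tests_run

    # Only after a chlorophyll detection does the rover check the other markers.
    for marker in ("carbohydrates", "proteins"):
        tests_run.append(marker)
        detect(marker)
    return tests_run
```

For example, `plan_tests(0.9, lambda marker: False)` stops after the chlorophyll test, while a location scored at 0.2 triggers no tests at all.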

The calculations are all done with hardware that is much less powerful than your typical desktop PC. All of Zoë’s six computers are off-the-shelf products designed for factory automation, telecommunications, and other industrial systems. Two 2.4-gigahertz Intel Pentium 4 processors control the robot’s navigation and autonomy. A computer based on an Intel Celeron chip estimates the robot’s position, while an Intel Pentium microprocessor looks after power management. Two computers control the robot’s motion.

The idea is that eventually, scientists back on Earth won’t need to send step-by-step instructions to the robot; if it spots a rock of particular interest, it will just mosey on over and investigate, instead of waiting for a human to tell it what to do. A fully autonomous rover is still a ways off, Wettergreen says. In addition to making decisions about what instruments to deploy or what tests to run, such a machine also will need to consider the overall picture. If I veer off in this direction to explore that patch of ground, how much power will I consume? How long can I spend on the task without compromising other activities? And so on.
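The questions a rover would have to ask itself before a detour come down to simple arithmetic on time and energy. Here is a rough sketch of such a check; the function name, parameters, and the numbers in the usage note are hypothetical, not taken from the project.

```python
def detour_is_affordable(distance_m: float,
                         speed_m_s: float,
                         drive_power_w: float,
                         energy_available_wh: float,
                         time_available_s: float,
                         reserve_wh: float = 0.0) -> bool:
    """Decide whether a detour fits within the remaining time and energy.

    All inputs are illustrative assumptions. A real planner would also
    account for terrain, solar input, and instrument power.
    """
    drive_time_s = distance_m / speed_m_s
    # Energy in watt-hours = power (W) * time (s) / 3600 s per hour.
    energy_needed_wh = drive_power_w * drive_time_s / 3600.0
    fits_time = drive_time_s <= time_available_s
    fits_energy = energy_needed_wh + reserve_wh <= energy_available_wh
    return fits_time and fits_energy
```

With made-up figures, a 600-meter detour at 1.2 meters per second takes 500 seconds; at an assumed 200-watt draw that is about 28 watt-hours, so the detour is affordable with 100 watt-hours and half an hour to spare, but not if only 300 seconds remain.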

“The simple things will happen first, maybe even in the next generation of rovers,” Wettergreen says. “But it will be a long time before we see complex behaviors showing up in flight systems.”

Wettergreen and his team built Zoë with a US $3.9 million grant from NASA, and they’ve come to the Atacama to see how well the rover copes with the kind of terrain its successors will find on Mars. At Zoë’s top speed of 1.2 meters per second, its human “wranglers,” who trail the robot at all times for safety’s sake, have trouble keeping up. The rover’s wide, boxy body sits low to the ground on fat mountain-bike tires; its shiny back of solar panels feeds two racks of lithium-polymer batteries below. Perched atop a long metal stalk of a neck are three high-resolution color digital cameras, used to look at terrain off in the distance; individual images from these cameras can also be stitched together to build panoramic views. Further down, two wide-angle navigation cameras look a few meters in front of the robot to detect obstacles.

Ungainly as the robot looks, Zoë in motion has a certain gracefulness. Each wheel is driven independently, while the front and rear axles, which attach to a central “spine,” pivot passively—going over bumps, the robot’s chassis appears to undulate. This arrangement allows the wheels to remain on the ground at all times and gives the machine a tight turning radius. But controlling the drive isn’t simple, Wettergreen says. “It’s not just a matter of giving the wheels the right velocities. You have to be a little bit predictive.” Engineers at the Robotics Institute, where the robot was designed and built, added a mechanical linkage that averages the rolls of the front and rear axles and the height of any obstacles the wheels are going over and then distributes the load accordingly.

Zoë, whose name means “life” in Greek, is the prototype of a vehicle that will likely rove Mars in the not-too-distant future, hunting for evidence that some kind of microbial life flourished in the planet’s warmer, watery past, as well as signs that some of it might have held on to the present day. The most important instrument in its suite is a fluorescence imager that exploits the fact that certain substances fluoresce when exposed to light at certain frequencies. It will inspect rocks and dirt for the presence of chlorophyll, lipids, carbohydrates, proteins, and DNA—the chemical signatures of life. Chlorophyll glows naturally when excited; the others do so only when treated with special dyes.

In the lab, a fluorescence imager would excite the sample using high-power lasers, each tuned to a particular frequency. But the robot’s unit has to be compact and low-power, so it uses a xenon flashlamp, which produces a 10-millisecond, 1000-watt burst of full-spectrum light, explains Shmuel Weinstein, a biologist at Carnegie Mellon who helped build the imager. The light passes through a filter wheel, which lets through just the frequencies needed to excite the sample, and then into a fiber-optic bundle, which directs the light to the sample below. The imager also has a system for automatically applying the dyes, water, and a mild acid (distilled vinegar, actually, which breaks down cell walls and lets the dye penetrate) to the sample. A charge-coupled-device (CCD) camera takes pictures of the sample, first without the dye and then with it; the robot then compares the images to see where the sample is fluorescing.
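Comparing the before-dye and after-dye pictures can be as simple as subtracting one from the other, pixel by pixel. The sketch below assumes grayscale images stored as nested lists and a hypothetical brightness threshold `min_gain`; the article does not describe the robot's actual comparison method.

```python
from typing import List, Tuple

Image = List[List[int]]  # grayscale brightness values, one inner list per row


def fluorescing_pixels(before: Image, after: Image,
                       min_gain: int = 20) -> List[Tuple[int, int]]:
    """Return (row, col) positions whose brightness rose by at least min_gain.

    before: image taken without dye.
    after: image of the same spot taken with dye.
    min_gain: assumed threshold that separates signal from sensor noise.
    """
    hits: List[Tuple[int, int]] = []
    for r, (row_before, row_after) in enumerate(zip(before, after)):
        for c, (b, a) in enumerate(zip(row_before, row_after)):
            if a - b >= min_gain:
                hits.append((r, c))
    return hits


before = [[10, 12], [11, 10]]
after = [[11, 60], [12, 45]]
print(fluorescing_pixels(before, after))  # [(0, 1), (1, 1)]
```

Pixels that brighten by only a count or two are treated as noise; the two that jump sharply are flagged as fluorescing.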

Even after Zoë has gathered its pictures, the scientists carefully reexamine the spot by hand, collecting rock samples and, if necessary, running a portable spectrometer over the ground. Later, they’ll compare the robot’s data with their own. This process, known as ground truthing, will tell them whether the robot can be trusted. On Mars, researchers won’t have that chance, so it’s better to know now.

The Atacama Desert, which takes up the northern third of the long, skinny country of Chile, is known as the driest place on Earth. On average, it gets less than 1.5 centimeters of rain a year. California’s Death Valley gets more than three times as much and Mongolia’s Gobi Desert about six times as much. Some spots in the Atacama haven’t seen rain in centuries.

To find the robot team, head south from the industrial shore town of Antofagasta on Chile’s main north-south highway, Ruta 5. The two-lane road winds through an austere landscape. As far as the eye can see, the land is nearly unbroken by any vegetation or landmark, except for the occasional hand-built roadside memorial, usually beside a bad dip in the road or a “curva peligrosa,” designating the spot where some hapless driver met his Maker.

After about 3 hours, around 24.98049 degrees south, 69.90336 degrees west, turn left at the entrance to the Guanaco Mine, and take the dirt washboard road another 42 kilometers to the mine gate. If there’s daylight, multicolored piles of slag—toxic by-products of the gold excavating that goes on here—will greet you in the distance. Just beyond the gate sit the researchers’ dormitories, a set of low-slung, corrugated metal buildings. You have arrived.

The mine is the sixth and final site that the team is exploring during its three-year project, which began in 2003. About 1400 km north of Santiago, Chile’s capital, the mine lies at an altitude of 3000 meters, at the base of the Domeyko Mountains; further to the east are the snow-capped Andes.

In addition to the field team assembled here in Chile, a group of geologists, biologists, and others are gathered in Pittsburgh. They are the science team, and it’s their job to parse the data that Zoë collects and then send back a set of instructions to launch the next day’s mission. The idea is to simulate, as much as possible, an actual mission on Mars. So at each site, the robot “lands”—that is, it’s disgorged from the back of a moving van—takes a reading of its surroundings, and then uploads photos and telemetry data via satellite to Pittsburgh. The team in Pittsburgh pores over the data and then discusses (or, more often than not, argues over) what kind of investigations and maneuvers the robot should do next. The instructions are subsequently sent back, again by satellite, to the robot.

Zoë is solar powered, so it can operate only during the day. The science team receives its data in the early evening, and it spends a good part of the night refining the plan for the next day. The scientists’ knowledge of the site is limited to what they can glean from the data sent back by the robot; the field team is permitted to tell them only as much as they need to know to plan their operations. Occasionally, the science team misses an obvious chance to gather data—for example, they could instruct the navigation cameras to collect periodic images, but they don’t. The engineers in the field can only stand by and watch. “The rover could give a lot more data or images than the scientists actually request,” software engineer Dominic Jonak says. “Sometimes it’s as if their eyes were closed.”

At breakfast on the first day after landing at the Guanaco Mine—which in the researchers’ lingo becomes “Sol 1 at Site F”—the field team hears the plan from the science team. Wettergreen reports that the latter couldn’t decide whether to start by inspecting the landing site for signs of life or by sending the robot to a distant point to look around. “As usual, they decided to split the difference,” he says. And so Zoë will begin with 10 sequences of fluorescence imaging, traveling several meters in between sequences, and then make a 2-km traverse with a stop for photos, winding up the day with a 1-km traverse.

It’s an ambitious plan. Chris Williams, a mechanical engineer at the Robotics Institute and the robot’s chief wrangler, heads out early to boot up Zoë, which had been unloaded the day before and left out in the desert overnight. When the rest of the team arrives at 9 a.m., it’s immediately obvious that doing anything will be difficult: the wind has picked up to a blustery 70 kilometers per hour (43 miles per hour), with gusts of 90 km/h. It’s a punishing wind, the kind that sends unsecured headgear cartwheeling out of reach, turns normal conversations into shouting matches, and makes standing upright a test of will. (Mars gets windy, too, but the effects are far less noticeable, because its atmosphere is less than 1 percent as dense as Earth’s.) Wettergreen is worried that a hard gust might catch on the rover’s solar panels and launch it. So he decides to wait.

And wait. Done in by the wind, most team members soon retreat to their trucks, emerging at intervals when they feel restless. Occasional updates come over the two-way radio:

“Wind has dropped down to a measly 45.5 miles per hour.”

“Glad to hear we’re below hurricane force.”

“No, wait, it just kicked up to 54.4...Now it’s gusting to 53.2.”

“Miles per hour or kilometers per hour?”

“I’m afraid that’s miles per hour.”

“Ouch.”

Spending time in the desert, in all its featurelessness, induces a form of sensory deprivation. It’s so devoid of obvious life that the sighting of a fly or a beetle or a small green plant is a revelation. For the purposes of the project, though, such macroscopic sightings don’t really count. After all, a rover on Mars will never encounter so much as a clump of sagebrush. Amid this paucity of stimuli, something like lunch can take on near-mystical import, even if it’s only Fanta soda, apples, and ham-and-cheese sandwiches—a slight repackaging of the ham, cheese, and bread from breakfast.

It’s 2 p.m. before the wind finally calms down enough to allow for some robot action. Zoë spends the first few minutes driving comically in circles, the result of some confusion as it attempts to home in on its local coordinates.

After that false start, though, the robot begins dutifully running through the first of its 10 fluorescence-imaging cycles. Tucked behind the robot’s removable fiberglass panels, the imager descends from the robot’s belly [see photo, "Moving Parts"], and two thin arms emerge, mantislike, to spray water, acid, and dyes on the soil and rocks below. The flashlamps begin to pulse, and the CCD camera clicks away. It takes about 20 minutes for the rover to complete a full imaging sequence; after it’s done, Zoë rolls on a few meters and begins ministering to another patch of rocks.

Clearly, there won’t be time to complete the day’s science plan. Three hours later, with the sun heading quickly toward the horizon, Sol 1 at Site F has ended.

Some days in the desert are better than others. Even for robots. Just a few weeks earlier, Zoë had a very bad day. The field team had to transport the robot by truck from Site D, on Chile’s northern coast, to Site E, at Salar de Navidad to the south, about 400 km away over rough roads. They had the option of disassembling the robot, as they do when shipping it between Pittsburgh and Chile. But that requires several days of reassembly afterward, so instead, they loaded the fully assembled robot into the truck, secured it to the walls, placed wooden pallets underneath it to cushion the bumps, and hoped for the best. When they arrived at Site E, however, they discovered that the pallets had shaken loose and that Zoë’s front axle had buckled and the rear axle had fractured completely. Several instruments, including the onboard spectrometer and the pan-tilt camera, also took a beating.

“My suspicion is that the wood underneath shifted and the robot basically started hopping up and down in the back of the truck,” Wettergreen says. “The stresses on its axles would have been at least an order of magnitude greater than it would normally experience when it’s driving.”

Fortunately, the team had a spare set of axles in Pittsburgh. Jonak, the software engineer, was scheduled to travel to Chile anyway, so he loaded the spares into a ski bag and brought them down. Within the week, the robot was reassembled and nearly as good as new. Just to be sure, though, when it came time to move from Site E to Site F, the engineers seated the robot on a bed of rubber tires; no further mishap occurred.

Looking back, Wettergreen likes to think of it as an “extreme experiment.” There’s an entire discipline “where you test to the point of failure, basically,” he says and gives a rueful smile. “So we did that.”

Compared with the axle mishap, the various breakdowns, glitches, and bugs that occur on a daily basis seem fairly pedestrian. For example, Sols 2 and 3 at Site F bring a battery problem that necessitates temporarily swapping out the lithium-polymer packs for the spare lead-acid batteries; several software problems, which cause the robot to fail to execute part of its science plan and throw off the navigation cameras for a spell; a problem with the pan-tilt camera that causes the azimuth to slip and requires the entire camera unit to be dismounted, leaving Zoë decapitated for a time; and, perhaps most troubling of all, a lunch problem, caused by a shortage of bread back at camp. In place of the usual ham-and-cheese sandwiches, the cook sends a plastic bag of apples, oranges, and bananas; the field team grimly accepts the proffered fruits.

Often problems arise out of the best of intentions. Explaining the camera’s software glitch, Jonak says, “There’s kind of a black art to stereo calibration. You need to fiddle around with things so it’s just so, and you’re never really totally satisfied that you have the best system...you can always make these minor improvements. Unfortunately, that got us into trouble, because we tried to improve things, and we ended up knocking something out of whack.”

In between repairs on Sols 2, 3, and 4, the robot fits in quite a bit of science and executes a few traverses of several kilometers. “We’re traveling a lot farther than last year, things are running a lot more smoothly, and the [operations] are much more autonomous,” Jonak says. “Despite these mechanical problems we’ve been having, we’re still very happy with the rover’s performance.”

“You just have to embrace your filth.” Wettergreen is leaning back in his chair at dinner, grinning, and trying in his own way to commiserate with a team member who’s complaining about the impossibility of staying clean. It’s the end of Sol 3, and we’ve all spent far too long coated with a fine layer of dust. It’s in every pore and on every bit of hair, every garment. It’s under our fingernails, in the creases behind our ears, in the folds of skin between our fingers.

Still, compared with the tents and sleeping bags at the previous two sites, the dormitories at Site F seem almost homey. The scientists still use sleeping bags, but there are real mattresses here to put them on, and the willful water heaters on occasion offer up a nice hot shower. There’s no heat, though, and as the temperature plummets to below freezing at night, layering on every scrap of clothing you’ve brought along is the only way to keep warm.

But nobody would dream of missing the experience. “I like the desert,” says Roxana Wales, a research scientist at NASA Ames Research Center, Moffett Field, Calif. “I find it very calming.” The team members also get to experience things they won’t soon forget. At Site D, near the coast, recalls Trey Smith, a Ph.D. student at Carnegie Mellon who worked on Zoë’s software, “they have these salt fogs that come over the mountains and through the passes at sunset. Back in Pittsburgh when it’s foggy, the air is still. But here the fog just races past you, like you’re in a snow flurry. It’s an amazing effect.”

While Zoë may represent the next generation of planetary rover, what about the next next generation, and the one after that? “You’re going to see a paradigm shift in planetary exploration soon,” predicts James Dohm, a geologist at the University of Arizona, Tucson, and a member of the science team. “Think about it: you have vast areas that you need to cover. How are you going to do that?” Ground-hugging robots like Zoë can cover at most tens of kilometers a day. Aerial rovers, on the other hand, could travel much farther, Dohm says. “In the future we’re going to see aerial robots as well as fleets of ground-based rovers. That will be a golden age for planetary exploration.” Indeed, in December, NASA announced a new competition to design and build an autonomous aerial vehicle for conducting science investigations on planets and moons that have atmospheres. The competition, which ends in October 2007, carries a prize of $250 000.

Meanwhile, in January, project researchers convened in Chile one last time. The itinerary called for a quick visit to each of the six sites the robot had surveyed during the last three years. For the scientists who’d worked exclusively from Pittsburgh, it was the first time they actually got to see the places in person, rather than merely interpreting them through satellite imagery, science data, or photos. “The scientists had a great time, like kids in a candy store,” Wettergreen says. “Mostly they wanted to get the big picture—which hill did the robot go over? Were we on that side or this side of the drainage? I think the visit confirmed everyone’s expectations—they were happy they’d gotten it right.”

To Probe Further

The Life in the Atacama project site has field reports, images, science data, and other information about the project.