To develop a practical quantum computer, scientists will have to design ways to deal with the errors that will inevitably pop up in its performance. Now Google has demonstrated that exponential suppression of such errors is possible, in experiments that may help pave the way for scalable, fault-tolerant quantum computers.

A quantum computer with enough components known as quantum bits or "qubits" could in theory achieve a "quantum advantage" allowing it to find the answers to problems no classical computer could ever solve.

However, a critical drawback of current quantum computers is the way in which their inner workings are prone to errors. Current state-of-the-art quantum platforms typically have error rates near 10^-3 (or one in a thousand), but many practical applications call for error rates as low as 10^-15.

In addition to building qubits that are physically less prone to mistakes, scientists hope to compensate for high error rates using stabilizer codes. This strategy distributes quantum information across many qubits in such a way that errors can be detected and corrected. A cluster of these "data qubits" can then together act as a single useful "logical qubit."

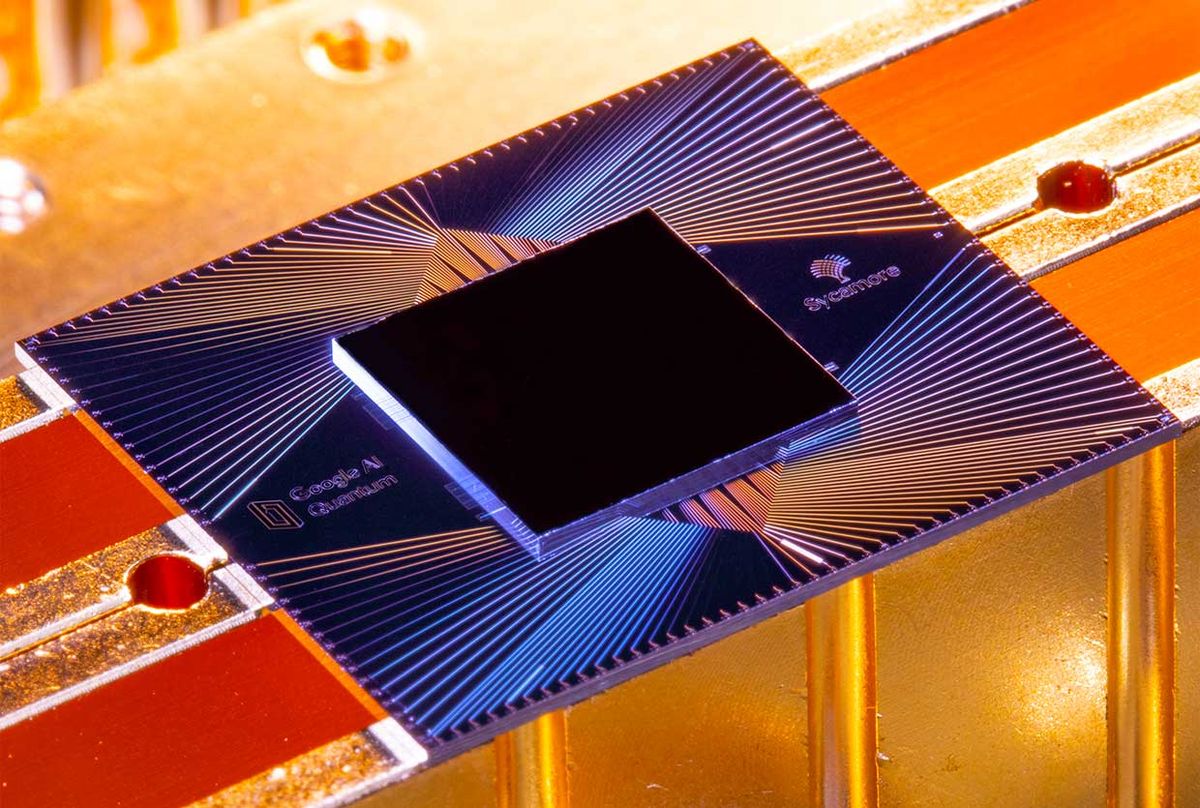

In the new study, Google scientists first experimented with a type of stabilizer code known as a repetition code, in which the qubits of Sycamore — Google's 54-qubit quantum computer — alternated between serving as data qubits and "measure qubits" tasked with detecting errors in their fellow qubits. They arranged these qubits in a one-dimensional chain, such that each qubit had two neighbors at most.
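The idea of neighbors checking on each other along a chain can be illustrated with a purely classical toy model. The sketch below is not the study's quantum circuit or its decoder; the function names are hypothetical, and it assumes ordinary bits that can only flip. Each "measure" step records only the parity of two neighboring data bits, so it reveals where flips happened without revealing the stored value.

```python
def measure_syndrome(data_bits):
    """Parity of each neighboring pair of data bits (a classical stand-in
    for what the measure qubits report in a 1D repetition code)."""
    return [data_bits[i] ^ data_bits[i + 1] for i in range(len(data_bits) - 1)]


def decode(syndrome):
    """Return the lowest-weight flip pattern consistent with the syndrome."""
    # Assume the first bit is unflipped and propagate along the chain.
    flips = [0]
    for s in syndrome:
        flips.append(flips[-1] ^ s)
    # The complementary pattern yields the same syndrome; keep the lighter one.
    if 2 * sum(flips) > len(flips):
        flips = [f ^ 1 for f in flips]
    return flips


def correct(data_bits):
    """Undo the most likely set of bit flips."""
    flips = decode(measure_syndrome(data_bits))
    return [b ^ f for b, f in zip(data_bits, flips)]


# A logical 0 stored on five data bits, with one bit flipped by noise.
noisy = [0, 0, 1, 0, 0]
print(measure_syndrome(noisy))
print(correct(noisy))
```

In this toy model, a chain of five data bits recovers from any one or two flips; three or more flips fool the decoder, which is why longer chains are more robust.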

The researchers found that increasing the number of qubits in their repetition code led to an exponential suppression of the error rate, reducing the number of errors per round of correction by more than a hundredfold when they scaled the number of qubits from 5 to 21. This error suppression proved stable over 50 rounds of error correction.
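Why adding qubits can suppress errors exponentially is easiest to see in a classical analogy. The sketch below assumes each of n data bits flips independently with probability p and that the stored value is recovered by majority vote; the value p = 0.01 is an illustrative assumption rather than a figure from the study, and n counts data bits only, not the measure qubits included in the article's totals.

```python
from math import comb


def logical_error_rate(n, p):
    """Probability that more than half of n independent bits flip (n odd),
    which is when a majority vote returns the wrong answer."""
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )


# Illustrative physical error rate (an assumption, not a measured value).
p = 0.01
for n in (3, 5, 7, 9, 11):
    print(n, logical_error_rate(n, p))
```

Each time two more bits are added, the failure probability in this model shrinks by a roughly constant factor, which is the signature of exponential suppression. It holds only when p is small enough; for p above one half, adding bits makes the majority vote worse.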

"This work appears to experimentally validate the assumption that error-correction schemes can scale up as advertised," says study senior author Julian Kelly, a research scientist at Google.

However, this repetition code "was limited to looking at quantum errors along just one direction, but errors can occur in multiple directions," says study co-author Kevin Satzinger, a research scientist at Google. The researchers therefore also experimented with a kind of stabilizer code known as a surface code, in which they arranged Sycamore's qubits in a two-dimensional checkerboard pattern of data and measure qubits to detect errors. They found that the simplest version of such a surface code, using a two-by-two grid of data qubits and three measure qubits, performed as computer simulations predicted.

These findings suggest that if the scientists can reduce the inherent error rate of qubits by a factor of roughly 10 and increase the size of each logical qubit up to about 1,000 data qubits, "we think we can reach algorithmically relevant logical error rates," Kelly says.

In the future, the scientists aim to scale up their surface codes to grids of three-by-three or five-by-five data qubits to test experimentally whether exponential suppression of error rates also occurs in these systems, Satzinger says.

The scientists detailed their findings online July 14 in the journal Nature.

Charles Q. Choi is a science reporter who contributes regularly to IEEE Spectrum. He has written for Scientific American, The New York Times, Wired, and Science, among others.